Wolfgang Minker

Enabling Personalized Long-term Interactions in LLM-based Agents through Persistent Memory and User Profiles

Oct 09, 2025

Abstract: Large language models (LLMs) increasingly serve as the central control unit of AI agents, yet current approaches remain limited in their ability to deliver personalized interactions. While Retrieval-Augmented Generation (RAG) enhances LLM capabilities by improving context-awareness, it lacks mechanisms to combine contextual information with user-specific data. Although personalization has been studied in fields such as human-computer interaction and cognitive science, existing perspectives remain largely conceptual, with limited focus on technical implementation. To address these gaps, we build on a unified definition of personalization as a conceptual foundation to derive technical requirements for adaptive, user-centered LLM-based agents. Combining this foundation with established agentic AI patterns such as multi-agent collaboration and multi-source retrieval, we present a framework that integrates persistent memory, dynamic coordination, self-validation, and evolving user profiles to enable personalized long-term interactions. We evaluate our approach on three public datasets using metrics such as retrieval accuracy, response correctness, and BERTScore. We complement these results with a five-day pilot user study that provides initial insights into user feedback on perceived personalization. The study provides early indications that guide future work and highlights the potential of integrating persistent memory and user profiles to improve the adaptivity and perceived personalization of LLM-based agents.
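
The combination of persistent memory and evolving user profiles described above can be illustrated with a minimal Python sketch. It is not the authors' framework: `UserProfile`, `build_prompt`, and the rule that a newer observation overwrites an older one are hypothetical choices, made only to show how user-specific data might be merged with retrieved context before an LLM call.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class UserProfile:
    """Hypothetical evolving profile: attribute -> (value, turn at which it was observed)."""
    user_id: str
    facts: Dict[str, Tuple[str, int]] = field(default_factory=dict)

    def update(self, attribute: str, value: str, turn: int) -> None:
        # Keep only the most recent observation, so the profile evolves with the user.
        current = self.facts.get(attribute)
        if current is None or turn >= current[1]:
            self.facts[attribute] = (value, turn)

    def as_context(self) -> List[str]:
        # Render facts as plain-text lines, sorted for a stable prompt layout.
        return [f"{attr}: {value}" for attr, (value, _) in sorted(self.facts.items())]


def build_prompt(profile: UserProfile, retrieved: List[str], query: str) -> str:
    # Merge user-specific data with retrieved context before calling the LLM.
    lines = [
        "User profile:", *profile.as_context(),
        "Retrieved context:", *retrieved,
        f"User: {query}",
    ]
    return "\n".join(lines)
```

For example, updating `diet` to `vegan` at turn 5 and then receiving a stale `omnivore` observation from turn 3 leaves the profile at `vegan`, so the prompt reflects the most recent information.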

CAIM: Development and Evaluation of a Cognitive AI Memory Framework for Long-Term Interaction with Intelligent Agents

May 19, 2025

Abstract: Large language models (LLMs) have advanced the field of artificial intelligence (AI) and are a powerful enabler for interactive systems. However, they still face challenges in long-term interactions that require adaptation to the user as well as contextual knowledge and an understanding of the ever-changing environment. To overcome these challenges, holistic memory modeling is required to efficiently retrieve and store relevant information across interaction sessions for suitable responses. Cognitive AI, which aims to simulate the human thought process in a computerized model, highlights aspects such as thoughts, memory mechanisms, and decision-making that can contribute to improved memory modeling for LLMs. Inspired by these cognitive AI principles, we propose our memory framework CAIM. CAIM consists of three modules: (1) the Memory Controller as the central decision unit; (2) the Memory Retrieval, which filters relevant data for the interaction upon request; and (3) the Post-Thinking, which maintains the memory storage. We compare CAIM against existing approaches, focusing on metrics such as retrieval accuracy, response correctness, contextual coherence, and memory storage. The results demonstrate that CAIM outperforms baseline frameworks across different metrics, highlighting its context-awareness and potential to improve long-term human-AI interactions.
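
The division of labour among CAIM's three modules can be sketched as follows. This is a toy illustration under stated assumptions, not CAIM itself: the class and method names are hypothetical, and simple word overlap stands in for whatever relevance scoring the actual framework uses.

```python
import re
from dataclasses import dataclass, field
from typing import List, Set


def tokens(text: str) -> Set[str]:
    # Lowercased word set; a crude stand-in (assumption) for embedding-based similarity.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


@dataclass
class MemoryStore:
    entries: List[str] = field(default_factory=list)


class MemoryController:
    """Hypothetical central decision unit that coordinates retrieval and storage."""

    def __init__(self, store: MemoryStore, min_overlap: int = 1):
        self.store = store
        self.min_overlap = min_overlap

    def needs_memory(self, query: str) -> bool:
        # Decision step: consult memory only if some stored entry shares words with the query.
        q = tokens(query)
        return any(len(q & tokens(e)) >= self.min_overlap for e in self.store.entries)

    def retrieve(self, query: str, k: int = 2) -> List[str]:
        # Memory Retrieval: rank stored entries by word overlap and keep the top k.
        q = tokens(query)
        scored = [(len(q & tokens(e)), e) for e in self.store.entries]
        scored = [pair for pair in scored if pair[0] >= self.min_overlap]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [e for _, e in scored[:k]]

    def post_think(self, user_turn: str) -> None:
        # Post-Thinking: after the turn, store new information unless it is an exact duplicate.
        if user_turn not in self.store.entries:
            self.store.entries.append(user_turn)
```

Keeping the decision (`needs_memory`) separate from retrieval and storage mirrors the abstract's description of a central controller; a practical system would likely replace the overlap heuristic with embedding similarity or an LLM judgment.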

System-Initiated Transitions from Chit-Chat to Task-Oriented Dialogues with Transition Info Extractor and Transition Sentence Generator

Aug 06, 2023

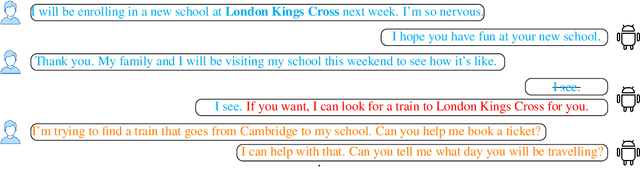

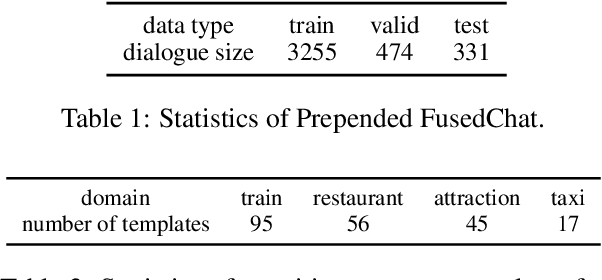

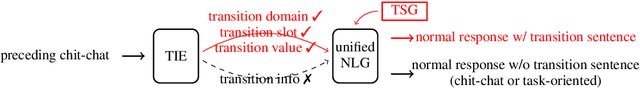

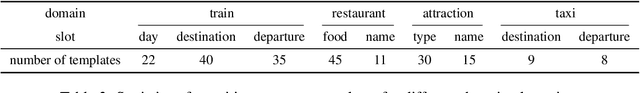

Abstract: In this work, we study dialogue scenarios that start with chit-chat but eventually switch to task-related services, and investigate how a unified dialogue model, which can engage in both chit-chat and task-oriented dialogues, can take the initiative during the transition from chit-chat to task-oriented mode in a coherent and cooperative manner. We first build a transition info extractor (TIE) that keeps track of the preceding chit-chat interaction and detects the user's potential intention to switch to a task-oriented service. Meanwhile, in the unified model, a transition sentence generator (TSG) is extended through efficient Adapter tuning and transition prompt learning. When the TIE successfully finds task-related information in the preceding chit-chat, such as a transition domain, the TSG is activated automatically in the unified model to initiate the transition by generating a transition sentence under the guidance of the transition information extracted by the TIE. The experimental results show promising performance for proactive transitions. We achieve a further large improvement on the TIE model by utilizing Conditional Random Fields (CRF). The TSG can flexibly generate transition sentences while maintaining the unified model's capabilities for normal chit-chat and task-oriented response generation.
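
The gating between TIE and TSG can be sketched as a simple control flow. In this hypothetical version, a keyword lexicon (`DOMAIN_CUES`) replaces the trained, CRF-enhanced TIE, and a fixed template replaces the Adapter-tuned TSG; only the "extract transition information, then activate the generator" structure follows the abstract.

```python
from typing import Dict, List, Optional, Set

# Hypothetical domain lexicon standing in for the trained TIE model.
DOMAIN_CUES: Dict[str, Set[str]] = {
    "restaurant": {"hungry", "eat", "dinner", "food"},
    "hotel": {"stay", "room", "night", "sleep"},
}


def extract_transition_domain(chit_chat_history: List[str]) -> Optional[str]:
    # Scan the preceding chit-chat turns for cues of a task-oriented domain.
    words = {w.strip(".,!?").lower() for turn in chit_chat_history for w in turn.split()}
    for domain, cues in DOMAIN_CUES.items():
        if words & cues:
            return domain
    return None


def respond(chit_chat_history: List[str]) -> str:
    domain = extract_transition_domain(chit_chat_history)
    if domain is None:
        # No task-related information found: stay in chit-chat mode.
        return "[chit-chat response]"
    # Transition information found: a template stands in for the TSG's generated sentence.
    return f"[transition to {domain}] Would you like help with a {domain} booking?"
```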

Unified Conversational Models with System-Initiated Transitions between Chit-Chat and Task-Oriented Dialogues

Jul 04, 2023

Abstract: Spoken dialogue systems (SDSs) have been developed separately in two different categories, task-oriented and chit-chat. The former focuses on achieving functional goals, while the latter aims to create engaging social conversations without specific goals. Creating a unified conversational model that can engage in both chit-chat and task-oriented dialogue has become a promising research topic in recent years. However, the potential "initiative" that arises when the dialogue mode changes within a single dialogue has rarely been explored. In this work, we investigate two kinds of dialogue scenarios: one starts with chit-chat that implicitly involves task-related topics and eventually switches to task-oriented requests; the other starts with task-oriented interaction and eventually changes to casual chat after all requested information has been provided. We contribute two efficient prompt models that can proactively generate a transition sentence to trigger system-initiated transitions in a unified dialogue model. One is a discrete prompt model trained with two discrete tokens; the other is a continuous prompt model using continuous prompt embeddings generated automatically by a classifier. We furthermore show that the continuous prompt model can also be used to guide proactive transitions between particular domains in a multi-domain task-oriented setting.

Development of a Trust-Aware User Simulator for Statistical Proactive Dialog Modeling in Human-AI Teams

Apr 24, 2023

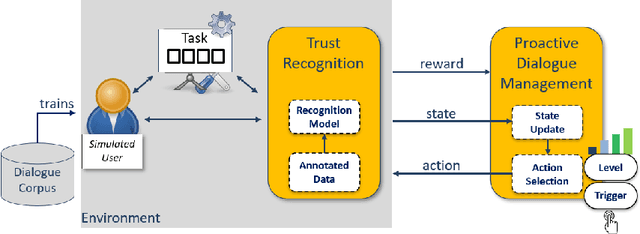

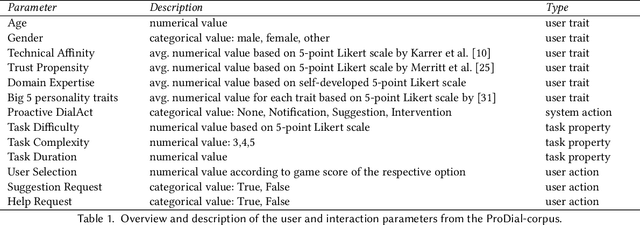

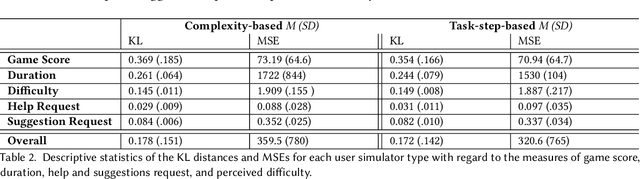

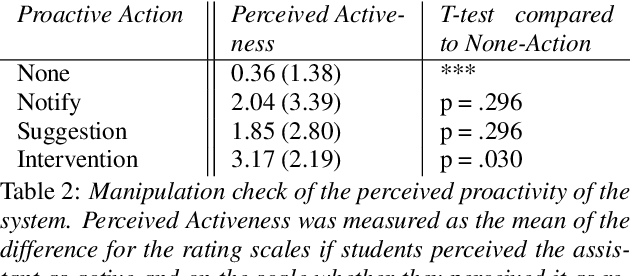

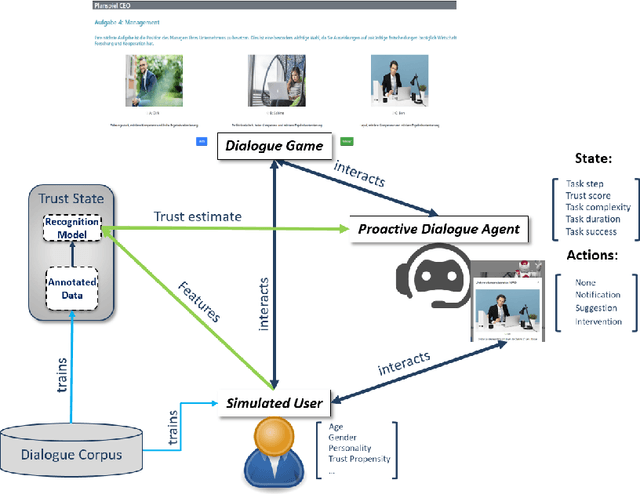

Abstract: The concept of a Human-AI team has gained increasing attention in recent years. For effective collaboration between humans and AI teammates, proactivity is crucial for close coordination and effective communication. However, the design of adequate proactivity for AI-based systems to support humans is still an open question and a challenging topic. In this paper, we present the development of a corpus-based user simulator for training and testing proactive dialog policies. The simulator incorporates informed knowledge about proactive dialog and its effect on user trust and simulates user behavior and personal information, including socio-demographic features and personality traits. Two different simulation approaches were compared, and a task-step-based approach yielded better overall results due to enhanced modeling of sequential dependencies. This research presents a promising avenue for exploring and evaluating appropriate proactive strategies in a dialog game setting for improving Human-AI teams.

Does It Affect You? Social and Learning Implications of Using Cognitive-Affective State Recognition for Proactive Human-Robot Tutoring

Dec 20, 2022

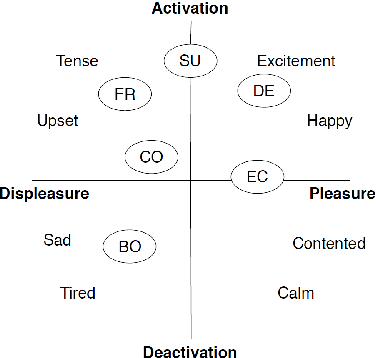

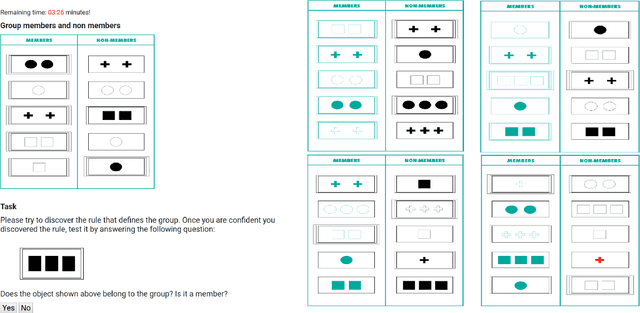

Abstract: Using robots in educational contexts has already been shown to be beneficial for students' learning and social behaviour. To elevate robotic tutors to the next level of providing more effective and human-like tutoring, the ability to adapt to the user and to express proactivity is fundamental. By acting proactively, intelligent robotic tutors anticipate situations in which problems for the student may arise and act in advance to prevent negative outcomes. Still, the questions of when and how to behave proactively remain open. Therefore, this paper investigates how the student's cognitive-affective states can be used by a robotic tutor to trigger proactive tutoring dialogue, with the aim of improving the learning experience. For this purpose, a concept learning task scenario was observed in which a robotic assistant proactively helped when negative user states were detected. In the learning task, the user's states of frustration and confusion were deemed to have negative effects on the outcome of the task and were used to trigger proactive behaviour. In an empirical user study with 40 undergraduate and doctoral students, we studied whether initiating proactive behaviour after detecting signs of confusion and frustration improves the student's concentration and trust in the agent. Additionally, we investigated which level of proactive dialogue is useful for promoting the student's concentration and trust. The results show that highly proactive behaviour harms trust, especially when triggered during negative cognitive-affective states, but helps keep the student focused on the task when triggered in these states. Based on our study results, we further discuss future steps for improving the proactive assistance of robotic tutoring systems.
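
The trigger described in the study, where detected frustration or confusion initiates proactive help, can be sketched as a threshold rule. The score range, the 0.6 threshold, and the level names in `LEVELS` are assumptions for illustration, not values taken from the paper.

```python
from dataclasses import dataclass

# Hypothetical proactivity levels, ordered from least to most intrusive.
LEVELS = ("none", "notification", "suggestion", "intervention")


@dataclass
class AffectEstimate:
    frustration: float  # assumed score in [0, 1] from an upstream state recognizer
    confusion: float    # assumed score in [0, 1]


def choose_proactive_action(state: AffectEstimate, configured_level: str,
                            threshold: float = 0.6) -> str:
    """Trigger proactive help only when a negative cognitive-affective state is detected."""
    if configured_level not in LEVELS:
        raise ValueError(f"unknown proactivity level: {configured_level}")
    if max(state.frustration, state.confusion) < threshold:
        return "none"  # no negative state detected: the tutor stays silent
    return configured_level
```

Because the abstract reports that highly proactive behaviour harmed trust while helping students stay focused, the proactivity level is left as an explicit parameter rather than hard-coded.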

Towards Improving Proactive Dialog Agents Using Socially-Aware Reinforcement Learning

Nov 25, 2022

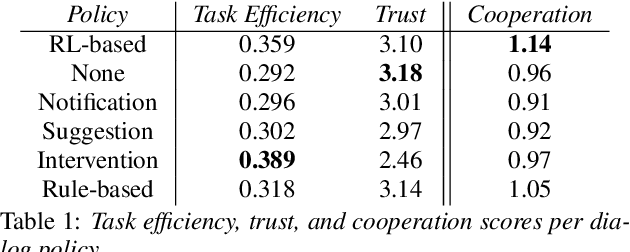

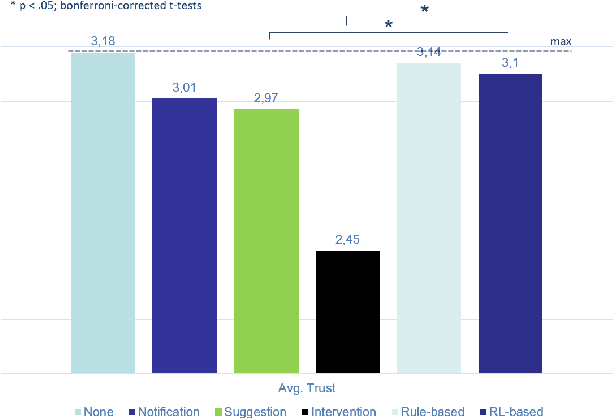

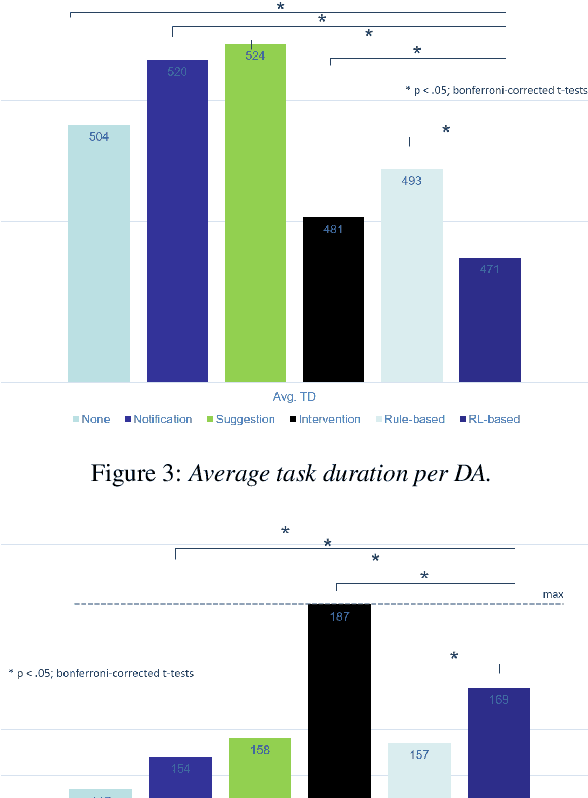

Abstract: The next step for intelligent dialog agents is to escape their role as silent bystanders and become proactive. Well-defined proactive behavior may improve human-machine cooperation, as the agent takes a more active role during the interaction and takes responsibility off the user. However, proactivity is a double-edged sword, because poorly executed pre-emptive actions may have a devastating effect not only on the task outcome but also on the relationship with the user. To design adequate proactive dialog strategies, we propose a novel approach that includes both social and task-relevant features in the dialog. Here, the primary goal is to optimize proactive behavior so that it is task-oriented, implying high task success and efficiency, while also being socially effective by fostering user trust. Including both aspects in the reward function for training a proactive dialog agent with reinforcement learning showed the benefit of our approach for more successful human-machine cooperation.
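
A reward that includes both task-relevant and social features, as described above, might be sketched as a weighted sum. The weights, the per-turn efficiency penalty, and the use of a trust change (`trust_delta`, for example reported by a simulated user) are assumptions for illustration, not the reward actually used in the paper.

```python
def proactive_reward(task_success: bool, num_turns: int, trust_delta: float,
                     w_task: float = 1.0, w_eff: float = 0.05,
                     w_trust: float = 0.5) -> float:
    """Hypothetical episodic reward combining task and social components."""
    # Task component: success bonus minus a per-turn penalty that rewards efficiency.
    task_term = w_task * (1.0 if task_success else 0.0) - w_eff * num_turns
    # Social component: change in user trust attributed to the agent's proactive actions.
    social_term = w_trust * trust_delta
    return task_term + social_term
```

With these weights, a successful 10-turn dialog that raises trust by 0.2 scores about 0.6; the same dialog with a drop in trust scores lower, so the policy is pushed toward proactive actions that are both effective and trusted.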

ConceptNet infused DialoGPT for Underlying Commonsense Understanding and Reasoning in Dialogue Response Generation

Sep 29, 2022

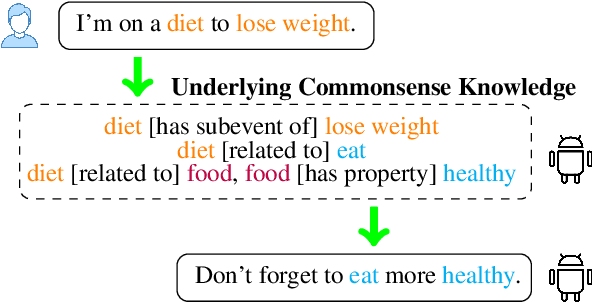

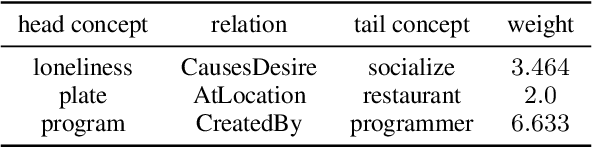

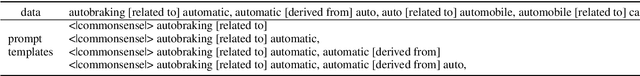

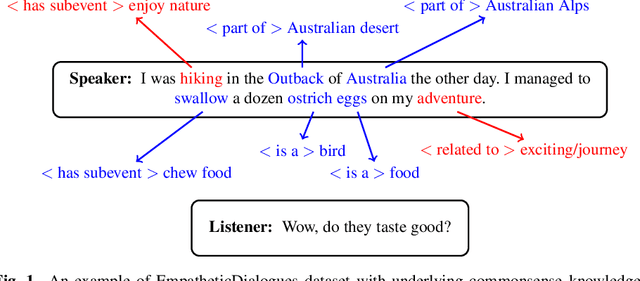

Abstract: Pre-trained conversational models still fail to capture the implicit commonsense (CS) knowledge hidden in dialogue interactions, even though they were pre-trained on an enormous dataset. To build a dialogue agent with CS capability, we first inject external knowledge into a pre-trained conversational model to establish basic commonsense through efficient Adapter tuning (Section 4). Second, we propose the "two-way learning" method to enable a bidirectional relationship between CS knowledge and sentence pairs, so that the model can generate a sentence given CS triplets and also generate the underlying CS knowledge given a sentence (Section 5). Finally, we leverage this integrated CS capability to improve open-domain dialogue response generation, so that the dialogue agent can understand the CS knowledge hidden in the dialogue history and infer other related knowledge to further guide response generation (Section 6). The experimental results demonstrate that CS_Adapter fusion enables DialoGPT to generate a series of CS knowledge items. Moreover, the DialoGPT+CS_Adapter response model adapted from CommonGen training can generate underlying CS triplets that fit the dialogue context better.
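
The "two-way learning" idea, pairing each sentence with its CS triplets in both directions, can be sketched as a data-preparation step. The text prefixes and the `|` separator are hypothetical formatting choices; the abstract does not give the paper's actual input format.

```python
from typing import List, Tuple

Triplet = Tuple[str, str, str]  # (head, relation, tail), ConceptNet-style


def two_way_examples(sentence: str, triplets: List[Triplet]) -> List[Tuple[str, str]]:
    """Build (source, target) training pairs for both directions of the relationship."""
    # Serialize triplets as text, e.g. "dog CapableOf bark"; multiple triplets joined by " | ".
    triplet_text = " | ".join(" ".join(t) for t in triplets)
    return [
        # Direction 1: CS knowledge -> sentence.
        ("generate sentence: " + triplet_text, sentence),
        # Direction 2: sentence -> underlying CS knowledge.
        ("generate knowledge: " + sentence, triplet_text),
    ]
```

Since DialoGPT is a decoder-only model, each (source, target) pair would typically be concatenated with a separator token before training.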

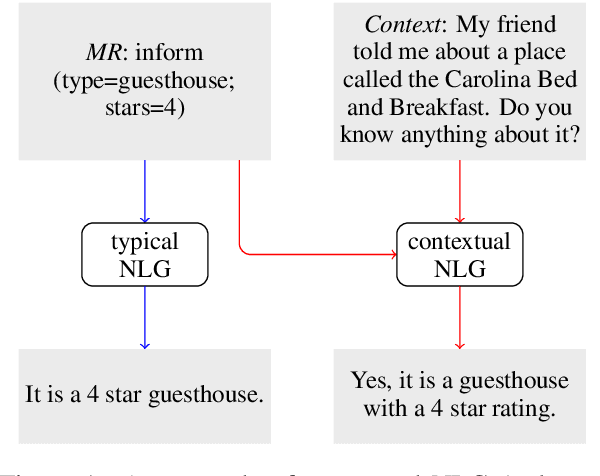

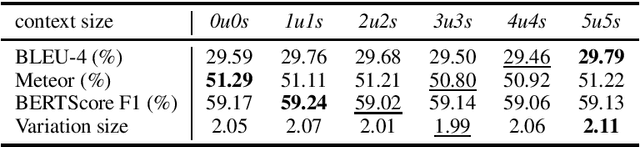

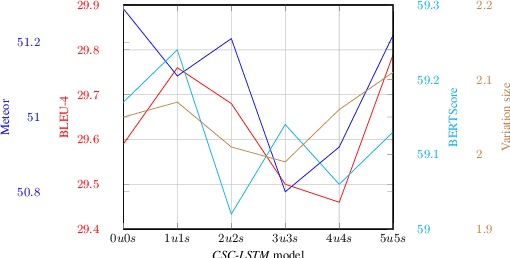

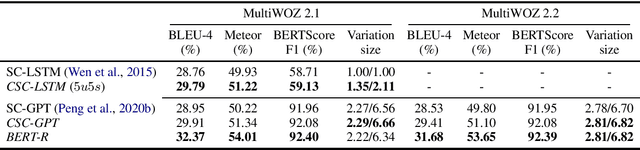

Context Matters in Semantically Controlled Language Generation for Task-oriented Dialogue Systems

Nov 28, 2021

Abstract: This work combines information about the dialogue history, encoded by a pre-trained model, with a meaning representation of the current system utterance to realize contextual language generation in task-oriented dialogues. We utilize the pre-trained multi-context ConveRT model for context representation in a model trained from scratch, and leverage the immediately preceding user utterance for context generation in a model adapted from the pre-trained GPT-2. Both experiments on the MultiWOZ dataset show that contextual information encoded by a pre-trained model improves the performance of response generation in both automatic metrics and human evaluation. Our contextual generator enables a greater variety of generated responses that fit the ongoing dialogue better. An analysis of the context size shows that a longer context does not automatically lead to better performance, but the immediately preceding user utterance plays an essential role in contextual generation. In addition, we propose a re-ranker for the GPT-based generation model. The experiments show that responses selected by the re-ranker achieve a significant improvement on automatic metrics.
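
The abstract does not state how the re-ranker scores candidates. One common heuristic in semantically controlled generation, used here purely as an assumption, is to prefer candidates that realize every slot value of the meaning representation; the sketch ranks generated candidates that way.

```python
from typing import Dict, List


def slot_error(candidate: str, meaning_rep: Dict[str, str]) -> int:
    # Count slot values from the meaning representation that the candidate fails to mention.
    text = candidate.lower()
    return sum(1 for value in meaning_rep.values() if value.lower() not in text)


def rerank(candidates: List[str], meaning_rep: Dict[str, str]) -> List[str]:
    # Fewest missing slot values first; sorted() is stable, so ties keep their input order.
    return sorted(candidates, key=lambda c: slot_error(c, meaning_rep))
```

Because Python's `sorted` is stable, candidates with equal slot error keep their original order, for example the decoder's beam ranking.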

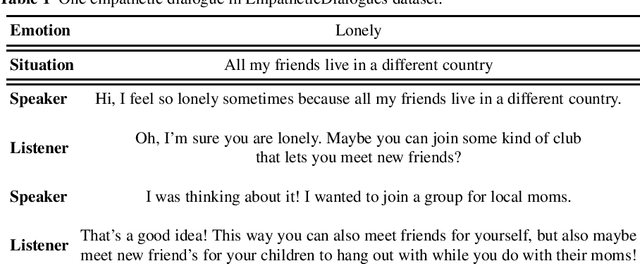

Empathetic Dialogue Generation with Pre-trained RoBERTa-GPT2 and External Knowledge

Sep 07, 2021

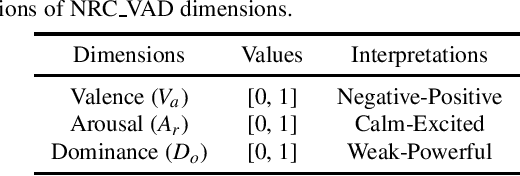

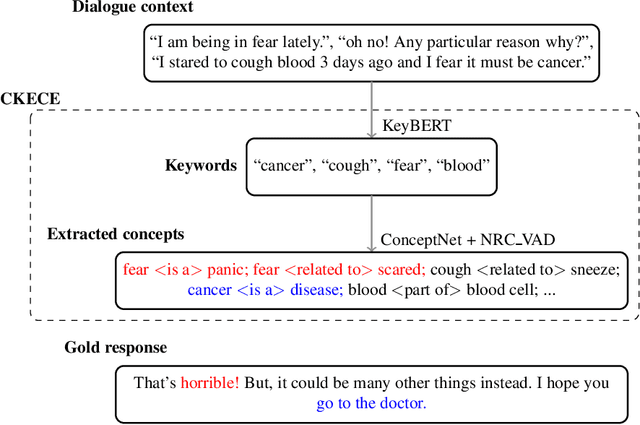

Abstract: One challenge for dialogue agents is to recognize the feelings of the conversation partner and respond accordingly. In this work, RoBERTa-GPT2 is proposed for empathetic dialogue generation, where the pre-trained auto-encoding RoBERTa is utilised as the encoder and the pre-trained auto-regressive GPT-2 as the decoder. With the combination of the pre-trained RoBERTa and GPT-2, our model achieves a new state-of-the-art emotion accuracy. To enable the empathetic ability of the RoBERTa-GPT2 model, we propose a commonsense knowledge and emotional concepts extractor, in which the commonsense and emotional concepts of the dialogue context are extracted for the GPT-2 decoder. The experimental results demonstrate that empathetic dialogue generation benefits from both the pre-trained encoder-decoder architecture and external knowledge.
