William Freeman

Beyond I-Con: Exploring New Dimension of Distance Measures in Representation Learning

Sep 05, 2025Abstract:The Information Contrastive (I-Con) framework revealed that over 23 representation learning methods implicitly minimize KL divergence between data and learned distributions that encode similarities between data points. However, a KL-based loss may be misaligned with the true objective, and properties of KL divergence such as asymmetry and unboundedness may create optimization challenges. We present Beyond I-Con, a framework that enables systematic discovery of novel loss functions by exploring alternative statistical divergences and similarity kernels. Key findings: (1) on unsupervised clustering of DINO-ViT embeddings, we achieve state-of-the-art results by modifying the PMI algorithm to use total variation (TV) distance; (2) on supervised contrastive learning, we outperform the standard approach by using TV and a distance-based similarity kernel instead of KL and an angular kernel; (3) on dimensionality reduction, we achieve superior qualitative results and better performance on downstream tasks than SNE by replacing KL with a bounded f-divergence. Our results highlight the importance of considering divergence and similarity kernel choices in representation learning optimization.

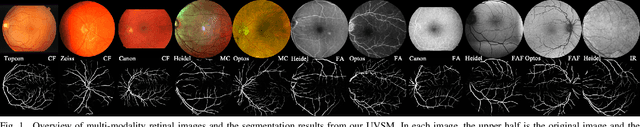

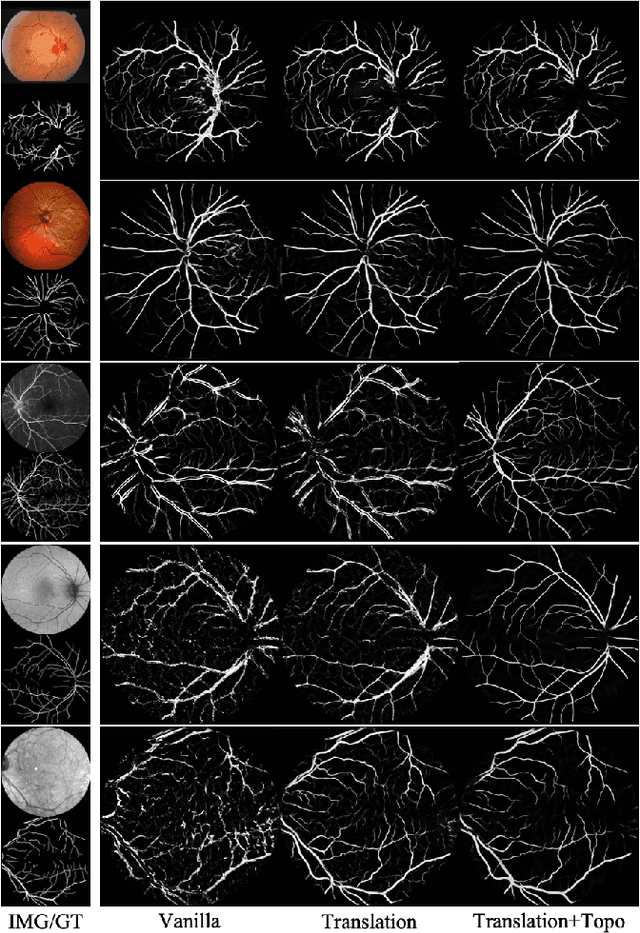

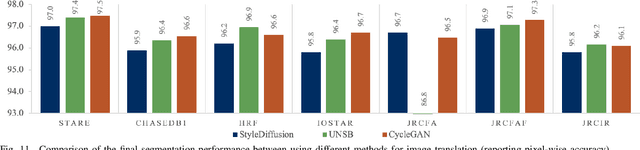

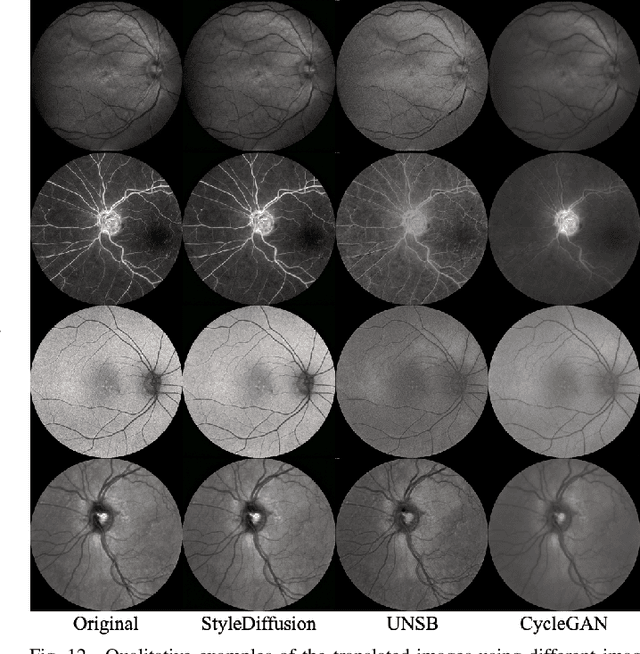

Universal Vessel Segmentation for Multi-Modality Retinal Images

Feb 10, 2025

Abstract:We identify two major limitations in the existing studies on retinal vessel segmentation: (1) Most existing works are restricted to one modality, i.e, the Color Fundus (CF). However, multi-modality retinal images are used every day in the study of retina and retinal diseases, and the study of vessel segmentation on the other modalities is scarce; (2) Even though a small amount of works extended their experiments to limited new modalities such as the Multi-Color Scanning Laser Ophthalmoscopy (MC), these works still require finetuning a separate model for the new modality. And the finetuning will require extra training data, which is difficult to acquire. In this work, we present a foundational universal vessel segmentation model (UVSM) for multi-modality retinal images. Not only do we perform the study on a much wider range of modalities, but also we propose a universal model to segment the vessels in all these commonly-used modalities. Despite being much more versatile comparing with existing methods, our universal model still demonstrates comparable performance with the state-of-the- art finetuned methods. To the best of our knowledge, this is the first work that achieves cross-modality retinal vessel segmentation and also the first work to study retinal vessel segmentation in some novel modalities.

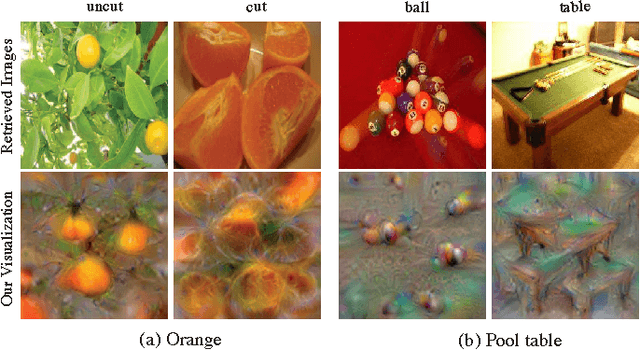

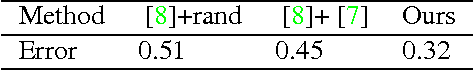

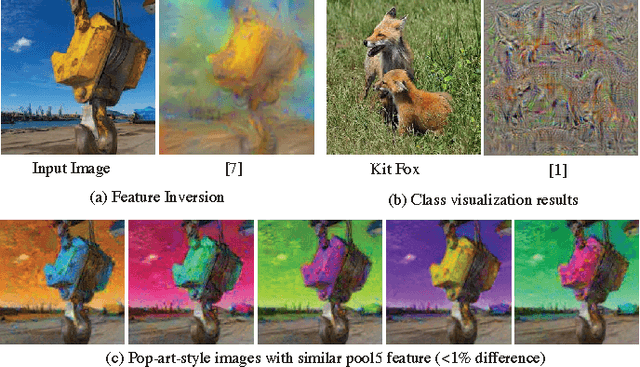

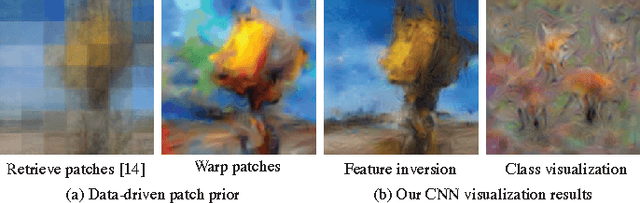

Understanding Intra-Class Knowledge Inside CNN

Jul 21, 2015

Abstract:Convolutional Neural Network (CNN) has been successful in image recognition tasks, and recent works shed lights on how CNN separates different classes with the learned inter-class knowledge through visualization. In this work, we instead visualize the intra-class knowledge inside CNN to better understand how an object class is represented in the fully-connected layers. To invert the intra-class knowledge into more interpretable images, we propose a non-parametric patch prior upon previous CNN visualization models. With it, we show how different "styles" of templates for an object class are organized by CNN in terms of location and content, and represented in a hierarchical and ensemble way. Moreover, such intra-class knowledge can be used in many interesting applications, e.g. style-based image retrieval and style-based object completion.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge