Wenhan Xian

Communication-Efficient Adam-Type Algorithms for Distributed Data Mining

Oct 14, 2022

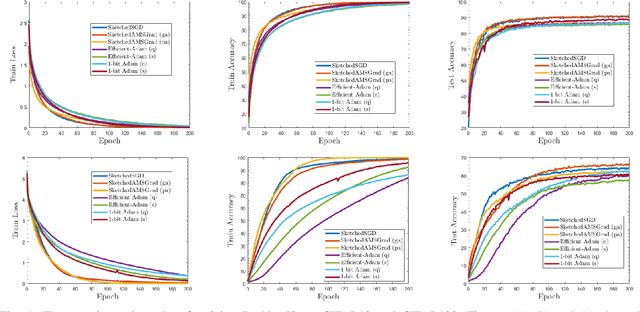

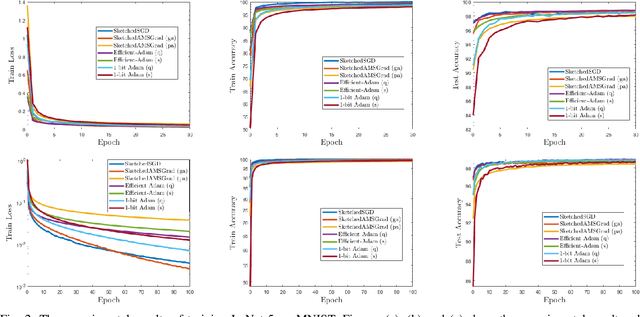

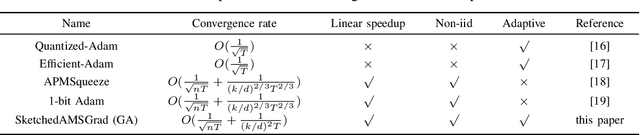

Abstract: Distributed data mining is an emerging research topic that aims to solve hard data mining tasks on big data effectively and efficiently by partitioning the data and computing on different worker nodes instead of one centralized server. Nevertheless, distributed learning methods often suffer from a communication bottleneck when the network bandwidth is limited or the model is large. To alleviate this critical issue, many gradient compression methods have recently been proposed to reduce the communication cost of various optimization algorithms. However, current applications of gradient compression to adaptive gradient methods, which are widely adopted because of their excellent performance in training DNNs, do not achieve the same ideal compression rate or convergence rate as Sketched-SGD. To address this limitation, in this paper we propose a class of novel distributed Adam-type algorithms (i.e., SketchedAMSGrad) that use sketching, a promising compression technique that reduces the communication cost from $O(d)$ to $O(\log(d))$, where $d$ is the parameter dimension. In our theoretical analysis, we prove that our new algorithm achieves a fast convergence rate of $O(\frac{1}{\sqrt{nT}} + \frac{1}{(k/d)^2 T})$ with a communication cost of $O(k \log(d))$ per iteration. Compared with single-machine AMSGrad, our algorithm achieves linear speedup with respect to the number of workers $n$. Experimental results on training various DNNs in the distributed paradigm validate the efficiency of our algorithms.
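The abstract names sketching as the compression primitive but does not describe it. As a rough illustration of why sketches suit distributed gradient aggregation, here is a minimal Count Sketch in Python with NumPy. This is a sketch under stated assumptions, not SketchedAMSGrad: it omits the AMSGrad update and any other machinery the paper's algorithm adds, and the names `CountSketch` and `top_k`, the table sizes, and the use of stored random lookup tables in place of hash functions are all hypothetical choices made for illustration. Each worker would only need to send its `rows x cols` table, whose size does not depend on `d`.

```python
import numpy as np


class CountSketch:
    """Minimal Count Sketch: a rows x cols table plus per-row bucket and sign hashes."""

    def __init__(self, d, rows, cols, seed=0):
        rng = np.random.default_rng(seed)
        self.rows, self.cols = rows, cols
        # Assumption: hashes are stored as random lookup tables; every worker uses
        # the same seed so that all sketches are built with identical hashes.
        self.buckets = rng.integers(0, cols, size=(rows, d))
        self.signs = rng.choice([-1.0, 1.0], size=(rows, d))
        self.table = np.zeros((rows, cols))

    def accumulate(self, vec):
        # Each coordinate adds sign * value into one bucket per row.
        for r in range(self.rows):
            self.table[r] += np.bincount(self.buckets[r], weights=self.signs[r] * vec,
                                         minlength=self.cols)

    def merge(self, other):
        # Sketching is linear: summing tables gives the sketch of the summed vectors.
        self.table += other.table

    def estimate(self):
        # One estimate of every coordinate per row, combined by the median.
        per_row = np.stack([self.signs[r] * self.table[r, self.buckets[r]]
                            for r in range(self.rows)])
        return np.median(per_row, axis=0)


def top_k(vec, k):
    """Keep the k largest-magnitude entries of vec and zero the rest."""
    idx = np.argpartition(np.abs(vec), -k)[-k:]
    out = np.zeros_like(vec)
    out[idx] = vec[idx]
    return out


# Usage: two workers whose gradients share three heavy coordinates.
d, k = 100_000, 3
rng = np.random.default_rng(1)
heavy = np.zeros(d)
heavy[[3, 500, 900]] = [100.0, -80.0, 60.0]
grads = [heavy / 2 + 0.01 * rng.standard_normal(d) for _ in range(2)]

sketches = []
for g in grads:
    s = CountSketch(d, rows=9, cols=500, seed=42)  # shared seed => shared hashes
    s.accumulate(g)
    sketches.append(s)  # only s.table (9 x 500 floats) would be communicated

aggregate = sketches[0]
for s in sketches[1:]:
    aggregate.merge(s)

recovered = top_k(aggregate.estimate(), k)
print(sorted(np.nonzero(recovered)[0].tolist()))
```

Because the sketch is linear, a server can add the workers' tables without decompressing them, and the median over rows keeps a coordinate's estimate robust when it collides with a heavy coordinate in a few rows. With these settings the three heavy coordinates are expected to be recovered with high probability; a practical implementation would typically use hash functions instead of stored lookup tables so that memory does not grow with `d`.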

Distributed Dynamic Safe Screening Algorithms for Sparse Regularization

Apr 23, 2022

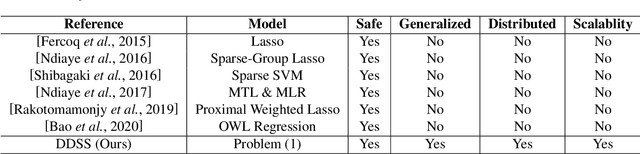

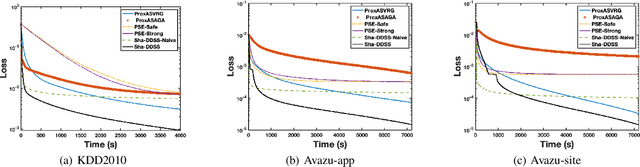

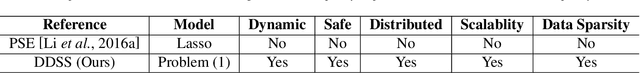

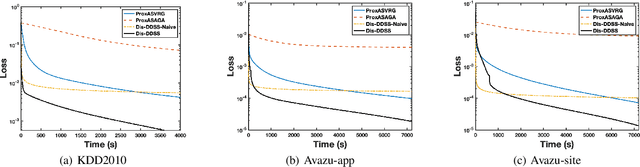

Abstract: Distributed optimization is one of the most efficient and widely used approaches for training models on massive numbers of samples. However, in the era of big data, many large-scale learning problems involve both massive samples and high-dimensional features. Safe screening is a popular technique for accelerating high-dimensional models by discarding inactive features, i.e., features whose coefficients are zero. Nevertheless, existing safe screening methods are limited to the sequential setting. In this paper, we propose a new distributed dynamic safe screening (DDSS) method for sparsity-regularized models and apply it to shared-memory and distributed-memory architectures, respectively; it achieves significant speedup without any loss of accuracy by simultaneously exploiting the sparsity of both the model and the dataset. To the best of our knowledge, this is the first distributed dynamic safe screening method. Theoretically, we prove that the proposed method achieves a linear convergence rate with lower overall complexity and, almost surely, eliminates almost all inactive features within a finite number of iterations. Finally, extensive experiments on benchmark datasets confirm the superiority of the proposed method.
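DDSS itself is distributed, and the abstract does not spell out its screening rule. For intuition about what a dynamic safe screening rule does, here is a single-machine sketch of the Gap Safe rule from the screening literature, applied to the Lasso $\min_w \frac{1}{2}\|y - Xw\|^2 + \lambda\|w\|_1$ inside proximal gradient descent (ISTA). It is not the authors' method; the function names, the screening frequency `screen_every`, and the absolute stopping tolerance `tol` are hypothetical choices. At each screening step the residual is rescaled into a dual-feasible point $\theta$, the duality gap $G$ bounds the distance to the dual optimum by $\sqrt{2G}/\lambda$, and any feature $j$ with $|x_j^\top\theta| + \frac{\sqrt{2G}}{\lambda}\|x_j\| < 1$ is provably zero at the optimum and is dropped.

```python
import numpy as np


def soft_threshold(z, t):
    """Proximal operator of t * ||.||_1."""
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)


def gap_safe_lasso(X, y, lam, n_iter=500, screen_every=10, tol=1e-10):
    """ISTA for 0.5*||y - Xw||^2 + lam*||w||_1 with dynamic Gap Safe screening.

    screen_every=0 disables screening (plain ISTA). Returns (w, active_mask).
    """
    d = X.shape[1]
    w = np.zeros(d)
    active = np.ones(d, dtype=bool)
    col_norms = np.linalg.norm(X, axis=0)
    step = 1.0 / np.linalg.norm(X, 2) ** 2  # 1 / Lipschitz constant of the gradient
    for it in range(n_iter):
        resid = y - X[:, active] @ w[active]
        if screen_every and it % screen_every == 0:
            # Rescale the residual into a dual-feasible point: |x_j^T theta| <= 1 for all j.
            theta = resid / max(lam, np.max(np.abs(X.T @ resid)))
            primal = 0.5 * resid @ resid + lam * np.abs(w).sum()
            dual = 0.5 * y @ y - 0.5 * lam ** 2 * np.sum((theta - y / lam) ** 2)
            gap = max(primal - dual, 0.0)
            if gap <= tol:
                break  # converged; also avoids screening on round-off noise
            radius = np.sqrt(2.0 * gap) / lam  # the dual optimum lies in this ball
            # Safe rule: if the whole ball satisfies |x_j^T theta| < 1, then w*_j = 0.
            active &= np.abs(X.T @ theta) + radius * col_norms >= 1.0
            w[~active] = 0.0
            resid = y - X[:, active] @ w[active]
        grad = -X[:, active].T @ resid
        w[active] = soft_threshold(w[active] - step * grad, step * lam)
    return w, active


# Usage: a sparse ground truth; compare screened and unscreened solutions.
rng = np.random.default_rng(0)
X = rng.standard_normal((200, 50))
w_true = np.zeros(50)
w_true[:5] = [3.0, -2.0, 1.5, 1.0, -1.0]
y = X @ w_true + 0.1 * rng.standard_normal(200)
lam = 0.5 * np.max(np.abs(X.T @ y))  # half of lam_max, above which w* = 0

w_screened, active = gap_safe_lasso(X, y, lam)
w_plain, _ = gap_safe_lasso(X, y, lam, screen_every=0)
print(active.sum(), "of", X.shape[1], "features kept")
print(np.max(np.abs(w_screened - w_plain)))
```

Comparing the screened and unscreened runs is a quick check that screening only removes features that are zero at the optimum, so the solution is unchanged; this is the "without any loss of accuracy" property that safe screening is meant to provide. Stopping once the gap falls below `tol` is a practical safeguard: with a near-zero gap the safe ball shrinks to the size of floating-point error, where the test could misfire.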