Wengui Zhang

Investigating Memory Failure Prediction Across CPU Architectures

Jun 08, 2024

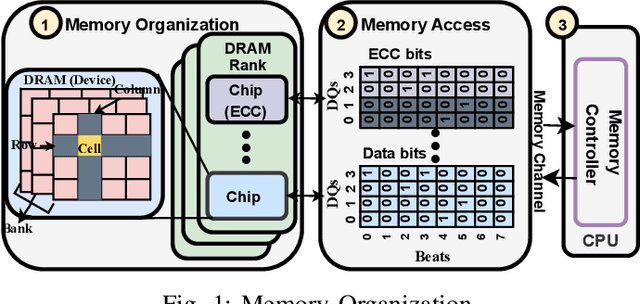

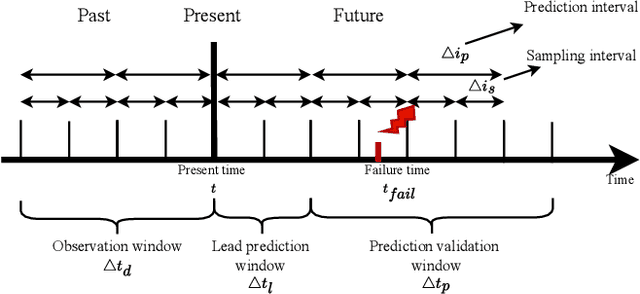

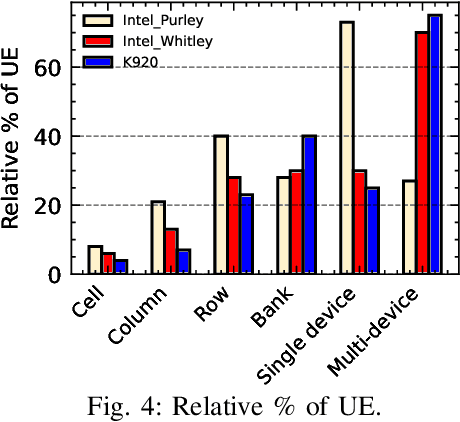

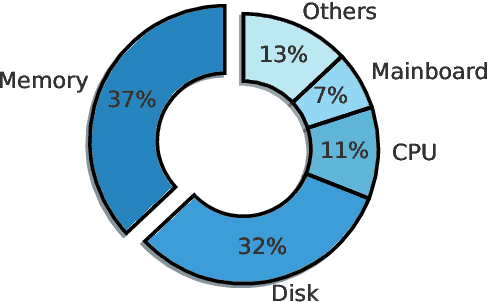

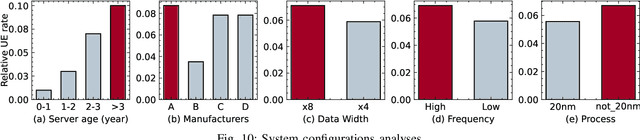

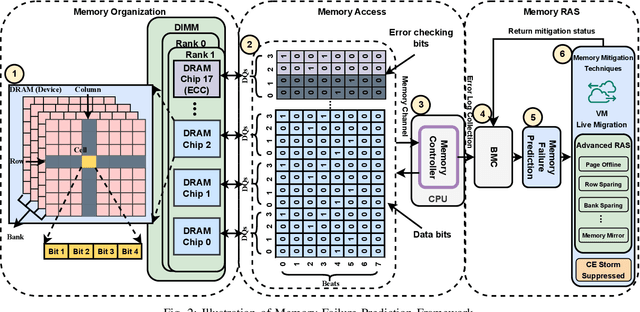

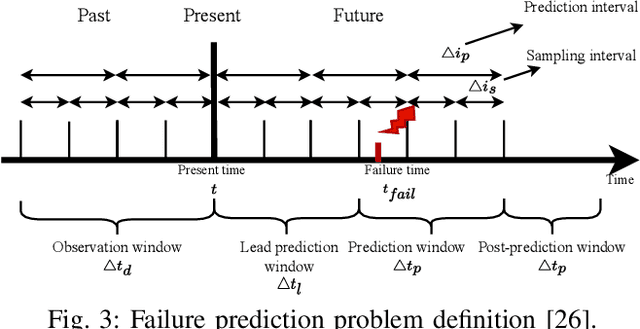

Abstract:Large-scale datacenters often experience memory failures, where Uncorrectable Errors (UEs) highlight critical malfunction in Dual Inline Memory Modules (DIMMs). Existing approaches primarily utilize Correctable Errors (CEs) to predict UEs, yet they typically neglect how these errors vary between different CPU architectures, especially in terms of Error Correction Code (ECC) applicability. In this paper, we investigate the correlation between CEs and UEs across different CPU architectures, including X86 and ARM. Our analysis identifies unique patterns of memory failure associated with each processor platform. Leveraging Machine Learning (ML) techniques on production datasets, we conduct the memory failure prediction in different processors' platforms, achieving up to 15% improvements in F1-score compared to the existing algorithm. Finally, an MLOps (Machine Learning Operations) framework is provided to consistently improve the failure prediction in the production environment.

Exploring Error Bits for Memory Failure Prediction: An In-Depth Correlative Study

Dec 18, 2023

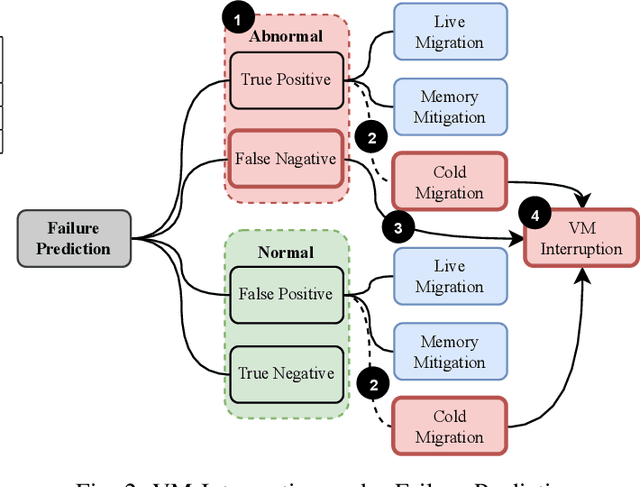

Abstract:In large-scale datacenters, memory failure is a common cause of server crashes, with Uncorrectable Errors (UEs) being a major indicator of Dual Inline Memory Module (DIMM) defects. Existing approaches primarily focus on predicting UEs using Correctable Errors (CEs), without fully considering the information provided by error bits. However, error bit patterns have a strong correlation with the occurrence of UEs. In this paper, we present a comprehensive study on the correlation between CEs and UEs, specifically emphasizing the importance of spatio-temporal error bit information. Our analysis reveals a strong correlation between spatio-temporal error bits and UE occurrence. Through evaluations using real-world datasets, we demonstrate that our approach significantly improves prediction performance by 15% in F1-score compared to the state-of-the-art algorithms. Overall, our approach effectively reduces the number of virtual machine interruptions caused by UEs by approximately 59%.

* Published at ICCAD 2023

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge