Vipul Bansal

Activation Functions: Dive into an optimal activation function

Feb 24, 2022

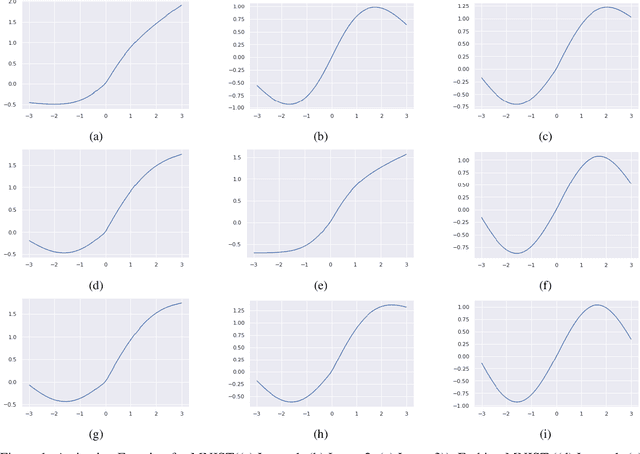

Abstract: Activation functions have emerged as one of the essential components of neural networks, and the choice of an adequate activation function can affect the accuracy of these models. In this study, we search for an optimal activation function by defining it as a weighted sum of existing activation functions and then optimizing these weights while training the network. The study uses three activation functions, ReLU, tanh, and sin, over three popular image datasets, MNIST, FashionMNIST, and KMNIST. We observe that the ReLU activation function can easily overshadow the other activation functions. We also see that initial layers prefer ReLU or LeakyReLU-type activation functions, whereas deeper layers tend to prefer more convergent activation functions.
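The idea of an activation defined as a weighted sum of ReLU, tanh and sin, with trainable weights, can be sketched in plain Python. This is a minimal illustration, not the authors' code. The class name `MixedActivation`, the uniform initialisation and the plain SGD update are assumptions. A toy regression toward ReLU outputs also stands in for the upstream gradient that backpropagation would supply inside a real network.

```python
import math

# Candidate activations named in the abstract. The dictionary, class name,
# uniform initialisation and SGD update below are illustrative assumptions.
BASIS = {
    "relu": lambda x: max(0.0, x),
    "tanh": math.tanh,
    "sin": math.sin,
}


class MixedActivation:
    """f(x) = sum_i w_i * g_i(x), with one trainable weight per candidate g_i."""

    def __init__(self, names=("relu", "tanh", "sin")):
        self.names = list(names)
        # Start from an even mix so no candidate is favoured at initialisation.
        self.w = [1.0 / len(self.names)] * len(self.names)

    def basis(self, x):
        return [BASIS[n](x) for n in self.names]

    def __call__(self, x):
        return sum(wi * gi for wi, gi in zip(self.w, self.basis(x)))

    def grad_w(self, x, upstream=1.0):
        # df/dw_i = g_i(x), multiplied by the gradient arriving from the loss.
        return [upstream * gi for gi in self.basis(x)]


def mse(act, xs, targets):
    return sum((act(x) - t) ** 2 for x, t in zip(xs, targets)) / len(xs)


def sgd_step(act, xs, targets, lr=0.1):
    # One gradient step on the mixing weights under a mean squared-error loss.
    # In a full network the upstream gradient would come from backpropagation.
    grads = [0.0] * len(act.w)
    for x, t in zip(xs, targets):
        upstream = 2.0 * (act(x) - t) / len(xs)
        for i, g in enumerate(act.grad_w(x, upstream)):
            grads[i] += g
    act.w = [wi - lr * gi for wi, gi in zip(act.w, grads)]


# Toy usage: fit the mixing weights so that f imitates ReLU on a few points.
xs = [-3.0, -2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
targets = [max(0.0, x) for x in xs]
act = MixedActivation()
before = mse(act, xs, targets)
for _ in range(200):
    sgd_step(act, xs, targets)
after = mse(act, xs, targets)
print(f"loss {before:.4f} -> {after:.4f}, weights {act.w}")
```

Giving every layer its own `MixedActivation` instance is what would let different layers settle on different mixes. That per-layer freedom is what makes the layer-wise preferences described in the abstract observable.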

Tensor Ring Parametrized Variational Quantum Circuits for Large Scale Quantum Machine Learning

Jan 21, 2022

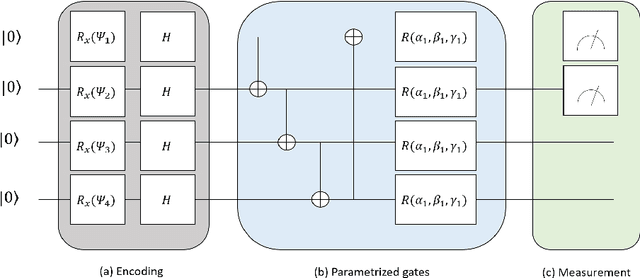

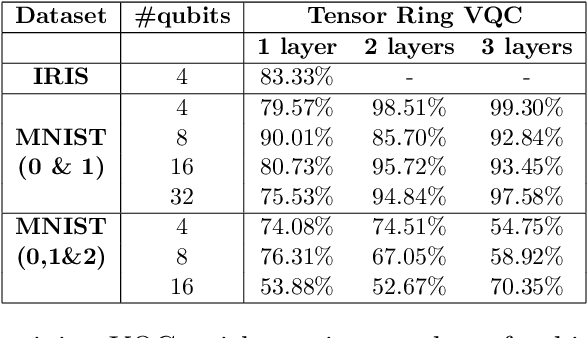

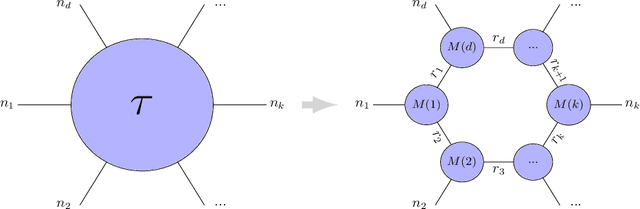

Abstract: Quantum Machine Learning (QML) is an emerging research area advocating the use of quantum computing for advancement in machine learning. Since the discovery that Parametrized Variational Quantum Circuits (VQC) can replace Artificial Neural Networks, they have been widely adopted for different tasks in Quantum Machine Learning. However, despite their potential to outperform neural networks, VQCs are limited to small-scale applications because quantum circuits are difficult to scale. To address this shortcoming, we propose an algorithm that compresses the quantum state within the circuit using a tensor ring representation. When the input qubit state is in tensor ring representation, single-qubit gates preserve that representation. The same is not true for two-qubit gates in general, so an approximation is used to keep the output in tensor ring representation. With this approximation, storage and computational time grow linearly in the number of qubits and the number of layers, compared with the exponential growth of exact simulation algorithms. This approximation is used to implement the tensor ring VQC. The parameters of the tensor ring VQC are trained with a gradient-descent-based algorithm that uses efficient approaches for backpropagation. The proposed approach is evaluated on two datasets, Iris and MNIST, for the classification task to show the improved accuracy obtained with more qubits. We achieve a test accuracy of 83.33% on the Iris dataset and a maximum of 99.30% and 76.31% on binary and ternary classification of the MNIST dataset, respectively, using various circuit architectures. The results on the Iris dataset outperform those of a VQC implemented in Qiskit, and, because the approach is scalable, they demonstrate the potential of VQCs for large-scale Quantum Machine Learning applications.
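The abstract's central mechanism can be illustrated with a small NumPy sketch. Single-qubit gates preserve the tensor ring form, while two-qubit gates need an approximation to stay in it. The sketch assumes tensor ring cores of shape (r_k, 2, r_{k+1}), with the last bond wrapping around to the first. The abstract does not say which approximation is used, so the truncated SVD for two-qubit gates is an assumed, commonly used choice. The function names are hypothetical, not the paper's implementation.

```python
import numpy as np

# Tensor ring (TR) state: core k has shape (r_k, 2, r_{k+1}), and the last bond
# wraps around to the first, so psi[i1..in] = Tr(A1[:, i1, :] @ ... @ An[:, in, :]).


def zero_state(n):
    # |00...0> as a rank-1 tensor ring (hypothetical helper).
    core = np.zeros((1, 2, 1))
    core[0, 0, 0] = 1.0
    return [core.copy() for _ in range(n)]


def to_statevector(cores):
    # Exact contraction; exponential in n, so only for checking small examples.
    r0 = cores[0].shape[0]
    acc = cores[0]
    for core in cores[1:]:
        acc = np.einsum("aib,bjc->aijc", acc, core).reshape(r0, -1, core.shape[2])
    return np.einsum("aia->i", acc)  # close the ring: trace over the outer bonds


def apply_single_qubit_gate(cores, gate, k):
    # A 2x2 gate acts only on the physical index of core k, so bond ranks are unchanged.
    new = list(cores)
    new[k] = np.einsum("ji,aib->ajb", gate, cores[k])
    return new


def apply_two_qubit_gate(cores, gate, k, max_rank):
    # Gate on neighbouring qubits k and k+1 (requires k + 1 < n). Merging the two
    # cores and splitting them again by SVD can raise the bond rank, so only the
    # max_rank largest singular values are kept (the assumed approximation).
    a, b = cores[k], cores[k + 1]
    ra, rc = a.shape[0], b.shape[2]
    theta = np.einsum("aib,bjc->aijc", a, b)
    g = gate.reshape(2, 2, 2, 2)  # g[p, q, i, j] = <pq|gate|ij>
    theta = np.einsum("pqij,aijc->apqc", g, theta)
    u, s, vh = np.linalg.svd(theta.reshape(ra * 2, 2 * rc), full_matrices=False)
    chi = min(max_rank, s.size)
    new = list(cores)
    new[k] = (u[:, :chi] * s[:chi]).reshape(ra, 2, chi)
    new[k + 1] = vh[:chi, :].reshape(chi, 2, rc)
    return new


H = np.array([[1.0, 1.0], [1.0, -1.0]]) / np.sqrt(2.0)
CNOT = np.array([[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 0, 1], [0, 0, 1, 0]], dtype=float)

# Prepare a Bell pair on the first two of three qubits.
state = apply_single_qubit_gate(zero_state(3), H, 0)
exact = apply_two_qubit_gate(state, CNOT, 0, max_rank=2)
truncated = apply_two_qubit_gate(state, CNOT, 0, max_rank=1)
print(np.round(to_statevector(exact), 4))  # amplitude 1/sqrt(2) on |000> and |110>
print(np.linalg.norm(to_statevector(truncated)) ** 2)  # rank-1 cut loses half the probability mass
```

Each gate touches only one or two cores, so its cost depends on the bond rank rather than on the total number of qubits. This locality is where the linear scaling claimed above comes from. The full statevector contraction is included only to check the result on a tiny example.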

Computational Models for Academic Performance Estimation

Sep 06, 2020

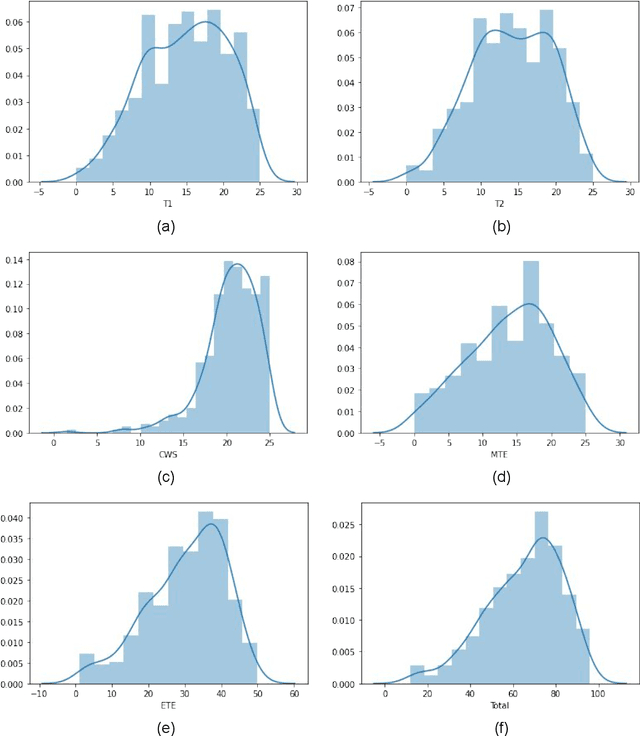

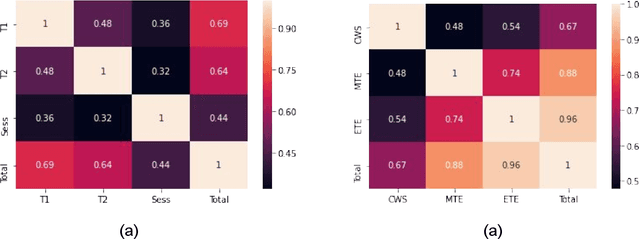

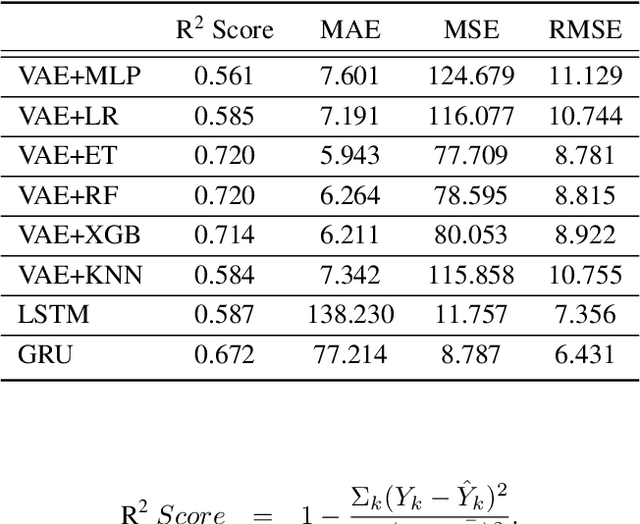

Abstract: Evaluating students' performance for course completion has been a major problem for both students and faculty during the work-from-home period of the COVID-19 pandemic. To this end, this paper presents an in-depth analysis of deep learning and machine learning approaches for building an automated student performance estimation system that works on partially available academic records. Our main contributions are (a) a large dataset covering fifteen courses (shared publicly for academic research), (b) statistical analysis and ablations on the estimation problem for this dataset, and (c) predictive analysis through deep learning approaches and comparison with other state-of-the-art machine learning algorithms. Unlike previous approaches that rely on feature engineering or logical function deduction, our approach is fully data-driven and thus highly generic, with better performance across different prediction tasks.
