Vincent Wang-Mascianica

A Pattern Language for Machine Learning Tasks

Jul 02, 2024

Abstract: Idealised as universal approximators, learners such as neural networks can be viewed as "variable functions" that may become one of a range of concrete functions after training. In the same way that equations constrain the possible values of variables in algebra, we may view objective functions as constraints on the behaviour of learners. We extract the equivalences perfectly optimised objective functions impose, calling them "tasks". For these tasks, we develop a formal graphical language that allows us to: (1) separate the core tasks of a behaviour from its implementation details; (2) reason about and design behaviours model-agnostically; and (3) simply describe and unify approaches in machine learning across domains. As proof-of-concept, we design a novel task that enables converting classifiers into generative models we call "manipulators", which we implement by directly translating task specifications into code. The resulting models exhibit capabilities such as style transfer and interpretable latent-space editing, without the need for custom architectures, adversarial training or random sampling. We formally relate the behaviour of manipulators to GANs, and empirically demonstrate their competitive performance with VAEs. We report on experiments across vision and language domains aiming to characterise manipulators as approximate Bayesian inversions of discriminative classifiers.

Distilling Text into Circuits

Jan 25, 2023

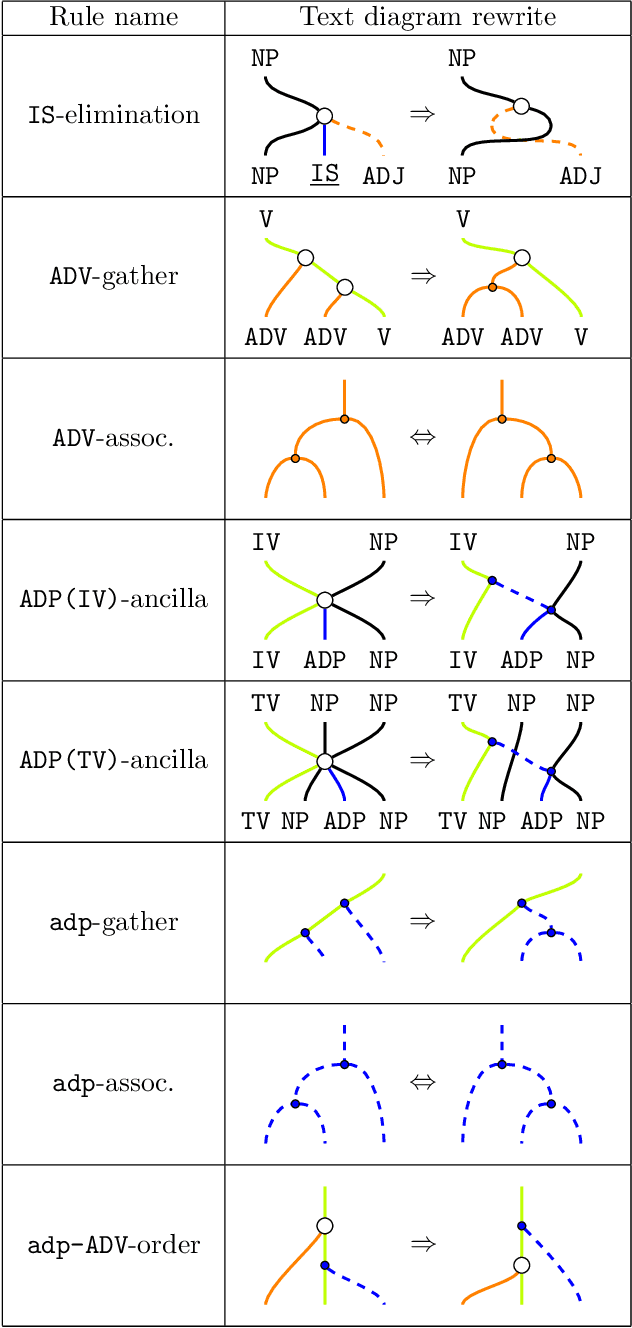

Abstract: This paper concerns the structure of meanings within natural language. Earlier, a framework named DisCoCirc was sketched that (1) is compositional and distributional (a.k.a. vectorial); (2) applies to general text; (3) captures linguistic 'connections' between meanings (cf. grammar); (4) updates word meanings as text progresses; (5) structures sentence types; (6) accommodates ambiguity. Here, we realise DisCoCirc for a substantial fragment of English. When passing to DisCoCirc's text circuits, some 'grammatical bureaucracy' is eliminated, that is, DisCoCirc displays a significant degree of (7) inter- and intra-language independence. That is, e.g., independence from word-order conventions that differ across languages, and independence from choices like many short sentences vs. few long sentences. This inter-language independence means our text circuits should carry over to other languages, unlike the language-specific typings of categorial grammars. Hence, text circuits are a lean structure for the 'actual substance of text', that is, the inner-workings of meanings within text across several layers of expressiveness (cf. words, sentences, text), and may capture what is truly universal beneath grammar. The elimination of grammatical bureaucracy also explains why DisCoCirc: (8) applies beyond language, e.g. to spatial, visual and other cognitive modes. While humans could not verbally communicate in terms of text circuits, machines can. We first define a 'hybrid grammar' for a fragment of English, i.e. a purpose-built, minimal grammatical formalism needed to obtain text circuits. We then detail a translation process such that all text generated by this grammar yields a text circuit. Conversely, for any text circuit obtained by freely composing the generators, there exists a text (with hybrid grammar) that gives rise to it. Hence: (9) text circuits are generative for text.

Talking Space: inference from spatial linguistic meanings

Sep 16, 2021

Abstract: This paper concerns the intersection of natural language and the physical space around us in which we live, that we observe and/or imagine things within. Many important features of language have spatial connotations, for example, many prepositions (like in, next to, after, on, etc.) are fundamentally spatial. Space is also a key factor of the meanings of many words/phrases/sentences/text, and space is a, if not the, key context for referencing (e.g. pointing) and embodiment. We propose a mechanism for how space and linguistic structure can be made to interact in a matching compositional fashion. Examples include Cartesian space, subway stations, chess pieces on a chessboard, and Penrose's staircase. The starting point for our construction is the DisCoCat model of compositional natural language meaning, which we relax to accommodate physical space. We address the issue of having multiple agents/objects in a space, including the case that each agent has different capabilities with respect to that space, e.g., the specific moves each chess piece can make, or the different velocities one may be able to reach. Once our model is in place, we show how inferences drawing from the structure of physical space can be made. We also show how a linguistic model of space can interact with other such models related to our senses and/or embodiment, such as the conceptual spaces of colour, taste and smell, resulting in a rich compositional model of meaning that is close to human experience and embodiment in the world.
