Vincent Tan

Provable Benefits of Multi-task RL under Non-Markovian Decision Making Processes

Oct 20, 2023

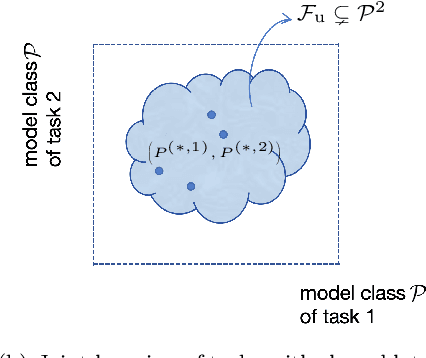

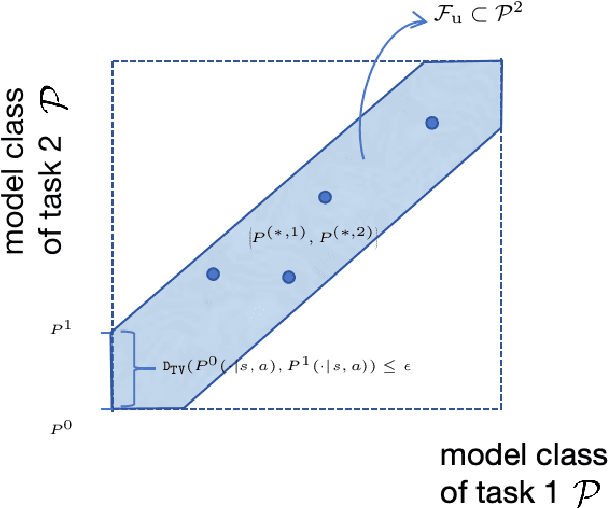

Abstract:In multi-task reinforcement learning (RL) under Markov decision processes (MDPs), the presence of shared latent structures among multiple MDPs has been shown to yield significant benefits to the sample efficiency compared to single-task RL. In this paper, we investigate whether such a benefit can extend to more general sequential decision making problems, such as partially observable MDPs (POMDPs) and more general predictive state representations (PSRs). The main challenge here is that the large and complex model space makes it hard to identify what types of common latent structure of multi-task PSRs can reduce the model complexity and improve sample efficiency. To this end, we posit a joint model class for tasks and use the notion of $\eta$-bracketing number to quantify its complexity; this number also serves as a general metric to capture the similarity of tasks and thus determines the benefit of multi-task over single-task RL. We first study upstream multi-task learning over PSRs, in which all tasks share the same observation and action spaces. We propose a provably efficient algorithm UMT-PSR for finding near-optimal policies for all PSRs, and demonstrate that the advantage of multi-task learning manifests if the joint model class of PSRs has a smaller $\eta$-bracketing number compared to that of individual single-task learning. We also provide several example multi-task PSRs with small $\eta$-bracketing numbers, which reap the benefits of multi-task learning. We further investigate downstream learning, in which the agent needs to learn a new target task that shares some commonalities with the upstream tasks via a similarity constraint. By exploiting the learned PSRs from the upstream, we develop a sample-efficient algorithm that provably finds a near-optimal policy.

Unsupervised Image Noise Modeling with Self-Consistent GAN

Jun 13, 2019

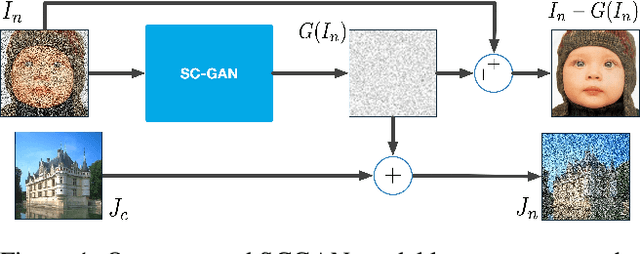

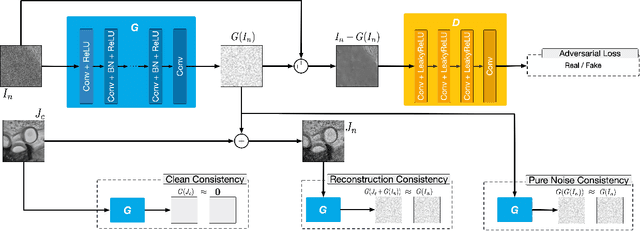

Abstract:Noise modeling lies in the heart of many image processing tasks. However, existing deep learning methods for noise modeling generally require clean and noisy image pairs for model training; these image pairs are difficult to obtain in many realistic scenarios. To ameliorate this problem, we propose a self-consistent GAN (SCGAN), that can directly extract noise maps from noisy images, thus enabling unsupervised noise modeling. In particular, the SCGAN introduces three novel self-consistent constraints that are complementary to one another, viz.: the noise model should produce a zero response over a clean input; the noise model should return the same output when fed with a specific pure noise input; and the noise model also should re-extract a pure noise map if the map is added to a clean image. These three constraints are simple yet effective. They jointly facilitate unsupervised learning of a noise model for various noise types. To demonstrate its wide applicability, we deploy the SCGAN on three image processing tasks including blind image denoising, rain streak removal, and noisy image super-resolution. The results demonstrate the effectiveness and superiority of our method over the state-of-the-art methods on a variety of benchmark datasets, even though the noise types vary significantly and paired clean images are not available.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge