Vincent Danos

Efficient estimates of optimal transport via low-dimensional embeddings

Nov 08, 2021

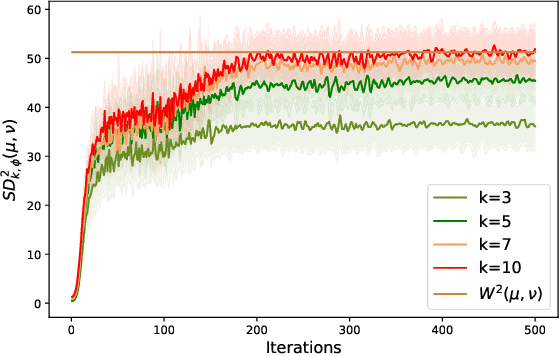

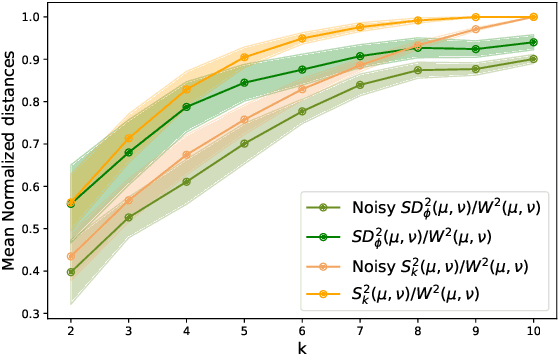

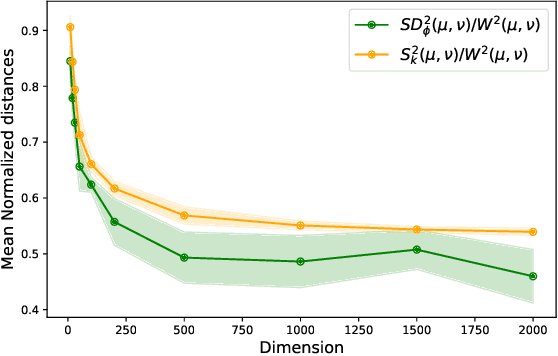

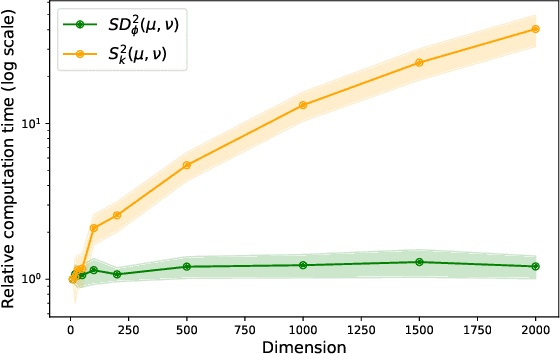

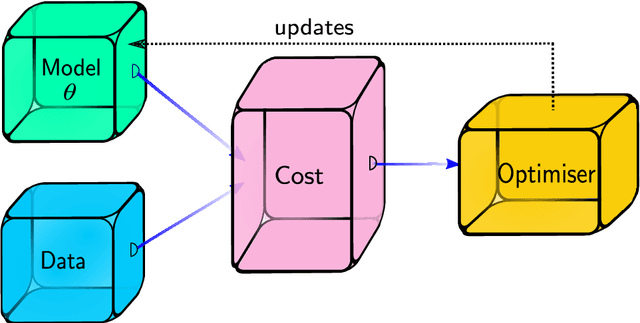

Abstract: Optimal transport distances (OT) have been widely used in recent work in Machine Learning as ways to compare probability distributions. These are costly to compute when the data lives in high dimension. Recent work by Paty et al., 2019, aims specifically at reducing this cost by computing OT using low-rank projections of the data (seen as discrete measures). We extend this approach and show that one can approximate OT distances by using more general families of maps provided they are 1-Lipschitz. The best estimate is obtained by maximising OT over the given family. As OT calculations are done after mapping data to a lower dimensional space, our method scales well with the original data dimension. We demonstrate the idea with neural networks.
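As a rough illustration of the idea in this abstract, the sketch below uses the simplest 1-Lipschitz family: projections onto unit vectors. It is not the paper's neural-network method. Each projection maps the data to one dimension, where the 1-Wasserstein distance between equal-size samples reduces to sorting, and the estimate is the maximum over randomly drawn directions. Random search stands in for the optimisation step, and every function name and parameter here (`wasserstein_1d`, `max_projected_w1`, `n_directions`, `seed`) is hypothetical. Because each map is 1-Lipschitz, the result is a lower bound on the 1-Wasserstein distance in the original space.

```python
import math
import random


def wasserstein_1d(xs, ys):
    """W1 between two equal-size, uniformly weighted samples on the real line."""
    assert len(xs) == len(ys)
    # In 1D the optimal coupling matches sorted points in order.
    return sum(abs(a - b) for a, b in zip(sorted(xs), sorted(ys))) / len(xs)


def random_unit_vector(dim, rng):
    """Draw a direction uniformly on the unit sphere."""
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(c * c for c in v))
    return [c / norm for c in v]


def project(points, direction):
    """Apply the 1-Lipschitz map x -> <x, direction> to each point."""
    return [sum(p * d for p, d in zip(point, direction)) for point in points]


def max_projected_w1(X, Y, n_directions=200, seed=0):
    """Largest projected W1 over random unit directions (assumed search strategy)."""
    rng = random.Random(seed)
    dim = len(X[0])
    best = 0.0
    for _ in range(n_directions):
        u = random_unit_vector(dim, rng)
        best = max(best, wasserstein_1d(project(X, u), project(Y, u)))
    return best


if __name__ == "__main__":
    rng = random.Random(1)
    X = [[rng.gauss(0.0, 1.0) for _ in range(5)] for _ in range(20)]
    # Y is X translated by a vector of norm 3, so the true W1 is 3.
    Y = [[c + (3.0 if i == 0 else 0.0) for i, c in enumerate(p)] for p in X]
    print(max_projected_w1(X, Y))
```

The cost of each evaluation is dominated by a sort in one dimension, so the original data dimension only enters through the projection step.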

The Born Supremacy: Quantum Advantage and Training of an Ising Born Machine

Apr 03, 2019

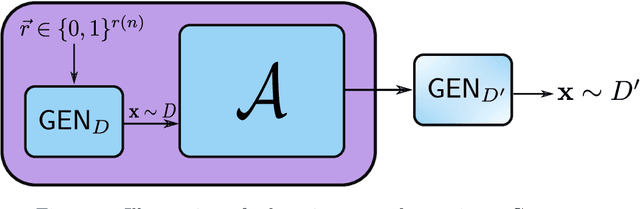

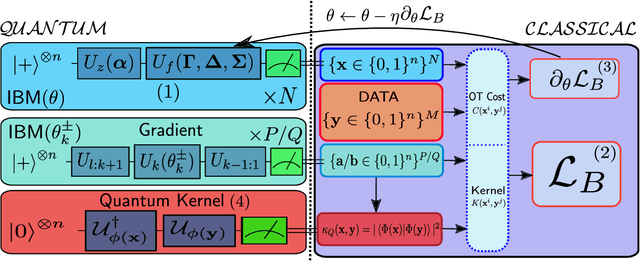

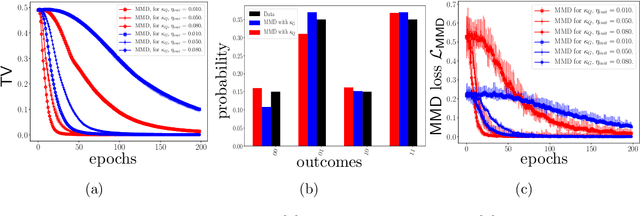

Abstract: The search for an application of near-term quantum devices is widespread. Quantum Machine Learning is touted as a potential utilisation of such devices, particularly those which are out of the reach of the simulation capabilities of classical computers. In this work, we propose a generative Quantum Machine Learning Model, called the Ising Born Machine (IBM), which we show cannot, in the worst case, and up to suitable notions of error, be simulated efficiently by a classical device. We also show this holds for all the circuit families encountered during training. In particular, we explore quantum circuit learning using non-universal circuits derived from Ising Model Hamiltonians, which are implementable on near term quantum devices. We propose two novel training methods for the IBM by utilising the Stein Discrepancy and the Sinkhorn Divergence cost functions. We show numerically, both using a simulator within Rigetti's Forest platform and on the Aspen-1 16Q chip, that the cost functions we suggest outperform the more commonly used Maximum Mean Discrepancy (MMD) for differentiable training. We also propose an improvement to the MMD by proposing a novel utilisation of quantum kernels which we demonstrate provides improvements over its classical counterpart. We discuss the potential of these methods to learn 'hard' quantum distributions, a feat which would demonstrate the advantage of quantum over classical computers, and provide the first formal definitions for what we call 'Quantum Learning Supremacy'. Finally, we propose a novel view on the area of quantum circuit compilation by using the IBM to 'mimic' target quantum circuits using classical output data only.
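For readers unfamiliar with the Maximum Mean Discrepancy baseline mentioned in this abstract, here is a minimal sketch of a sample-based MMD estimate between two sets of bitstrings. It assumes a classical Gaussian kernel and the biased (V-statistic) estimator. The paper's quantum kernel, its Stein and Sinkhorn costs, and its training loop are not reproduced, and the names `gaussian_kernel`, `mmd_squared` and `bandwidth` are hypothetical.

```python
import math


def gaussian_kernel(x, y, bandwidth=1.0):
    """Classical Gaussian kernel on equal-length vectors (assumed kernel choice)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))


def mmd_squared(X, Y, kernel=gaussian_kernel):
    """Biased (V-statistic) estimate of the squared MMD between samples X and Y."""
    def mean_kernel(A, B):
        return sum(kernel(a, b) for a in A for b in B) / (len(A) * len(B))

    # MMD^2 = E[k(x, x')] + E[k(y, y')] - 2 E[k(x, y)]
    return mean_kernel(X, X) + mean_kernel(Y, Y) - 2.0 * mean_kernel(X, Y)


if __name__ == "__main__":
    # Toy samples of 3-bit strings, standing in for model output and target data.
    model_samples = [(0, 0, 0), (0, 0, 1), (0, 1, 0), (0, 0, 0)]
    data_samples = [(1, 1, 1), (1, 1, 0), (1, 0, 1), (1, 1, 1)]
    print(mmd_squared(model_samples, data_samples))
```

With a positive semi-definite kernel this biased estimate is non-negative, and it is zero when the two sample sets coincide, which is what makes it usable as a training cost.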

Proceedings Fifth Workshop on Developments in Computational Models--Computational Models From Nature

Nov 15, 2009

Abstract: The special theme of DCM 2009, co-located with ICALP 2009, concerned Computational Models From Nature, with a particular emphasis on computational models derived from physics and biology. The intention was to bring together different approaches - in a community with a strong foundational background as proffered by the ICALP attendees - to create inspirational cross-boundary exchanges, and to lead to innovative further research. Specifically DCM 2009 sought contributions in quantum computation and information, probabilistic models, chemical, biological and bio-inspired ones, including spatial models, growth models and models of self-assembly. Contributions putting to the test logical or algorithmic aspects of computing (e.g., continuous computing with dynamical systems, or solid state computing models) were also very much welcomed.
