Victor P. Hamran

Removing Adverse Volumetric Effects From Trained Neural Radiance Fields

Nov 17, 2023

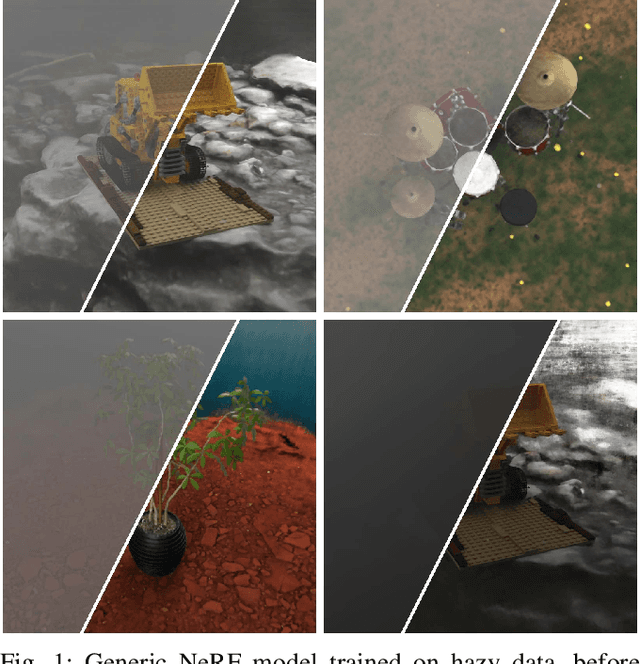

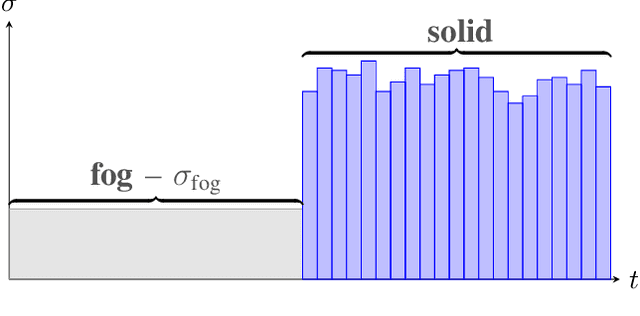

Abstract: While neural radiance fields (NeRFs) have been explored in a range of challenging settings, only very recently have contributions focused on the use of NeRF in foggy environments. We argue that traditional NeRF models are able to replicate scenes filled with fog, and we propose a method to remove the fog when synthesizing novel views. By calculating the global contrast of a scene, we can estimate a density threshold that, when applied, removes all visible fog. This makes it possible to use NeRF to render clear views of objects of interest located in fog-filled environments. Additionally, to benchmark performance on such scenes, we introduce a new dataset that extends some of the original synthetic NeRF scenes with fog and natural environments. The code, dataset, and video results can be found on our project page: https://vegardskui.com/fognerf/
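To make the idea of a density threshold concrete, the following is a minimal sketch and not the authors' implementation. It composites samples along a ray with the standard NeRF quadrature and zeroes any sample whose density falls below a threshold. The names `render_ray` and `rms_contrast`, the toy sample values, the hand-picked threshold of 1.0, and the use of RMS contrast as the global contrast measure are all assumptions made for illustration; the abstract does not specify how the threshold is derived from the contrast.

```python
import math


def render_ray(densities, colors, deltas, threshold=0.0):
    """Composite one grayscale ray; samples with density < threshold are treated as empty."""
    transmittance = 1.0  # fraction of light still reaching the camera
    pixel = 0.0
    for sigma, color, delta in zip(densities, colors, deltas):
        if sigma < threshold:
            sigma = 0.0  # hypothetical fog removal: drop low-density samples
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this segment
        pixel += transmittance * alpha * color
        transmittance *= 1.0 - alpha
    return pixel


def rms_contrast(pixels):
    """Root-mean-square contrast of a list of intensities (assumed contrast measure)."""
    mean = sum(pixels) / len(pixels)
    return math.sqrt(sum((p - mean) ** 2 for p in pixels) / len(pixels))


# Toy scene: four thin fog samples (low density, gray) in front of one dense surface.
FOG_DENSITY, FOG_COLOR, STEP = 0.5, 0.7, 0.5
SURFACE_DENSITY = 50.0
surface_colors = [0.1, 0.9]  # one dark and one bright object, one ray each


def render_scene(threshold):
    pixels = []
    for surface_color in surface_colors:
        densities = [FOG_DENSITY] * 4 + [SURFACE_DENSITY]
        colors = [FOG_COLOR] * 4 + [surface_color]
        deltas = [STEP] * 5
        pixels.append(render_ray(densities, colors, deltas, threshold))
    return pixels


foggy = render_scene(threshold=0.0)  # fog included
clear = render_scene(threshold=1.0)  # hand-picked threshold between fog and surface density

print("foggy contrast:", rms_contrast(foggy))
print("clear contrast:", rms_contrast(clear))
```

In this toy setup the fog pulls both pixels toward gray, so suppressing the low-density samples restores the surface colors and raises the contrast. A real scene would need the threshold estimated from the data rather than chosen by hand.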
