Utku Gunay Acer

Enabling Cross-Camera Collaboration for Video Analytics on Distributed Smart Cameras

Jan 27, 2024

Abstract: Overlapping cameras offer exciting opportunities to view a scene from different angles, allowing for more advanced, comprehensive and robust analysis. However, existing visual analytics systems for multi-camera streams are mostly limited to (i) per-camera processing and aggregation and (ii) workload-agnostic centralized processing architectures. In this paper, we present Argus, a distributed video analytics system with cross-camera collaboration on smart cameras. We identify multi-camera, multi-target tracking as the primary task of multi-camera video analytics and develop a novel technique that avoids redundant, processing-heavy identification tasks by leveraging object-wise spatio-temporal association in the overlapping fields of view across multiple cameras. We further develop a set of techniques to perform these operations across distributed cameras without cloud support at low latency by (i) dynamically ordering the camera and object inspection sequence and (ii) flexibly distributing the workload across smart cameras, taking into account network transmission and heterogeneous computational capacities. Evaluation on three real-world overlapping camera datasets with two Nvidia Jetson devices shows that Argus reduces the number of object identifications and end-to-end latency by up to 7.13x and 2.19x, respectively (4.86x and 1.60x compared to the state of the art), while achieving comparable tracking quality.

SensiX++: Bringing MLOPs and Multi-tenant Model Serving to Sensory Edge Devices

Sep 08, 2021

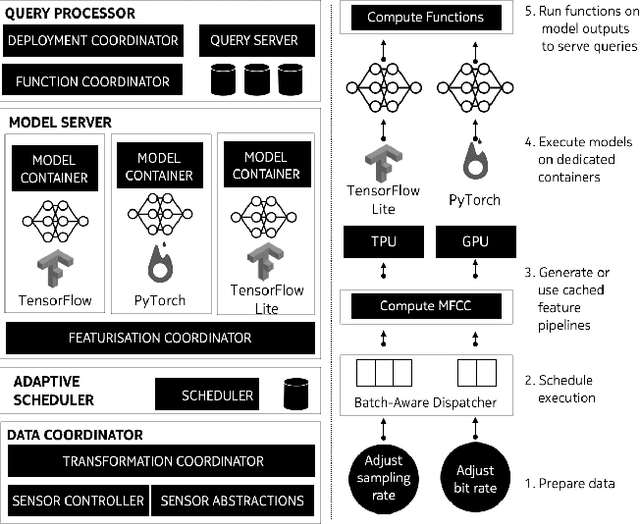

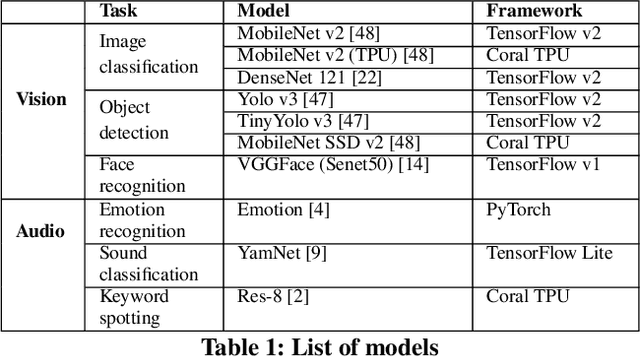

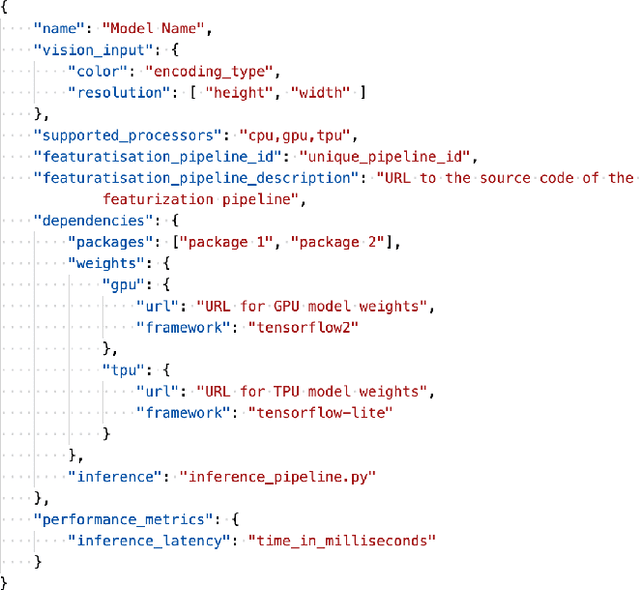

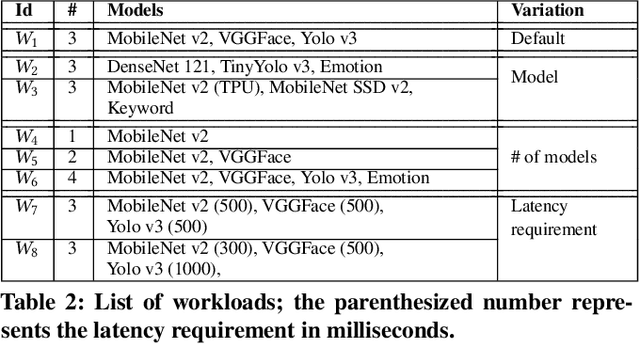

Abstract: We present SensiX++, a multi-tenant runtime for adaptive model execution with integrated MLOps on edge devices, e.g., a camera, a microphone, or IoT sensors. SensiX++ operates on two fundamental principles: highly modular componentisation to externalise data operations with clear abstractions, and document-centric manifestation for system-wide orchestration. First, a data coordinator manages the lifecycle of sensors and serves models with correct data through automated transformations. Next, a resource-aware model server executes multiple models in isolation through model abstraction, pipeline automation and feature sharing. An adaptive scheduler then orchestrates the best-effort executions of multiple models across heterogeneous accelerators, balancing latency and throughput. Finally, microservices with REST APIs serve synthesised model predictions, system statistics, and continuous deployment. Collectively, these components enable SensiX++ to serve multiple models efficiently with fine-grained control on edge devices while minimising data operation redundancy, managing data and device heterogeneity, reducing resource contention and removing manual MLOps. We benchmark SensiX++ with ten different vision and acoustics models across various multi-tenant configurations on different edge accelerators (Jetson AGX and Coral TPU) designed for sensory devices. We report on the overall throughput and quantified benefits of various automation components of SensiX++ and demonstrate its efficacy in significantly reducing operational complexity and lowering the effort to deploy, upgrade, reconfigure and serve embedded models on edge devices.

SensiX: A Platform for Collaborative Machine Learning on the Edge

Dec 04, 2020

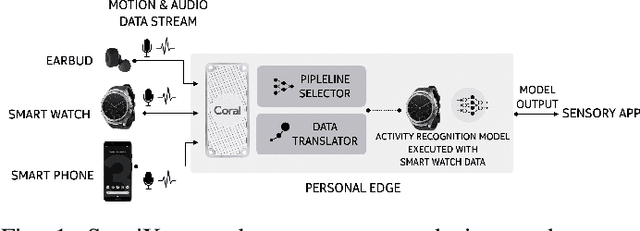

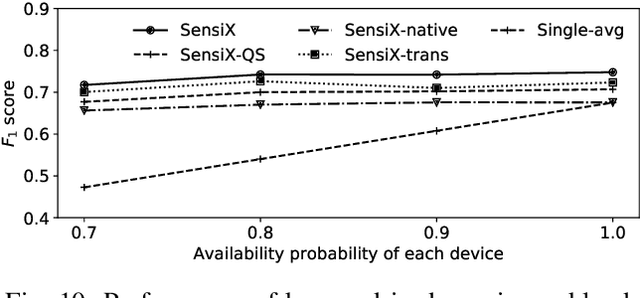

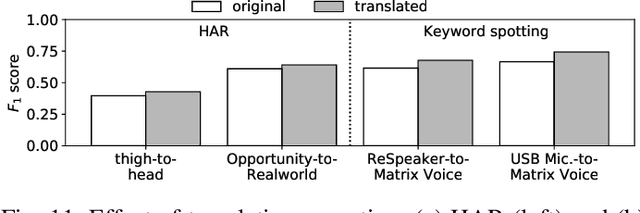

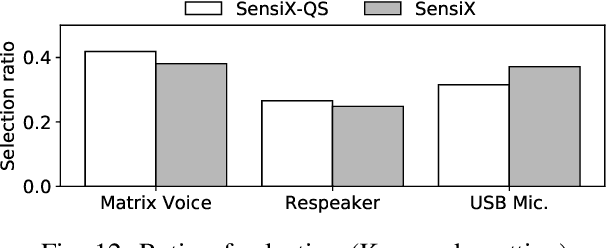

Abstract: The emergence of multiple sensory devices on or near a human body is uncovering new dynamics of extreme edge computing. In this, a powerful and resource-rich edge device such as a smartphone or a Wi-Fi gateway is transformed into a personal edge, collaborating with multiple devices to offer remarkable sensory applications, while harnessing the power of locality, availability, and proximity. Naturally, this transformation pushes us to rethink how to construct accurate, robust, and efficient sensory systems at the personal edge. For instance, how do we build a reliable activity tracker with multiple on-body IMU-equipped devices? While the accuracy of sensing models is improving, their runtime performance still suffers, especially in these emerging multi-device, personal edge environments. Two prime caveats that impact their performance are device and data variabilities, contributed by several runtime factors, including device availability, data quality, and device placement. To this end, we present SensiX, a personal edge platform that sits between sensor data and sensing models, and ensures best-effort inference under any condition while coping with device and data variabilities without demanding model engineering. SensiX externalises model execution away from applications, and comprises two essential functions: a translation operator for principled mapping of device-to-device data and a quality-aware selection operator to systematically choose the right execution path as a function of model accuracy. We report the design and implementation of SensiX and demonstrate its efficacy in developing motion and audio-based multi-device sensing systems. Our evaluation shows that SensiX offers a 7-13% increase in overall accuracy and up to 30% increase across different environment dynamics at the expense of 3 mW power overhead.
