Thomas M. H. Hope

Predicting recovery following stroke: deep learning, multimodal data and feature selection using explainable AI

Oct 29, 2023

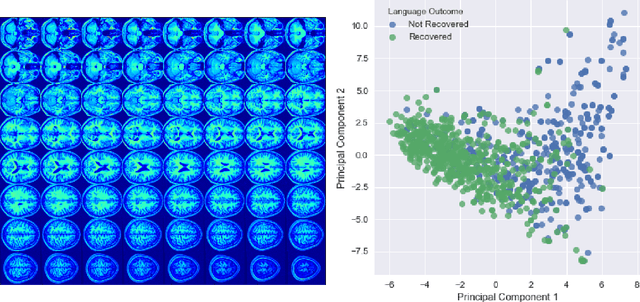

Abstract: Machine learning offers great potential for automated prediction of post-stroke symptoms and their response to rehabilitation. Major challenges for this endeavour include the very high dimensionality of neuroimaging data, the relatively small size of the datasets available for learning, and how to effectively combine neuroimaging and tabular data (e.g. demographic information and clinical characteristics). This paper evaluates several solutions based on two strategies. The first is to use 2D images that summarise MRI scans. The second is to select key features that improve classification accuracy. Additionally, we introduce the novel approach of training a convolutional neural network (CNN) on images that combine regions-of-interest extracted from MRIs with symbolic representations of tabular data. We evaluate a series of CNN architectures (both 2D and 3D) that are trained on different representations of MRI and tabular data, to predict whether a composite measure of post-stroke spoken picture description ability is in the aphasic or non-aphasic range. MRI and tabular data were acquired from 758 English-speaking stroke survivors who participated in the PLORAS study. The classification accuracy for a baseline logistic regression was 0.678 for lesion size alone, rising to 0.757 and 0.813 when initial symptom severity and recovery time were successively added. The highest classification accuracy, 0.854, was observed when 8 regions-of-interest were extracted from each MRI scan and combined with lesion size, initial severity and recovery time in a 2D Residual Neural Network. Our findings demonstrate how imaging and tabular data can be combined for high post-stroke classification accuracy, even when the dataset is small in machine learning terms. We conclude by proposing how the current models could be improved to achieve even higher levels of accuracy using images from hospital scanners.

Predicting Language Recovery after Stroke with Convolutional Networks on Stitched MRI

Nov 26, 2018

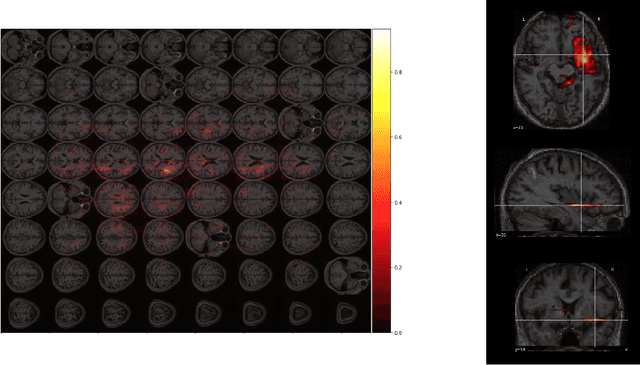

Abstract: One third of stroke survivors have language difficulties. Emerging evidence suggests that their likelihood of recovery depends mainly on the damage to language centers. Thus, previous research on predicting language recovery post-stroke has focused on identifying damaged regions of the brain. In this paper, we introduce a novel method that uses only stitched 2-dimensional cross-sections of raw MRI scans in a deep convolutional neural network setup to predict language recovery post-stroke. Our results show: a) the proposed model, which uses only MRI scans, has comparable performance to models that depend on lesion-specific information; b) the features learned by our model are complementary to the lesion-specific information, and the combination of both appears to outperform previously reported results in similar settings. We further analyse the CNN model to understand which regions of the brain are responsible for these predictions, using gradient-based saliency maps. Our findings are in line with previous lesion studies.
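
The abstract does not say how the cross-sections are stitched. As an illustration only, the sketch below tiles equally sized 2D slices row by row into one larger image, which is one plausible reading of "stitched". The function name `stitch_slices`, the `columns` parameter, and the zero-padding of unused grid cells are all assumptions for this sketch, not details from the paper. Plain Python lists keep it dependency-free; a real pipeline would more likely use an array library.

```python
from typing import List, Sequence

Slice2D = List[List[float]]


def stitch_slices(slices: Sequence[Slice2D], columns: int) -> Slice2D:
    """Tile equally sized 2D slices into one grid image, in row-major order.

    Hypothetical helper: grid cells left over in the last row are zero-filled.
    """
    if not slices:
        raise ValueError("need at least one slice")
    if columns < 1:
        raise ValueError("columns must be at least 1")

    height = len(slices[0])
    width = len(slices[0][0])
    for s in slices:
        if len(s) != height or any(len(row) != width for row in s):
            raise ValueError("all slices must share one shape")

    blank_row = [0.0] * width
    grid_rows = -(-len(slices) // columns)  # ceiling division

    stitched: Slice2D = []
    for g in range(grid_rows):
        # Slices that sit side by side in this row of the grid.
        tiles = slices[g * columns:(g + 1) * columns]
        for r in range(height):
            row: List[float] = []
            for c in range(columns):
                # Copy pixel row r of each tile, or zeros if the cell is empty.
                row.extend(tiles[c][r] if c < len(tiles) else blank_row)
            stitched.append(row)
    return stitched
```

For example, three 2×2 slices stitched with `columns=2` give a single 4×4 image whose bottom-right quadrant is zero padding. An image of this kind can then be passed to an ordinary 2D CNN.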
