Syed Saiq Hussain

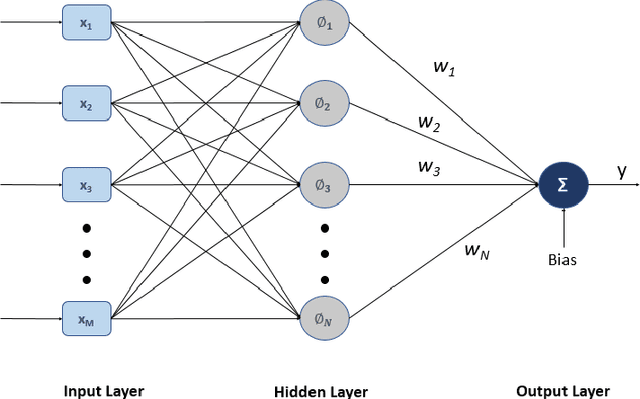

q-RBFNN:A Quantum Calculus-based RBF Neural Network

Jun 02, 2021

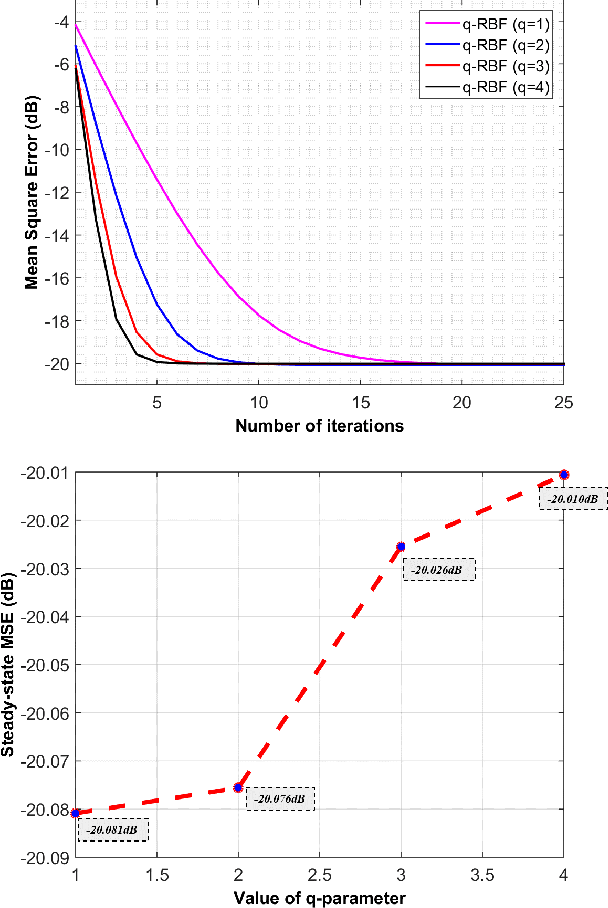

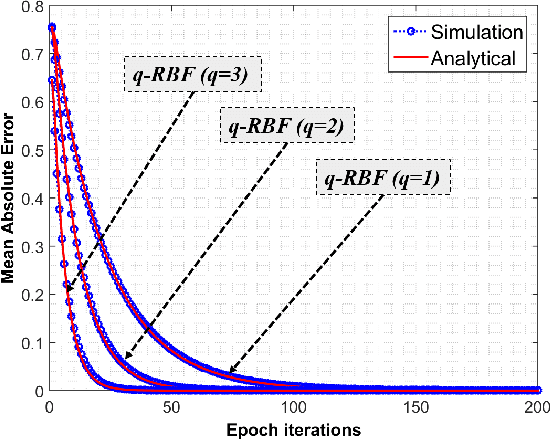

Abstract:In this research a novel stochastic gradient descent based learning approach for the radial basis function neural networks (RBFNN) is proposed. The proposed method is based on the q-gradient which is also known as Jackson derivative. In contrast to the conventional gradient, which finds the tangent, the q-gradient finds the secant of the function and takes larger steps towards the optimal solution. The proposed $q$-RBFNN is analyzed for its convergence performance in the context of least square algorithm. In particular, a closed form expression of the Wiener solution is obtained, and stability bounds of the learning rate (step-size) is derived. The analytical results are validated through computer simulation. Additionally, we propose an adaptive technique for the time-varying $q$-parameter to improve convergence speed with no trade-offs in the steady state performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge