Susanna Loeb

Educator Attention: How computational tools can systematically identify the distribution of a key resource for students

Feb 27, 2025

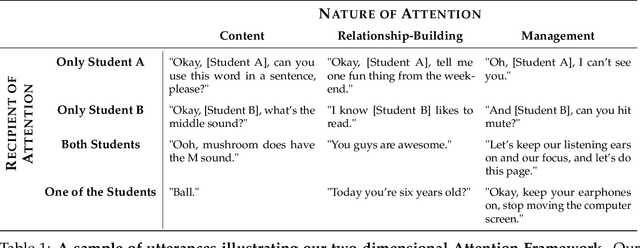

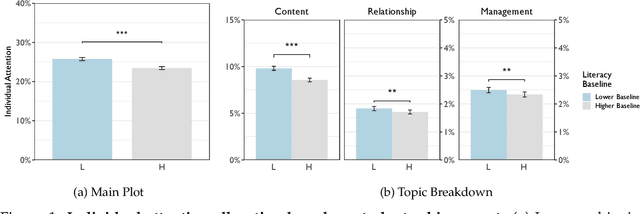

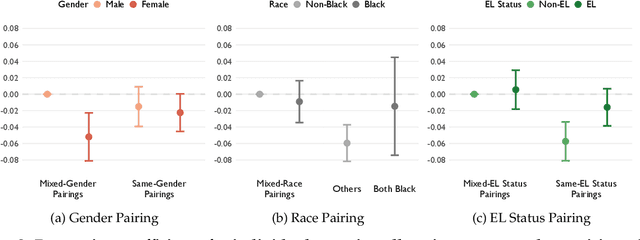

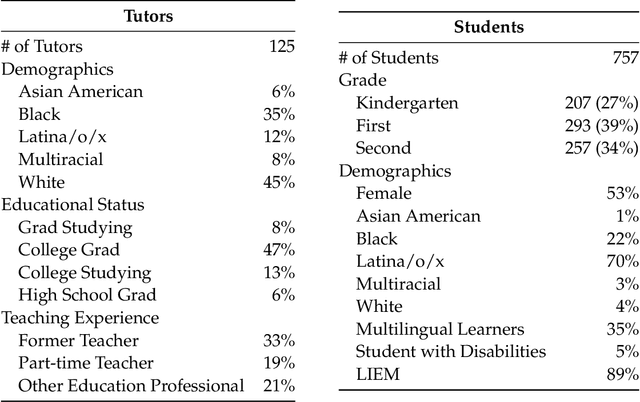

Abstract: Educator attention is critical for student success, yet how educators distribute their attention across students remains poorly understood due to data and methodological constraints. This study presents the first large-scale computational analysis of educator attention patterns, leveraging over 1 million educator utterances from virtual group tutoring sessions linked to detailed student demographic and academic achievement data. Using natural language processing techniques, we systematically examine the recipient and nature of educator attention. Our findings reveal that educators often provide more attention to lower-achieving students. However, disparities emerge across demographic lines, particularly by gender. Girls tend to receive less attention when paired with boys, even when they are the lower-achieving student in the group. Lower-achieving female students in mixed-gender pairs receive significantly less attention than their higher-achieving male peers, while lower-achieving male students receive significantly and substantially more attention than their higher-achieving female peers. We also find some differences by race and English learner (EL) status, with low-achieving Black students receiving additional attention only when paired with another Black student but not when paired with a non-Black peer. In contrast, higher-achieving EL students receive disproportionately more attention than their lower-achieving EL peers. This work highlights how large-scale interaction data and computational methods can uncover subtle but meaningful disparities in teaching practices, providing empirical insights to inform more equitable and effective educational strategies.

Tutor CoPilot: A Human-AI Approach for Scaling Real-Time Expertise

Oct 03, 2024

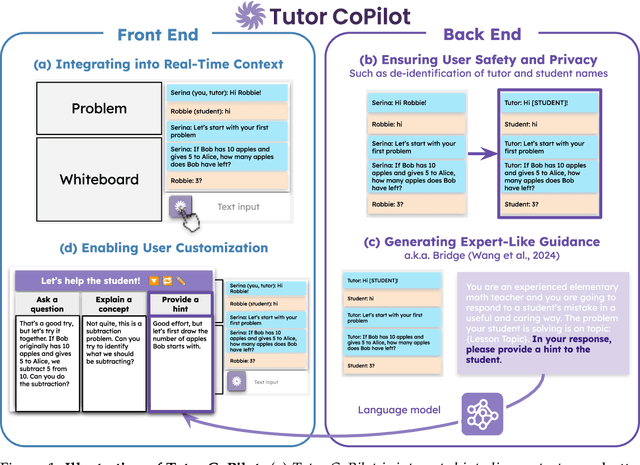

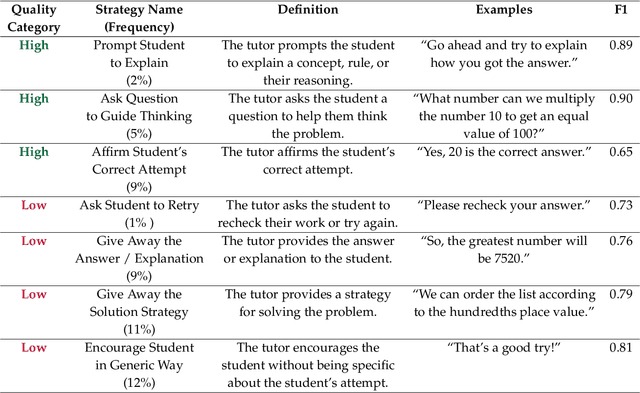

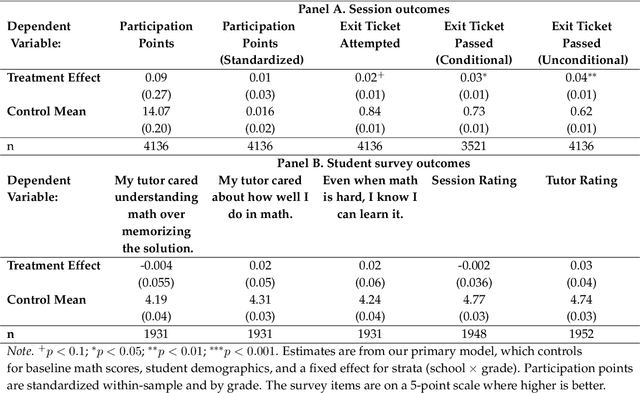

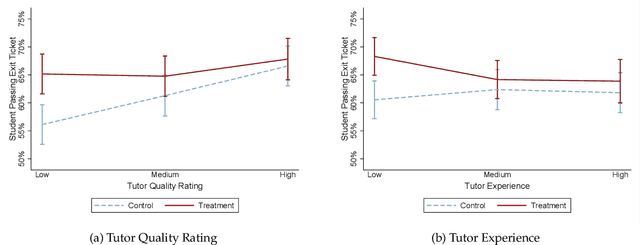

Abstract: Generative AI, particularly Language Models (LMs), has the potential to transform real-world domains with societal impact, particularly where access to experts is limited. For example, in education, training novice educators with expert guidance is important for effectiveness but expensive, creating significant barriers to improving education quality at scale. This challenge disproportionately harms students from under-served communities, who stand to gain the most from high-quality education. We introduce Tutor CoPilot, a novel Human-AI approach that leverages a model of expert thinking to provide expert-like guidance to tutors as they tutor. This study is the first randomized controlled trial of a Human-AI system in live tutoring, involving 900 tutors and 1,800 K-12 students from historically under-served communities. Following a preregistered analysis plan, we find that students working with tutors who have access to Tutor CoPilot are 4 percentage points (p.p.) more likely to master topics (p<0.01). Notably, students of lower-rated tutors experienced the greatest benefit, improving mastery by 9 p.p. We find that Tutor CoPilot costs only $20 per tutor annually. We analyze 550,000+ messages using classifiers to identify pedagogical strategies, and find that tutors with access to Tutor CoPilot are more likely to use high-quality strategies to foster student understanding (e.g., asking guiding questions) and less likely to give away the answer to the student. Tutor interviews highlight how Tutor CoPilot's guidance helps tutors respond to student needs, though they flag issues in Tutor CoPilot, such as generating suggestions that are not grade-level appropriate. Altogether, our study of Tutor CoPilot demonstrates how Human-AI systems can scale expertise in real-world domains, bridge gaps in skills, and create a future where high-quality education is accessible to all students.

Step-by-Step Remediation of Students' Mathematical Mistakes

Oct 16, 2023

Abstract: Scaling high-quality tutoring is a major challenge in education. Because of the growing demand, many platforms employ novice tutors who, unlike professional educators, struggle to effectively address student mistakes and thus fail to seize prime learning opportunities for students. In this paper, we explore the potential for large language models (LLMs) to assist math tutors in remediating student mistakes. We present ReMath, a benchmark co-developed with experienced math teachers that deconstructs their thought process for remediation. The benchmark consists of three step-by-step tasks: (1) infer the type of student error, (2) determine the strategy to address the error, and (3) generate a response that incorporates that information. We evaluate the performance of state-of-the-art instruct-tuned and dialog models on ReMath. Our findings suggest that although models consistently improve upon original tutor responses, we cannot rely on models alone to remediate mistakes. Providing models with the error type (e.g., the student is guessing) and strategy (e.g., simplify the problem) leads to a 75% improvement in the response quality over models without that information. Nonetheless, despite the improvement, the quality of the best model's responses still falls short of experienced math teachers. Our work sheds light on the potential and limitations of using current LLMs to provide high-quality learning experiences for both tutors and students at scale. Our work is open-sourced at https://github.com/rosewang2008/remath.
