Stephen Sloan

Protecting Anonymous Speech: A Generative Adversarial Network Methodology for Removing Stylistic Indicators in Text

Oct 18, 2021

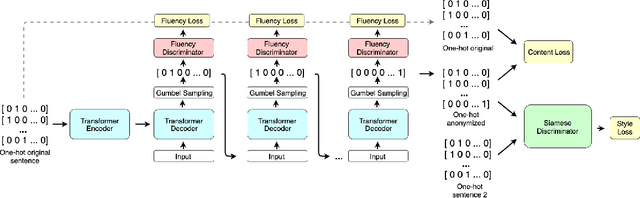

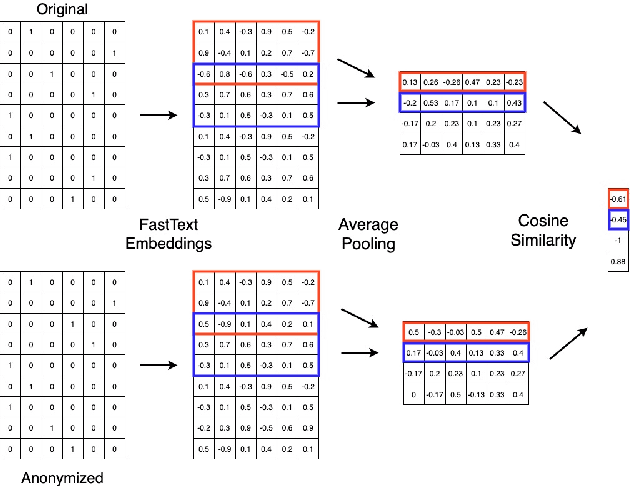

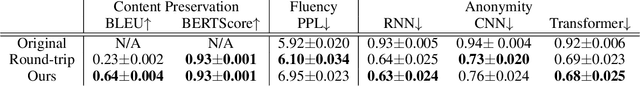

Abstract: With Internet users constantly leaving a trail of text, whether through blogs, emails, or social media posts, the ability to write and protest anonymously is being eroded because artificial intelligence, when given a sample of previous work, can match text with its author out of hundreds of possible candidates. Existing approaches to authorship anonymization, also known as authorship obfuscation, often focus on protecting binary demographic attributes rather than identity as a whole. Even those that do focus on obfuscating identity require manual feedback, lose the coherence of the original sentence, or only perform well given a limited subset of authors. In this paper, we develop a new approach to authorship anonymization by constructing a generative adversarial network that protects identity and optimizes for three different losses corresponding to anonymity, fluency, and content preservation. Our fully automatic method achieves results comparable to other methods in terms of content preservation and fluency, but greatly outperforms baselines with regard to anonymization. Moreover, our approach generalizes well to an open-set context and anonymizes sentences from authors it has not encountered before.

Covariance-Robust Dynamic Watermarking

Mar 31, 2020

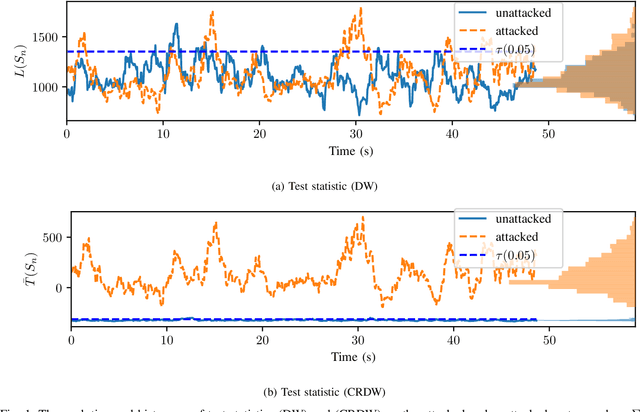

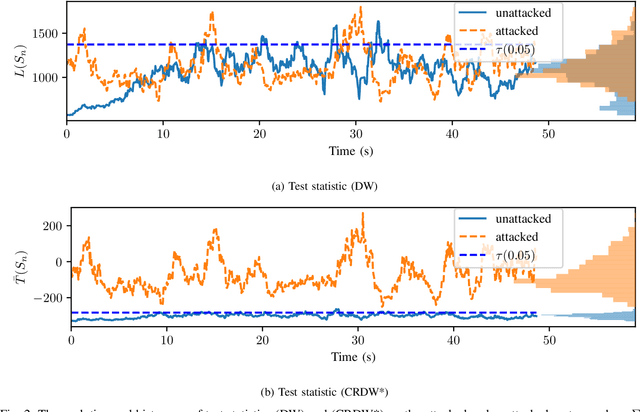

Abstract: Attack detection and mitigation strategies for cyberphysical systems (CPS) are an active area of research, and researchers have developed a variety of attack-detection tools such as dynamic watermarking. However, such methods often make assumptions that are difficult to guarantee, such as exact knowledge of the distribution of measurement noise. Here, we develop a new dynamic watermarking method that we call covariance-robust dynamic watermarking, which is able to handle uncertainties in the covariance of measurement noise. Specifically, we consider two cases: in the first, this covariance is fixed but unknown, and in the second, it is slowly varying. For our tests, we only require knowledge of a set within which the covariance lies. Furthermore, we connect this problem to that of algorithmic fairness and the nascent field of fair hypothesis testing, and we show that our tests satisfy some notions of fairness. Finally, we exhibit the efficacy of our tests on empirical examples chosen to reflect values observed in a standard simulation model of autonomous vehicles.
