Stefan Streif

Benchmarking Reinforcement Learning via Stochastic Converse Optimality: Generating Systems with Known Optimal Policies

Mar 18, 2026

Abstract: The objective comparison of Reinforcement Learning (RL) algorithms is notoriously complex, as the outcomes and performance benchmarks of different RL approaches are critically sensitive to environment design, reward structures, and the stochasticity inherent in both algorithmic learning and environment dynamics. To manage this complexity, we introduce a rigorous benchmarking framework by extending converse optimality to discrete-time, control-affine, nonlinear systems with noise. Our framework provides necessary and sufficient conditions under which a prescribed value function and policy are optimal for the constructed systems, enabling the systematic generation of benchmark families via homotopy variations and randomized parameters. We validate the framework by automatically constructing diverse environments, demonstrating its capacity for a controlled and comprehensive evaluation across algorithms. By assessing standard methods against a ground-truth optimum, our work delivers a reproducible foundation for precise and rigorous RL benchmarking.

A Tutorial to Multirate Extended Kalman Filter Design for Monitoring of Agricultural Anaerobic Digestion Plants

Dec 23, 2025

Abstract: In many applications of biotechnology, measurements are available at different sampling rates, e.g., due to online sensors and offline lab analysis. Offline measurements typically involve time delays that may be unknown a priori due to the underlying laboratory procedures. This multirate (MR) setting poses a challenge to Kalman filtering, where measurement data is conventionally assumed to be available on an equidistant time grid and without delays. The present study derives the MR version of an extended Kalman filter (EKF) based on sample state augmentation, and applies it to the anaerobic digestion (AD) process in a simulated agricultural setting. The performance of the MR-EKF is investigated for various scenarios, i.e., varying delay lengths, measurement noise levels, plant-model mismatch (PMM), and initial state error. With adequate tuning, the MR-EKF was shown to reliably estimate the process state, to appropriately fuse delayed offline measurements, and to smooth noisy online measurements. Because of the sample state augmentation approach, the delay length of offline measurements does not critically impair state estimation performance, provided observability is not lost during the delays. Poor state initialization and PMM affect convergence more than measurement noise levels. Further, selecting an appropriate tuning was found to be critically important for successful application of the MR-EKF, and a systematic approach for doing so is presented. This study provides implementation guidance for practitioners aiming to apply state estimation to multirate systems. It thereby contributes to developing demand-driven operation of biogas plants, which may aid in stabilizing a renewable electricity grid.

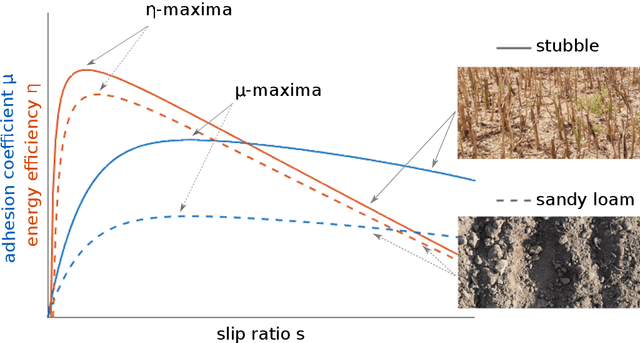

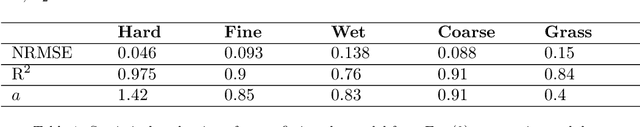

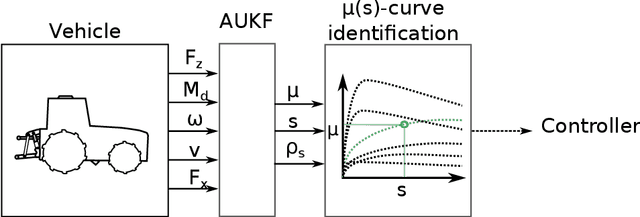

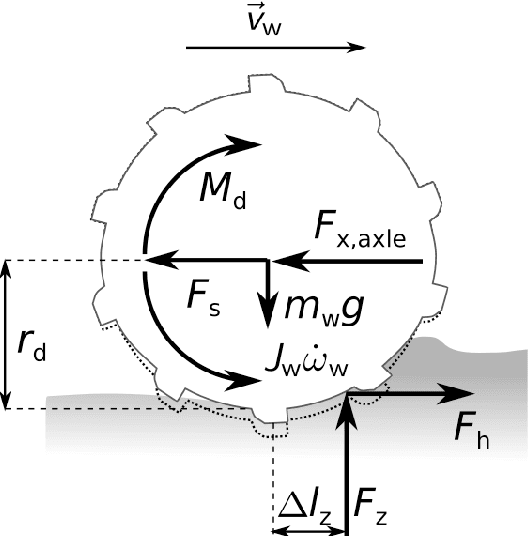

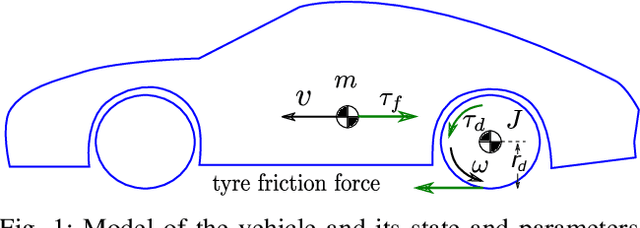

Experimental verification of an online traction parameter identification method

Mar 20, 2023

Abstract: Traction parameters, which characterize the ground-wheel contact dynamics, are the central factor in the energy efficiency of vehicles. To optimize fuel consumption, reduce tire wear, increase productivity, etc., knowledge of the current traction parameters is indispensable. Unfortunately, these parameters are difficult to measure and require expensive force and torque sensors. An alternative is to determine them via system identification. In this work, we validate such a method in field experiments with a mobile robot. The method is based on an adaptive Kalman filter. We show how it estimates the traction parameters online, during motion on the field, and compare the estimates to values determined via a 6-directional force-torque sensor installed for verification. Adhesion slip ratio curves are recorded and compared to curves from the literature for additional validation of the method. The results can establish a foundation for a number of optimal traction methods.

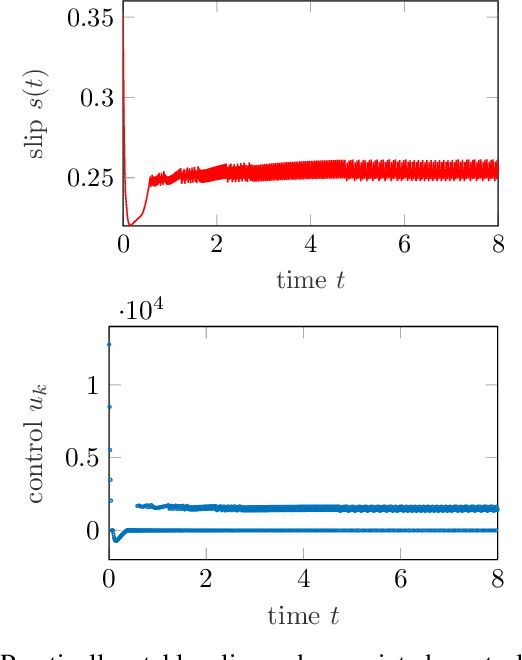

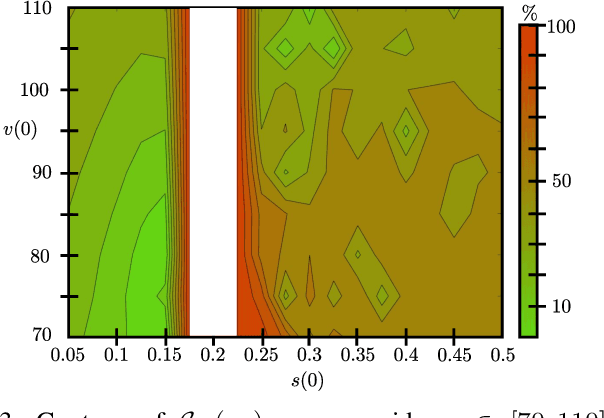

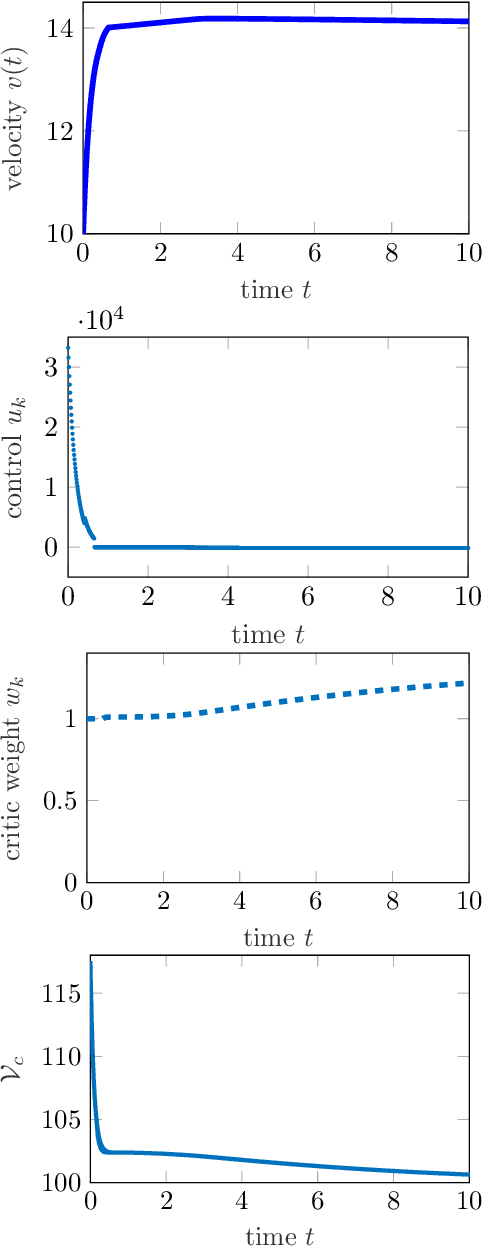

A stabilizing reinforcement learning approach for sampled systems with partially unknown models

Aug 31, 2022

Abstract: Reinforcement learning is commonly associated with training of reward-maximizing (or cost-minimizing) agents, in other words, controllers. It can be applied in a model-free or model-based fashion, using a priori or online collected system data to train the parametric architectures involved. In general, online reinforcement learning does not guarantee closed-loop stability unless special measures are taken, for instance, through learning constraints or tailored training rules. Particularly promising are hybrids of reinforcement learning with "classical" control approaches. In this work, we suggest a method to guarantee practical stability of the system-controller closed loop in a purely online learning setting, i.e., without offline training. Moreover, we assume only partial knowledge of the system model. To achieve the claimed results, we employ techniques of classical adaptive control. The implementation of the overall control scheme is provided explicitly in a digital, sampled setting. That is, the controller receives the state of the system and computes the control action at discrete, specifically equidistant, moments in time. The method is tested in adaptive traction control and cruise control, where it proved to significantly reduce the cost.

On the Turnpike to Design of Deep Neural Nets: Explicit Depth Bounds

Jan 08, 2021

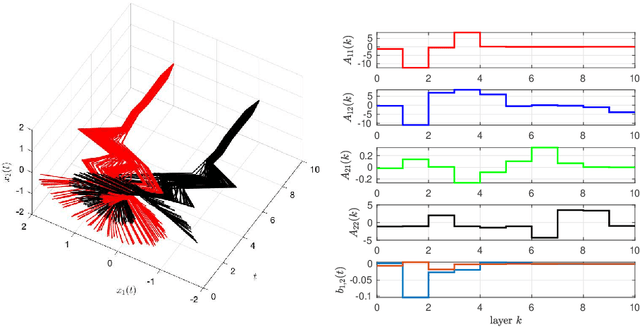

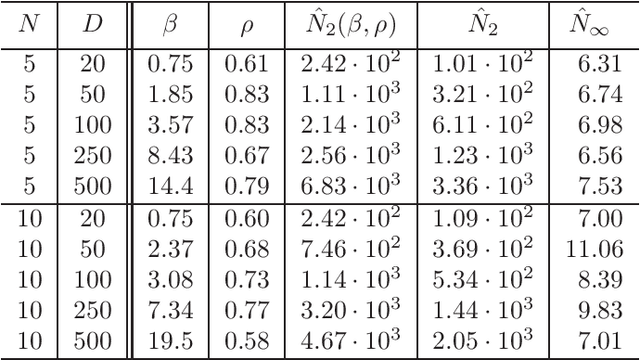

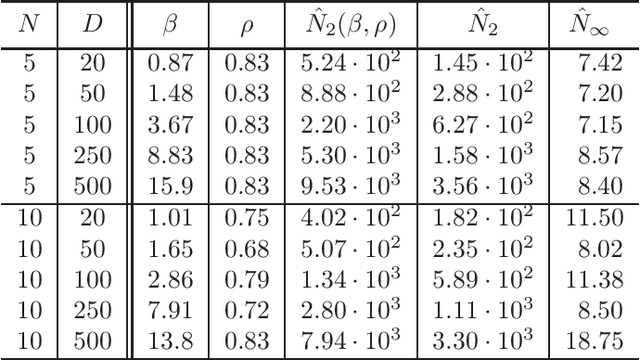

Abstract: It is well known that the training of Deep Neural Networks (DNN) can be formalized in the language of optimal control. In this context, this paper leverages classical turnpike properties of optimal control problems to attempt a quantifiable answer to the question of how many layers should be considered in a DNN. The underlying assumption is that the number of neurons per layer -- i.e., the width of the DNN -- is kept constant. Pursuing a different route than the classical analysis of approximation properties of sigmoidal functions, we prove explicit bounds on the required depths of DNNs based on asymptotic reachability assumptions and a dissipativity-inducing choice of the regularization terms in the training problem. Numerical results obtained for the two-spiral task data set for classification indicate that the proposed estimates can provide non-conservative depth bounds.
