Stefan Ruschke

Reliable Evaluation of MRI Motion Correction: Dataset and Insights

Jun 06, 2025Abstract:Correcting motion artifacts in MRI is important, as they can hinder accurate diagnosis. However, evaluating deep learning-based and classical motion correction methods remains fundamentally difficult due to the lack of accessible ground-truth target data. To address this challenge, we study three evaluation approaches: real-world evaluation based on reference scans, simulated motion, and reference-free evaluation, each with its merits and shortcomings. To enable evaluation with real-world motion artifacts, we release PMoC3D, a dataset consisting of unprocessed Paired Motion-Corrupted 3D brain MRI data. To advance evaluation quality, we introduce MoMRISim, a feature-space metric trained for evaluating motion reconstructions. We assess each evaluation approach and find real-world evaluation together with MoMRISim, while not perfect, to be most reliable. Evaluation based on simulated motion systematically exaggerates algorithm performance, and reference-free evaluation overrates oversmoothed deep learning outputs.

Resolution-Robust 3D MRI Reconstruction with 2D Diffusion Priors: Diverse-Resolution Training Outperforms Interpolation

Dec 24, 2024

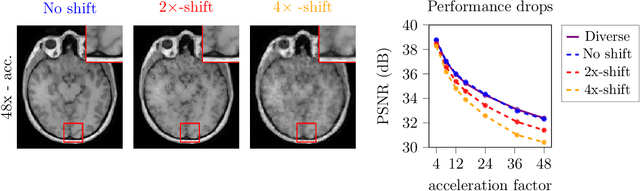

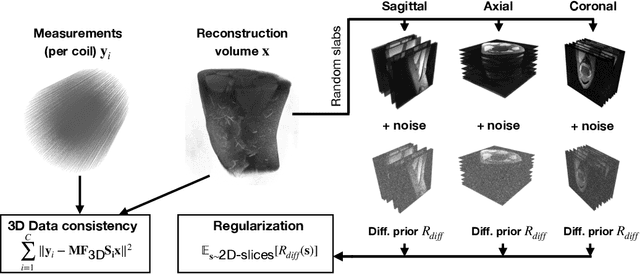

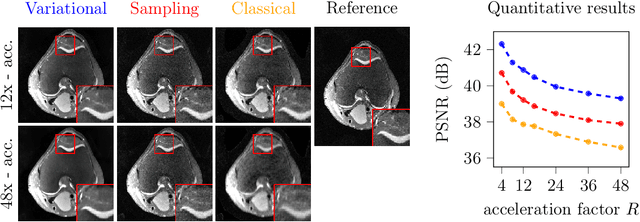

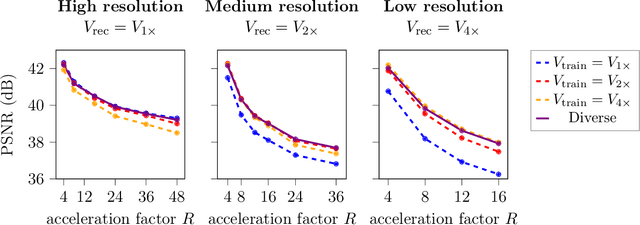

Abstract:Deep learning-based 3D imaging, in particular magnetic resonance imaging (MRI), is challenging because of limited availability of 3D training data. Therefore, 2D diffusion models trained on 2D slices are starting to be leveraged for 3D MRI reconstruction. However, as we show in this paper, existing methods pertain to a fixed voxel size, and performance degrades when the voxel size is varied, as it is often the case in clinical practice. In this paper, we propose and study several approaches for resolution-robust 3D MRI reconstruction with 2D diffusion priors. As a result of this investigation, we obtain a simple resolution-robust variational 3D reconstruction approach based on diffusion-guided regularization of randomly sampled 2D slices. This method provides competitive reconstruction quality compared to posterior sampling baselines. Towards resolving the sensitivity to resolution-shifts, we investigate state-of-the-art model-based approaches including Gaussian splatting, neural representations, and infinite-dimensional diffusion models, as well as a simple data-centric approach of training the diffusion model on several resolutions. Our experiments demonstrate that the model-based approaches fail to close the performance gap in 3D MRI. In contrast, the data-centric approach of training the diffusion model on various resolutions effectively provides a resolution-robust method without compromising accuracy.

MotionTTT: 2D Test-Time-Training Motion Estimation for 3D Motion Corrected MRI

Sep 14, 2024

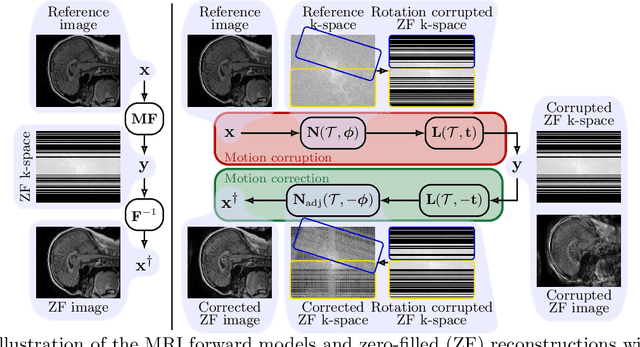

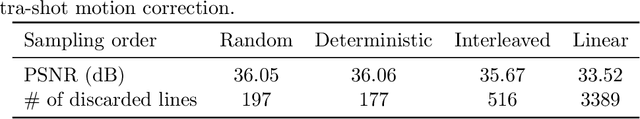

Abstract:A major challenge of the long measurement times in magnetic resonance imaging (MRI), an important medical imaging technology, is that patients may move during data acquisition. This leads to severe motion artifacts in the reconstructed images and volumes. In this paper, we propose a deep learning-based test-time-training method for accurate motion estimation. The key idea is that a neural network trained for motion-free reconstruction has a small loss if there is no motion, thus optimizing over motion parameters passed through the reconstruction network enables accurate estimation of motion. The estimated motion parameters enable to correct for the motion and to reconstruct accurate motion-corrected images. Our method uses 2D reconstruction networks to estimate rigid motion in 3D, and constitutes the first deep learning based method for 3D rigid motion estimation towards 3D-motion-corrected MRI. We show that our method can provably reconstruct motion parameters for a simple signal and neural network model. We demonstrate the effectiveness of our method for both retrospectively simulated motion and prospectively collected real motion-corrupted data.

Implicit Neural Networks with Fourier-Feature Inputs for Free-breathing Cardiac MRI Reconstruction

May 11, 2023

Abstract:In this paper, we propose an approach for cardiac magnetic resonance imaging (MRI), which aims to reconstruct a real-time video of a beating heart from continuous highly under-sampled measurements. This task is challenging since the object to be reconstructed (the heart) is continuously changing during signal acquisition. To address this challenge, we represent the beating heart with an implicit neural network and fit the network so that the representation of the heart is consistent with the measurements. The network in the form of a multi-layer perceptron with Fourier-feature inputs acts as an effective signal prior and enables adjusting the regularization strength in both the spatial and temporal dimensions of the signal. We examine the proposed approach for 2D free-breathing cardiac real-time MRI in different operating regimes, i.e., for different image resolutions, slice thicknesses, and acquisition lengths. Our method achieves reconstruction quality on par with or slightly better than state-of-the-art untrained convolutional neural networks and superior image quality compared to a recent method that fits an implicit representation directly to Fourier-domain measurements. However, this comes at a higher computational cost. Our approach does not require any additional patient data or biosensors including electrocardiography, making it potentially applicable in a wide range of clinical scenarios.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge