Sorachi Kato

RAPTR: Radar-based 3D Pose Estimation using Transformer

Nov 11, 2025

Abstract:Radar-based indoor 3D human pose estimation has typically relied on fine-grained 3D keypoint labels, which are costly to obtain, especially in complex indoor settings involving clutter, occlusions, or multiple people. In this paper, we propose RAPTR (RAdar Pose esTimation using tRansformer) under weak supervision, using only 3D BBox and 2D keypoint labels, which are considerably easier and more scalable to collect. RAPTR is characterized by a two-stage pose decoder architecture with a pseudo-3D deformable attention to enhance (pose/joint) queries with multi-view radar features: a pose decoder estimates initial 3D poses with a 3D template loss designed to utilize the 3D BBox labels and mitigate depth ambiguities; and a joint decoder refines the initial poses with 2D keypoint labels and a 3D gravity loss. Evaluated on two indoor radar datasets, RAPTR outperforms existing methods, reducing joint position error by 34.3% on HIBER and 76.9% on MMVR. Our implementation is available at https://github.com/merlresearch/radar-pose-transformer.

Range Image-Based Implicit Neural Compression for LiDAR Point Clouds

Apr 24, 2025

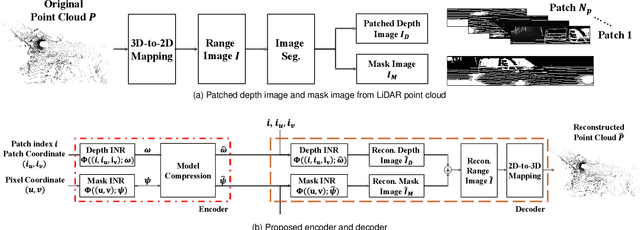

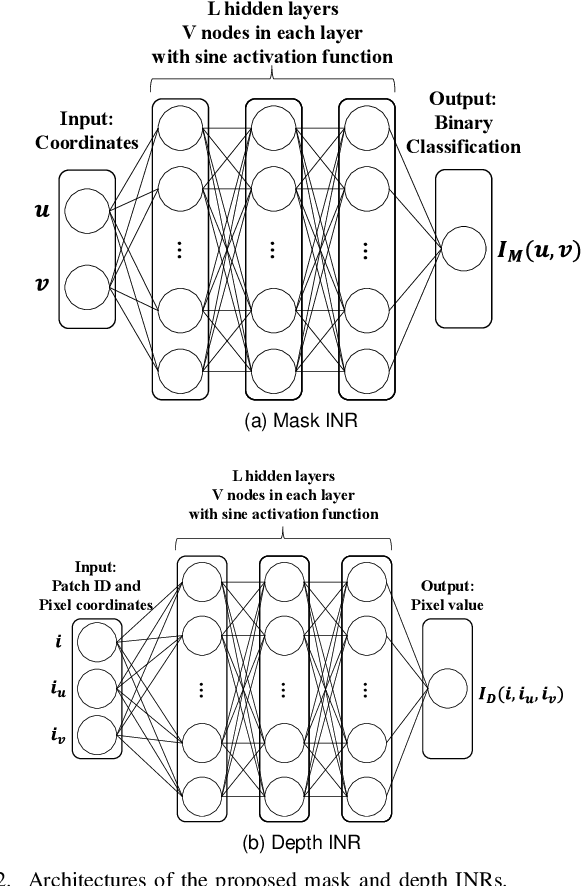

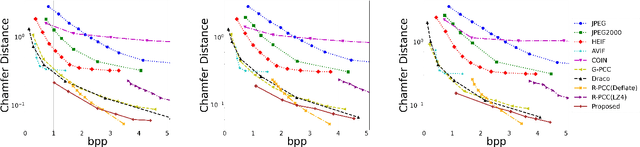

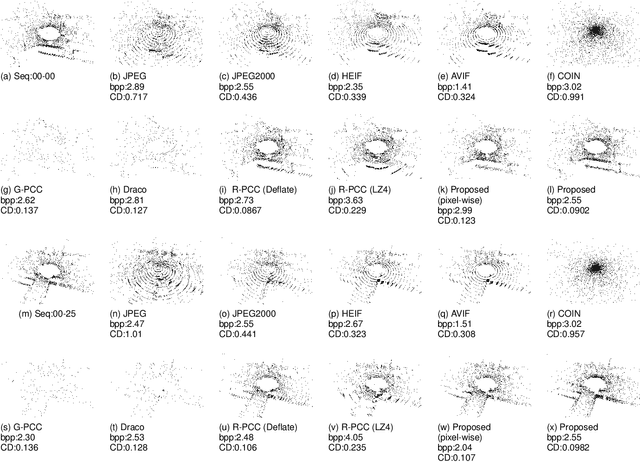

Abstract:This paper presents a novel scheme to efficiently compress Light Detection and Ranging (LiDAR) point clouds, enabling high-precision 3D scene archives, and such archives pave the way for a detailed understanding of the corresponding 3D scenes. We focus on 2D range images (RIs) as a lightweight format for representing 3D LiDAR observations. Although conventional image compression techniques can be adapted to improve compression efficiency for RIs, their practical performance is expected to be limited due to differences in bit precision and the distinct pixel value distribution characteristics between natural images and RIs. We propose a novel implicit neural representation (INR)-based RI compression method that effectively handles floating-point valued pixels. The proposed method divides RIs into depth and mask images and compresses them using patch-wise and pixel-wise INR architectures with model pruning and quantization, respectively. Experiments on the KITTI dataset show that the proposed method outperforms existing image, point cloud, RI, and INR-based compression methods in terms of 3D reconstruction and detection quality at low bitrates and decoding latency.
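As a rough illustration of the range-image representation mentioned in this abstract, the sketch below projects 3D points onto a 2D grid and splits the result into a floating-point depth image and a binary mask image. It is not the paper's implementation: the function name `point_cloud_to_range_image`, the grid size, and the vertical field of view are all assumptions chosen for the example, and the INR compression stage itself is not shown.

```python
import math


def point_cloud_to_range_image(points, height=4, width=8,
                               fov_up_deg=15.0, fov_down_deg=-15.0):
    """Project (x, y, z) points onto a height x width range image.

    Returns (depth, mask). depth holds the floating-point range per pixel;
    mask holds 1 where a return exists and 0 elsewhere.
    All default parameters are illustrative assumptions.
    """
    fov_up = math.radians(fov_up_deg)
    fov_down = math.radians(fov_down_deg)
    fov = fov_up - fov_down

    depth = [[0.0] * width for _ in range(height)]
    mask = [[0] * width for _ in range(height)]

    for x, y, z in points:
        r = math.sqrt(x * x + y * y + z * z)
        if r == 0.0:
            continue  # a point at the sensor origin has no direction
        azimuth = math.atan2(y, x)       # horizontal angle in [-pi, pi]
        elevation = math.asin(z / r)     # vertical angle
        if not (fov_down <= elevation <= fov_up):
            continue  # outside the assumed vertical field of view

        # Map azimuth to a column and elevation to a row.
        col = int(0.5 * (1.0 - azimuth / math.pi) * width) % width
        row = min(height - 1, int((fov_up - elevation) / fov * height))

        # When several points fall in one pixel, keep the nearest return.
        if mask[row][col] == 0 or r < depth[row][col]:
            depth[row][col] = r
            mask[row][col] = 1

    return depth, mask


depth, mask = point_cloud_to_range_image([(10.0, 0.0, 0.0), (4.0, 0.0, 0.0)])
```

Keeping the mask separate from the depth values means pixels without a return do not have to be encoded as a special depth value, which is one plausible reason for treating the two images differently.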

Multi-Band Wi-Fi Neural Dynamic Fusion

Jul 17, 2024

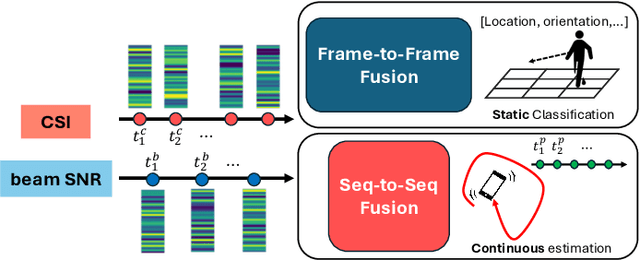

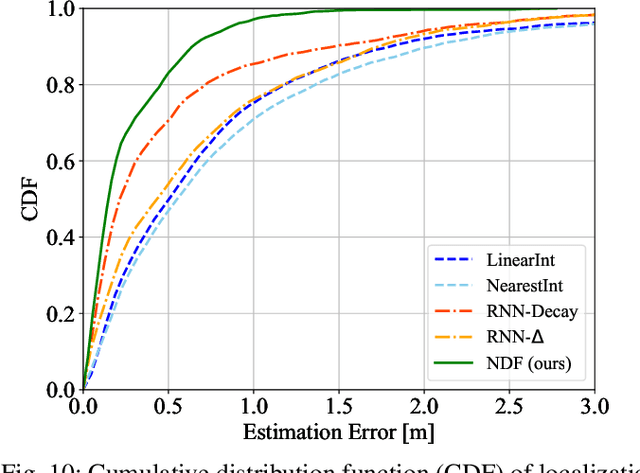

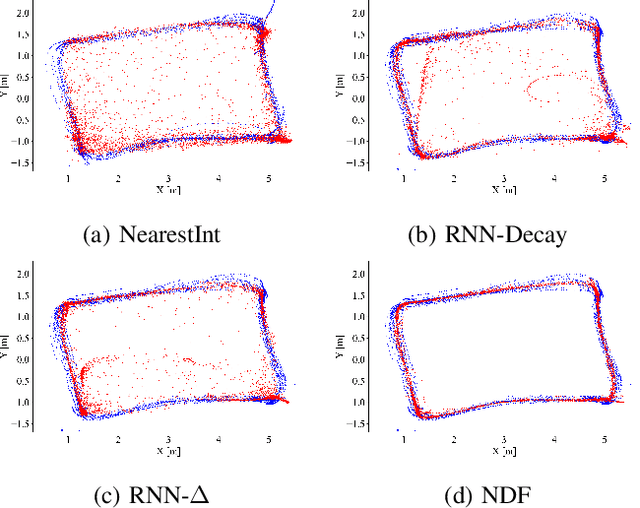

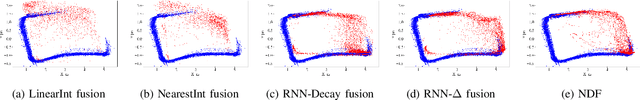

Abstract:Wi-Fi channel measurements across different bands, e.g., sub-7-GHz and 60-GHz bands, are asynchronous due to the uncoordinated nature of distinct standards protocols, e.g., 802.11ac/ax/be and 802.11ad/ay. Multi-band Wi-Fi fusion has been considered before on a frame-to-frame basis for simple classification tasks, which does not require fine-time-scale alignment. In contrast, this paper considers asynchronous sequence-to-sequence fusion between sub-7-GHz channel state information (CSI) and 60-GHz beam signal-to-noise ratios (SNRs) for more challenging tasks such as continuous coordinate estimation. To handle the timing disparity between asynchronous multi-band Wi-Fi channel measurements, this paper proposes a multi-band neural dynamic fusion (NDF) framework. This framework uses separate encoders to embed the multi-band Wi-Fi measurement sequences into separate initial latent conditions. Using continuous-time ordinary differential equation (ODE) modeling, these initial latent conditions are propagated to the respective latent states of the multi-band channel measurements at the same time instances for latent alignment and post-ODE fusion, and at their original time instances for measurement reconstruction. We derive a customized loss function based on the variational evidence lower bound (ELBO) that balances multi-band measurement reconstruction against continuous coordinate estimation. We evaluate the NDF framework using an in-house multi-band Wi-Fi testbed and demonstrate substantial performance improvements over a comprehensive list of single-band and multi-band baseline methods.
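The latent alignment idea in this abstract can be sketched in a few lines: each band starts from its own initial latent condition, both are propagated by an ODE to the same query times, and the aligned states are then fused. The code below is only a toy under strong assumptions. The linear decay dynamics, the forward Euler solver, the concatenation fusion, and the names `propagate` and `align_and_fuse` are stand-ins invented for this example; the framework described above uses learned encoders and dynamics that are not reproduced here.

```python
def propagate(z0, t0, t_query, rate, step=0.01):
    """Integrate dz/dt = -rate * z from t0 to t_query with forward Euler.

    The linear decay is a placeholder for learned latent dynamics.
    """
    z = list(z0)
    n = max(1, int(round(abs(t_query - t0) / step)))
    h = (t_query - t0) / n
    for _ in range(n):
        z = [zi + h * (-rate * zi) for zi in z]
    return z


def align_and_fuse(z0_csi, z0_snr, t0, common_times,
                   rate_csi=0.5, rate_snr=1.0):
    """Propagate both latent conditions to shared times, then concatenate."""
    fused = []
    for t in common_times:
        z_csi = propagate(z0_csi, t0, t, rate_csi)
        z_snr = propagate(z0_snr, t0, t, rate_snr)
        fused.append(z_csi + z_snr)  # list concatenation as a simple fusion
    return fused


# Hypothetical latent conditions for the two bands, queried at shared times.
fused = align_and_fuse([1.0, 2.0], [3.0], t0=0.0, common_times=[0.0, 1.0])
```

Because both latent states are evaluated at the same query times, the fused vectors are time-aligned even if the original measurements of the two bands arrived at different instants.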

MMVR: Millimeter-wave Multi-View Radar Dataset and Benchmark for Indoor Perception

Jun 15, 2024

Abstract:Compared with the extensive list of automotive radar datasets that support autonomous driving, indoor radar datasets are scarce, smaller in scale, limited to low-resolution radar point clouds, and usually collected in an open-space single-room setting. In this paper, we scale up indoor radar data collection using multi-view high-resolution radar heatmaps in a multi-day, multi-room, and multi-subject setting, with an emphasis on the diversity of environments and subjects. Referred to as the millimeter-wave multi-view radar (MMVR) dataset, it consists of 345K multi-view radar frames collected from 25 human subjects over 6 different rooms, 446K annotated bounding boxes/segmentation instances, and 7.59 million annotated keypoints to support three major perception tasks of object detection, pose estimation, and instance segmentation, respectively. For each task, we report performance benchmarks under two protocols: a single subject in an open space and multiple subjects in several cluttered rooms with two data splits: random split and cross-environment split over 395 1-min data segments. We anticipate that MMVR facilitates indoor radar perception development for indoor vehicle (robot/humanoid) navigation, building energy management, and elderly care for better efficiency, user experience, and safety.

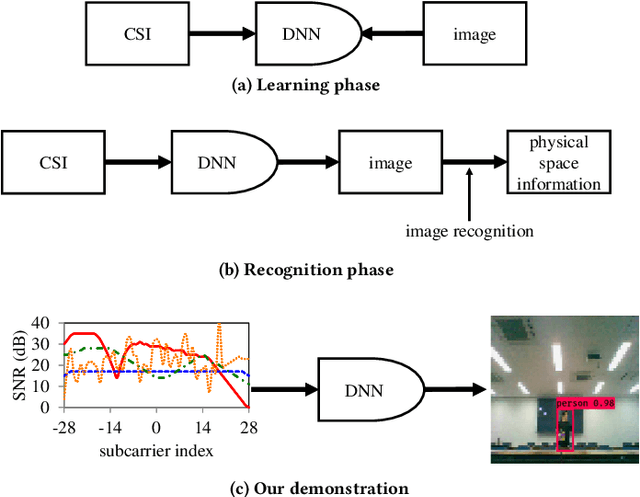

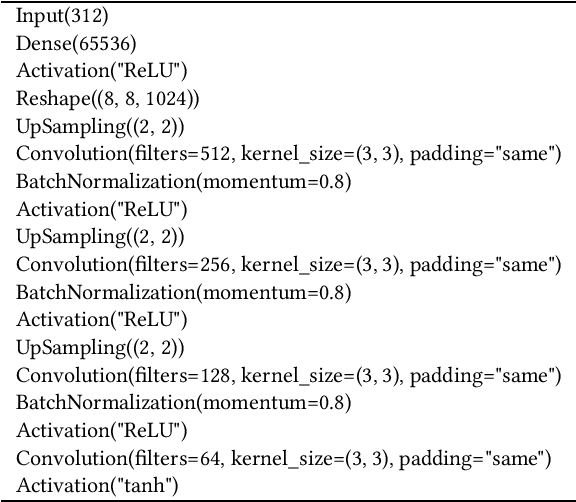

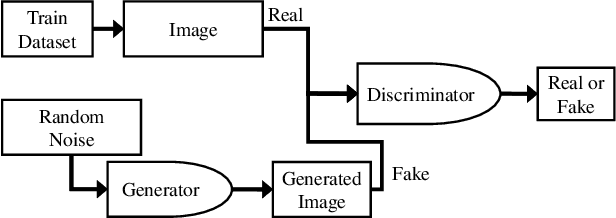

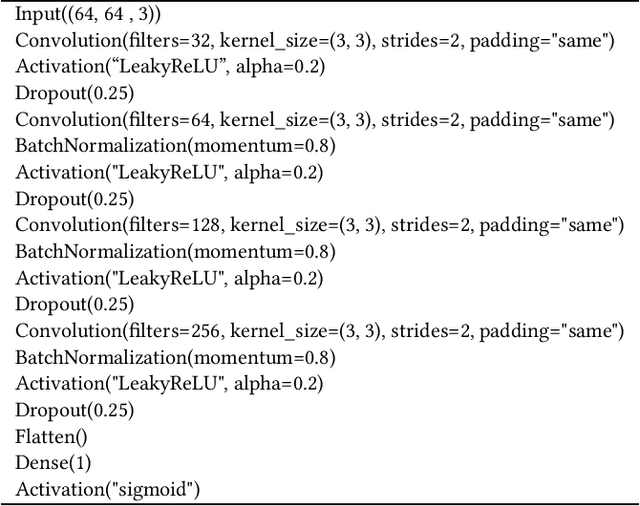

CSI2Image: Image Reconstruction from Channel State Information Using Generative Adversarial Networks

Sep 16, 2020

Abstract:This study aims to find the upper limit of the wireless sensing capability of acquiring physical space information. This is a challenging objective, because at present, wireless sensing studies continue to succeed in acquiring novel phenomena. Thus, although a complete answer cannot be obtained yet, a step is taken towards it here. To achieve this, CSI2Image, a novel channel-state-information (CSI)-to-image conversion method based on generative adversarial networks (GANs), is proposed. The type of physical information acquired using wireless sensing can be estimated by checking whether the reconstructed image captures the desired physical space information. Three types of learning methods are demonstrated: generator-only learning, GAN-only learning, and hybrid learning. Evaluating the performance of CSI2Image is difficult, because both the clarity of the image and the presence of the desired physical space information must be evaluated. To solve this problem, a quantitative evaluation methodology using an object detection library is also proposed. CSI2Image was implemented using IEEE 802.11ac compressed CSI, and the evaluation results show that the image was successfully reconstructed. The results demonstrate that generator-only learning is sufficient for simple wireless sensing problems, but in complex wireless sensing problems, GANs are important for reconstructing generalized images with more accurate physical space information.
