Soonam Lee

Three dimensional blind image deconvolution for fluorescence microscopy using generative adversarial networks

Apr 19, 2019

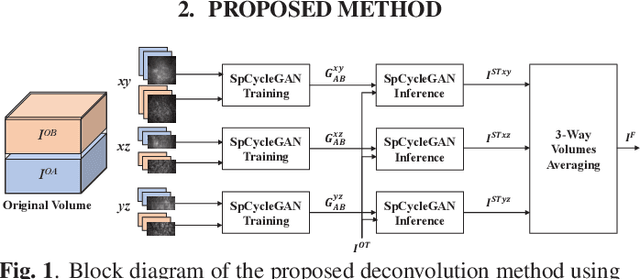

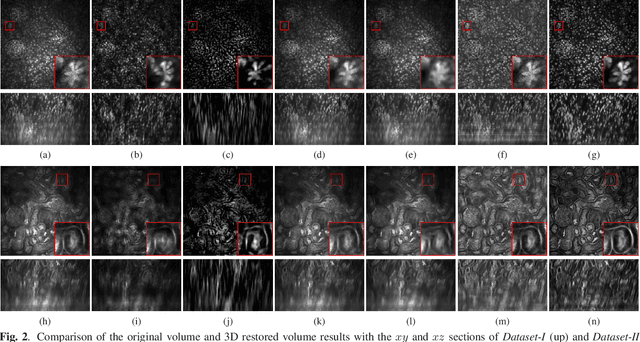

Abstract: Because fluorescence microscopy images are blurred, image deconvolution is often used when studying biological structures in fluorescence microscopy. Fluorescence microscopy image volumes inherently suffer from intensity inhomogeneity and blur, and they are corrupted by various types of noise, all of which degrade image quality at deeper tissue depths. Therefore, quantitative analysis of fluorescence microscopy images of deeper tissue remains a challenge. This paper presents a three-dimensional blind image deconvolution method for fluorescence microscopy using 3-way spatially constrained cycle-consistent adversarial networks. The volumes restored by the proposed method are evaluated visually and numerically against those produced by other well-known deconvolution methods, denoising methods, and an inhomogeneity correction method. Experimental results indicate that the proposed method can restore and improve the quality of blurred and noisy deep-tissue microscopy images, both visually and quantitatively.
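The abstract does not spell out what "3-way" means. One plausible reading, which is an assumption here, is that a 2D restoration network is applied to sections taken along each of the three orthogonal directions (xy, xz, yz) of the volume and the three restored volumes are combined. The minimal sketch below shows only that combination step; `three_way_restore` and the `restore_2d` callable are hypothetical names, and `restore_2d` stands in for a trained 2D generator.

```python
import numpy as np

def three_way_restore(volume, restore_2d):
    """Apply a 2D restoration function along all three orthogonal slicing
    directions of a (z, y, x) volume and average the three results.

    `restore_2d` is a hypothetical stand-in for a trained 2D generator; it
    must map a 2D array to a 2D array of the same shape.
    """
    volume = np.asarray(volume, dtype=float)
    results = []
    for axis in range(3):
        # Move the slicing axis to the front so each moved[i] is a 2D section.
        moved = np.moveaxis(volume, axis, 0)
        restored = np.stack([restore_2d(section) for section in moved], axis=0)
        # Move the axis back so all three results share the (z, y, x) layout.
        results.append(np.moveaxis(restored, 0, axis))
    # Simple averaging is an assumed fusion rule, not necessarily the paper's.
    return np.mean(results, axis=0)
```

For example, `three_way_restore(vol, lambda s: np.clip(s, 0.0, 1.0))` clips every section. Averaging the three directions is one simple way to reduce slice-to-slice artifacts that a purely xy-wise 2D model can introduce along z.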

Three Dimensional Fluorescence Microscopy Image Synthesis and Segmentation

Apr 21, 2018

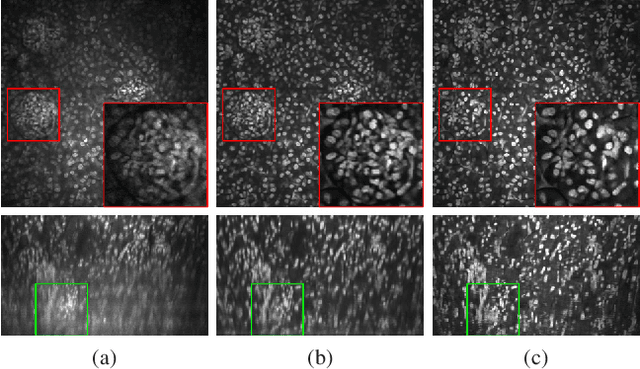

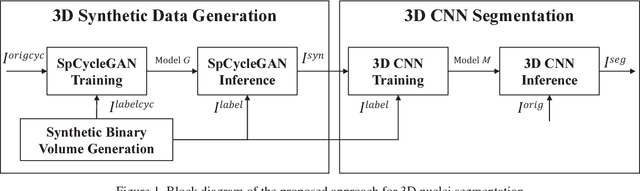

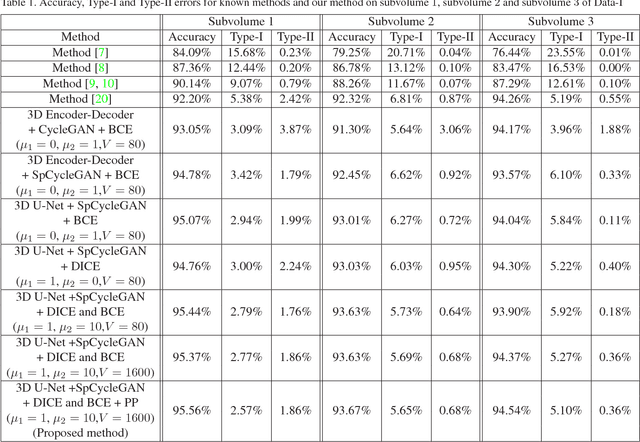

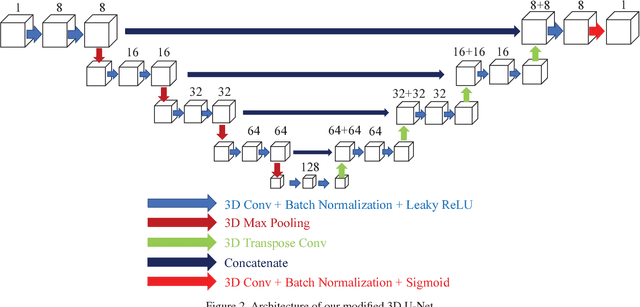

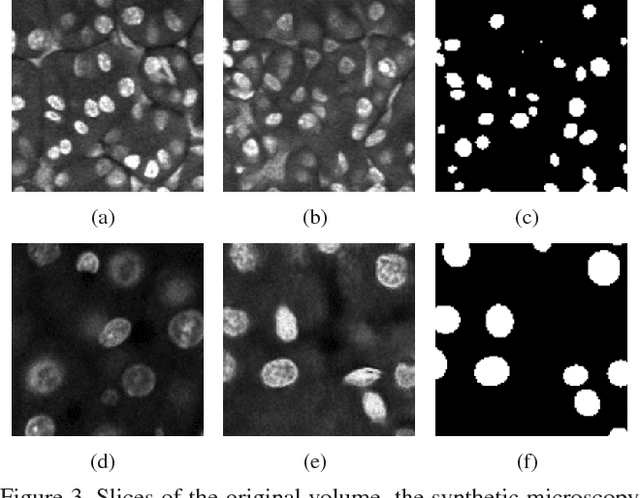

Abstract: Advances in fluorescence microscopy enable acquisition of 3D image volumes with better image quality and deeper penetration into tissue. Segmentation is a required step to characterize and analyze biological structures in the images, and recent 3D segmentation methods using deep learning have achieved promising results. One issue is that deep learning techniques require a large set of ground truth data, which is impractical to annotate manually for large 3D microscopy volumes. This paper describes a 3D deep learning nuclei segmentation method that uses synthetic 3D volumes for training. A set of synthetic volumes and the corresponding ground truth are generated using spatially constrained cycle-consistent adversarial networks. Segmentation results demonstrate that the proposed method can successfully segment nuclei in various data sets.
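One way to obtain ground truth "for free" is to start from a synthetic binary volume and let the adversarial network translate it into a realistic-looking microscopy volume, so the binary input doubles as the label. The sketch below generates such a binary volume from randomly placed axis-aligned ellipsoids. Modeling nuclei as ellipsoids, the function name `synthetic_nuclei_mask`, and all parameter defaults are illustrative assumptions, not details taken from the abstract.

```python
import numpy as np

def synthetic_nuclei_mask(shape=(32, 64, 64), n_nuclei=10,
                          radius_range=(3.0, 6.0), seed=None):
    """Return a boolean (z, y, x) volume of randomly placed ellipsoids.

    Each ellipsoid is a hypothetical stand-in for one nucleus. The mask can
    serve as ground truth, with a GAN-translated image used as network input.
    """
    rng = np.random.default_rng(seed)
    zz, yy, xx = np.indices(shape)
    mask = np.zeros(shape, dtype=bool)
    for _ in range(n_nuclei):
        # Random center inside the volume and random semi-axis lengths.
        center = [rng.uniform(0, s) for s in shape]
        radii = rng.uniform(radius_range[0], radius_range[1], size=3)
        # Standard ellipsoid inequality: sum of squared normalized offsets <= 1.
        inside = (((zz - center[0]) / radii[0]) ** 2
                  + ((yy - center[1]) / radii[1]) ** 2
                  + ((xx - center[2]) / radii[2]) ** 2) <= 1.0
        mask |= inside
    return mask
```

Passing a fixed `seed` makes the generated training set reproducible. Overlapping ellipsoids merge in this binary mask; a labeled (instance) volume would be needed to keep touching nuclei separate.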

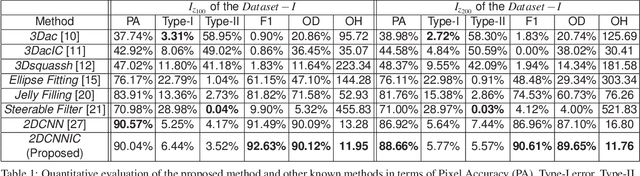

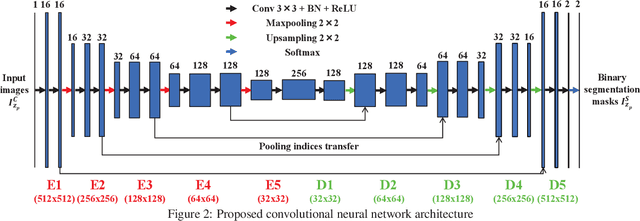

Tubule segmentation of fluorescence microscopy images based on convolutional neural networks with inhomogeneity correction

Feb 10, 2018

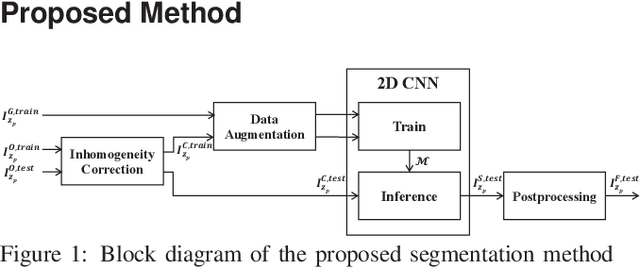

Abstract: Fluorescence microscopy has become a widely used tool for studying various biological structures of in vivo tissue or cells. However, quantitative analysis of these biological structures remains a challenge due to their complexity, which is exacerbated by distortions caused by lens aberrations and light scattering. Moreover, manual quantification of such image volumes is an intractable and error-prone process, which makes automated image analysis methods crucial. This paper describes a segmentation method for tubular structures in fluorescence microscopy images using convolutional neural networks with data augmentation and inhomogeneity correction. The segmentation results of the proposed method are compared visually and numerically with those of other microscopy segmentation methods. Experimental results indicate that the proposed method outperforms the other methods in correctly segmenting and identifying multiple tubular structures.
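The abstract does not say which inhomogeneity correction is used. As a generic illustration only, the sketch below treats intensity inhomogeneity as a smooth multiplicative bias field, fits it with a low-order 2D polynomial by least squares, and divides it out. This polynomial fit is a swapped-in, commonly used technique, not necessarily the paper's; `correct_inhomogeneity` and its `degree` parameter are hypothetical.

```python
import numpy as np

def correct_inhomogeneity(image, degree=2):
    """Divide a 2D image by a smooth polynomial estimate of its intensity bias.

    Generic illustration: a low-order polynomial surface is fitted to the
    image by least squares and treated as a multiplicative bias field.
    """
    image = np.asarray(image, dtype=float)
    h, w = image.shape
    yy, xx = np.mgrid[0:h, 0:w]
    # Normalize coordinates to [0, 1] to keep the design matrix well conditioned.
    y = yy.ravel() / max(h - 1, 1)
    x = xx.ravel() / max(w - 1, 1)
    # All monomials x**i * y**j with i + j <= degree.
    columns = [x ** i * y ** j
               for i in range(degree + 1) for j in range(degree + 1 - i)]
    A = np.stack(columns, axis=1)
    coeffs, *_ = np.linalg.lstsq(A, image.ravel(), rcond=None)
    bias = (A @ coeffs).reshape(h, w)
    # Guard against division by (near-)zero bias values.
    bias = np.maximum(bias, 1e-8)
    # Rescale so the corrected image keeps the bias field's mean intensity.
    return image / bias * bias.mean()
```

A low polynomial degree keeps the fitted surface smooth, so it follows slow illumination falloff rather than the tubular structures themselves. In practice the fit is often restricted to background pixels so that bright structures do not pull the estimate upward.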

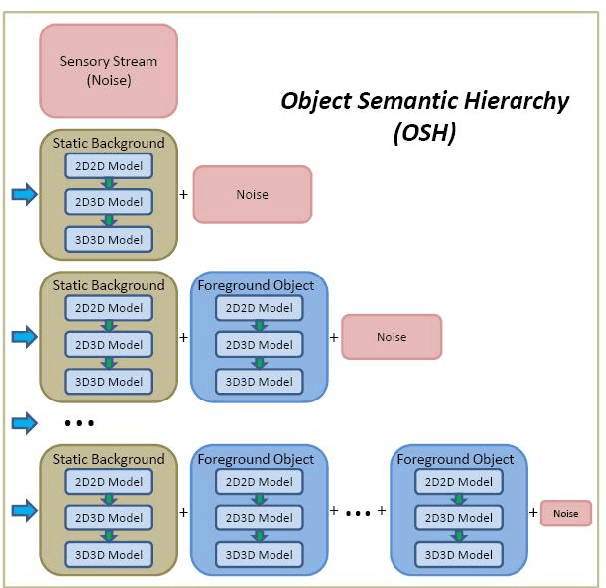

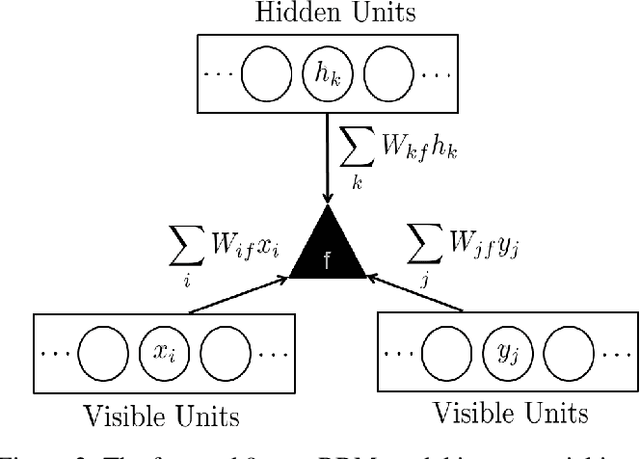

Background subtraction using the factored 3-way restricted Boltzmann machines

Feb 05, 2018

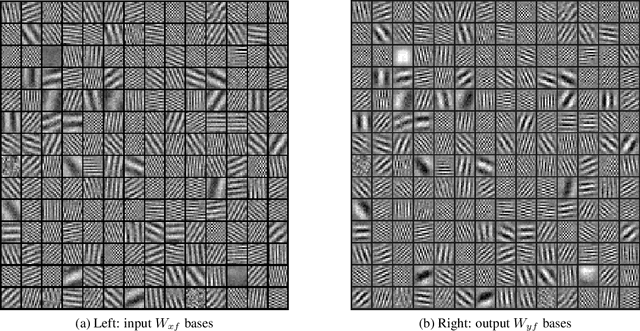

Abstract: In this paper, we propose a method for reconstructing a 3D model from continuous sensory input. A robot can draw on extremely large amounts of data from the real world using various sensors. However, these sensory inputs are usually noisy and high-dimensional, so constructing a 3D model directly from the raw data is difficult and time-consuming for the robot. Hence, a method is needed that can extract useful information from such sensory inputs. To address this problem, our method uses the concept of the Object Semantic Hierarchy (OSH). Unlike previous work that used this hierarchical framework, we extract motion information using a Deep Belief Network instead of classical computer vision approaches. We trained on two large sets of random dot images (10,000), one translated and one rotated, and successfully extracted several bases that explain the translation and rotation motion. Using these translation and rotation bases, background subtraction becomes possible with the Object Semantic Hierarchy.
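The title refers to a factored 3-way restricted Boltzmann machine, but the abstract gives no equations. The sketch below assumes the factored gated RBM formulation of Memisevic and Hinton, in which each hidden unit responds to the product of factor projections of an image pair (x, y). Under that formulation, p(h_k = 1 | x, y) = σ(Σ_f W^h_{kf} (Σ_i W^x_{if} x_i)(Σ_j W^y_{jf} y_j) + b_k). It shows only hidden-unit inference on a translated random-dot pair. The weights are random placeholders rather than learned translation or rotation bases, and all function names are hypothetical.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def random_dot_pair(size=13, density=0.1, shift=(1, 0), rng=None):
    """Return a flattened random-dot image and a translated copy (toy data)."""
    rng = np.random.default_rng(rng)
    x = (rng.random((size, size)) < density).astype(float)
    # Wrap-around translation by `shift` pixels along (rows, columns).
    y = np.roll(x, shift, axis=(0, 1))
    return x.ravel(), y.ravel()

def hidden_probs(x, y, Wx, Wy, Wh, bh):
    """p(h_k = 1 | x, y) for a factored gated (3-way) RBM.

    Wx, Wy: (n_pixels, n_factors); Wh: (n_hidden, n_factors); bh: (n_hidden,).
    """
    # Project each image onto the factors and multiply elementwise; this
    # product lets a hidden unit encode the *relation* between x and y.
    factors = (x @ Wx) * (y @ Wy)
    return sigmoid(Wh @ factors + bh)
```

Usage with placeholder weights:

```python
rng = np.random.default_rng(1)
x, y = random_dot_pair(size=13, rng=0)
Wx, Wy = rng.normal(0, 0.1, (169, 20)), rng.normal(0, 0.1, (169, 20))
Wh, bh = rng.normal(0, 0.1, (30, 20)), np.zeros(30)
p = hidden_probs(x, y, Wx, Wy, Wh, bh)  # 30 values in (0, 1)
```

After training (for example, with contrastive divergence), columns of `Wx` and `Wy` would be expected to resemble the transformation-specific bases the abstract describes.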
