Siyang Cao

Radar-Camera Fused Multi-Object Tracking: Online Calibration and Common Feature

Oct 23, 2025

Abstract: This paper presents a Multi-Object Tracking (MOT) framework that fuses radar and camera data to enhance tracking efficiency while minimizing manual interventions. Contrary to many studies that underutilize radar and assign it a supplementary role--despite its capability to provide accurate range/depth information of targets in a world 3D coordinate system--our approach positions radar in a crucial role. Meanwhile, this paper utilizes common features to enable online calibration to autonomously associate detections from radar and camera. The main contributions of this work include: (1) the development of a radar-camera fusion MOT framework that exploits online radar-camera calibration to simplify the integration of detection results from these two sensors, (2) the utilization of common features between radar and camera data to accurately derive real-world positions of detected objects, and (3) the adoption of feature matching and category-consistency checking to surpass the limitations of mere position matching in enhancing sensor association accuracy. To the best of our knowledge, we are the first to investigate the integration of radar-camera common features and their use in online calibration for achieving MOT. The efficacy of our framework is demonstrated by its ability to streamline the radar-camera mapping process and improve tracking precision, as evidenced by real-world experiments conducted in both controlled environments and actual traffic scenarios. Code is available at https://github.com/radar-lab/Radar_Camera_MOT

CalibRefine: Deep Learning-Based Online Automatic Targetless LiDAR-Camera Calibration with Iterative and Attention-Driven Post-Refinement

Feb 26, 2025

Abstract: Accurate multi-sensor calibration is essential for deploying robust perception systems in applications such as autonomous driving, robotics, and intelligent transportation. Existing LiDAR-camera calibration methods often rely on manually placed targets, preliminary parameter estimates, or intensive data preprocessing, limiting their scalability and adaptability in real-world settings. In this work, we propose a fully automatic, targetless, and online calibration framework, CalibRefine, which directly processes raw LiDAR point clouds and camera images. Our approach is divided into four stages: (1) a Common Feature Discriminator that trains on automatically detected objects--using relative positions, appearance embeddings, and semantic classes--to generate reliable LiDAR-camera correspondences, (2) a coarse homography-based calibration, (3) an iterative refinement to incrementally improve alignment as additional data frames become available, and (4) an attention-based refinement that addresses non-planar distortions by leveraging a Vision Transformer and cross-attention mechanisms. Through extensive experiments on two urban traffic datasets, we show that CalibRefine delivers high-precision calibration results with minimal human involvement, outperforming state-of-the-art targetless methods and remaining competitive with, or surpassing, manually tuned baselines. Our findings highlight how robust object-level feature matching, together with iterative and self-supervised attention-based adjustments, enables consistent sensor fusion in complex, real-world conditions without requiring ground-truth calibration matrices or elaborate data preprocessing.

TransRAD: Retentive Vision Transformer for Enhanced Radar Object Detection

Jan 29, 2025

Abstract: Despite significant advancements in environment perception capabilities for autonomous driving and intelligent robotics, cameras and LiDARs remain notoriously unreliable in low-light conditions and adverse weather, which limits their effectiveness. Radar serves as a reliable and low-cost sensor that can effectively compensate for these limitations. However, radar-based object detection has been underexplored due to the inherent weaknesses of radar data, such as low resolution, high noise, and lack of visual information. In this paper, we present TransRAD, a novel 3D radar object detection model designed to address these challenges by leveraging the Retentive Vision Transformer (RMT) to more effectively learn features from information-dense radar Range-Azimuth-Doppler (RAD) data. Our approach leverages the Retentive Manhattan Self-Attention (MaSA) mechanism provided by RMT to incorporate explicit spatial priors, thereby enabling more accurate alignment with the spatial saliency characteristics of radar targets in RAD data and achieving precise 3D radar detection across Range-Azimuth-Doppler dimensions. Furthermore, we propose Location-Aware NMS to effectively mitigate the common issue of duplicate bounding boxes in deep radar object detection. The experimental results demonstrate that TransRAD outperforms state-of-the-art methods in both 2D and 3D radar detection tasks, achieving higher accuracy, faster inference speed, and reduced computational complexity. Code is available at https://github.com/radar-lab/TransRAD

mmWave Radar for Sit-to-Stand Analysis: A Comparative Study with Wearables and Kinect

Nov 22, 2024

Abstract: This study explores a novel approach for analyzing Sit-to-Stand (STS) movements using millimeter-wave (mmWave) radar technology. The goal is to develop a non-contact, privacy-preserving, and all-day operational sensing method for healthcare applications, including fall risk assessment. We used a 60 GHz mmWave radar system to collect radar point cloud data, capturing STS motions from 45 participants. By employing a deep learning pose estimation model, we learned the human skeleton from Kinect built-in body tracking and applied Inverse Kinematics (IK) to calculate joint angles, segment STS motions, and extract commonly used features in fall risk assessment. Radar-extracted features were then compared with those obtained from Kinect and wearable sensors. The results demonstrated the effectiveness of mmWave radar in capturing general motion patterns and large joint movements (e.g., trunk). Additionally, the study highlights the advantages and disadvantages of individual sensors and suggests the potential of integrated sensor technologies to improve the accuracy and reliability of motion analysis in clinical and biomedical research settings.

Deep Learning-Based Robust Multi-Object Tracking via Fusion of mmWave Radar and Camera Sensors

Jul 10, 2024

Abstract: Autonomous driving holds great promise in addressing traffic safety concerns by leveraging artificial intelligence and sensor technology. Multi-Object Tracking plays a critical role in ensuring safer and more efficient navigation through complex traffic scenarios. This paper presents a novel deep learning-based method that integrates radar and camera data to enhance the accuracy and robustness of Multi-Object Tracking in autonomous driving systems. The proposed method leverages a Bi-directional Long Short-Term Memory network to incorporate long-term temporal information and improve motion prediction. An appearance feature model inspired by FaceNet is used to establish associations between objects across different frames, ensuring consistent tracking. A tri-output mechanism is employed, consisting of individual outputs for radar and camera sensors and a fusion output, to provide robustness against sensor failures and produce accurate tracking results. Through extensive evaluations on real-world datasets, our approach demonstrates remarkable improvements in tracking accuracy, ensuring reliable performance even in low-visibility scenarios.

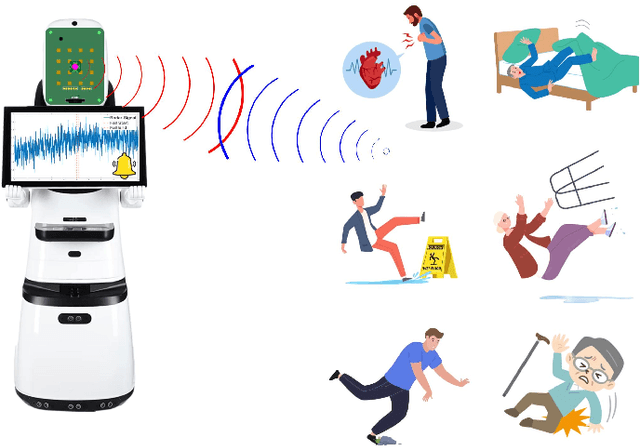

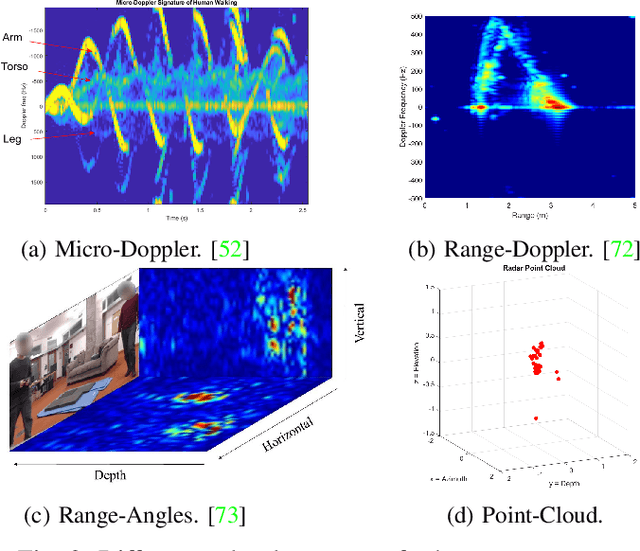

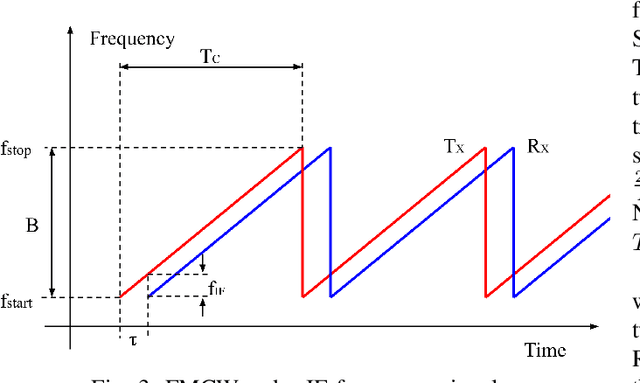

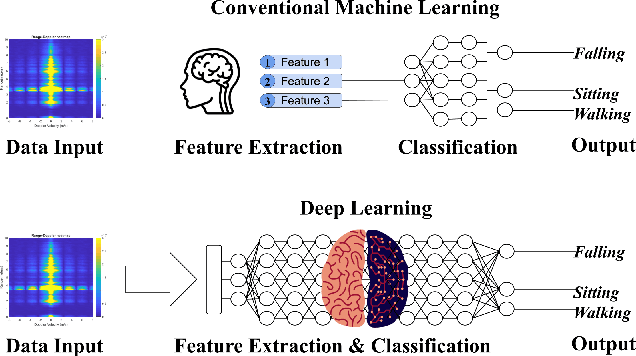

A Survey on Radar-Based Fall Detection

Dec 07, 2023

Abstract: Fall detection, particularly critical for high-risk demographics like the elderly, is a key public health concern where timely detection can greatly minimize harm. With the advancements in radio frequency technology, radar has emerged as a powerful tool for human detection and tracking. Traditional machine learning algorithms, such as Support Vector Machines (SVM) and k-Nearest Neighbors (kNN), have shown promising outcomes. However, deep learning approaches, notably Convolutional Neural Networks (CNN) and Recurrent Neural Networks (RNN), have outperformed them in learning intricate features and managing large, unstructured datasets. This survey offers an in-depth analysis of radar-based fall detection, with emphasis on Micro-Doppler, Range-Doppler, and Range-Doppler-Angles techniques. We discuss the intricacies and challenges in fall detection and emphasize the necessity for a clear definition of falls and appropriate detection criteria, informed by diverse influencing factors. We present an overview of radar signal processing principles and the underlying technology of radar-based fall detection, providing an accessible insight into machine learning and deep learning algorithms. After examining 74 research articles on radar-based fall detection published since 2000, we aim to bridge current research gaps and underscore potential future research strategies, emphasizing the potential for real-world applications and the unexplored potential of deep learning in improving radar-based fall detection.

Online Targetless Radar-Camera Extrinsic Calibration Based on the Common Features of Radar and Camera

Sep 02, 2023

Abstract: Sensor fusion is essential for autonomous driving and autonomous robots, and radar-camera fusion systems have gained popularity due to their complementary sensing capabilities. However, accurate calibration between these two sensors is crucial to ensure effective fusion and improve overall system performance. Calibration involves intrinsic and extrinsic calibration, with the latter being particularly important for achieving accurate sensor fusion. Unfortunately, many target-based calibration methods require complex operating procedures and well-designed experimental conditions, posing challenges for researchers attempting to reproduce the results. To address this issue, we introduce a novel approach that leverages deep learning to extract a common feature from raw radar data (i.e., Range-Doppler-Angle data) and camera images. Instead of explicitly representing these common features, our method implicitly utilizes them to match identical objects from both data sources. Specifically, the extracted common feature serves as an example to demonstrate an online targetless calibration method between the radar and camera systems. The estimation of the extrinsic transformation matrix is achieved through this feature-based approach. To enhance the accuracy and robustness of the calibration, we apply RANSAC and the Levenberg-Marquardt (LM) nonlinear optimization algorithm to derive the matrix. Our experiments in the real world demonstrate the effectiveness and accuracy of our proposed method.

3D Radar and Camera Co-Calibration: A Flexible and Accurate Method for Target-based Extrinsic Calibration

Jul 28, 2023

Abstract: Advances in autonomous driving are inseparable from sensor fusion. Heterogeneous sensors are widely used for sensor fusion due to their complementary properties, with radar and camera being the most commonly equipped sensors. Intrinsic and extrinsic calibration are essential steps in sensor fusion. The extrinsic calibration, which is independent of the sensor's own parameters and performed after the sensors are installed, greatly determines the accuracy of sensor fusion. Many target-based methods require cumbersome operating procedures and well-designed experimental conditions, making them extremely challenging. To this end, we propose a flexible, easy-to-reproduce and accurate method for extrinsic calibration of 3D radar and camera. The proposed method does not require a specially designed calibration environment. Instead, it places a single corner reflector (CR) on the ground and iteratively collects radar and camera data simultaneously using Robot Operating System (ROS). Radar-camera point correspondences are obtained based on their timestamps and then used as input to solve the perspective-n-point (PnP) problem, which yields the extrinsic calibration matrix. In addition, RANSAC is used for robustness and the Levenberg-Marquardt (LM) nonlinear optimization algorithm is used for accuracy. Multiple controlled environment experiments as well as real-world experiments demonstrate the efficiency and accuracy (AED error is 15.31 pixels and Acc up to 89%) of the proposed method.
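As a rough illustration of what an extrinsic calibration matrix is used for, the sketch below projects a 3D radar point into the image with a standard pinhole model and measures the mean pixel distance to the observed locations. It is not the paper's implementation: the function names `project` and `mean_pixel_error`, the skew-free intrinsic matrix `K`, and the sample values are all assumptions made for this example, and the error computed here is a generic reprojection distance rather than the abstract's AED metric, which is not defined on this page.

```python
import math

def project(point, R, t, K):
    """Project a 3-D radar point to pixel coordinates with a pinhole model.

    R (3x3) and t (3,) are the radar-to-camera extrinsics; K (3x3) holds the
    camera intrinsics. Skew is ignored (assumption). Returns None when the
    point lies on or behind the image plane.
    """
    # Camera-frame coordinates: Xc = R * p + t
    xc = [sum(R[r][c] * point[c] for c in range(3)) + t[r] for r in range(3)]
    if xc[2] <= 0:
        return None
    u = K[0][0] * xc[0] / xc[2] + K[0][2]
    v = K[1][1] * xc[1] / xc[2] + K[1][2]
    return (u, v)

def mean_pixel_error(points, pixels, R, t, K):
    """Mean Euclidean distance (pixels) between projected and observed points."""
    errors = []
    for p, observed in zip(points, pixels):
        projected = project(p, R, t, K)
        if projected is not None:  # skip points that cannot be projected
            errors.append(math.dist(projected, observed))
    return sum(errors) / len(errors) if errors else None

# Hypothetical values: identity rotation, zero translation, 500 px focal length.
R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
t = [0.0, 0.0, 0.0]
K = [[500.0, 0.0, 320.0], [0.0, 500.0, 240.0], [0.0, 0.0, 1.0]]
pixel = project((1.0, 0.5, 10.0), R, t, K)
```

A PnP solver searches for the `R` and `t` that make this kind of error small over all corner-reflector correspondences; RANSAC discards outlying correspondences before the nonlinear refinement.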

mmPose-NLP: A Natural Language Processing Approach to Precise Skeletal Pose Estimation using mmWave Radars

Jul 21, 2021

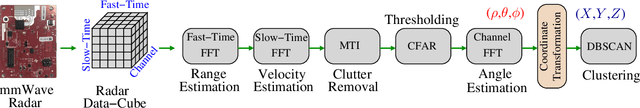

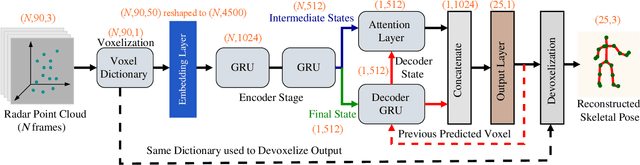

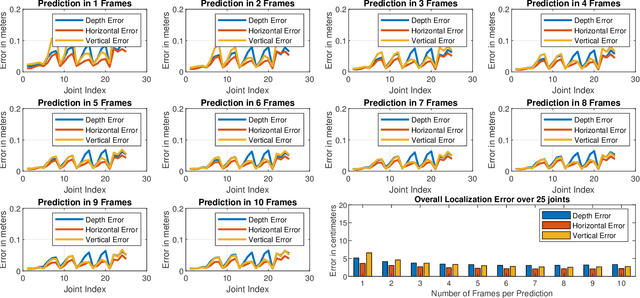

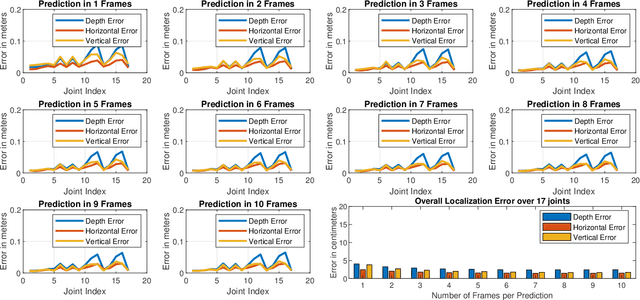

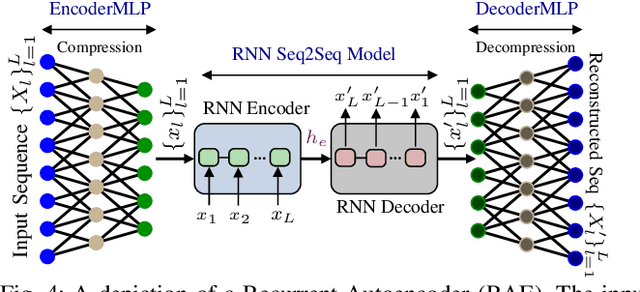

Abstract: In this paper, we present mmPose-NLP, a novel Natural Language Processing (NLP) inspired Sequence-to-Sequence (Seq2Seq) skeletal key-point estimator using millimeter-wave (mmWave) radar data. To the best of the author's knowledge, this is the first method to precisely estimate up to 25 skeletal key-points using mmWave radar data alone. Skeletal pose estimation is critical in several applications ranging from autonomous vehicles, traffic monitoring, patient monitoring, and gait analysis to defense security forensics, and aids both preventative and actionable decision making. The use of mmWave radars for this task, over traditionally employed optical sensors, provides several advantages, primarily its operational robustness to scene lighting and adverse weather conditions, where optical sensor performance degrades significantly. The mmWave radar point-cloud (PCL) data is first voxelized (analogous to tokenization in NLP), and $N$ frames of the voxelized radar data (analogous to a text paragraph in NLP) are subjected to the proposed mmPose-NLP architecture, where the voxel indices of the 25 skeletal key-points (analogous to keyword extraction in NLP) are predicted. The voxel indices are converted back to real-world 3-D coordinates using the voxel dictionary used during the tokenization process. Mean Absolute Error (MAE) metrics were used to measure the accuracy of the proposed system against the ground truth, with the proposed mmPose-NLP offering <3 cm localization errors in the depth, horizontal and vertical axes. The effect of the number of input frames on performance/accuracy was also studied for $N = \{1, 2, \ldots, 10\}$. A comprehensive methodology, results, discussions and limitations are presented in this paper. All the source code and results are made available on GitHub for furthering research and development in this critical yet emerging domain of skeletal key-point estimation using mmWave radars.
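The voxelization step and the conversion of voxel indices back to coordinates can be sketched as follows. This is a minimal example under stated assumptions, not the mmPose-NLP code: the uniform cubic grid, the flat index layout, and the names `voxelize` and `voxel_center` are hypothetical, and the abstract does not give the grid size or resolution actually used.

```python
from math import floor

def voxelize(points, origin, voxel_size, grid_shape):
    """Map 3-D points (metres) to flat voxel indices on a uniform grid.

    Points that fall outside the grid are dropped. The flat index plays the
    role of a "token" in the NLP analogy.
    """
    nx, ny, nz = grid_shape
    indices = []
    for x, y, z in points:
        i = floor((x - origin[0]) / voxel_size)
        j = floor((y - origin[1]) / voxel_size)
        k = floor((z - origin[2]) / voxel_size)
        if 0 <= i < nx and 0 <= j < ny and 0 <= k < nz:
            indices.append((i * ny + j) * nz + k)
    return indices

def voxel_center(index, origin, voxel_size, grid_shape):
    """Convert a flat voxel index back to the 3-D centre of that voxel."""
    _, ny, nz = grid_shape
    i, rem = divmod(index, ny * nz)
    j, k = divmod(rem, nz)
    return tuple(o + (c + 0.5) * voxel_size for o, c in zip(origin, (i, j, k)))

# Hypothetical 4 x 4 x 4 grid of 0.5 m voxels anchored at the origin.
origin, size, shape = (0.0, 0.0, 0.0), 0.5, (4, 4, 4)
tokens = voxelize([(0.6, 1.2, 0.1), (5.0, 0.0, 0.0)], origin, size, shape)
center = voxel_center(tokens[0], origin, size, shape)
```

Because a predicted index is mapped to a voxel centre, the recovered coordinate can differ from the true position by up to half a voxel along each axis, so the grid resolution bounds the achievable localization accuracy in a scheme like this.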

mmFall: Fall Detection using 4D MmWave Radar and Variational Recurrent Autoencoder

Mar 28, 2020

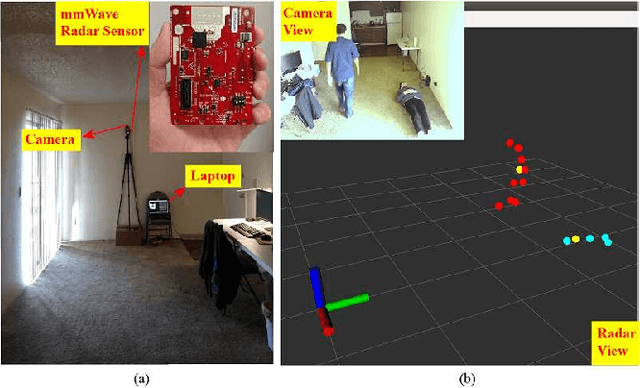

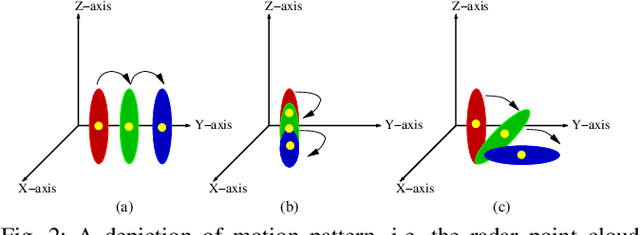

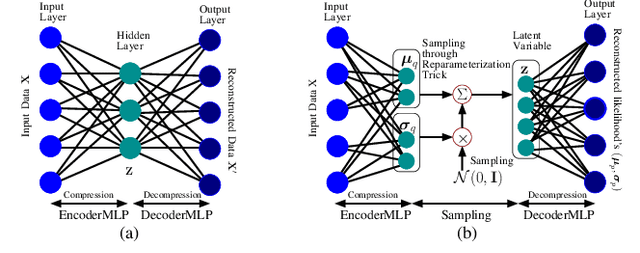

Abstract: In this paper, we propose mmFall, a novel fall detection system, which comprises (i) the emerging millimeter-wave (mmWave) radar sensor to collect the human body's point cloud along with the body centroid, and (ii) a variational recurrent autoencoder (VRAE) to compute the anomaly level of the body motion based on the acquired point cloud. A fall is claimed to have occurred when the spike in anomaly level and the drop in centroid height occur simultaneously. The mmWave radar sensor provides several advantages, such as privacy compliance and high sensitivity to motion, over the traditional sensing modalities. However, (i) randomness in radar point cloud data and (ii) difficulties in fall collection/labeling in the traditional supervised fall detection approaches are the two main challenges. To overcome the randomness in radar data, the proposed VRAE uses variational inference, a probabilistic approach rather than the traditional deterministic approach, to infer the posterior probability of the body's latent motion state at each frame, followed by a recurrent neural network (RNN) to learn the temporal features of the motion over multiple frames. Moreover, to circumvent the difficulties in fall data collection/labeling, the VRAE is built upon an autoencoder architecture in a semi-supervised approach, and trained on only normal activities of daily living (ADL) such that in the inference stage the VRAE will generate a spike in the anomaly level once an abnormal motion, such as a fall, occurs. During the experiment, we implemented the VRAE along with two other baselines, and tested them on the dataset collected in an apartment. The receiver operating characteristic (ROC) curve indicates that our proposed model outperforms the other two baselines, and achieves 98% detection out of 50 falls at the expense of just 2 false alarms.
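The decision rule described above, an anomaly spike coinciding with a drop in centroid height, can be illustrated with a short sketch. It assumes the per-frame anomaly levels have already been produced by some model; the function name `detect_falls`, the sliding-window formulation, and every threshold below are hypothetical choices for this example and are not taken from the paper.

```python
def detect_falls(anomaly, height, anomaly_thresh, drop_thresh, window):
    """Return frame indices where an anomaly spike coincides with a height drop.

    anomaly: per-frame anomaly levels (e.g. from an autoencoder-style model).
    height:  per-frame body-centroid heights in metres.
    A frame t is flagged when, within the last `window` frames, the anomaly
    level reached `anomaly_thresh` AND the centroid fell by `drop_thresh`.
    """
    falls = []
    for t in range(window, len(anomaly)):
        spike = max(anomaly[t - window:t + 1]) >= anomaly_thresh
        drop = height[t - window] - height[t] >= drop_thresh
        if spike and drop:
            falls.append(t)
    return falls

# Hypothetical sequence: the anomaly level spikes while the centroid drops.
anomaly = [0.1, 0.1, 0.9, 0.8, 0.1, 0.1]
height = [1.0, 1.0, 0.9, 0.3, 0.3, 0.3]
flagged = detect_falls(anomaly, height, anomaly_thresh=0.5, drop_thresh=0.5, window=2)
```

Requiring both conditions is what suppresses false alarms in a rule like this: an unusual motion with no height change, or a slow, normal-looking descent, triggers only one of the two tests.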
