Simeng Bian

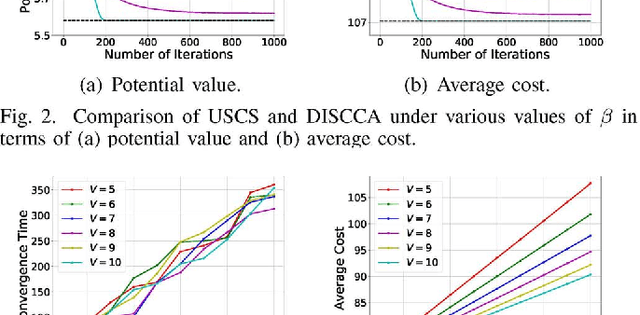

Service Chain Composition with Failures in NFV Systems: A Game-Theoretic Perspective

Aug 01, 2020

Abstract: For state-of-the-art network function virtualization (NFV) systems, it remains a key challenge to conduct effective service chain composition for different network services (NSs) with ultra-low request latencies and minimum network congestion. To this end, existing solutions often require full knowledge of the network state, while ignoring privacy issues and overlooking the non-cooperative behaviors of users. What is more, they may fall short in the face of unexpected failures such as user unavailability and virtual machine breakdown. In this paper, we formulate the problem of service chain composition in NFV systems with failures as a non-cooperative game. By showing that such a game is a weighted potential game and exploiting the unique problem structure, we propose two effective distributed schemes that guide the service chain compositions of different NSs towards the Nash equilibrium (NE) state with both near-optimal latencies and minimum congestion. In addition, we develop two novel learning-aided schemes for comparison, which are based on deep reinforcement learning (DRL) and Monte Carlo tree search (MCTS) techniques, respectively. Our theoretical analysis and simulation results demonstrate the effectiveness of our proposed schemes, as well as their adaptivity when faced with failures.

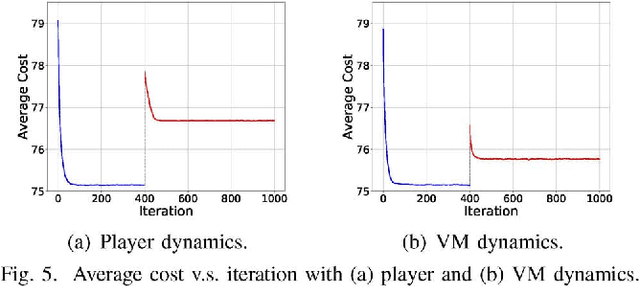

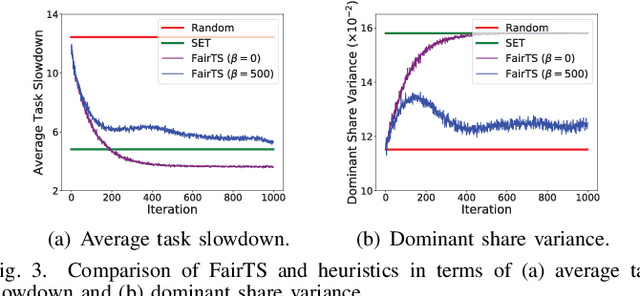

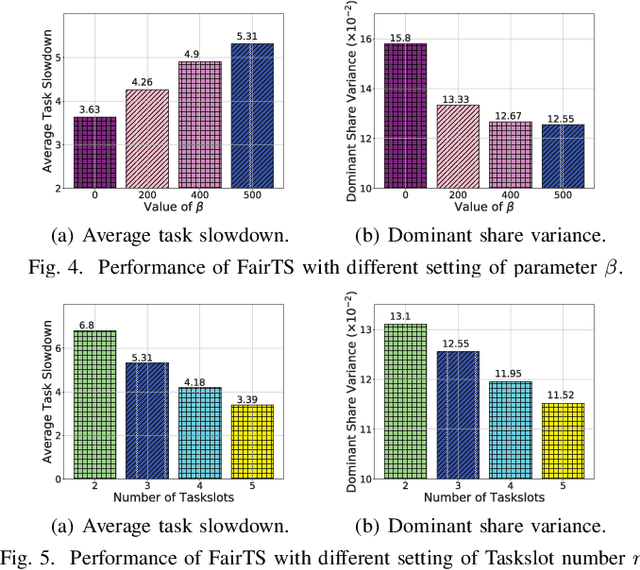

Online Task Scheduling for Fog Computing with Multi-Resource Fairness

Aug 01, 2020

Abstract: In fog computing systems, one key challenge is online task scheduling, i.e., deciding the resource allocation for tasks that are continuously generated from end devices. The design is challenging because of various uncertainties manifested in fog computing systems; e.g., tasks' resource demands remain unknown before their actual arrivals. Recent works have applied deep reinforcement learning (DRL) techniques to conduct online task scheduling and improve various objectives. However, they overlook multi-resource fairness for different tasks, which is key to achieving fair resource sharing among tasks but is in general non-trivial to achieve. Thus, it is still an open problem to design an online task scheduling scheme with multi-resource fairness. In this paper, we address the above challenges. In particular, by leveraging DRL techniques and adopting the idea of dominant resource fairness (DRF), we propose FairTS, an online task scheduling scheme that learns directly from experience to effectively shorten average task slowdown while ensuring multi-resource fairness among tasks. Simulation results show that FairTS outperforms state-of-the-art schemes with an ultra-low task slowdown and better resource fairness.
