Sikai Yang

SoilX: Calibration-Free Comprehensive Soil Sensing Through Contrastive Cross-Component Learning

Nov 07, 2025

Abstract:Precision agriculture demands continuous and accurate monitoring of soil moisture (M) and key macronutrients, including nitrogen (N), phosphorus (P), and potassium (K), to optimize yields and conserve resources. Wireless soil sensing has been explored to measure these four components; however, current solutions require recalibration (i.e., retraining the data processing model) to handle variations in soil texture, characterized by aluminosilicates (Al) and organic carbon (C), limiting their practicality. To address this, we introduce SoilX, a calibration-free soil sensing system that jointly measures six key components: {M, N, P, K, C, Al}. By explicitly modeling C and Al, SoilX eliminates texture- and carbon-dependent recalibration. SoilX incorporates Contrastive Cross-Component Learning (3CL), with two customized terms: the Orthogonality Regularizer and the Separation Loss, to effectively disentangle cross-component interference. Additionally, we design a novel tetrahedral antenna array with an antenna-switching mechanism, which can robustly measure soil dielectric permittivity independent of device placement. Extensive experiments demonstrate that SoilX reduces estimation errors by 23.8% to 31.5% over baselines and generalizes well to unseen fields.

See Behind Walls in Real-time Using Aerial Drones and Augmented Reality

Oct 17, 2024

Abstract:This work presents ARD2, a framework that enables real-time through-wall surveillance using two aerial drones and an augmented reality (AR) device. ARD2 consists of two main steps: target direction estimation and contour reconstruction. In the first stage, ARD2 leverages geometric relationships between the drones, the user, and the target to project the target's direction onto the user's AR display. In the second stage, images from the drones are synthesized to reconstruct the target's contour, allowing the user to visualize the target behind walls. Experimental results demonstrate the system's accuracy in both direction estimation and contour reconstruction.
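
The abstract does not give the geometry used in the direction-estimation stage. As a rough illustration only, the sketch below assumes a flat 2D world, a target position already estimated from the drones, and a known user heading; the function name `relative_bearing_deg` and its parameters are hypothetical and not taken from ARD2. It computes the signed angle at which the target lies relative to where the user is facing, which is the kind of quantity an AR display would need to draw a direction cue.

```python
import math


def relative_bearing_deg(user_xy, user_heading_deg, target_xy):
    """Signed angle (degrees) from the user's facing direction to the target.

    Assumptions (not from the paper): 2D coordinates in a shared frame,
    headings measured counterclockwise from the +x axis.
    Positive result = target is to the user's left (counterclockwise).
    """
    dx = target_xy[0] - user_xy[0]
    dy = target_xy[1] - user_xy[1]
    # Absolute direction of the target as seen from the user.
    absolute_deg = math.degrees(math.atan2(dy, dx))
    # Wrap the difference into the interval [-180, 180).
    return (absolute_deg - user_heading_deg + 180.0) % 360.0 - 180.0


# User at the origin facing +x; target diagonally ahead and to the left.
angle = relative_bearing_deg((0.0, 0.0), 0.0, (1.0, 1.0))
```

A real system would work in 3D and would also have to account for localization error in the drone and headset poses, which this sketch ignores.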

Magnetic Distortion Resistant Orientation Estimation

Oct 16, 2024

Abstract:Inertial Measurement Unit (IMU) sensors, including accelerometers, gyroscopes, and magnetometers, are used to estimate the orientation of mobile devices. However, indoor magnetic fields are often distorted, causing the magnetometer's readings to deviate from true north and resulting in inaccurate orientation estimates. Existing solutions either ignore magnetic distortion or avoid using the magnetometer when distortion is detected. In this paper, we develop MDR, a Magnetic Distortion Resistant orientation estimation system that fundamentally models and corrects magnetic distortion. MDR builds a database to record magnetic directions at different locations and uses it to correct orientation estimates affected by magnetic distortion. To avoid the overhead of database preparation, MDR adopts practical designs to automatically update the database in parallel with orientation estimation. Experiments on 27+ hours of arm motion data show that MDR outperforms the state-of-the-art method by 35.34%.
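
The idea of a location-indexed database of magnetic directions can be illustrated with a deliberately simplified sketch. Everything here is an assumption made for illustration: the class `MagneticMap`, the 2D grid of cells, the heading-only (yaw) correction, and the sign convention are hypothetical and are not MDR's actual design, which handles full orientation. The sketch stores, per grid cell, the average deviation of the local magnetic direction from true north and subtracts it from later magnetometer headings taken in the same cell.

```python
import math
from collections import defaultdict


class MagneticMap:
    """Toy per-location store of magnetic deviation (hypothetical, 2D only)."""

    def __init__(self, cell_size_m=0.5):
        self.cell_size_m = cell_size_m
        # cell -> [sum of sin, sum of cos] of recorded deviations,
        # so the mean is a proper circular mean.
        self._sums = defaultdict(lambda: [0.0, 0.0])

    def _cell(self, x, y):
        return (math.floor(x / self.cell_size_m),
                math.floor(y / self.cell_size_m))

    def record(self, x, y, deviation_deg):
        """Record deviation = magnetometer heading minus true heading."""
        sums = self._sums[self._cell(x, y)]
        rad = math.radians(deviation_deg)
        sums[0] += math.sin(rad)
        sums[1] += math.cos(rad)

    def deviation(self, x, y):
        """Mean recorded deviation for the cell, or None if unseen."""
        cell = self._cell(x, y)
        if cell not in self._sums:
            return None
        sin_sum, cos_sum = self._sums[cell]
        return math.degrees(math.atan2(sin_sum, cos_sum))

    def correct(self, x, y, magnetometer_heading_deg):
        """Subtract the stored deviation; pass through if the cell is unknown."""
        dev = self.deviation(x, y)
        if dev is None:
            return magnetometer_heading_deg % 360.0
        return (magnetometer_heading_deg - dev) % 360.0


mag_map = MagneticMap(cell_size_m=0.5)
mag_map.record(0.1, 0.1, 10.0)
mag_map.record(0.2, 0.3, 20.0)
corrected = mag_map.correct(0.2, 0.2, 100.0)  # uses the cell's mean deviation
```

Calling `record` whenever a trusted orientation estimate is available is one way to picture the database being updated in parallel with estimation, though the abstract does not say how MDR decides which samples to trust.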

Tri-Cam: Practical Eye Gaze Tracking via Camera Network

Sep 29, 2024

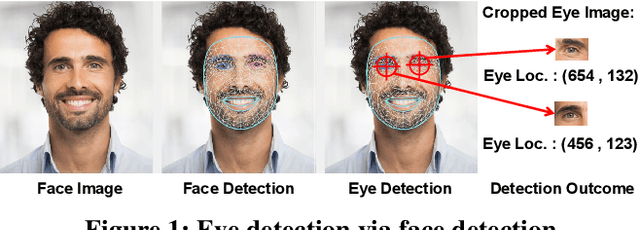

Abstract:As human eyes serve as conduits of rich information, unveiling emotions, intentions, and even aspects of an individual's health and overall well-being, gaze tracking also enables various human-computer interaction applications, as well as insights in psychological and medical research. However, existing gaze tracking solutions fall short in handling free user movement and require laborious user effort for system calibration. We introduce Tri-Cam, a practical deep learning-based gaze tracking system using three affordable RGB webcams. It features a split network structure for efficient training, as well as dedicated network designs to handle the separated gaze tracking tasks. Tri-Cam is also equipped with an implicit calibration module, which makes use of mouse click opportunities to reduce calibration overhead on the user's end. We evaluate Tri-Cam against Tobii, the state-of-the-art commercial eye tracker, achieving comparable accuracy while supporting a wider free movement area. In conclusion, Tri-Cam provides a user-friendly, affordable, and robust gaze tracking solution that could practically enable various applications.

See Where You Read with Eye Gaze Tracking and Large Language Model

Sep 28, 2024

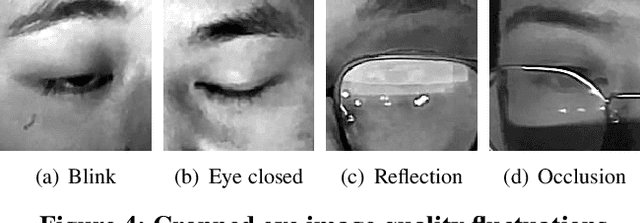

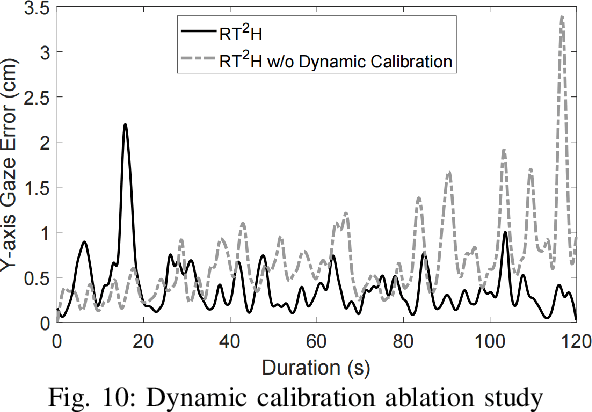

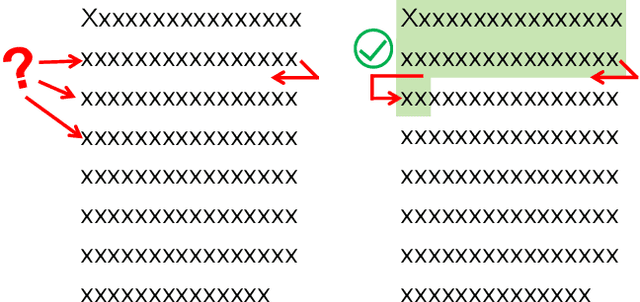

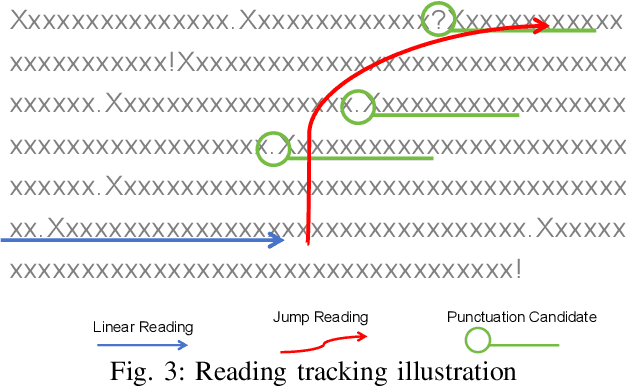

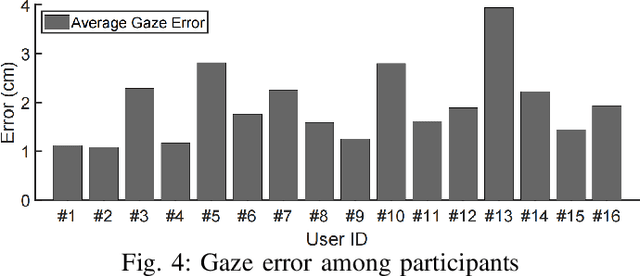

Abstract:Losing track of reading progress during line switching can be frustrating. Eye gaze tracking technology offers a potential solution by highlighting read paragraphs, helping users avoid wrong line switches. However, the gap between gaze tracking accuracy (2-3 cm) and text line spacing (3-5 mm) makes direct application impractical. Existing methods leverage the linear reading pattern but fail during jump reading. This paper presents a reading tracking and highlighting system that supports both linear and jump reading. Based on experimental insights from a study of gaze behavior with 16 users, two gaze error models are designed to enable both jump reading detection and relocation. The system further leverages a large language model's contextual perception capability to aid reading tracking. It also exploits a line-gaze alignment opportunity specific to reading tracking to enable dynamic and frequent calibration of the gaze results. Controlled experiments demonstrate reliable linear reading tracking, as well as 84% accuracy in tracking jump reading. Furthermore, real field tests with 18 volunteers demonstrated the system's effectiveness in tracking and highlighting read paragraphs, improving reading efficiency, and enhancing user experience.
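
The mismatch between gaze accuracy and line spacing can be made concrete with a little arithmetic on the figures quoted in the abstract. The helper `lines_spanned` is a hypothetical name used only for this illustration; it divides a gaze error by a line spacing to show how many text lines a single gaze estimate could plausibly fall on.

```python
def lines_spanned(gaze_error_mm, line_spacing_mm):
    """Number of line spacings covered by a given gaze error."""
    return gaze_error_mm / line_spacing_mm


# Figures from the abstract: gaze error of 2-3 cm, line spacing of 3-5 mm.
fewest_lines = lines_spanned(20, 5)  # smallest error, widest spacing
most_lines = lines_spanned(30, 3)    # largest error, tightest spacing
```

Under these numbers a raw gaze point is uncertain by roughly 4 to 10 lines, which is why gaze alone cannot identify the line being read.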
