Shuaikai Shi

Benchmarking Machine Learning Methods for Distributed Acoustic Sensing

Mar 26, 2025

Abstract: Distributed acoustic sensing (DAS) technology represents an innovative fiber-optic-based sensing methodology that enables real-time acoustic signal monitoring through the detection of minute perturbations along optical fibers. This sensing approach offers compelling advantages, including extensive measurement ranges, exceptional spatial resolution, and an expansive dynamic measurement spectrum. The integration of machine learning (ML) paradigms presents transformative potential for DAS technology, encompassing critical domains such as data augmentation, sophisticated preprocessing techniques, and advanced acoustic event classification and recognition. By leveraging ML algorithms, DAS systems can transition from traditional data processing methodologies to more automated and intelligent analytical frameworks. The computational intelligence afforded by ML-enhanced DAS technologies facilitates unprecedented monitoring capabilities across diverse critical infrastructure sectors. Particularly noteworthy are the technology's applications in transportation infrastructure, energy management systems, and natural disaster monitoring frameworks, where the precision of data acquisition and the reliability of intelligent decision-making mechanisms are paramount. This research critically examines the comparative performance characteristics of classical machine learning methodologies and state-of-the-art deep learning models in the context of DAS data recognition and interpretation, offering comprehensive insights into the evolving landscape of intelligent sensing technologies.

A Conditional Diffusion Model for Electrical Impedance Tomography Image Reconstruction

Dec 22, 2024

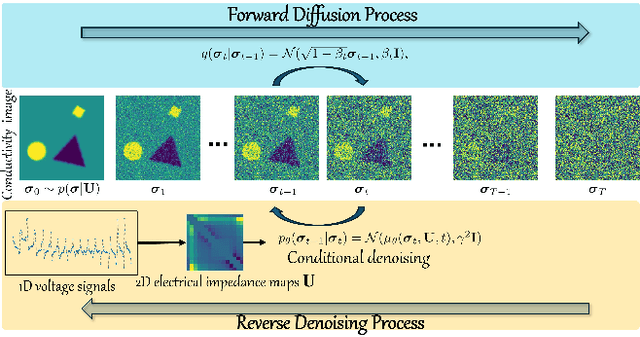

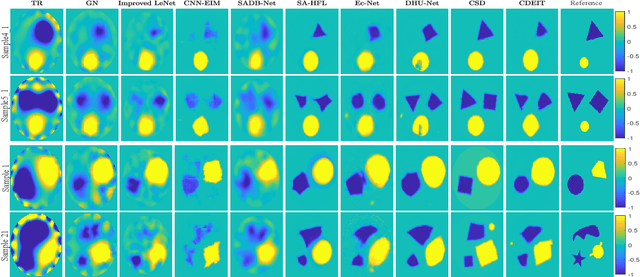

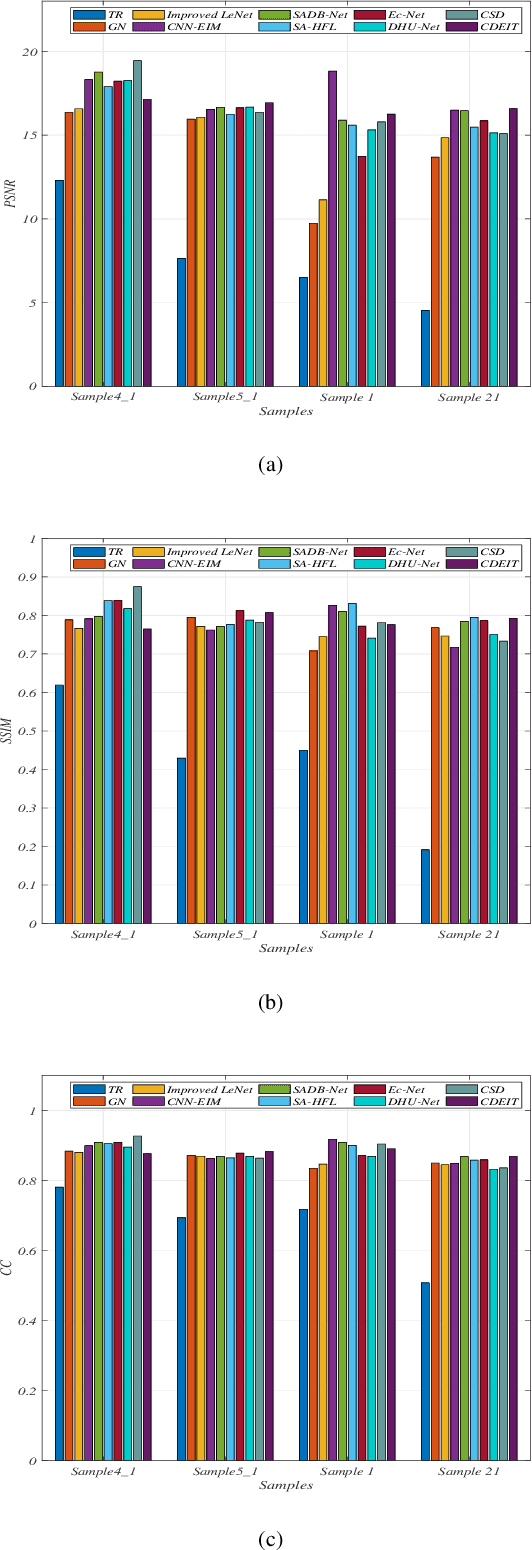

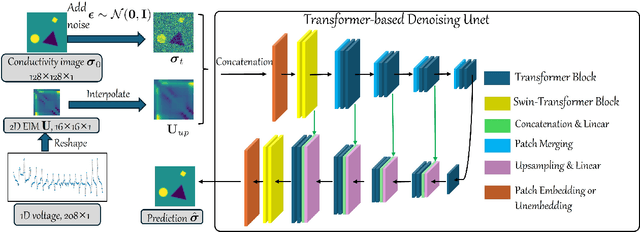

Abstract: Electrical impedance tomography (EIT) is a non-invasive imaging technique capable of reconstructing images of the electrical conductivity of tissues and materials. It is popular in diverse application areas, from medical imaging to industrial process monitoring and tactile sensing, due to its low cost, real-time capabilities and non-ionizing nature. EIT visualizes the conductivity distribution within a body by measuring the boundary voltages, given a current injection. However, EIT image reconstruction is ill-posed due to the mismatch between the under-sampled voltage data and the high-resolution conductivity image. A variety of approaches, both conventional and deep learning-based, have been proposed, capitalizing on the use of spatial regularizers, and the paradigm of image regression. In this research, a novel method based on the conditional diffusion model for EIT reconstruction is proposed, termed CDEIT. Specifically, CDEIT consists of the forward diffusion process, which first gradually adds Gaussian noise to the clean conductivity images, and a reverse denoising process, which learns to predict the original conductivity image from its noisy version, conditioned on the boundary voltages. Following model training, CDEIT applies the conditional reverse process on test voltage data to generate the desired conductivities. Moreover, we provide the details of a normalization procedure, which demonstrates how EIT image reconstruction models trained on simulated datasets can be applied to real datasets with varying sizes, excitation currents and background conductivities. Experiments conducted on a synthetic dataset and two real datasets demonstrate that the proposed model outperforms state-of-the-art methods. The CDEIT software is available as open source (https://github.com/shuaikaishi/CDEIT) for reproducibility purposes.
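
The forward diffusion process mentioned in this abstract can be illustrated with a minimal sketch. It is not taken from the CDEIT repository: it assumes the standard closed-form DDPM noising step, a linear noise schedule, and a toy 16x16 conductivity image stored as a flat list. The function names and the schedule values are hypothetical choices for illustration, and the learned reverse denoising network conditioned on boundary voltages is not shown.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=2e-2):
    """Assumed linear noise schedule; the abstract does not specify one."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alpha(betas):
    """Return alpha_bar, where alpha_bar[t] is the product of (1 - beta_s) for s <= t."""
    alpha_bar, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bar.append(running)
    return alpha_bar


def forward_diffuse(x0, t, alpha_bar, rng):
    """Sample x_t from q(x_t | x_0) in closed form; returns (x_t, noise)."""
    signal = math.sqrt(alpha_bar[t])
    sigma = math.sqrt(1.0 - alpha_bar[t])
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [signal * v + sigma * e for v, e in zip(x0, noise)]
    return x_t, noise


rng = random.Random(0)
alpha_bar = cumulative_alpha(linear_beta_schedule(1000))

# Toy "conductivity image": a 16x16 background of zeros with one square inclusion.
x0 = [1.0 if 4 <= i % 16 < 8 and 4 <= i // 16 < 8 else 0.0 for i in range(256)]

x_early, _ = forward_diffuse(x0, 10, alpha_bar, rng)            # still close to x0
x_late, noise_late = forward_diffuse(x0, 999, alpha_bar, rng)   # close to pure noise
```

In a conditional model of this kind, a network would typically be trained to undo this noising given the measured voltages; the returned `noise` is the usual regression target in DDPM-style training, though the abstract describes predicting the original image instead.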

Unsupervised Hyperspectral and Multispectral Images Fusion Based on the Cycle Consistency

Jul 07, 2023

Abstract: Hyperspectral images (HSI), whose abundant spectral information reflects material properties, usually have low spatial resolution due to hardware limits. Meanwhile, multispectral images (MSI), e.g., RGB images, have a high spatial resolution but deficient spectral signatures. Hyperspectral and multispectral image fusion can be a cost-effective and efficient way to acquire images with both high spatial resolution and high spectral resolution. Many conventional HSI and MSI fusion algorithms rely on known spatial degradation parameters (i.e., the point spread function, PSF), known spectral degradation parameters (i.e., the spectral response function, SRF), or both. Another class of deep learning-based models relies on the ground truth of high spatial resolution HSI and needs large amounts of paired training images when working in a supervised manner. Both classes of models are limited in practical fusion scenarios. In this paper, we propose an unsupervised HSI and MSI fusion model based on cycle consistency, called CycFusion. CycFusion learns the domain transformation between low spatial resolution HSI (LrHSI) and high spatial resolution MSI (HrMSI), and the desired high spatial resolution HSI (HrHSI) is treated as an intermediate feature map in the transformation networks. CycFusion is trained with two objectives: marginal matching for single transforms and cycle consistency for double transforms. Moreover, the estimated PSF and SRF are embedded in the model as the pre-training weights, which further enhances the practicality of our proposed model. Experiments conducted on several datasets show that our proposed model outperforms all compared unsupervised fusion methods. The code for this paper will be available at https://github.com/shuaikaishi/CycFusion for reproducibility.
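
The cycle-consistency idea named in this abstract can be sketched generically as follows. This is an assumption-laden toy, not the CycFusion objective: it uses a plain L1 cycle loss on scalar samples, and the two mappings `a_to_b` and `b_to_a` are hypothetical stand-ins for the learned transformation networks between the two image domains.

```python
def l1_distance(xs, ys):
    """Mean absolute difference between two equal-length sequences."""
    return sum(abs(x - y) for x, y in zip(xs, ys)) / len(xs)


def cycle_consistency_loss(a_samples, b_samples, a_to_b, b_to_a):
    """Generic L1 cycle loss: map A -> B -> A and B -> A -> B, then compare
    each round trip with its starting point."""
    a_cycled = [b_to_a(a_to_b(a)) for a in a_samples]
    b_cycled = [a_to_b(b_to_a(b)) for b in b_samples]
    return l1_distance(a_samples, a_cycled) + l1_distance(b_samples, b_cycled)


# Toy domains: scalar "pixels" standing in for images from the two domains.
a_samples = [0.0, 0.5, 1.0]
b_samples = [1.0, 2.0, 3.0]


def forward(v):
    """Hypothetical A -> B mapping."""
    return 2.0 * v + 1.0


# An exact inverse gives a zero cycle loss; a mismatched inverse is penalized.
loss_consistent = cycle_consistency_loss(
    a_samples, b_samples, forward, lambda v: (v - 1.0) / 2.0
)
loss_mismatched = cycle_consistency_loss(
    a_samples, b_samples, forward, lambda v: v / 2.0
)
```

The loss needs no paired samples from the two domains, which is what makes this family of objectives usable in an unsupervised setting.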

Hyperspectral and Multispectral Image Fusion Using the Conditional Denoising Diffusion Probabilistic Model

Jul 07, 2023

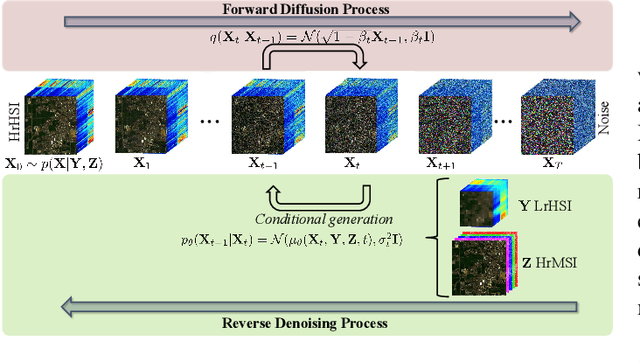

Abstract: Hyperspectral images (HSI) have a large amount of spectral information reflecting the characteristics of matter, while their spatial resolution is low due to the limitations of imaging technology. Complementary to this are multispectral images (MSI), e.g., RGB images, with high spatial resolution but insufficient spectral bands. Hyperspectral and multispectral image fusion is a technique for cost-effectively acquiring ideal images that have both high spatial and high spectral resolution. Many existing HSI and MSI fusion algorithms rely on known imaging degradation models, which are often not available in practice. In this paper, we propose a deep fusion method based on the conditional denoising diffusion probabilistic model, called DDPM-Fus. Specifically, DDPM-Fus contains a forward diffusion process, which gradually adds Gaussian noise to the high spatial resolution HSI (HrHSI), and a reverse denoising process, which learns to predict the desired HrHSI from its noisy version, conditioned on the corresponding high spatial resolution MSI (HrMSI) and low spatial resolution HSI (LrHSI). Once training is complete, the proposed DDPM-Fus runs the reverse process on the test HrMSI and LrHSI to generate the fused HrHSI. Experiments conducted on one indoor and two remote sensing datasets show the superiority of the proposed model when compared with other advanced deep learning-based fusion methods. The code for this work will be open-sourced at https://github.com/shuaikaishi/DDPMFus for reproducibility.
