Shreyas Seshadri

Emphasis control for parallel neural TTS

Oct 06, 2021

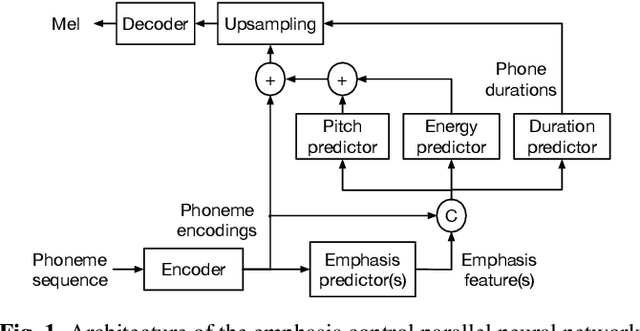

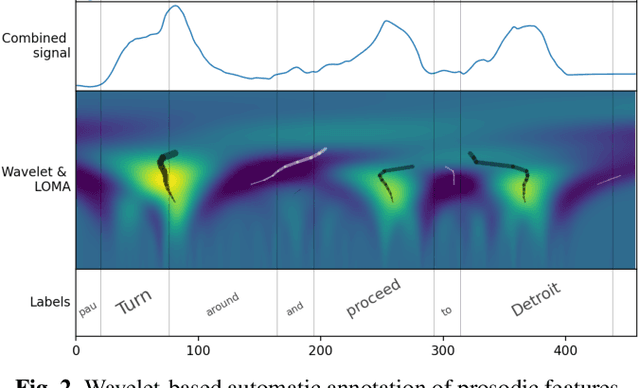

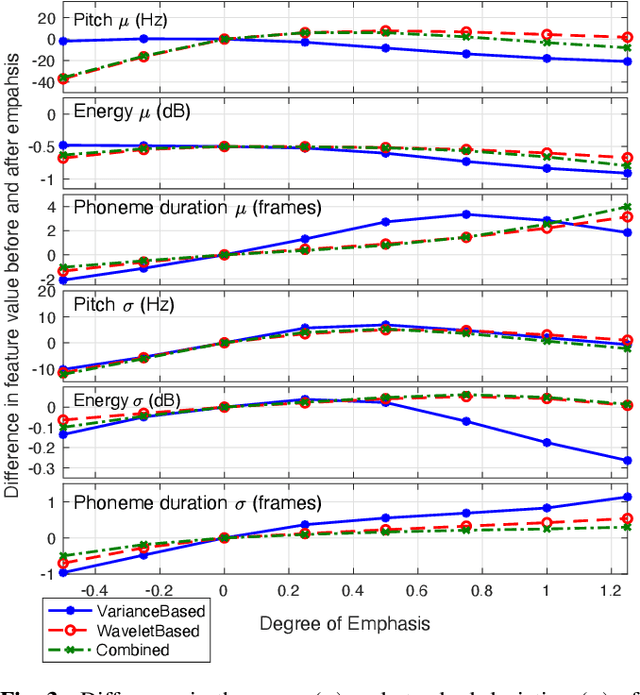

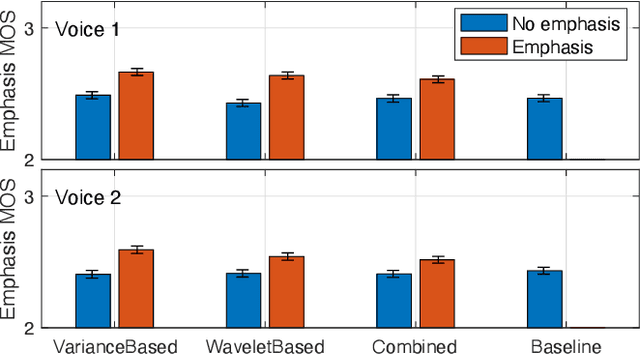

Abstract: The semantic information conveyed by a speech signal is strongly influenced by local variations in prosody. Recent parallel neural text-to-speech (TTS) synthesis methods are able to generate speech with high fidelity while maintaining high performance. However, these systems often lack simple control over the output prosody, thus restricting the semantic information conveyable for a given text. This paper proposes a hierarchical parallel neural TTS system for prosodic emphasis control by learning a latent space that directly corresponds to a change in emphasis. Three candidate features for the latent space are compared: 1) variance of pitch and duration within words in a sentence, 2) a wavelet-based feature computed from pitch, energy, and duration, and 3) a learned combination of the above features. Objective measures reveal that the proposed methods are able to achieve a wide range of emphasis modification, and subjective evaluations on the degree of emphasis and the overall quality indicate that they show promise for real-world applications.

Hierarchical prosody modeling and control in non-autoregressive parallel neural TTS

Oct 06, 2021

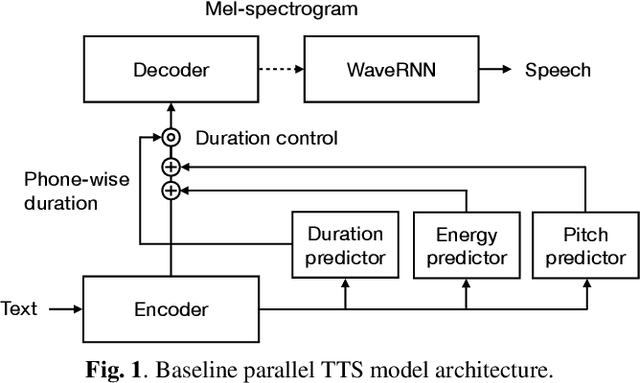

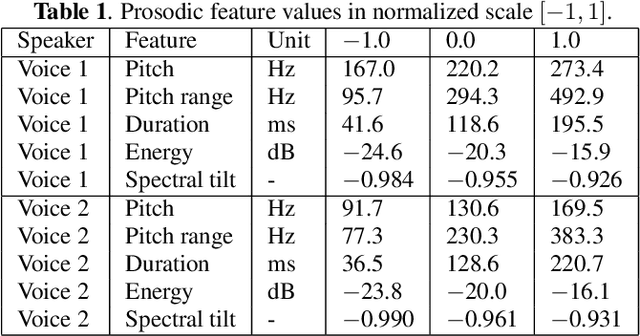

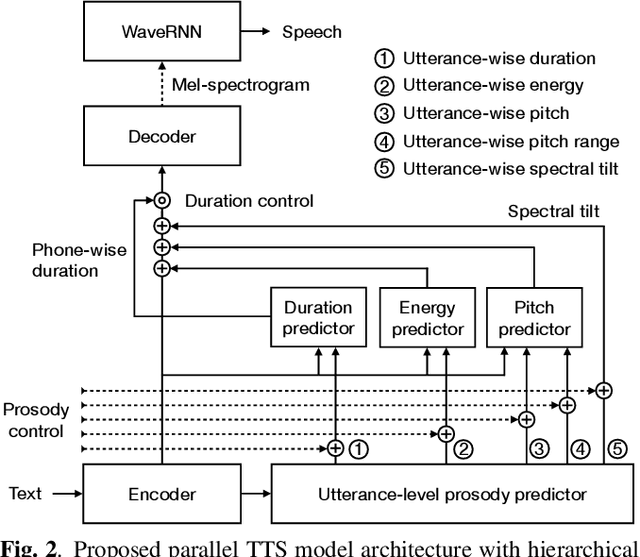

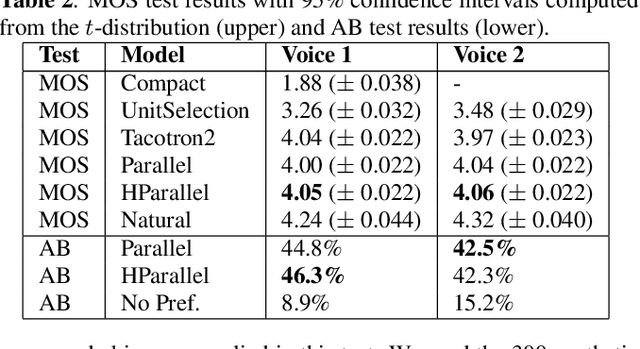

Abstract: Neural text-to-speech (TTS) synthesis can generate speech that is indistinguishable from natural speech. However, the synthetic speech often represents the average prosodic style of the database instead of having more versatile prosodic variation. Moreover, many models lack the ability to control the output prosody, which does not allow for different styles for the same text input. In this work, we train a non-autoregressive parallel neural TTS model hierarchically conditioned on both coarse and fine-grained acoustic speech features to learn a latent prosody space with intuitive and meaningful dimensions. Experiments show that a non-autoregressive TTS model hierarchically conditioned on utterance-wise pitch, pitch range, duration, energy, and spectral tilt can effectively control each prosodic dimension, generate a wide variety of speaking styles, and provide word-wise emphasis control, while maintaining quality equal to or better than that of the baseline model.

SylNet: An Adaptable End-to-End Syllable Count Estimator for Speech

Jun 24, 2019

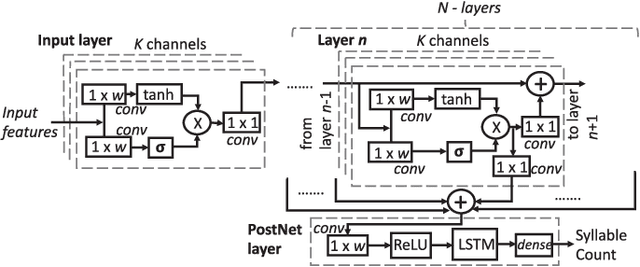

Abstract: Automatic syllable count estimation (SCE) is used in a variety of applications ranging from speaking rate estimation to detecting social activity from wearable microphones or developmental research concerned with quantifying speech heard by language-learning children in different environments. The majority of previously utilized SCE methods have relied on heuristic DSP methods, and only a small number of bi-directional long short-term memory (BLSTM) approaches have made use of modern machine learning approaches in the SCE task. This paper presents a novel end-to-end method called SylNet for automatic syllable counting from speech, built on the basis of recent developments in neural network architectures. We describe how the entire model can be optimized directly to minimize SCE error on the training data without annotations aligned at the syllable level, and how it can be adapted to new languages using limited speech data with known syllable counts. Experiments on several different languages reveal that SylNet generalizes to languages beyond its training data and further improves with adaptation. It also outperforms several previously proposed methods for syllabification, including end-to-end BLSTMs.
