Shiyan Chen

SpikeGS: 3D Gaussian Splatting from Spike Streams with High-Speed Camera Motion

Jul 14, 2024Abstract:Novel View Synthesis plays a crucial role by generating new 2D renderings from multi-view images of 3D scenes. However, capturing high-speed scenes with conventional cameras often leads to motion blur, hindering the effectiveness of 3D reconstruction. To address this challenge, high-frame-rate dense 3D reconstruction emerges as a vital technique, enabling detailed and accurate modeling of real-world objects or scenes in various fields, including Virtual Reality or embodied AI. Spike cameras, a novel type of neuromorphic sensor, continuously record scenes with an ultra-high temporal resolution, showing potential for accurate 3D reconstruction. Despite their promise, existing approaches, such as applying Neural Radiance Fields (NeRF) to spike cameras, encounter challenges due to the time-consuming rendering process. To address this issue, we make the first attempt to introduce the 3D Gaussian Splatting (3DGS) into spike cameras in high-speed capture, providing 3DGS as dense and continuous clues of views, then constructing SpikeGS. Specifically, to train SpikeGS, we establish computational equations between the rendering process of 3DGS and the processes of instantaneous imaging and exposing-like imaging of the continuous spike stream. Besides, we build a very lightweight but effective mapping process from spikes to instant images to support training. Furthermore, we introduced a new spike-based 3D rendering dataset for validation. Extensive experiments have demonstrated our method possesses the high quality of novel view rendering, proving the tremendous potential of spike cameras in modeling 3D scenes.

SpikeMM: Flexi-Magnification of High-Speed Micro-Motions

Jun 01, 2024Abstract:The amplification of high-speed micro-motions holds significant promise, with applications spanning fault detection in fast-paced industrial environments to refining precision in medical procedures. However, conventional motion magnification algorithms often encounter challenges in high-speed scenarios due to low sampling rates or motion blur. In recent years, spike cameras have emerged as a superior alternative for visual tasks in such environments, owing to their unique capability to capture temporal and spatial frequency domains with exceptional fidelity. Unlike conventional cameras, which operate at fixed, low frequencies, spike cameras emulate the functionality of the retina, asynchronously capturing photon changes at each pixel position using spike streams. This innovative approach comprehensively records temporal and spatial visual information, rendering it particularly suitable for magnifying high-speed micro-motions.This paper introduces SpikeMM, a pioneering spike-based algorithm tailored specifically for high-speed motion magnification. SpikeMM integrates multi-level information extraction, spatial upsampling, and motion magnification modules, offering a self-supervised approach adaptable to a wide range of scenarios. Notably, SpikeMM facilitates seamless integration with high-performance super-resolution and motion magnification algorithms. We substantiate the efficacy of SpikeMM through rigorous validation using scenes captured by spike cameras, showcasing its capacity to magnify motions in real-world high-frequency settings.

SpikeReveal: Unlocking Temporal Sequences from Real Blurry Inputs with Spike Streams

Mar 14, 2024Abstract:Reconstructing a sequence of sharp images from the blurry input is crucial for enhancing our insights into the captured scene and poses a significant challenge due to the limited temporal features embedded in the image. Spike cameras, sampling at rates up to 40,000 Hz, have proven effective in capturing motion features and beneficial for solving this ill-posed problem. Nonetheless, existing methods fall into the supervised learning paradigm, which suffers from notable performance degradation when applied to real-world scenarios that diverge from the synthetic training data domain. Moreover, the quality of reconstructed images is capped by the generated images based on motion analysis interpolation, which inherently differs from the actual scene, affecting the generalization ability of these methods in real high-speed scenarios. To address these challenges, we propose the first self-supervised framework for the task of spike-guided motion deblurring. Our approach begins with the formulation of a spike-guided deblurring model that explores the theoretical relationships among spike streams, blurry images, and their corresponding sharp sequences. We subsequently develop a self-supervised cascaded framework to alleviate the issues of spike noise and spatial-resolution mismatching encountered in the deblurring model. With knowledge distillation and re-blurring loss, we further design a lightweight deblur network to generate high-quality sequences with brightness and texture consistency with the original input. Quantitative and qualitative experiments conducted on our real-world and synthetic datasets with spikes validate the superior generalization of the proposed framework. Our code, data and trained models will be available at \url{https://github.com/chenkang455/S-SDM}.

Unveiling the Potential of Spike Streams for Foreground Occlusion Removal from Densely Continuous Views

Jul 03, 2023

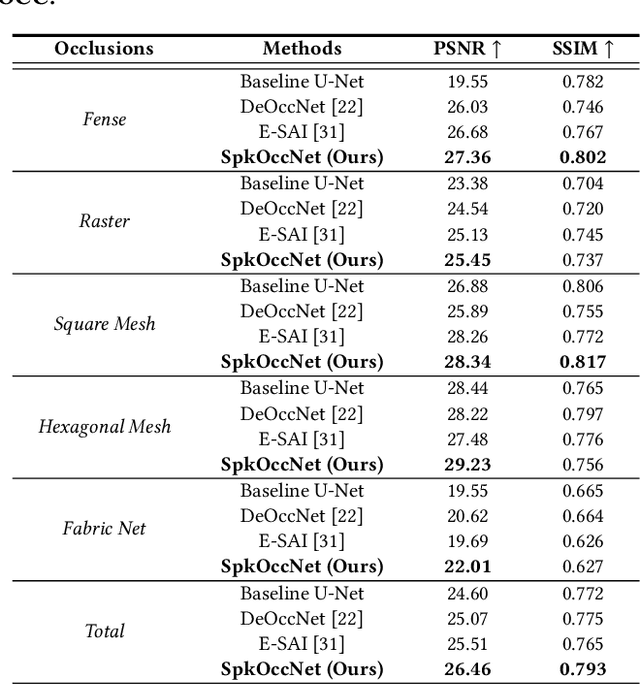

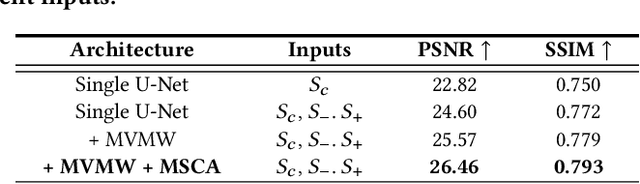

Abstract:The extraction of a clean background image by removing foreground occlusion holds immense practical significance, but it also presents several challenges. Presently, the majority of de-occlusion research focuses on addressing this issue through the extraction and synthesis of discrete images from calibrated camera arrays. Nonetheless, the restoration quality tends to suffer when faced with dense occlusions or high-speed motions due to limited perspectives and motion blur. To successfully remove dense foreground occlusion, an effective multi-view visual information integration approach is required. Introducing the spike camera as a novel type of neuromorphic sensor offers promising capabilities with its ultra-high temporal resolution and high dynamic range. In this paper, we propose an innovative solution for tackling the de-occlusion problem through continuous multi-view imaging using only one spike camera without any prior knowledge of camera intrinsic parameters and camera poses. By rapidly moving the spike camera, we continually capture the dense stream of spikes from the occluded scene. To process the spikes, we build a novel model \textbf{SpkOccNet}, in which we integrate information of spikes from continuous viewpoints within multi-windows, and propose a novel cross-view mutual attention mechanism for effective fusion and refinement. In addition, we contribute the first real-world spike-based dataset \textbf{S-OCC} for occlusion removal. The experimental results demonstrate that our proposed model efficiently removes dense occlusions in diverse scenes while exhibiting strong generalization.

Exploring Asymmetric Tunable Blind-Spots for Self-supervised Denoising in Real-World Scenarios

Mar 29, 2023

Abstract:Self-supervised denoising has attracted widespread attention due to its ability to train without clean images. However, noise in real-world scenarios is often spatially correlated, which causes many self-supervised algorithms based on the pixel-wise independent noise assumption to perform poorly on real-world images. Recently, asymmetric pixel-shuffle downsampling (AP) has been proposed to disrupt the spatial correlation of noise. However, downsampling introduces aliasing effects, and the post-processing to eliminate these effects can destroy the spatial structure and high-frequency details of the image, in addition to being time-consuming. In this paper, we systematically analyze downsampling-based methods and propose an Asymmetric Tunable Blind-Spot Network (AT-BSN) to address these issues. We design a blind-spot network with a freely tunable blind-spot size, using a large blind-spot during training to suppress local spatially correlated noise while minimizing damage to the global structure, and a small blind-spot during inference to minimize information loss. Moreover, we propose blind-spot self-ensemble and distillation of non-blind-spot network to further improve performance and reduce computational complexity. Experimental results demonstrate that our method achieves state-of-the-art results while comprehensively outperforming other self-supervised methods in terms of image texture maintaining, parameter count, computation cost, and inference time.

SpikeCV: Open a Continuous Computer Vision Era

Mar 21, 2023

Abstract:SpikeCV is a new open-source computer vision platform for the spike camera, which is a neuromorphic visual sensor that has developed rapidly in recent years. In the spike camera, each pixel position directly accumulates the light intensity and asynchronously fires spikes. The output binary spikes can reach a frequency of 40,000 Hz. As a new type of visual expression, spike sequence has high spatiotemporal completeness and preserves the continuous visual information of the external world. Taking advantage of the low latency and high dynamic range of the spike camera, many spike-based algorithms have made significant progress, such as high-quality imaging and ultra-high-speed target detection. To build up a community ecology for the spike vision to facilitate more users to take advantage of the spike camera, SpikeCV provides a variety of ultra-high-speed scene datasets, hardware interfaces, and an easy-to-use modules library. SpikeCV focuses on encapsulation for spike data, standardization for dataset interfaces, modularization for vision tasks, and real-time applications for challenging scenes. With the advent of the open-source Python ecosystem, modules of SpikeCV can be used as a Python library to fulfilled most of the numerical analysis needs of researchers. We demonstrate the efficiency of the SpikeCV on offline inference and real-time applications. The project repository address are \url{https://openi.pcl.ac.cn/Cordium/SpikeCV} and \url{https://github.com/Zyj061/SpikeCV

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge