Sergey Mechtaev

HoarePrompt: Structural Reasoning About Program Correctness in Natural Language

Mar 25, 2025

Abstract:While software requirements are often expressed in natural language, verifying the correctness of a program against natural language requirements is a hard and underexplored problem. Large language models (LLMs) are promising candidates for addressing this challenge, however our experience shows that they are ineffective in this task, often failing to detect even straightforward bugs. To address this gap, we introduce HoarePrompt, a novel approach that adapts fundamental ideas from program analysis and verification to natural language artifacts. Drawing inspiration from the strongest postcondition calculus, HoarePrompt employs a systematic, step-by-step process in which an LLM generates natural language descriptions of reachable program states at various points in the code. To manage loops, we propose few-shot-driven k-induction, an adaptation of the k-induction method widely used in model checking. Once program states are described, HoarePrompt leverages the LLM to assess whether the program, annotated with these state descriptions, conforms to the natural language requirements. For evaluating the quality of classifiers of program correctness with respect to natural language requirements, we constructed CoCoClaNeL, a challenging dataset of solutions to programming competition problems. Our experiments show that HoarePrompt improves the MCC by 62% compared to directly using Zero-shot-CoT prompts for correctness classification. Furthermore, HoarePrompt outperforms a classifier that assesses correctness via LLM-based test generation by increasing the MCC by 93%. The inductive reasoning mechanism contributes a 28% boost to MCC, underscoring its effectiveness in managing loops.

Fairness-guided SMT-based Rectification of Decision Trees and Random Forests

Nov 22, 2020

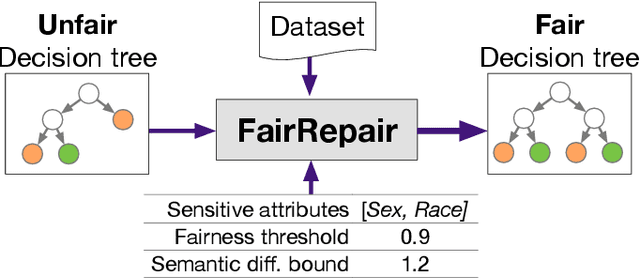

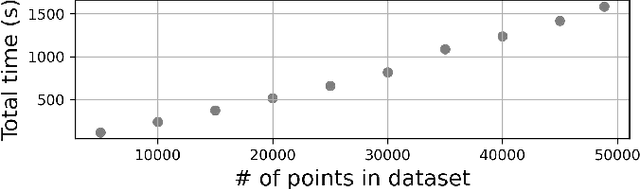

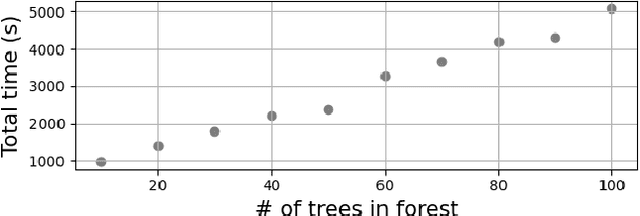

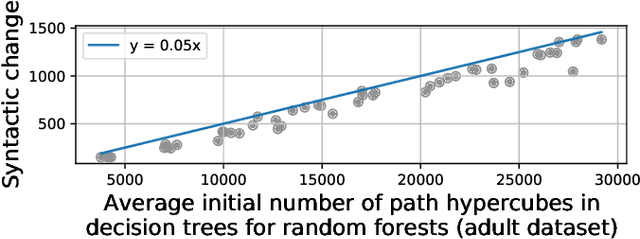

Abstract:Data-driven decision making is gaining prominence with the popularity of various machine learning models. Unfortunately, real-life data used in machine learning training may capture human biases, and as a result the learned models may lead to unfair decision making. In this paper, we provide a solution to this problem for decision trees and random forests. Our approach converts any decision tree or random forest into a fair one with respect to a specific data set, fairness criteria, and sensitive attributes. The \emph{FairRepair} tool, built based on our approach, is inspired by automated program repair techniques for traditional programs. It uses an SMT solver to decide which paths in the decision tree could have their outcomes flipped to improve the fairness of the model. Our experiments on the well-known adult dataset from UC Irvine demonstrate that FairRepair scales to realistic decision trees and random forests. Furthermore, FairRepair provides formal guarantees about soundness and completeness of finding a repair. Since our fairness-guided repair technique repairs decision trees and random forests obtained from a given (unfair) data-set, it can help to identify and rectify biases in decision-making in an organisation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge