Fairness-guided SMT-based Rectification of Decision Trees and Random Forests

Paper and Code

Nov 22, 2020

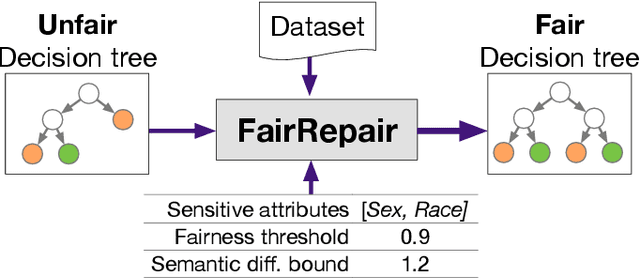

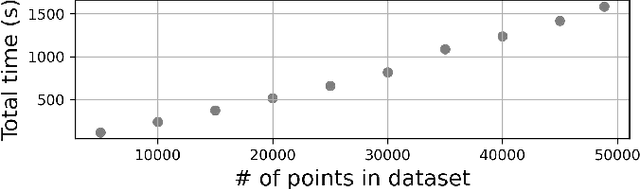

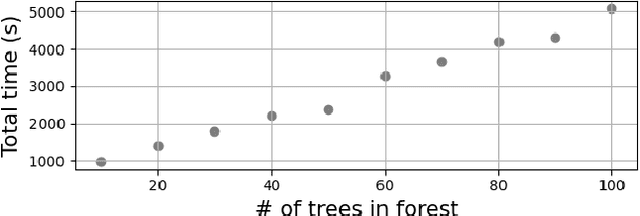

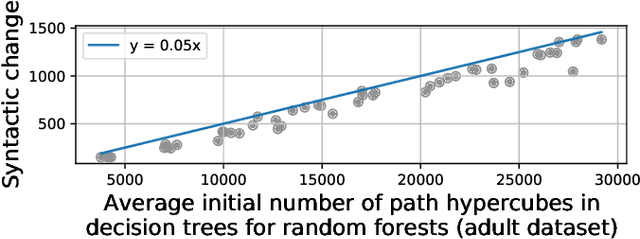

Data-driven decision making is gaining prominence with the popularity of various machine learning models. Unfortunately, real-life data used in machine learning training may capture human biases, and as a result the learned models may lead to unfair decision making. In this paper, we provide a solution to this problem for decision trees and random forests. Our approach converts any decision tree or random forest into a fair one with respect to a specific data set, fairness criteria, and sensitive attributes. The \emph{FairRepair} tool, built based on our approach, is inspired by automated program repair techniques for traditional programs. It uses an SMT solver to decide which paths in the decision tree could have their outcomes flipped to improve the fairness of the model. Our experiments on the well-known adult dataset from UC Irvine demonstrate that FairRepair scales to realistic decision trees and random forests. Furthermore, FairRepair provides formal guarantees about soundness and completeness of finding a repair. Since our fairness-guided repair technique repairs decision trees and random forests obtained from a given (unfair) data-set, it can help to identify and rectify biases in decision-making in an organisation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge