Scott Coull

Efficient Malware Analysis Using Metric Embeddings

Dec 05, 2022

Abstract: In this paper, we explore the use of metric learning to embed Windows PE files in a low-dimensional vector space for downstream use in a variety of applications, including malware detection, family classification, and malware attribute tagging. Specifically, we enrich labeling on malicious and benign PE files using computationally expensive, disassembly-based malicious capabilities. Using these capabilities, we derive several different types of metric embeddings utilizing an embedding neural network trained via contrastive loss, Spearman rank correlation, and combinations thereof. We then examine performance on a variety of transfer tasks performed on the EMBER and SOREL datasets, demonstrating that for several tasks, low-dimensional, computationally efficient metric embeddings maintain performance with little decay, which offers the potential to quickly retrain for a variety of transfer tasks at significantly reduced storage overhead. We conclude with an examination of practical considerations for the use of our proposed embedding approach, such as robustness to adversarial evasion and introduction of task-specific auxiliary objectives to improve performance on mission critical tasks.

Exploring Backdoor Poisoning Attacks Against Malware Classifiers

Apr 11, 2020

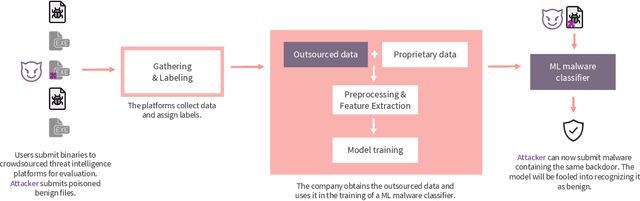

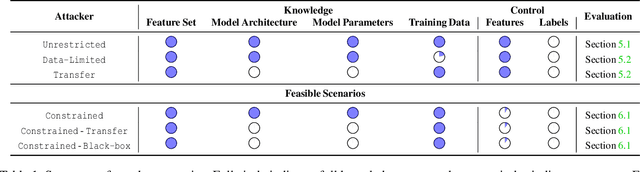

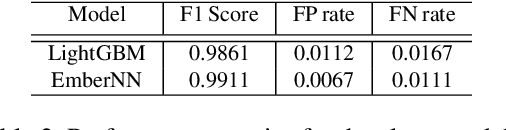

Abstract: Current training pipelines for machine learning (ML) based malware classification rely on crowdsourced threat feeds, exposing a natural attack injection point. We study for the first time the susceptibility of ML malware classifiers to backdoor poisoning attacks, specifically focusing on challenging "clean label" attacks where attackers do not control the sample labeling process. We propose the use of techniques from explainable machine learning to guide the selection of relevant features and their values to create a watermark in a model-agnostic fashion. Using a dataset of 800,000 Windows binaries, we demonstrate effective attacks against gradient boosting decision trees and a neural network model for malware classification under various constraints imposed on the attacker. For example, an attacker injecting just 1% poison samples in the training process can achieve a success rate greater than 97% by crafting a watermark of 8 features out of more than 2,300 available features. To demonstrate the feasibility of our backdoor attacks in practice, we create a watermarking utility for Windows PE files that preserves the binary's functionality. Finally, we experiment with potential defensive strategies and show the difficulties of completely defending against these powerful attacks, especially when the attacks blend in with the legitimate sample distribution.
