Sanchita Basak

Data-Driven Optimization of Public Transit Schedule

Nov 30, 2019

Abstract: Bus transit systems are the backbone of public transportation in the United States. An important indicator of the quality of service in such infrastructures is on-time performance at stops, with published transit schedules playing an integral role in governing the level of success of the service. However, there are relatively few optimization architectures leveraging stochastic search that focus on optimizing bus timetables with the objective of maximizing the probability of bus arrivals at timepoints with delays within desired on-time ranges. In addition, there is a lack of substantial research considering monthly and seasonal variations of delay patterns integrated with such optimization strategies. To address these gaps, this paper makes the following contributions to the corpus of studies on transit on-time performance optimization: (a) an unsupervised clustering mechanism is presented which groups months with similar seasonal delay patterns, (b) the problem is formulated as a single-objective optimization task and a greedy algorithm, a genetic algorithm (GA) as well as a particle swarm optimization (PSO) algorithm are employed to solve it, (c) a detailed discussion of empirical results comparing the algorithms is provided and a sensitivity analysis on hyper-parameters of the heuristics is presented along with execution times, which will help practitioners looking at similar problems. The analyses conducted are insightful in the local context of improving public transit scheduling in the Nashville metro region as well as informative from a global perspective as an elaborate case study which builds upon the growing corpus of empirical studies using nature-inspired approaches to transit schedule optimization.
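
The objective described in this abstract (maximizing the share of arrivals whose delay falls inside an on-time range) can be illustrated with a small sketch. This is not the paper's formulation: the function names, the toy arrival data, and the on-time window of 1 minute early to 6 minutes late are all assumptions chosen for illustration, and the search is a plain exhaustive pick over candidate times rather than the greedy, GA, or PSO solvers the paper compares.

```python
def on_time_fraction(actual_arrivals, scheduled, early=1.0, late=6.0):
    """Empirical share of arrivals with delay in [-early, +late] minutes.

    The window bounds are hypothetical defaults, not values from the paper.
    """
    if not actual_arrivals:
        raise ValueError("need at least one observed arrival")
    hits = sum(1 for a in actual_arrivals if -early <= a - scheduled <= late)
    return hits / len(actual_arrivals)


def best_scheduled_time(actual_arrivals, candidates, early=1.0, late=6.0):
    """Pick the candidate scheduled time with the highest on-time fraction."""
    return max(
        candidates,
        key=lambda s: on_time_fraction(actual_arrivals, s, early, late),
    )


# Toy data: observed arrival times at one timepoint, in minutes past the hour.
arrivals = [10, 12, 13, 15, 20]
candidate_times = [8, 10, 14]
chosen = best_scheduled_time(arrivals, candidate_times)
```

With the toy data, a scheduled time of 10 places four of the five arrivals inside the assumed window, so it is preferred over 8 and 14.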

A Review of Deep Learning with Special Emphasis on Architectures, Applications and Recent Trends

May 30, 2019

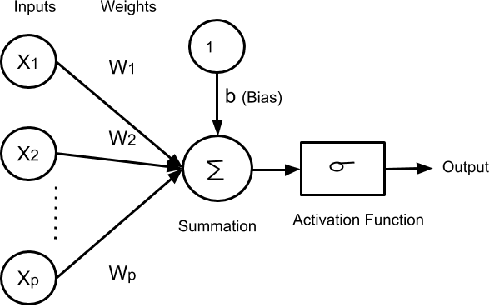

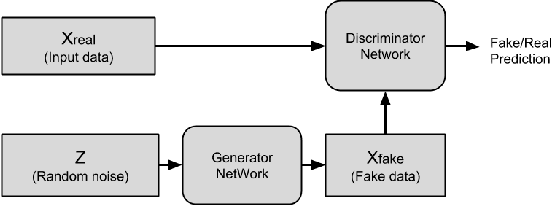

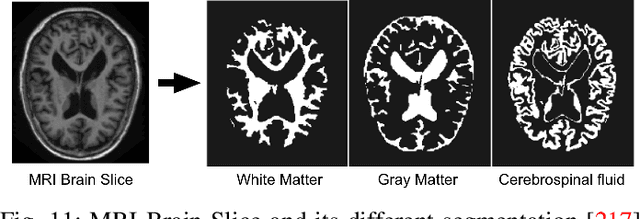

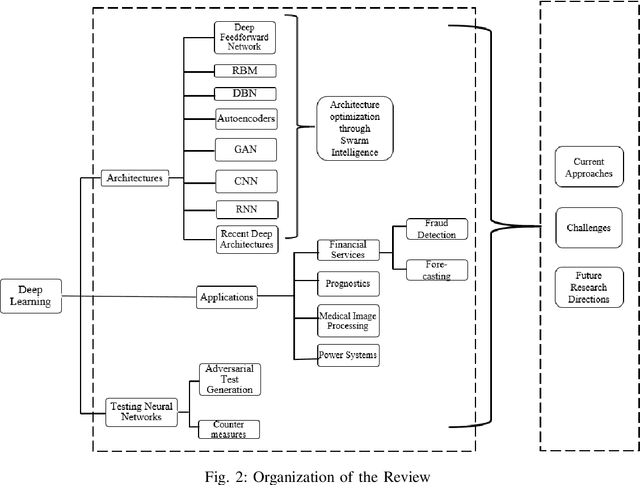

Abstract: Deep learning (DL) has solved a problem that as recently as five years ago was thought by many to be intractable: the automatic recognition of patterns in data. It can do so with accuracy that often surpasses that of human beings. It has solved problems beyond the realm of traditional, hand-crafted machine learning algorithms and captured the imagination of practitioners trying to make sense of the flood of data that now inundates our society. As public awareness of the efficacy of DL increases, so does the desire to make use of it. But even for highly trained professionals it can be daunting to approach the rapidly increasing body of knowledge produced by experts in the field. Where does one start? How does one determine if a particular model is applicable to their problem? How does one train and deploy such a network? A primer on the subject can be a good place to start. With that in mind, we present an overview of some of the key multilayer ANNs that comprise DL. We also discuss some new automatic architecture optimization protocols that use multi-agent approaches. Further, since guaranteeing system uptime is becoming critical to many computer applications, we include a section on using neural networks for fault detection and subsequent mitigation. This is followed by an exploratory survey of several application areas where DL has emerged as a game-changing technology: anomalous behavior detection in financial applications or in financial time-series forecasting, predictive and prescriptive analytics, medical image processing and analysis, and power systems research. The thrust of this review is to outline emerging areas of application-oriented research within the DL community as well as to provide a reference to researchers seeking to use it in their work for what it does best: statistical pattern recognition with unparalleled learning capacity and the ability to scale with information.

Chaotic Quantum Double Delta Swarm Algorithm using Chebyshev Maps: Theoretical Foundations, Performance Analyses and Convergence Issues

Nov 05, 2018

Abstract: The Quantum Double Delta Swarm (QDDS) algorithm is a new metaheuristic algorithm inspired by the convergence mechanism to the center of potential generated within a single well of a spatially co-located double-delta well setup. It mimics the wave nature of candidate positions in solution spaces and draws upon quantum mechanical interpretations much like other quantum-inspired computational intelligence paradigms. In this work, we introduce a Chebyshev map driven chaotic perturbation in the optimization phase of the algorithm to diversify weights placed on contemporary and historical, socially-optimal agents' solutions. We follow this up with a characterization of solution quality on a suite of 23 single-objective functions and carry out a comparative analysis with eight other related nature-inspired approaches. By comparing solution quality and successful runs over dynamic solution ranges, insights about the nature of convergence are obtained. A two-tailed t-test establishes the statistical significance of the solution data whereas Cohen's d and Hedges' g values provide a measure of effect sizes. We trace the trajectory of the fittest pseudo-agent over all function evaluations to comment on the dynamics of the system and prove that the proposed algorithm is theoretically globally convergent under the assumptions adopted for proofs of other closely-related random search algorithms.
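
For readers unfamiliar with the Chebyshev map mentioned above, the following is a minimal sketch of the standard map x_{k+1} = cos(n * arccos(x_k)) on [-1, 1]. How QDDS actually injects this sequence into its weights is not stated in the abstract, so the rescaling to [0, 1] in `to_unit_weights` is purely an assumption for illustration, as are the function names and the chosen seed and order.

```python
import math


def chebyshev_sequence(x0, order, n):
    """Return n iterates of the Chebyshev map x_{k+1} = cos(order * arccos(x_k))."""
    if not -1.0 <= x0 <= 1.0:
        raise ValueError("x0 must lie in [-1, 1]")
    xs = []
    x = x0
    for _ in range(n):
        x = math.cos(order * math.acos(x))
        # Clamp so the next acos call never sees a value outside [-1, 1].
        x = max(-1.0, min(1.0, x))
        xs.append(x)
    return xs


def to_unit_weights(xs):
    """Rescale map outputs from [-1, 1] to [0, 1] (an illustrative choice)."""
    return [(x + 1.0) / 2.0 for x in xs]


# Hypothetical usage: 50 chaotic weights from seed 0.7 and map order 4.
chaotic = chebyshev_sequence(0.7, order=4, n=50)
weights = to_unit_weights(chaotic)
```

For order 2 the map reduces to the polynomial 2x^2 - 1, which gives a quick sanity check of the trigonometric form.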

A Data-driven Prognostic Architecture for Online Monitoring of Hard Disks Using Deep LSTM Networks

Oct 21, 2018

Abstract: With the advent of pervasive cloud computing technologies, service reliability and availability are becoming major concerns, especially as we start to integrate cyber-physical systems with the cloud networks. A number of smart and connected community systems such as emergency response systems utilize cloud networks to analyze real-time data streams and provide context-sensitive decision support. Improving overall system reliability requires us to study all aspects of this end-to-end distributed system, including the backend data servers. In this paper, we describe a bi-layered prognostic architecture for predicting the Remaining Useful Life (RUL) of components of backend servers, especially those that are subjected to degradation. We show that our architecture is especially good at predicting the remaining useful life of hard disks. A Deep LSTM Network is used as the backbone of this fast, data-driven decision framework and dynamically captures the pattern of the incoming data. In the article, we discuss the architecture of the neural network and describe the mechanisms to choose the various hyper-parameters. We describe the challenges faced in extracting effective training sets from highly unorganized and class-imbalanced big data and establish methods for online predictions with extensive data pre-processing, feature extraction and validation through test sets with unknown remaining useful lives of the hard disks. Our algorithm performs especially well in predicting RUL near the critical zone of a device approaching failure. The proposed architecture is able to predict whether a disk is going to fail in the next ten days with an average precision of 0.8435. In the future, we will extend this architecture to learn and predict the RUL of the edge devices in the end-to-end distributed systems of smart communities, taking into consideration context-sensitive external features such as weather.

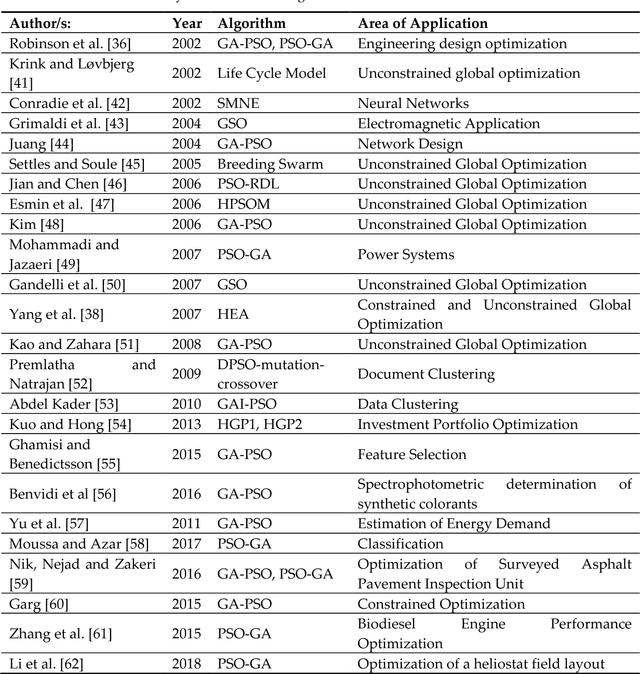

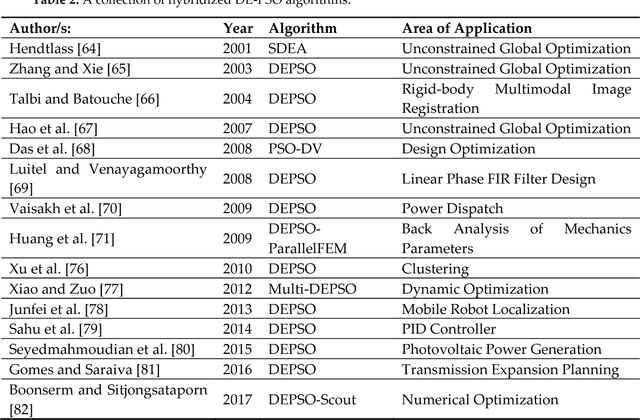

Particle Swarm Optimization: A survey of historical and recent developments with hybridization perspectives

Apr 15, 2018

Abstract: Particle Swarm Optimization (PSO) is a metaheuristic global optimization paradigm that has gained prominence in the last two decades due to its ease of application in unsupervised, complex multidimensional problems which cannot be solved using traditional deterministic algorithms. The canonical particle swarm optimizer is based on the flocking behavior and social co-operation of bird flocks and fish schools and draws heavily from the evolutionary behavior of these organisms. This paper provides a thorough survey of the PSO algorithm with special emphasis on the development, deployment and improvements of its most basic as well as some of the state-of-the-art implementations. Concepts and directions on choosing the inertia weight, constriction factor, cognition and social weights and perspectives on convergence, parallelization, elitism, niching and discrete optimization as well as neighborhood topologies are outlined. Hybridization attempts with other evolutionary and swarm paradigms in selected applications are covered and an up-to-date review is put forward for the interested reader.
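
As a concrete reference point for the terms above (inertia weight, cognition and social weights, fully connected neighborhood), here is a minimal sketch of the inertia-weight PSO update on a toy problem. It is not taken from the survey: the parameter values `w=0.7`, `c1=c2=1.5`, the swarm size, the iteration count, the position clamping, and the sphere test function are all illustrative assumptions.

```python
import random


def pso_minimize(f, dim, bounds, n_particles=30, n_iters=200,
                 w=0.7, c1=1.5, c2=1.5, seed=0):
    """Global-best PSO with an inertia weight; returns (best_position, best_value)."""
    rng = random.Random(seed)
    lo, hi = bounds
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_val = [f(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]

    for _ in range(n_iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Inertia term + cognitive pull (own best) + social pull (swarm best).
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                # Keep the particle inside the search box.
                pos[i][d] = min(hi, max(lo, pos[i][d] + vel[i][d]))
            val = f(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val


def sphere(x):
    """Toy objective with its minimum of 0 at the origin."""
    return sum(xi * xi for xi in x)


best, best_val = pso_minimize(sphere, dim=2, bounds=(-5.0, 5.0))
```

Every particle here sees the same swarm-wide best, which corresponds to the fully connected topology; ring or other neighborhood topologies would replace `gbest` with a per-particle neighborhood best.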

Data Clustering using a Hybrid of Fuzzy C-Means and Quantum-behaved Particle Swarm Optimization

Dec 15, 2017

Abstract: Fuzzy clustering has become a widely used data mining technique and plays an important role in grouping, traversing and selectively using data for user-specified applications. The deterministic Fuzzy C-Means (FCM) algorithm may result in suboptimal solutions when applied to multidimensional data in real-world, time-constrained problems. In this paper, the Quantum-behaved Particle Swarm Optimization (QPSO) with a fully connected topology is coupled with the Fuzzy C-Means Clustering algorithm and is tested on a suite of datasets from the UCI Machine Learning Repository. The global search ability of the QPSO algorithm helps in avoiding stagnation in local optima while the soft clustering approach of FCM helps to partition data based on membership probabilities. Clustering performance indices such as F-Measure, Accuracy, Quantization Error, Intercluster and Intracluster distances are reported for competitive techniques such as PSO K-Means, QPSO K-Means and QPSO FCM over all datasets considered. Experimental results indicate that QPSO FCM provides comparable and in most cases superior results when compared to the others.
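
The soft partitioning that FCM performs can be sketched with the standard membership update u_ij = 1 / sum_k (d_ij / d_ik)^(2/(m-1)), where d_ij is the distance from point i to center j and m is the fuzzifier. The snippet below covers only this membership step for fixed centers; it does not reproduce the QPSO coupling from the paper, and the function name, the fuzzifier default `m=2.0`, and the toy points are assumptions for illustration.

```python
import math


def fcm_memberships(points, centers, m=2.0):
    """Fuzzy C-Means membership matrix for fixed centers (one row per point)."""
    if m <= 1.0:
        raise ValueError("fuzzifier m must be greater than 1")
    exponent = 2.0 / (m - 1.0)
    memberships = []
    for p in points:
        dists = [math.dist(p, c) for c in centers]
        if any(d == 0.0 for d in dists):
            # Point coincides with one or more centers: share membership among them.
            zeros = [1.0 if d == 0.0 else 0.0 for d in dists]
            total = sum(zeros)
            memberships.append([z / total for z in zeros])
            continue
        row = [1.0 / sum((dj / dk) ** exponent for dk in dists) for dj in dists]
        memberships.append(row)
    return memberships


# Toy data: four points on a line and two fixed cluster centers.
toy_points = [(0.0, 0.0), (0.5, 0.0), (1.0, 0.0), (2.0, 0.0)]
toy_centers = [(0.0, 0.0), (2.0, 0.0)]
u = fcm_memberships(toy_points, toy_centers)
```

Each row sums to 1, and a point midway between the two centers receives equal membership in both, which is the behavior that distinguishes FCM from hard assignments such as K-Means.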
