Sameer Wagh

FALCON: Honest-Majority Maliciously Secure Framework for Private Deep Learning

Apr 05, 2020

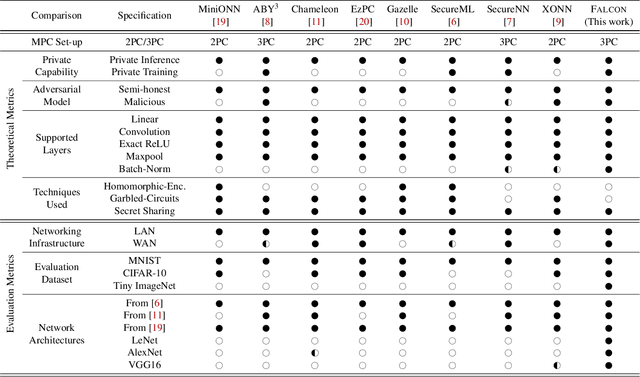

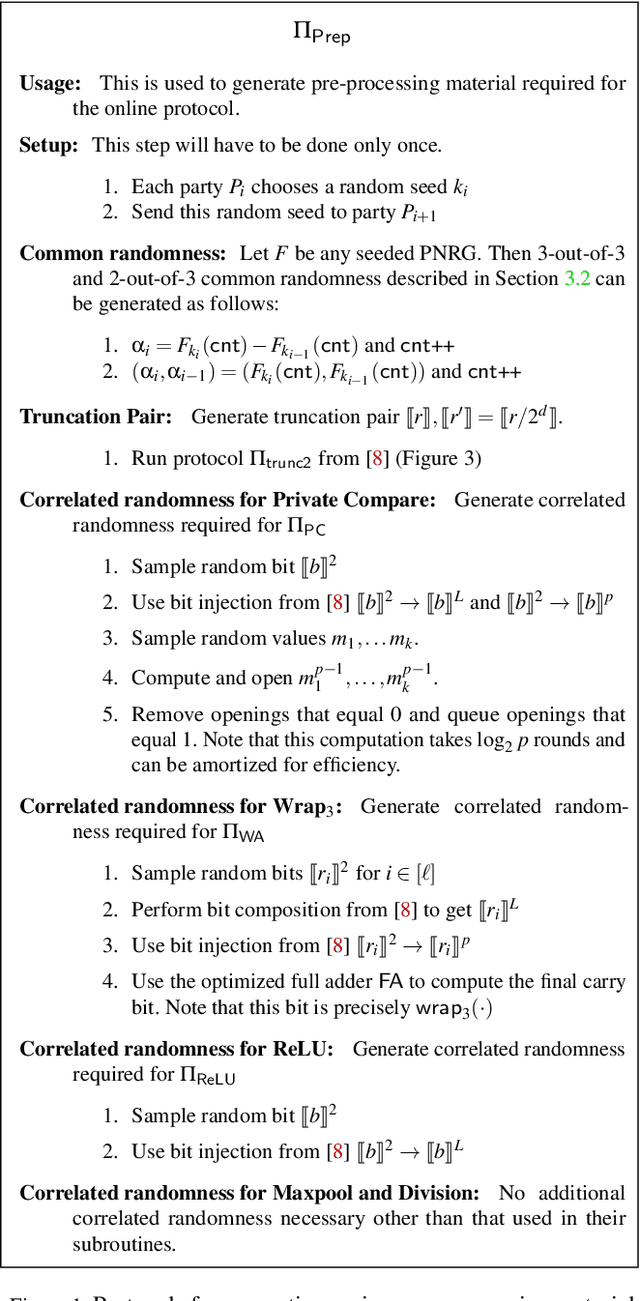

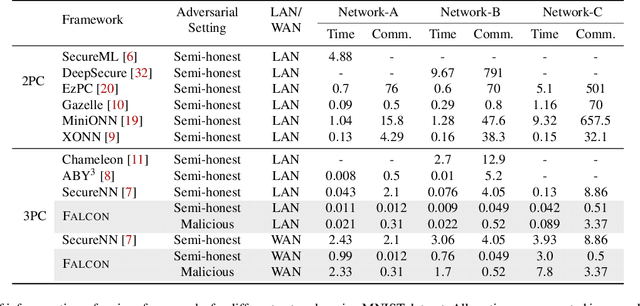

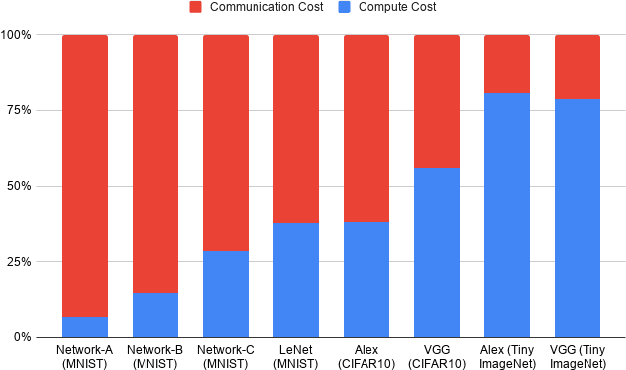

Abstract: This paper aims to enable training and inference of neural networks in a manner that protects the privacy of sensitive data. We propose FALCON, an end-to-end 3-party protocol for fast and secure computation of deep learning algorithms on large networks. FALCON presents three main advantages. First, it is highly expressive. To the best of our knowledge, it is the first secure framework to support high-capacity networks with over a hundred million parameters such as VGG16, as well as the first to support batch normalization, a critical component of deep learning that enables training of complex network architectures such as AlexNet. Next, FALCON guarantees security with abort against malicious adversaries, assuming an honest majority. It ensures that the protocol always completes with correct output for honest participants or aborts when it detects the presence of a malicious adversary. Lastly, FALCON presents new theoretical insights for protocol design that make it highly efficient and allow it to outperform existing secure deep learning solutions. Compared to prior art for private inference, we are about 8x faster than SecureNN (PETS '19) on average and comparable to ABY3 (CCS '18). We are about 16-200x more communication efficient than either of these. For private training, we are about 6x faster than SecureNN, 4.4x faster than ABY3, and about 2-60x more communication efficient. This is the first paper to show via experiments in the WAN setting that, for multi-party machine learning computations over large networks and datasets, compute operations dominate the overall latency, as opposed to communication.

Towards Probabilistic Verification of Machine Unlearning

Mar 09, 2020

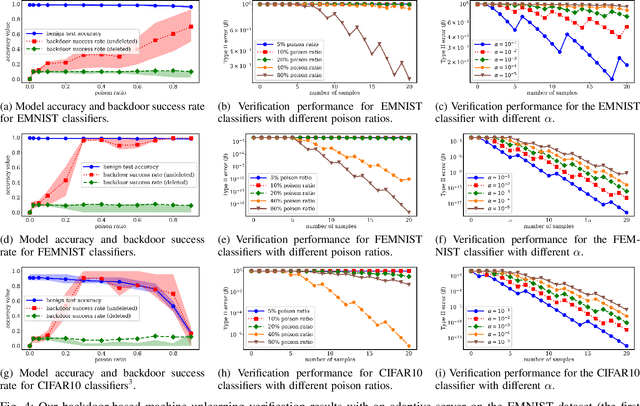

Abstract: The right to be forgotten, also known as the right to erasure, is the right of individuals to have their data erased from an entity storing it. The General Data Protection Regulation in the European Union legally solidified the status of this long-held notion. As a consequence, there is a growing need for the development of mechanisms whereby users can verify whether service providers comply with their deletion requests. In this work, we take the first step in proposing a formal framework to study the design of such verification mechanisms for data deletion requests -- also known as machine unlearning -- in the context of systems that provide machine learning as a service. We propose a backdoor-based verification mechanism and demonstrate its effectiveness in certifying data deletion with high confidence using the above framework. Our mechanism makes a novel use of backdoor attacks in ML as a basis for quantitatively inferring machine unlearning. In our mechanism, each user poisons part of its training data by injecting a user-specific backdoor trigger associated with a user-specific target label. The prediction of target labels on test samples with the backdoor trigger is then used as an indication of the user's data being used to train the ML model. We formalize the verification process as a hypothesis testing problem and provide theoretical guarantees on the statistical power of the hypothesis test. We experimentally demonstrate that our approach has minimal effect on the machine learning service but provides high-confidence verification of unlearning. We show that with a $30\%$ poison ratio and merely $20$ test queries, our verification mechanism has both false positive and false negative ratios below $10^{-5}$. Furthermore, we also show the effectiveness of our approach by testing it against an adaptive adversary that uses a state-of-the-art backdoor defense method.
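The hypothesis-testing view described in this abstract can be illustrated with a minimal sketch. It is not the paper's procedure: it assumes each backdoored test query independently returns the target label with probability `p_kept` when the user's data is still in the model and `q_deleted` when it has been removed, and it uses a simple count threshold as the decision rule. The function names, the threshold rule, and the numeric rates are all hypothetical and chosen only for illustration.

```python
from math import comb


def binom_cdf(k: int, n: int, p: float) -> float:
    """P[X <= k] for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))


def error_rates(n: int, p_kept: float, q_deleted: float, threshold: int):
    """Error rates of the rule: declare "deleted" if backdoor hits <= threshold.

    Assumed model (not taken from the paper): hits ~ Binomial(n, p_kept) when
    the data is still used, and hits ~ Binomial(n, q_deleted) when it is not.
    """
    # Data still in the model, but few enough hits that we declare "deleted".
    wrongly_accept_deletion = binom_cdf(threshold, n, p_kept)
    # Data really deleted, but enough hits that we declare "not deleted".
    wrongly_reject_deletion = 1.0 - binom_cdf(threshold, n, q_deleted)
    return wrongly_accept_deletion, wrongly_reject_deletion


def best_threshold(n: int, p_kept: float, q_deleted: float) -> int:
    """Threshold in 0..n-1 that minimizes the larger of the two error rates."""
    return min(range(n), key=lambda t: max(error_rates(n, p_kept, q_deleted, t)))


if __name__ == "__main__":
    # Hypothetical rates: 90% backdoor success if data is kept, 10% otherwise.
    n, p_kept, q_deleted = 20, 0.9, 0.1
    t = best_threshold(n, p_kept, q_deleted)
    accept_err, reject_err = error_rates(n, p_kept, q_deleted, t)
    print(f"threshold={t} accept_err={accept_err:.2e} reject_err={reject_err:.2e}")
```

Under this simplified model, both error rates shrink quickly as the number of queries grows whenever `p_kept` and `q_deleted` are well separated, which is the intuition behind needing only a small number of test queries.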
