Sameer Malik

RAVU: Retrieval Augmented Video Understanding with Compositional Reasoning over Graph

May 06, 2025

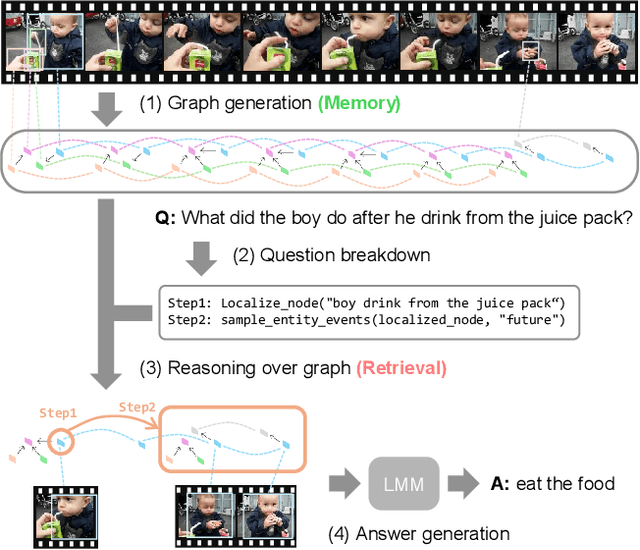

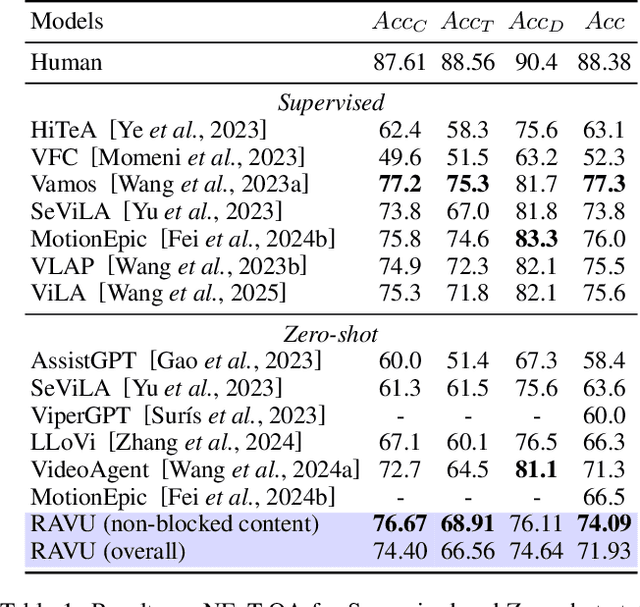

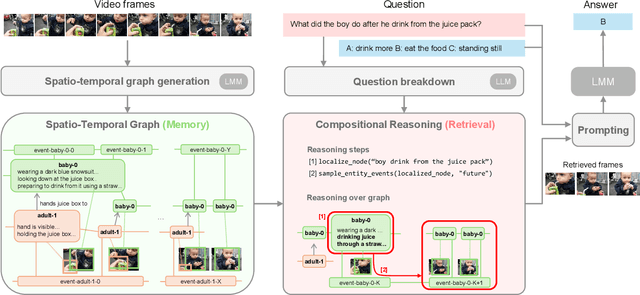

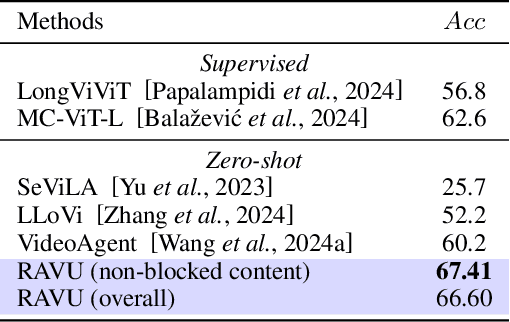

Abstract: Comprehending long videos remains a significant challenge for Large Multi-modal Models (LMMs). Current LMMs struggle to process videos lasting minutes to hours because they lack explicit memory and retrieval mechanisms. To address this limitation, we propose RAVU (Retrieval Augmented Video Understanding), a novel framework for video understanding enhanced by retrieval with compositional reasoning over a spatio-temporal graph. We construct a graph representation of the video, capturing both spatial and temporal relationships between entities. This graph serves as a long-term memory, allowing us to track objects and their actions across time. To answer complex queries, we decompose each query into a sequence of reasoning steps and execute these steps on the graph, retrieving the relevant key information. Our approach enables more accurate understanding of long videos, particularly for queries that require multi-hop reasoning and tracking objects across frames. Our approach demonstrates superior performance with a limited number of retrieved frames (5-10) compared with other SOTA methods and baselines on two major video QA datasets, NExT-QA and EgoSchema.
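As a rough illustration of the idea described in the abstract, the sketch below stores (frame, subject, relation, object) facts in a toy graph and answers a "what happened next" query in two reasoning steps. It is a minimal sketch, not the RAVU implementation: the `VideoGraph` class, its methods, the `answer_what_next` helper, and the example facts are all hypothetical names and data chosen for illustration.

```python
from collections import defaultdict


class VideoGraph:
    """Toy spatio-temporal graph (hypothetical): relations indexed by (entity, frame)."""

    def __init__(self):
        # edges[(subject, frame)] -> list of (relation, object)
        self.edges = defaultdict(list)
        # frames_of[entity] -> set of frames in which the entity appears
        self.frames_of = defaultdict(set)

    def add_relation(self, frame, subject, relation, obj):
        self.edges[(subject, frame)].append((relation, obj))
        self.frames_of[subject].add(frame)
        self.frames_of[obj].add(frame)

    def find(self, subject, relation):
        """Return (frame, object) pairs where `subject` has `relation`, in time order."""
        hits = []
        for frame in sorted(self.frames_of[subject]):
            for rel, obj in self.edges.get((subject, frame), []):
                if rel == relation:
                    hits.append((frame, obj))
        return hits

    def after(self, subject, frame):
        """Return (frame, relation, object) events of `subject` later than `frame`."""
        events = []
        for f in sorted(self.frames_of[subject]):
            if f > frame:
                for rel, obj in self.edges.get((subject, f), []):
                    events.append((f, rel, obj))
        return events


def answer_what_next(graph, subject, relation, obj):
    """Decompose 'what did X do after <relation> <obj>?' into two graph steps."""
    # Step 1: locate the anchor event in the graph.
    anchors = [f for f, o in graph.find(subject, relation) if o == obj]
    if not anchors:
        return None, []
    anchor = anchors[0]
    # Step 2: hop forward in time to the subject's next event.
    later = graph.after(subject, anchor)
    if not later:
        return None, [anchor]
    next_frame, next_rel, next_obj = later[0]
    # Only the two frames touched by the reasoning steps are retrieved.
    return (next_rel, next_obj), [anchor, next_frame]


g = VideoGraph()
g.add_relation(3, "man", "enters", "kitchen")
g.add_relation(12, "man", "picks up", "cup")
g.add_relation(30, "man", "drinks from", "cup")
g.add_relation(45, "man", "puts down", "cup")

result, frames = answer_what_next(g, "man", "picks up", "cup")
print(result, frames)
```

In this toy setting, only the frames reached by the reasoning steps would be passed on to a downstream model, which mirrors the abstract's point about answering with a small number of retrieved frames.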

Low Light Video Enhancement by Learning on Static Videos with Cross-Frame Attention

Oct 09, 2022

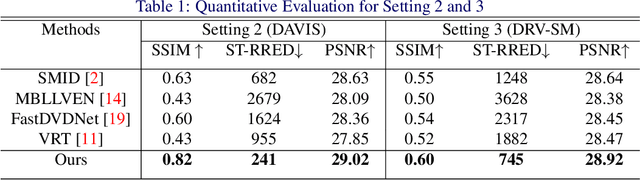

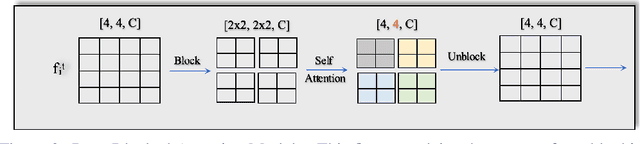

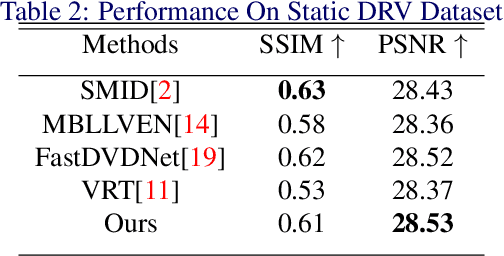

Abstract: The design of deep learning methods for low light video enhancement remains a challenging problem owing to the difficulty in capturing low light and ground truth video pairs. This is particularly hard in the context of dynamic scenes or moving cameras, where a long-exposure ground truth cannot be captured. We approach this problem by training a model on static videos such that the model can generalize to dynamic videos. Existing methods adopting this approach operate frame by frame and do not exploit the relationships among neighbouring frames. We overcome this limitation through a self-cross dilated attention module that can effectively learn to use information from neighbouring frames even when the dynamics between frames differ between training and test time. We validate our approach through experiments on multiple datasets and show that our method outperforms other state-of-the-art video enhancement algorithms when trained only on static videos.
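The general notion of attending from one frame to dilated positions in a neighbouring frame can be sketched as follows. This is a generic toy example and not the paper's module: the function name `cross_frame_attention`, the 1-D spatial layout, the `dilation` and `radius` parameters, and the use of raw features as both keys and values (no learned projections) are all assumptions made for illustration.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross_frame_attention(center, neighbour, position, dilation=2, radius=1):
    """Attend from one position of the center frame to dilated positions of a neighbour.

    center, neighbour: lists of feature vectors, one per position (1-D layout, assumed).
    Returns the attended feature and the attention weight per neighbour index.
    """
    query = center[position]
    # Dilated sampling: position + k * dilation for k in [-radius, radius], kept in bounds.
    idxs = [position + k * dilation for k in range(-radius, radius + 1)]
    idxs = [i for i in idxs if 0 <= i < len(neighbour)]
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, neighbour[i]) / scale for i in idxs])
    # Weighted sum of the neighbour features (features double as values here).
    out = [
        sum(w * neighbour[i][d] for w, i in zip(weights, idxs))
        for d in range(len(query))
    ]
    return out, dict(zip(idxs, weights))


center = [[0.0, 0.0], [0.0, 0.0], [1.0, 0.0], [0.0, 0.0], [0.0, 0.0]]
neighbour = [[1.0, 0.0], [0.0, 0.0], [0.0, 1.0], [0.0, 0.0], [0.0, 1.0]]

attended, weights = cross_frame_attention(center, neighbour, position=2)
print(attended, weights)
```

Because the sampled positions are spread out by the dilation factor, the query can match content that has shifted between frames, which is one plausible reason a dilated pattern helps when motion at test time differs from the static training videos.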

Quality Assessment of Low Light Restored Images: A Subjective Study and an Unsupervised Model

Feb 04, 2022

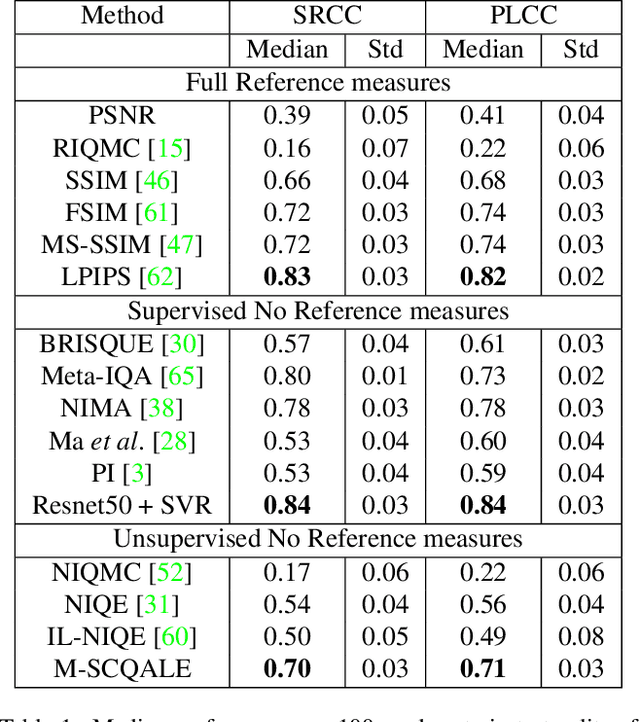

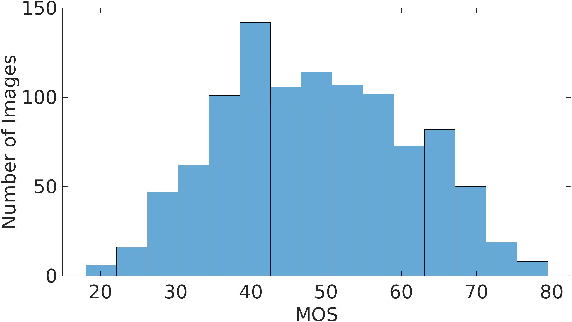

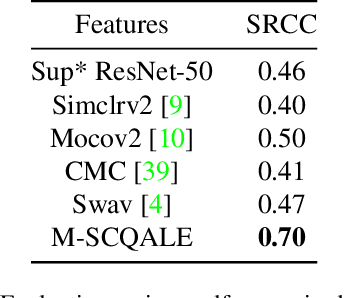

Abstract: The quality assessment (QA) of restored low light images is an important tool for benchmarking and improving low light restoration (LLR) algorithms. While several LLR algorithms exist, the subjective perception of the restored images has been much less studied. Challenges in capturing aligned low light and well-lit image pairs and in collecting a large number of human opinion scores of quality for training warrant the design of unsupervised (or opinion-unaware) no-reference (NR) QA methods. This work studies the subjective perception of low light restored images and their unsupervised NR QA. Our contributions are two-fold. We first create a dataset of restored low light images using various LLR methods, conduct a subjective QA study, and benchmark the performance of existing QA methods. We then present a self-supervised contrastive learning technique to extract distortion-aware features from the restored low light images. We show that these features can be effectively used to build an opinion-unaware image quality analyzer. Detailed experiments reveal that our unsupervised NR QA model achieves state-of-the-art performance among all such quality measures for low light restored images.
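The abstract does not state which contrastive objective is used. As a hedged illustration of contrastive feature learning in general, the sketch below computes an InfoNCE-style loss for one anchor feature. The choice of InfoNCE, the function name `info_nce`, the temperature value, and the toy feature vectors are all assumptions, and how positives and negatives are formed from restored images is not taken from the source.

```python
import math


def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    num = sum(x * y for x, y in zip(a, b))
    den = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return num / den


def info_nce(anchor, positive, negatives, temperature=0.1):
    """InfoNCE-style loss: -log softmax score of the positive among all candidates."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, n) / temperature for n in negatives]
    # Stable log-sum-exp over all candidate logits.
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_sum - logits[0]


anchor = [1.0, 0.1]

# Positive close to the anchor, negatives far from it: low loss.
good = info_nce(anchor, [0.9, 0.2], [[0.0, 1.0], [-1.0, 0.2]])

# Positive far from the anchor, a negative close to it: high loss.
bad = info_nce(anchor, [0.0, 1.0], [[0.9, 0.2], [-1.0, 0.2]])

print(good, bad)
```

Minimising such a loss pulls features of the positive pair together and pushes the negatives away, which is the general mechanism by which contrastive training can make features sensitive to differences such as distortion type.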
