Salih Selek

Multi-Label Classification with Generative AI Models in Healthcare: A Case Study of Suicidality and Risk Factors

Jul 22, 2025

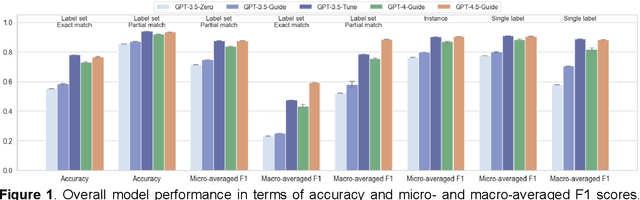

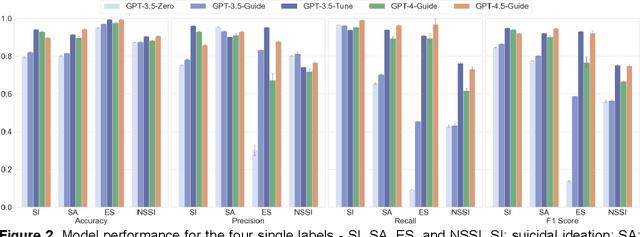

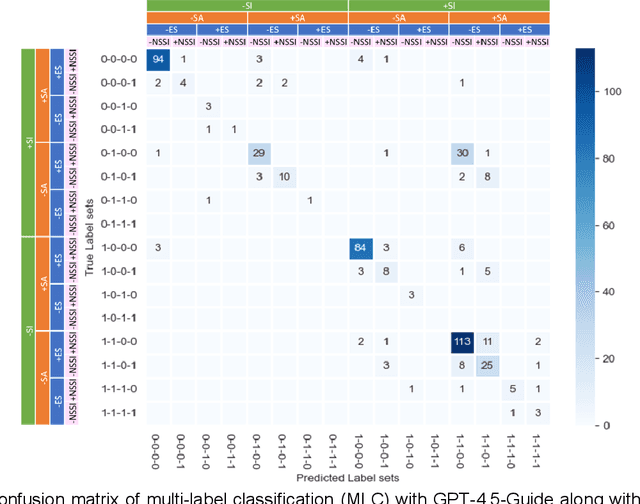

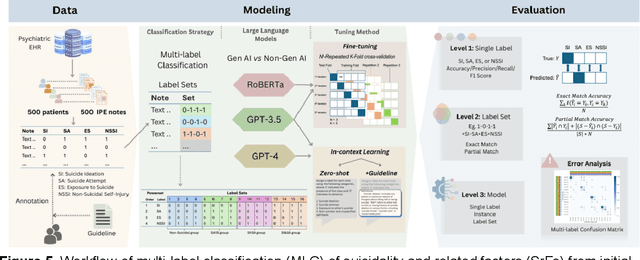

Abstract:Suicide remains a pressing global health crisis, with over 720,000 deaths annually and millions more affected by suicide ideation (SI) and suicide attempts (SA). Early identification of suicidality-related factors (SrFs), including SI, SA, exposure to suicide (ES), and non-suicidal self-injury (NSSI), is critical for timely intervention. While prior studies have applied AI to detect SrFs in clinical notes, most treat suicidality as a binary classification task, overlooking the complexity of cooccurring risk factors. This study explores the use of generative large language models (LLMs), specifically GPT-3.5 and GPT-4.5, for multi-label classification (MLC) of SrFs from psychiatric electronic health records (EHRs). We present a novel end to end generative MLC pipeline and introduce advanced evaluation methods, including label set level metrics and a multilabel confusion matrix for error analysis. Finetuned GPT-3.5 achieved top performance with 0.94 partial match accuracy and 0.91 F1 score, while GPT-4.5 with guided prompting showed superior performance across label sets, including rare or minority label sets, indicating a more balanced and robust performance. Our findings reveal systematic error patterns, such as the conflation of SI and SA, and highlight the models tendency toward cautious over labeling. This work not only demonstrates the feasibility of using generative AI for complex clinical classification tasks but also provides a blueprint for structuring unstructured EHR data to support large scale clinical research and evidence based medicine.

Suicide Phenotyping from Clinical Notes in Safety-Net Psychiatric Hospital Using Multi-Label Classification with Pre-Trained Language Models

Sep 27, 2024

Abstract:Accurate identification and categorization of suicidal events can yield better suicide precautions, reducing operational burden, and improving care quality in high-acuity psychiatric settings. Pre-trained language models offer promise for identifying suicidality from unstructured clinical narratives. We evaluated the performance of four BERT-based models using two fine-tuning strategies (multiple single-label and single multi-label) for detecting coexisting suicidal events from 500 annotated psychiatric evaluation notes. The notes were labeled for suicidal ideation (SI), suicide attempts (SA), exposure to suicide (ES), and non-suicidal self-injury (NSSI). RoBERTa outperformed other models using binary relevance (acc=0.86, F1=0.78). MentalBERT (F1=0.74) also exceeded BioClinicalBERT (F1=0.72). RoBERTa fine-tuned with a single multi-label classifier further improved performance (acc=0.88, F1=0.81), highlighting that models pre-trained on domain-relevant data and the single multi-label classification strategy enhance efficiency and performance. Keywords: EHR-based Phynotyping; Natural Language Processing; Secondary Use of EHR Data; Suicide Classification; BERT-based Model; Psychiatry; Mental Health

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge