Sajjad Abbasi

Classification of Diabetic Retinopathy Using Unlabeled Data and Knowledge Distillation

Sep 01, 2020

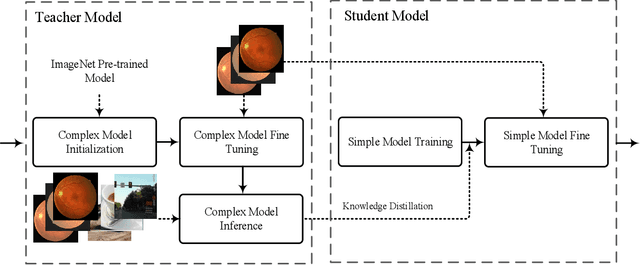

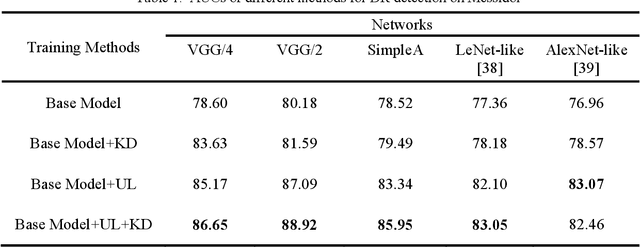

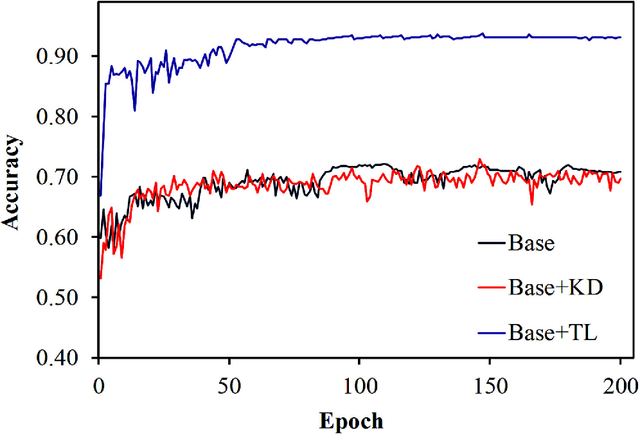

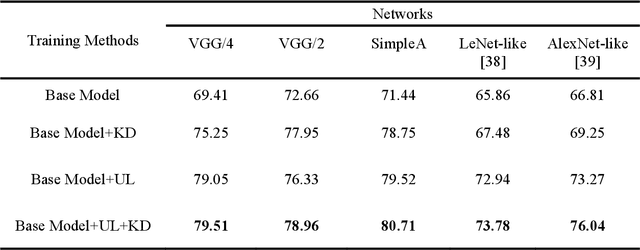

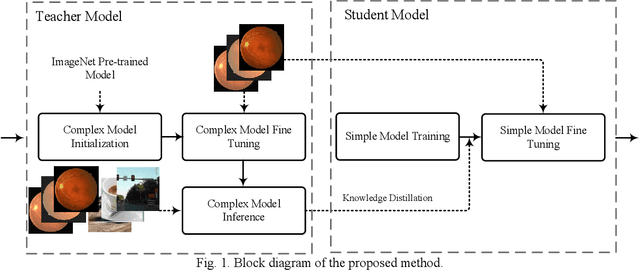

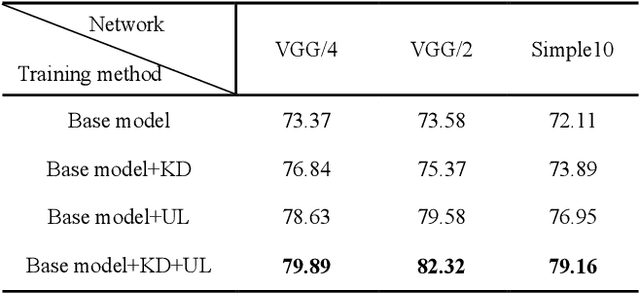

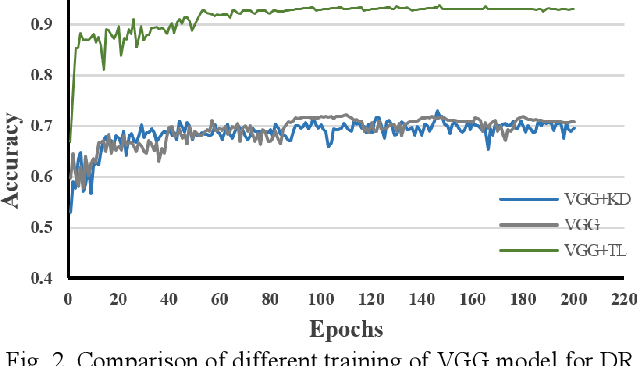

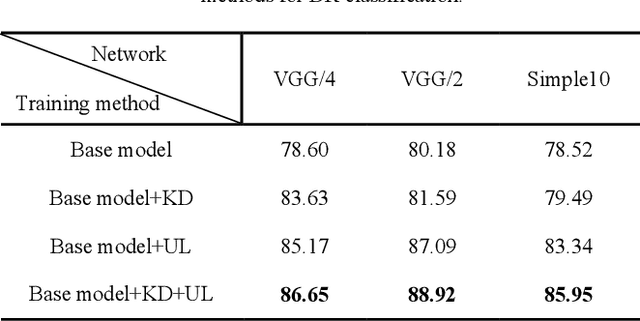

Abstract: Transfer learning allows transferring knowledge from a pre-trained model to another. However, it is limited by the constraint that the two models need to be architecturally similar. Knowledge distillation addresses some of the shortcomings of transfer learning by generalizing a complex model to a lighter model. However, knowledge distillation may not sufficiently distill some parts of the knowledge. In this paper, a novel knowledge distillation approach using transfer learning is proposed. The proposed method transfers the entire knowledge of a model to a new, smaller one. To accomplish this, unlabeled data are used in an unsupervised manner to transfer the maximum amount of knowledge to the new, slimmer model. The proposed method can be beneficial in medical image analysis, where labeled data are typically scarce. The proposed approach is evaluated on the classification of images for diagnosing diabetic retinopathy using two publicly available datasets, Messidor and EyePACS. Simulation results demonstrate that the approach is effective in transferring knowledge from a complex model to a lighter one. Furthermore, experimental results show that the performance of different small models improves significantly when unlabeled data and knowledge distillation are used.
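The abstract does not spell out how unlabeled data enter the pipeline, so the following is only a minimal sketch of one common reading: a trained teacher assigns soft labels to unlabeled samples, and the resulting pairs form a transfer set on which a smaller student could later be trained. The names `toy_teacher` and `build_transfer_set`, the two-class setup, and the feature vectors are all hypothetical and are not taken from the paper.

```python
import math


def softmax(logits):
    """Convert raw class scores into probabilities."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def toy_teacher(features):
    """Hypothetical stand-in for a trained teacher: returns two class logits."""
    score = sum(features)
    return [score, -score]


def build_transfer_set(unlabeled_samples, teacher):
    """Pair each unlabeled sample with the teacher's soft label for it."""
    return [(x, softmax(teacher(x))) for x in unlabeled_samples]


# Made-up feature vectors standing in for unlabeled images.
unlabeled = [[0.2, 0.9], [-1.0, 0.1], [0.0, 0.0]]
transfer_set = build_transfer_set(unlabeled, toy_teacher)

for features, soft_label in transfer_set:
    print(features, [round(p, 3) for p in soft_label])
```

No ground-truth annotation is needed at any point in this sketch: the teacher's output is the only supervision signal, which is why such a scheme suits settings where labeled data are scarce.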

Unlabeled Data Deployment for Classification of Diabetic Retinopathy Images Using Knowledge Transfer

Feb 09, 2020

Abstract: Convolutional neural networks (CNNs) are widely used in medical image processing. Medical images are plentiful, but annotated data are scarce. Transfer learning addresses the lack of labeled data and improves the training of CNNs. It can be used in many different medical applications; however, the model receiving the transferred knowledge must have the same size as the original network. Knowledge distillation was recently proposed to transfer the knowledge of one model to another and can cover the shortcomings of transfer learning. However, some parts of the knowledge may not be distilled by knowledge distillation. In this paper, a novel knowledge distillation method using transfer learning is proposed to transfer the whole knowledge of a model to another one. The proposed method can be beneficial and practical for medical image analysis, where only a small amount of labeled data is available. The proposed method is tested on diabetic retinopathy classification. Simulation results demonstrate that, using the proposed method, the knowledge of a large network can be transferred to a smaller model.

Modeling Teacher-Student Techniques in Deep Neural Networks for Knowledge Distillation

Dec 31, 2019

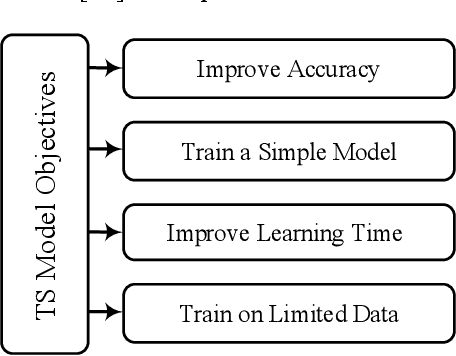

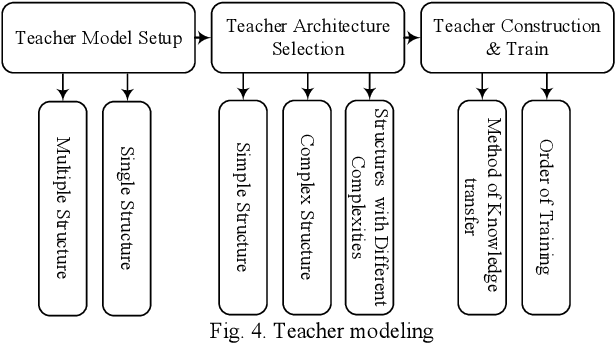

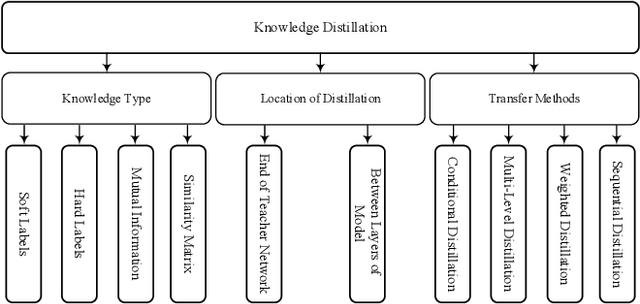

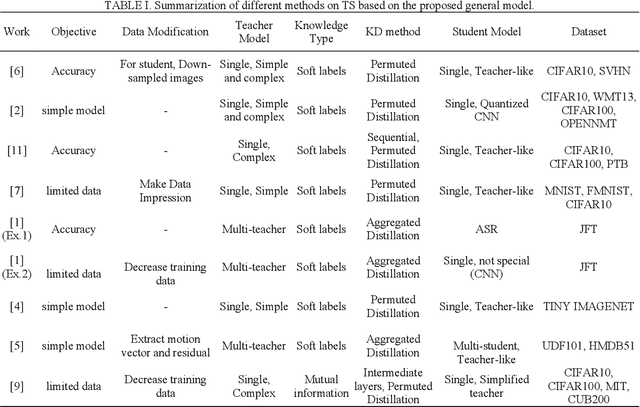

Abstract: Knowledge distillation (KD) is a new method for transferring the knowledge of a structure under training to another one. The typical application of KD is training a small model (the student) on soft labels produced by a complex model (the teacher). Because of the novel idea introduced in KD, its notion has recently been used in different methods, such as compression and processes that aim to enhance model accuracy. Although different techniques have been proposed in the area of KD, there is no model that generalizes KD techniques. In this paper, various studies in the scope of KD are investigated and analyzed to build a general model for KD. All the methods and techniques in KD can be summarized through the proposed model. By utilizing the proposed model, different methods in KD can be better investigated and explored. The advantages and disadvantages of different approaches in KD can be better understood, and new strategies for KD can be developed. Using the proposed model, different KD methods are represented in an abstract view.
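The teacher-student setup mentioned in the abstract can be illustrated with a small sketch of the widely used soft-label formulation: the teacher's logits are softened with a temperature, and the student is scored by the cross-entropy between its own softened output and those soft labels. This is a generic illustration, not the general model proposed in the paper; the function names, the temperature value, and the logits below are assumptions chosen for the example.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; a higher temperature gives softer labels."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """Cross-entropy of the student's softened output against the teacher's soft labels."""
    soft_labels = softmax(teacher_logits, temperature)
    student_probs = softmax(student_logits, temperature)
    return -sum(p * math.log(q) for p, q in zip(soft_labels, student_probs))


# Hypothetical logits for a single three-class example.
teacher_logits = [4.0, 1.0, 0.5]
good_student = [3.5, 1.2, 0.4]   # ranks the classes like the teacher
poor_student = [0.5, 1.0, 4.0]   # ranks the classes in reverse

print(distillation_loss(good_student, teacher_logits))
print(distillation_loss(poor_student, teacher_logits))
```

A student whose logits agree with the teacher's receives the lower loss, so minimizing this quantity pulls the student's predictions toward the teacher's soft labels.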
