Classification of Diabetic Retinopathy Using Unlabeled Data and Knowledge Distillation

Paper and Code

Sep 01, 2020

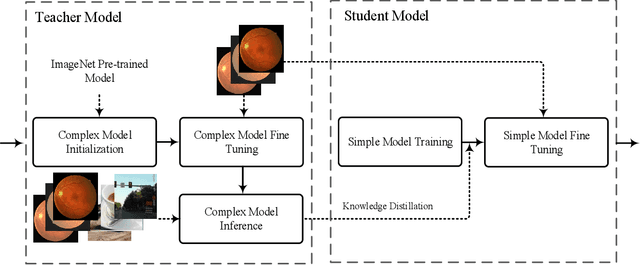

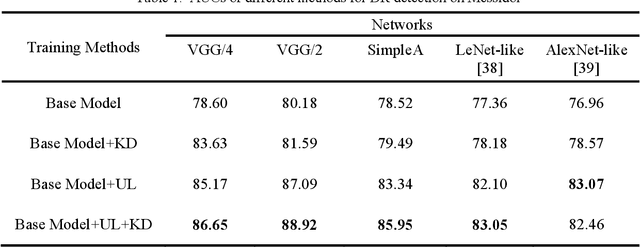

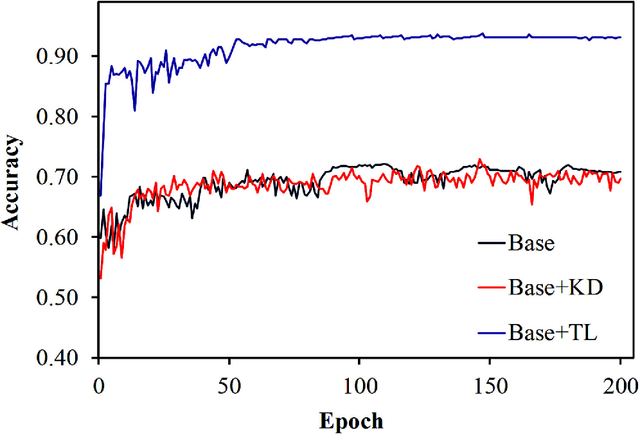

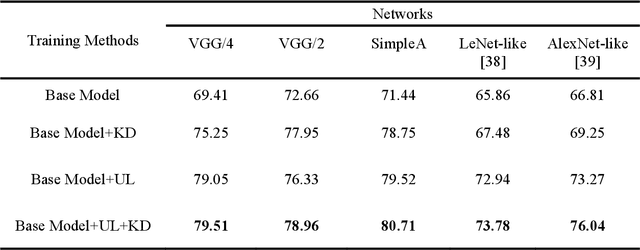

Knowledge distillation allows transferring knowledge from a pre-trained model to another. However, it suffers from limitations, and constraints related to the two models need to be architecturally similar. Knowledge distillation addresses some of the shortcomings associated with transfer learning by generalizing a complex model to a lighter model. However, some parts of the knowledge may not be distilled by knowledge distillation sufficiently. In this paper, a novel knowledge distillation approach using transfer learning is proposed. The proposed method transfers the entire knowledge of a model to a new smaller one. To accomplish this, unlabeled data are used in an unsupervised manner to transfer the maximum amount of knowledge to the new slimmer model. The proposed method can be beneficial in medical image analysis, where labeled data are typically scarce. The proposed approach is evaluated in the context of classification of images for diagnosing Diabetic Retinopathy on two publicly available datasets, including Messidor and EyePACS. Simulation results demonstrate that the approach is effective in transferring knowledge from a complex model to a lighter one. Furthermore, experimental results illustrate that the performance of different small models is improved significantly using unlabeled data and knowledge distillation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge