S. J. Ben Yoo

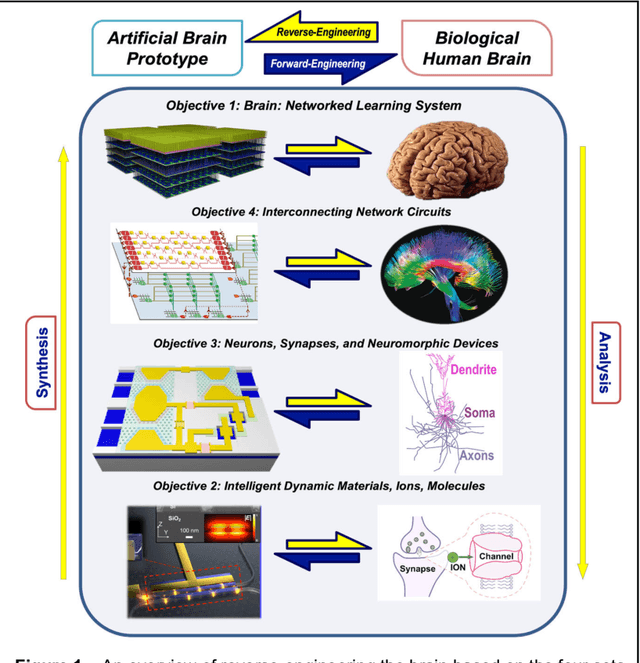

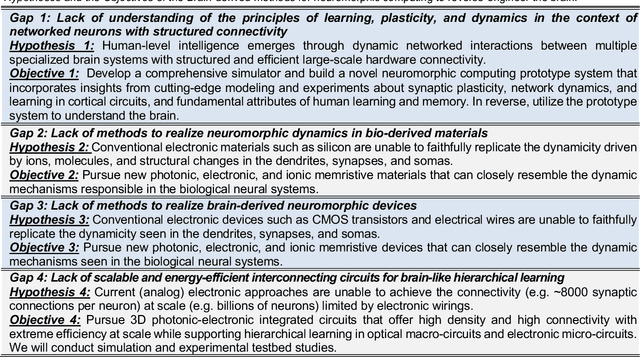

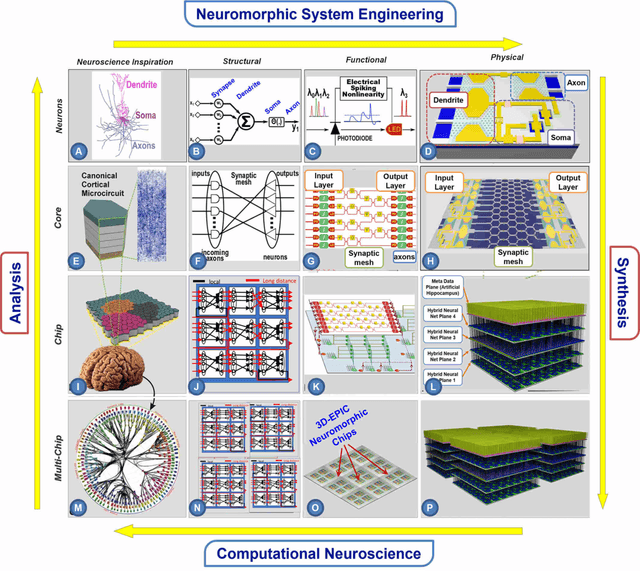

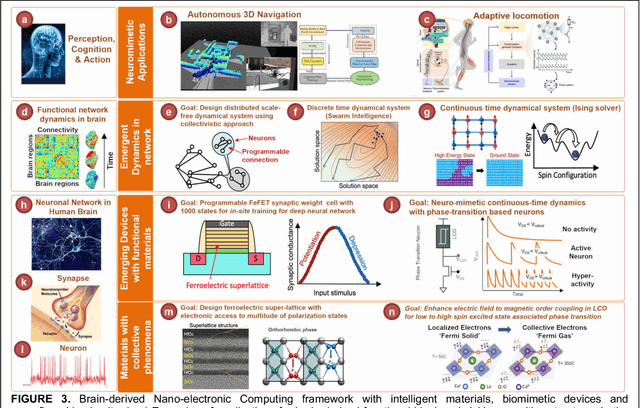

Towards Reverse-Engineering the Brain: Brain-Derived Neuromorphic Computing Approach with Photonic, Electronic, and Ionic Dynamicity in 3D integrated circuits

Mar 28, 2024

Abstract:The human brain has immense learning capabilities at extreme energy efficiencies and scale that no artificial system has been able to match. For decades, reverse engineering the brain has been one of the top priorities of science and technology research. Despite numerous efforts, conventional electronics-based methods have failed to match the scalability, energy efficiency, and self-supervised learning capabilities of the human brain. On the other hand, very recent progress in the development of new generations of photonic and electronic memristive materials, device technologies, and 3D electronic-photonic integrated circuits (3D EPIC ) promise to realize new brain-derived neuromorphic systems with comparable connectivity, density, energy-efficiency, and scalability. When combined with bio-realistic learning algorithms and architectures, it may be possible to realize an 'artificial brain' prototype with general self-learning capabilities. This paper argues the possibility of reverse-engineering the brain through architecting a prototype of a brain-derived neuromorphic computing system consisting of artificial electronic, ionic, photonic materials, devices, and circuits with dynamicity resembling the bio-plausible molecular, neuro/synaptic, neuro-circuit, and multi-structural hierarchical macro-circuits of the brain based on well-tested computational models. We further argue the importance of bio-plausible local learning algorithms applicable to the neuromorphic computing system that capture the flexible and adaptive unsupervised and self-supervised learning mechanisms central to human intelligence. Most importantly, we emphasize that the unique capabilities in brain-derived neuromorphic computing prototype systems will enable us to understand links between specific neuronal and network-level properties with system-level functioning and behavior.

Scalable Nanophotonic-Electronic Spiking Neural Networks

Aug 28, 2022

Abstract:Spiking neural networks (SNN) provide a new computational paradigm capable of highly parallelized, real-time processing. Photonic devices are ideal for the design of high-bandwidth, parallel architectures matching the SNN computational paradigm. Co-integration of CMOS and photonic elements allow low-loss photonic devices to be combined with analog electronics for greater flexibility of nonlinear computational elements. As such, we designed and simulated an optoelectronic spiking neuron circuit on a monolithic silicon photonics (SiPh) process that replicates useful spiking behaviors beyond the leaky integrate-and-fire (LIF). Additionally, we explored two learning algorithms with the potential for on-chip learning using Mach-Zehnder Interferometric (MZI) meshes as synaptic interconnects. A variation of Random Backpropagation (RPB) was experimentally demonstrated on-chip and matched the performance of a standard linear regression on a simple classification task. Meanwhile, the Contrastive Hebbian Learning (CHL) rule was applied to a simulated neural network composed of MZI meshes for a random input-output mapping task. The CHL-trained MZI network performed better than random guessing but does not match the performance of the ideal neural network (without the constraints imposed by the MZI meshes). Through these efforts, we demonstrate that co-integrated CMOS and SiPh technologies are well-suited to the design of scalable SNN computing architectures.

Izhikevich-Inspired Optoelectronic Neurons with Excitatory and Inhibitory Inputs for Energy-Efficient Photonic Spiking Neural Networks

May 03, 2021

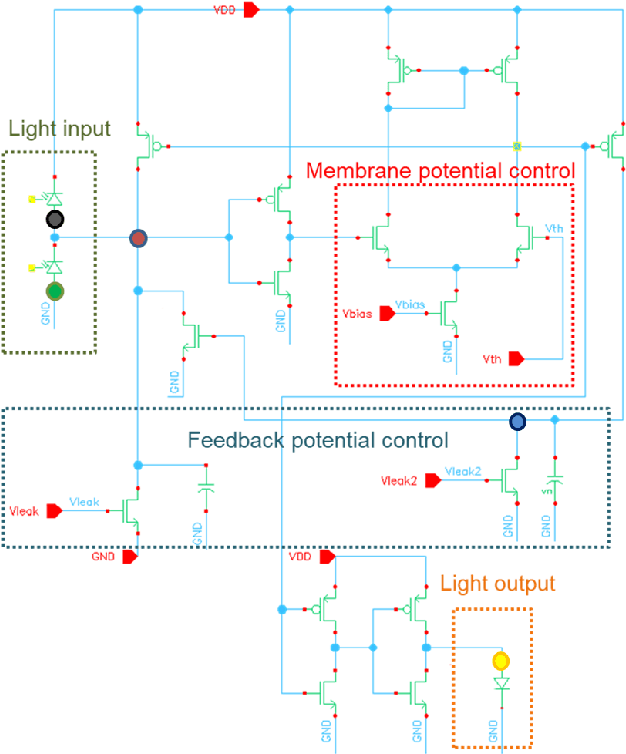

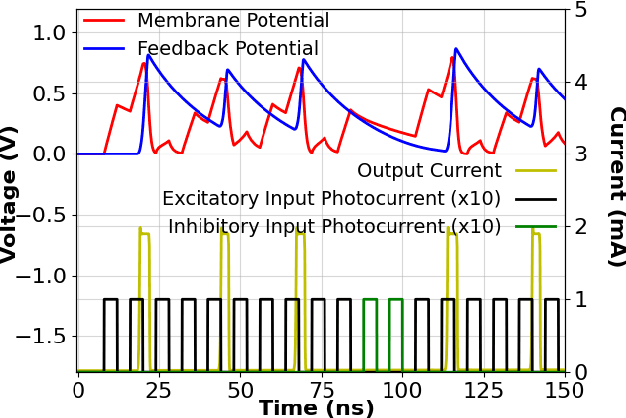

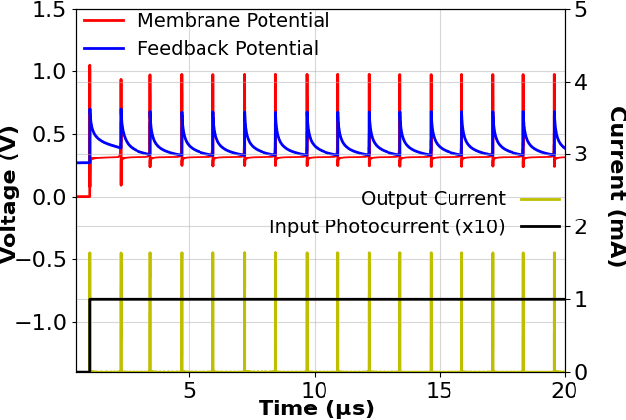

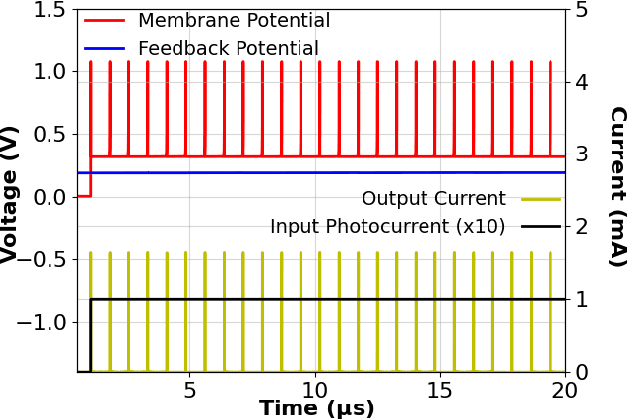

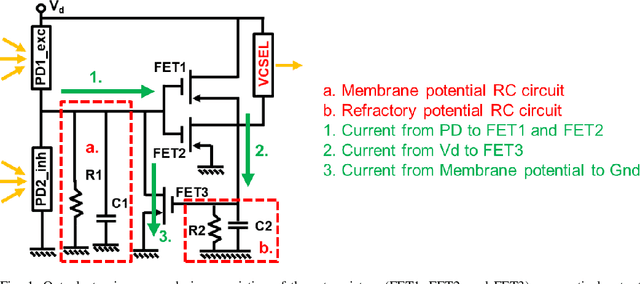

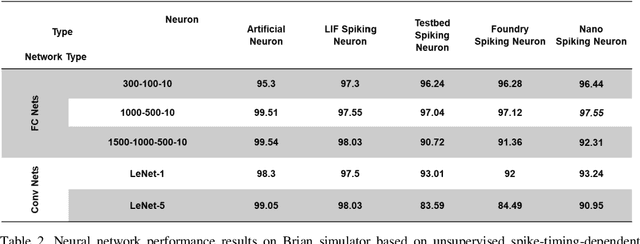

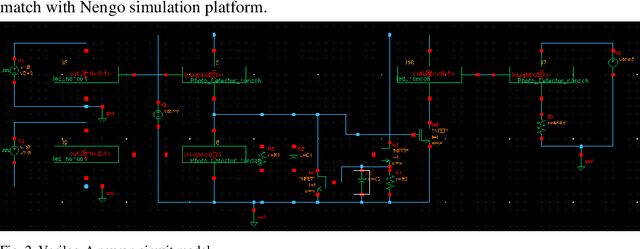

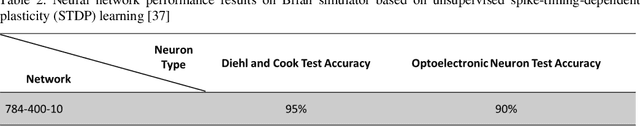

Abstract:We designed, prototyped, and experimentally demonstrated, for the first time to our knowledge, an optoelectronic spiking neuron inspired by the Izhikevich model incorporating both excitatory and inhibitory optical spiking inputs and producing optical spiking outputs accordingly. The optoelectronic neurons consist of three transistors acting as electrical spiking circuits, a vertical-cavity surface-emitting laser (VCSEL) for optical spiking outputs, and two photodetectors for excitatory and inhibitory optical spiking inputs. Additional inclusion of capacitors and resistors complete the Izhikevich-inspired optoelectronic neurons, which receive excitatory and inhibitory optical spikes as inputs from other optoelectronic neurons. We developed a detailed optoelectronic neuron model in Verilog-A and simulated the circuit-level operation of various cases with excitatory input and inhibitory input signals. The experimental results closely resemble the simulated results and demonstrate how the excitatory inputs trigger the optical spiking outputs while the inhibitory inputs suppress the outputs. Utilizing the simulated neuron model, we conducted simulations using fully connected (FC) and convolutional neural networks (CNN). The simulation results using MNIST handwritten digits recognition show 90% accuracy on unsupervised learning and 97% accuracy on a supervised modified FC neural network. We further designed a nanoscale optoelectronic neuron utilizing quantum impedance conversion where a 200 aJ/spike input can trigger the output from on-chip nanolasers with 10 fJ/spike. The nanoscale neuron can support a fanout of ~80 or overcome 19 dB excess optical loss while running at 10 GSpikes/second in the neural network, which corresponds to 100x throughput and 1000x energy-efficiency improvement compared to state-of-art electrical neuromorphic hardware such as Loihi and NeuroGrid.

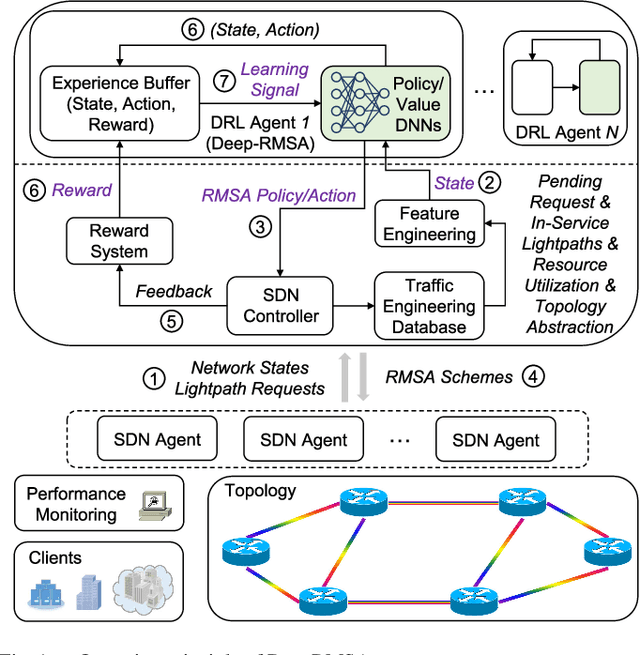

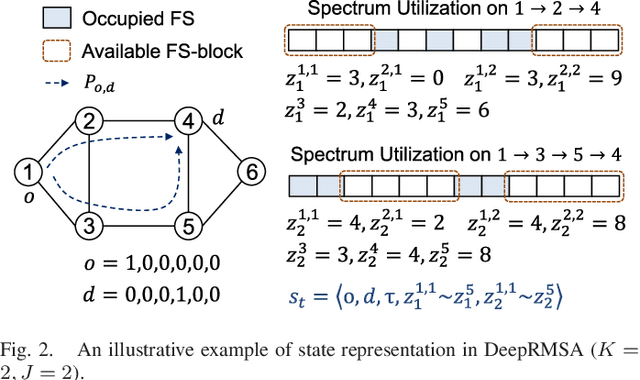

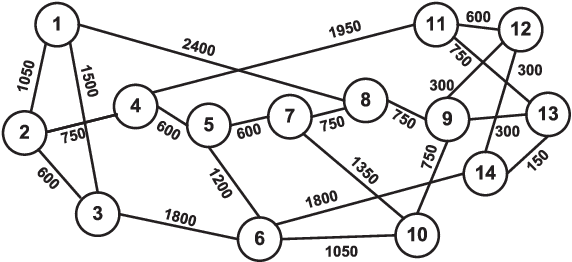

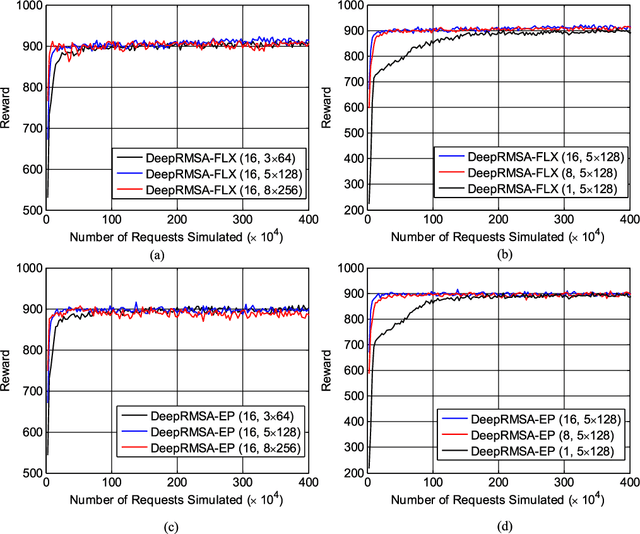

DeepRMSA: A Deep Reinforcement Learning Framework for Routing, Modulation and Spectrum Assignment in Elastic Optical Networks

May 15, 2019

Abstract:This paper proposes DeepRMSA, a deep reinforcement learning framework for routing, modulation and spectrum assignment (RMSA) in elastic optical networks (EONs). DeepRMSA learns the correct online RMSA policies by parameterizing the policies with deep neural networks (DNNs) that can sense complex EON states. The DNNs are trained with experiences of dynamic lightpath provisioning. We first modify the asynchronous advantage actor-critic algorithm and present an episode-based training mechanism for DeepRMSA, namely, DeepRMSA-EP. DeepRMSA-EP divides the dynamic provisioning process into multiple episodes (each containing the servicing of a fixed number of lightpath requests) and performs training by the end of each episode. The optimization target of DeepRMSA-EP at each step of servicing a request is to maximize the cumulative reward within the rest of the episode. Thus, we obviate the need for estimating the rewards related to unknown future states. To overcome the instability issue in the training of DeepRMSA-EP due to the oscillations of cumulative rewards, we further propose a window-based flexible training mechanism, i.e., DeepRMSA-FLX. DeepRMSA-FLX attempts to smooth out the oscillations by defining the optimization scope at each step as a sliding window, and ensuring that the cumulative rewards always include rewards from a fixed number of requests. Evaluations with the two sample topologies show that DeepRMSA-FLX can effectively stabilize the training while achieving blocking probability reductions of more than 20.3% and 14.3%, when compared with the baselines.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge