Ruola Ning

Deep Efficient End-to-end Reconstruction (DEER) Network for Low-dose Few-view Breast CT from Projection Data

Dec 16, 2019

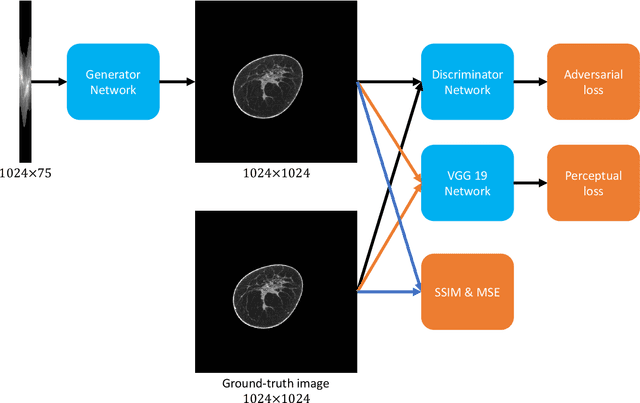

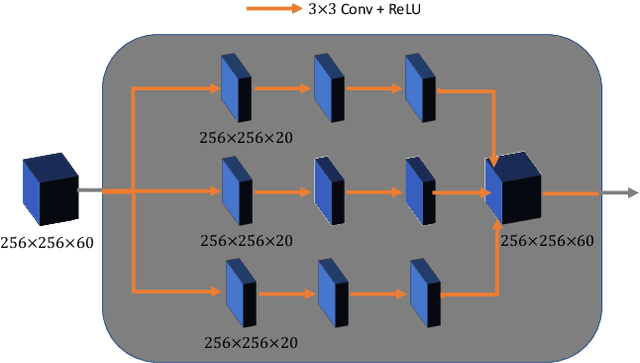

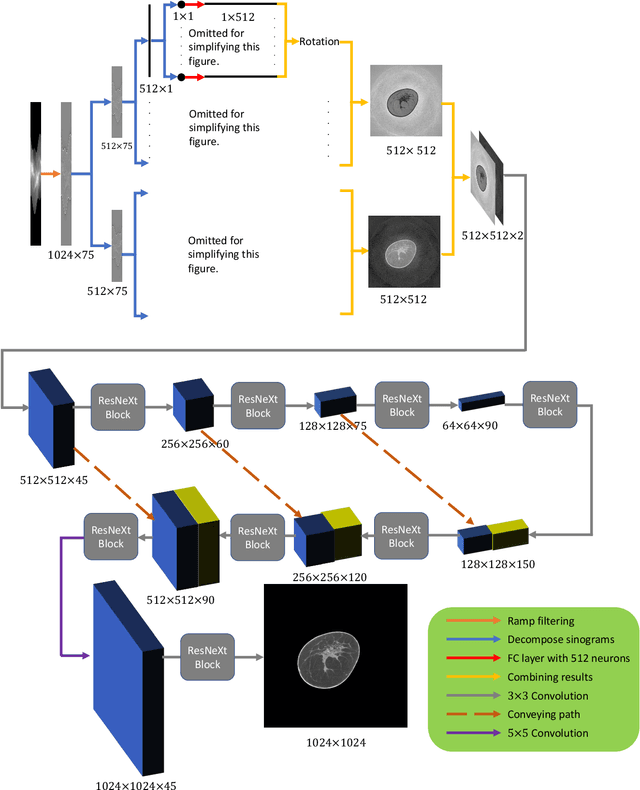

Abstract: Breast CT provides high-contrast image volumes with isotropic resolution, enabling detection of calcifications (down to a few hundred microns in size) and subtle density differences. Because breast tissue is sensitive to x-ray radiation, dose reduction is an important goal in breast CT, and low-dose few-view scanning is a main approach to it. In this article, we propose a Deep Efficient End-to-end Reconstruction (DEER) network for low-dose few-view breast CT. The major merits of our network include high dose efficiency, excellent image quality, and low model complexity. By design, the proposed network can learn the reconstruction process with as few as O(N) parameters, where N is the size of the image to be reconstructed; this is an improvement of orders of magnitude over state-of-the-art deep-learning-based reconstruction methods that map projection data directly to tomographic images. As a result, our method does not require expensive GPUs to train and run. Validated on a cone-beam breast CT dataset prepared by Koning Corporation on a commercial scanner, our method performs competitively with state-of-the-art reconstruction networks in terms of image quality.

Deep-learning-based Breast CT for Radiation Dose Reduction

Sep 25, 2019

Abstract: Cone-beam breast computed tomography (CT) provides true 3D breast images with isotropic resolution and high-contrast information, detecting calcifications as small as a few hundred microns and revealing subtle tissue differences. However, the breast is highly sensitive to x-ray radiation, so reducing the radiation dose is critically important. Few-view cone-beam CT uses only a fraction of the x-ray projection data acquired by standard cone-beam breast CT, enabling a significant reduction of the radiation dose. However, the insufficient sampling causes severe streak artifacts in CT images reconstructed using conventional methods. In this study, we propose a deep-learning-based method that uses a residual neural network model for image reconstruction and apply it to few-view breast CT to produce high-quality breast CT images. We evaluate the deep-learning-based image reconstruction using one third and one quarter, respectively, of the x-ray projection views of standard cone-beam breast CT. Using a clinical breast imaging dataset, we perform supervised learning to train the neural network to map few-view CT images to the corresponding full-view CT images. Experimental results show that the deep-learning-based image reconstruction method allows few-view breast CT to achieve a radiation dose <6 mGy per cone-beam CT scan, which is the threshold set by the FDA for mammographic screening.
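As a rough illustration of the one-third and one-quarter view settings mentioned above, the sketch below picks evenly spaced projection views from a full scan. The full-scan view count (`N_FULL = 300`), the even spacing, and the helper names are all assumptions made for illustration; the abstract specifies none of them. The dose estimate further assumes that dose scales linearly with the number of views at a fixed per-view exposure.

```python
from typing import List


def select_views(n_full: int, keep_every: int) -> List[int]:
    """Return indices of evenly spaced views kept from a full scan.

    Hypothetical helper: the abstract does not say how views are chosen.
    """
    if n_full <= 0 or keep_every <= 0:
        raise ValueError("n_full and keep_every must be positive")
    return list(range(0, n_full, keep_every))


def relative_dose(n_kept: int, n_full: int) -> float:
    """Dose relative to the full scan, ASSUMING dose is proportional
    to the number of views (fixed exposure per view)."""
    return n_kept / n_full


N_FULL = 300  # hypothetical full-scan view count, not taken from the abstract

for keep_every in (3, 4):
    views = select_views(N_FULL, keep_every)
    frac = relative_dose(len(views), N_FULL)
    print(f"every {keep_every}th view: {len(views)} views, relative dose {frac:.2f}")
```

The few-view images reconstructed from such a subset would then serve as network inputs, with the full-view reconstructions as training targets.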

Dual Network Architecture for Few-view CT -- Trained on ImageNet Data and Transferred for Medical Imaging

Jul 17, 2019

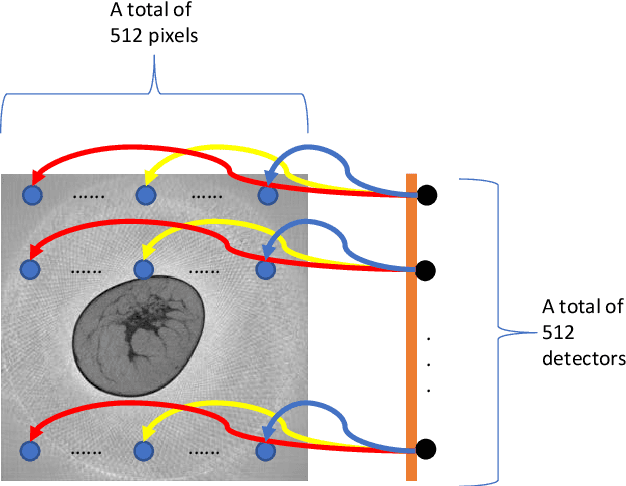

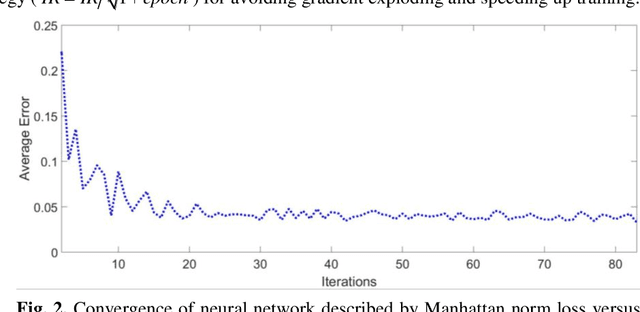

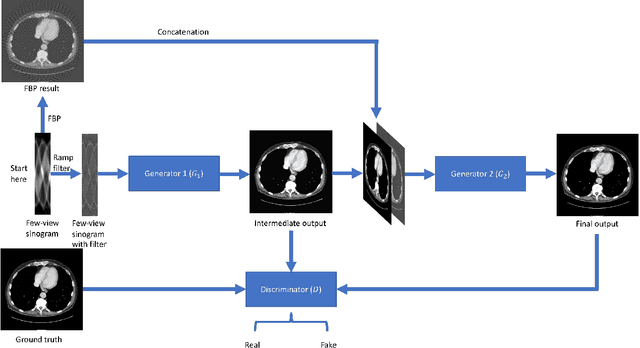

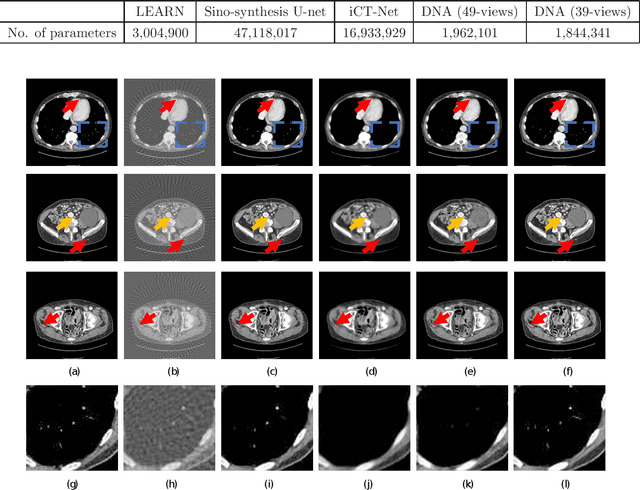

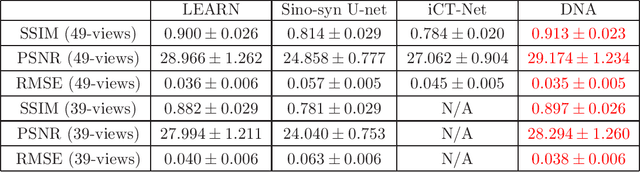

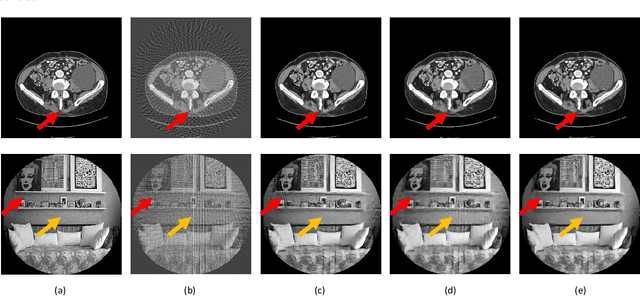

Abstract: X-ray computed tomography (CT) reconstructs cross-sectional images from projection data. However, the ionizing X-ray radiation associated with CT scanning might induce cancer and genetic damage, which raises public concern, so the reduction of radiation dose has attracted major attention. Few-view CT image reconstruction is an important approach to reducing the radiation dose. Recently, data-driven algorithms have shown great potential for solving the few-view CT problem. In this paper, we develop a dual network architecture (DNA) for reconstructing images directly from sinograms. In the proposed DNA method, a point-based fully-connected layer learns the backprojection process, requiring significantly less memory than the prior art and using O(C*N*N_c) parameters, where N and N_c denote the dimension of the reconstructed images and the number of projections, respectively, and C is an adjustable parameter that can be set as low as 1. Our experimental results demonstrate that DNA performs competitively with other state-of-the-art methods. Interestingly, natural images can be used to pre-train DNA to avoid overfitting when the amount of real patient images is limited.
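To give a feel for the O(C*N*N_c) scaling quoted above, the sketch below compares it with a plain dense layer that connects every sinogram sample to every image pixel. It assumes that N is the side length of a square N x N image, that the constant hidden in the O-notation is 1, and that biases are ignored. The detector count `n_det` and the example sizes are hypothetical and do not come from the abstract.

```python
def dense_layer_params(n: int, n_views: int, n_det: int) -> int:
    """Weights of a fully-connected map from a whole sinogram
    (n_views * n_det samples) to a whole n x n image, biases ignored."""
    return (n * n) * (n_views * n_det)


def point_based_params(n: int, n_views: int, c: int = 1) -> int:
    """Parameter count following the C*N*N_c scaling from the abstract,
    ASSUMING a hidden constant of 1 and N = image side length."""
    return c * n * n_views


# Hypothetical example sizes (not from the abstract).
n, n_views, n_det = 512, 75, 512

dense = dense_layer_params(n, n_views, n_det)
point = point_based_params(n, n_views, c=1)
print(f"dense layer: {dense:,} weights")
print(f"C*N*N_c    : {point:,} weights")
print(f"ratio      : {dense // point:,}x")
```

Under these assumptions the dense mapping needs on the order of N * n_det times more weights, which is why a direct sinogram-to-image dense layer is so memory hungry.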
