Rukshan Wijesinghe

Neural Mixture Models with Expectation-Maximization for End-to-end Deep Clustering

Jul 06, 2021

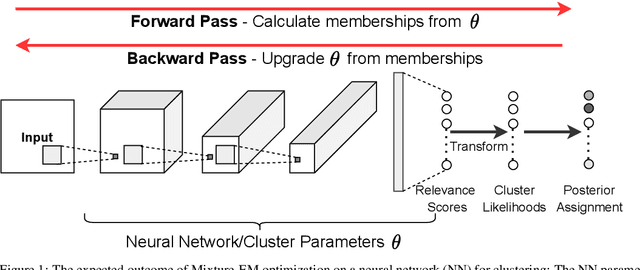

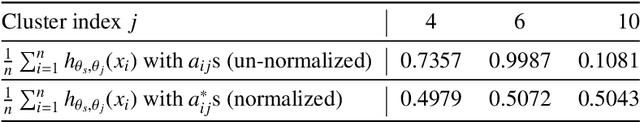

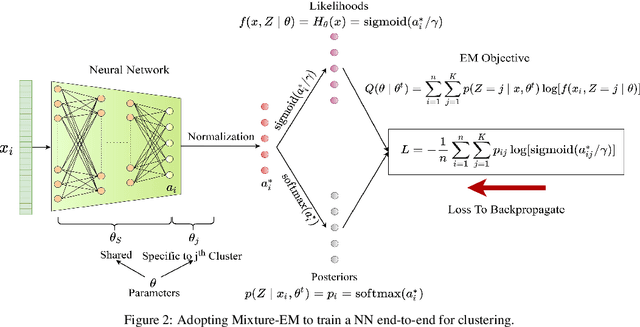

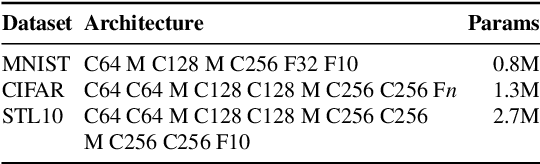

Abstract:Any clustering algorithm must synchronously learn to model the clusters and allocate data to those clusters in the absence of labels. Mixture model-based methods model clusters with pre-defined statistical distributions and allocate data to those clusters based on the cluster likelihoods. They iteratively refine those distribution parameters and member assignments following the Expectation-Maximization (EM) algorithm. However, the cluster representability of such hand-designed distributions that employ a limited amount of parameters is not adequate for most real-world clustering tasks. In this paper, we realize mixture model-based clustering with a neural network where the final layer neurons, with the aid of an additional transformation, approximate cluster distribution outputs. The network parameters pose as the parameters of those distributions. The result is an elegant, much-generalized representation of clusters than a restricted mixture of hand-designed distributions. We train the network end-to-end via batch-wise EM iterations where the forward pass acts as the E-step and the backward pass acts as the M-step. In image clustering, the mixture-based EM objective can be used as the clustering objective along with existing representation learning methods. In particular, we show that when mixture-EM optimization is fused with consistency optimization, it improves the sole consistency optimization performance in clustering. Our trained networks outperform single-stage deep clustering methods that still depend on k-means, with unsupervised classification accuracy of 63.8% in STL10, 58% in CIFAR10, 25.9% in CIFAR100, and 98.9% in MNIST.

Transferring Domain Knowledge with an Adviser in Continuous Tasks

Feb 16, 2021

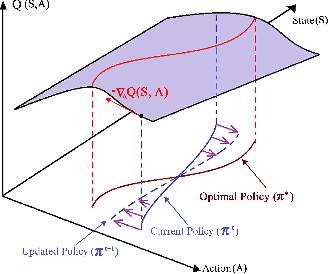

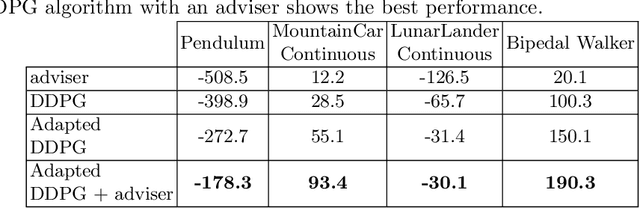

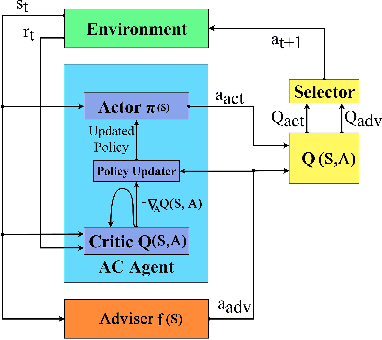

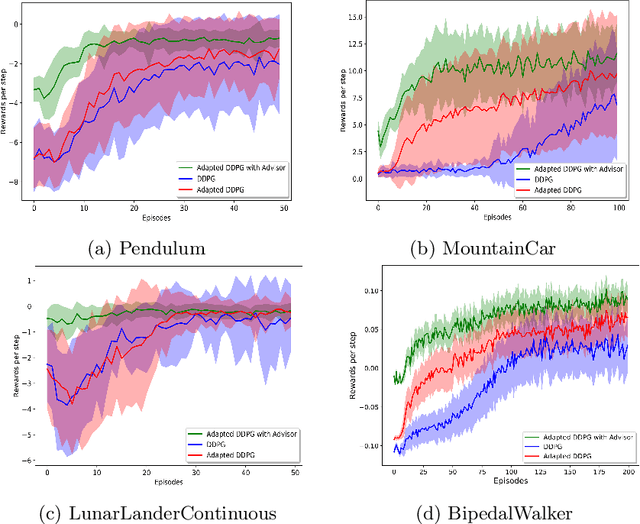

Abstract:Recent advances in Reinforcement Learning (RL) have surpassed human-level performance in many simulated environments. However, existing reinforcement learning techniques are incapable of explicitly incorporating already known domain-specific knowledge into the learning process. Therefore, the agents have to explore and learn the domain knowledge independently through a trial and error approach, which consumes both time and resources to make valid responses. Hence, we adapt the Deep Deterministic Policy Gradient (DDPG) algorithm to incorporate an adviser, which allows integrating domain knowledge in the form of pre-learned policies or pre-defined relationships to enhance the agent's learning process. Our experiments on OpenAi Gym benchmark tasks show that integrating domain knowledge through advisers expedites the learning and improves the policy towards better optima.

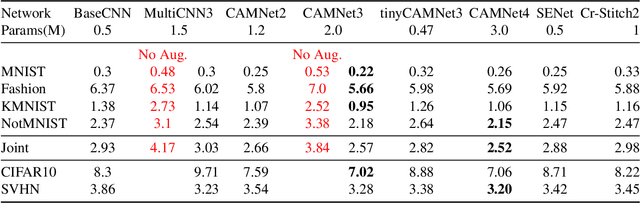

Feature-dependent Cross-Connections in Multi-Path Neural Networks

Jun 24, 2020

Abstract:Learning a particular task from a dataset, samples in which originate from diverse contexts, is challenging, and usually addressed by deepening or widening standard neural networks. As opposed to conventional network widening, multi-path architectures restrict the quadratic increment of complexity to a linear scale. However, existing multi-column/path networks or model ensembling methods do not consider any feature-dependent allocation of parallel resources, and therefore, tend to learn redundant features. Given a layer in a multi-path network, if we restrict each path to learn a context-specific set of features and introduce a mechanism to intelligently allocate incoming feature maps to such paths, each path can specialize in a certain context, reducing the redundancy and improving the quality of extracted features. This eventually leads to better-optimized usage of parallel resources. To do this, we propose inserting feature-dependent cross-connections between parallel sets of feature maps in successive layers. The weights of these cross-connections are learned based on the input features of the particular layer. Our multi-path networks show improved image recognition accuracy at a similar complexity compared to conventional and state-of-the-art methods for deepening, widening and adaptive feature extracting, in both small and large scale datasets.

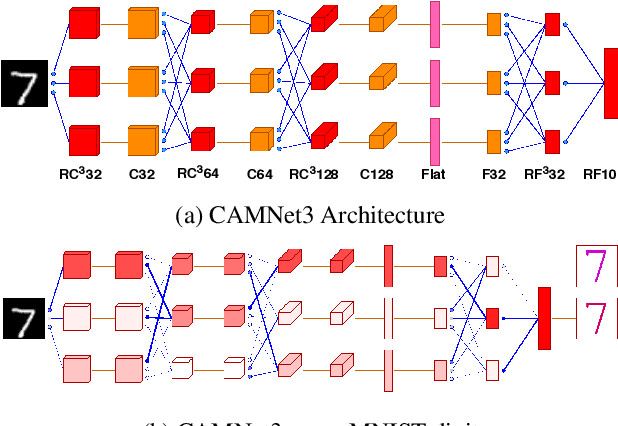

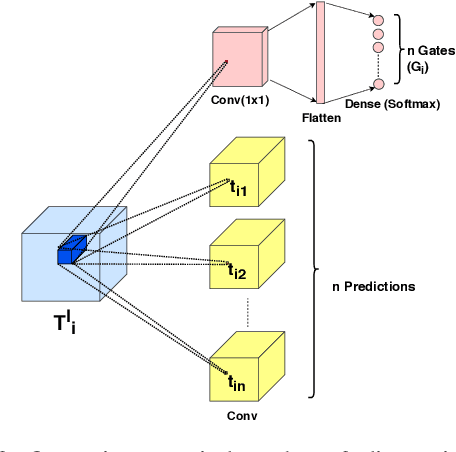

Context-Aware Multipath Networks

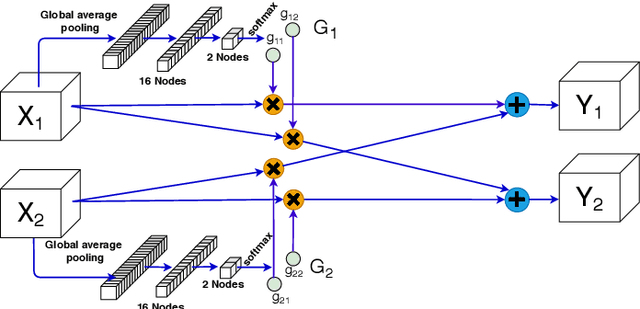

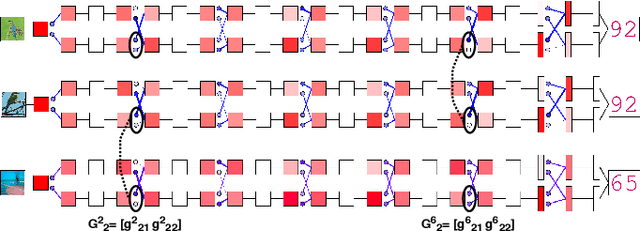

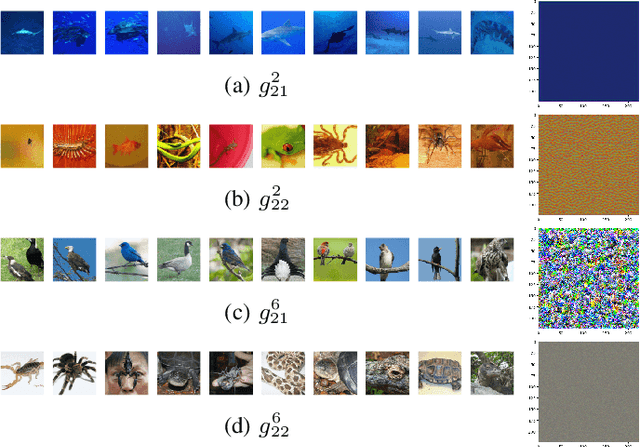

Jul 26, 2019

Abstract:Making a single network effectively address diverse contexts---learning the variations within a dataset or multiple datasets---is an intriguing step towards achieving generalized intelligence. Existing approaches of deepening, widening, and assembling networks are not cost effective in general. In view of this, networks which can allocate resources according to the context of the input and regulate flow of information across the network are effective. In this paper, we present Context-Aware Multipath Network (CAMNet), a multi-path neural network with data-dependant routing between parallel tensors. We show that our model performs as a generalized model capturing variations in individual datasets and multiple different datasets, both simultaneously and sequentially. CAMNet surpasses the performance of classification and pixel-labeling tasks in comparison with the equivalent single-path, multi-path, and deeper single-path networks, considering datasets individually, sequentially, and in combination. The data-dependent routing between tensors in CAMNet enables the model to control the flow of information end-to-end, deciding which resources to be common or domain-specific.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge