Ruiming Guo

USF Spectral Estimation: Prevalence of Gaussian Cramér-Rao Bounds Despite Modulo Folding

May 06, 2025

Abstract: Spectral Estimation (SpecEst) is a core area of signal processing with a history spanning two centuries and applications across various fields. With the advent of digital acquisition, SpecEst algorithms have been widely applied to tasks like frequency super-resolution. However, conventional digital acquisition imposes a trade-off: for a fixed bit budget, one can optimize either signal dynamic range or digital resolution (noise floor), but not both simultaneously. The Unlimited Sensing Framework (USF) overcomes this limitation using modulo non-linearity in analog hardware, enabling a novel approach to SpecEst (USF-SpecEst). However, USF-SpecEst requires new theoretical and algorithmic developments to handle folded samples effectively. In this paper, we derive the Cramér-Rao Bounds (CRBs) for SpecEst with noisy modulo-folded samples and reveal a surprising result: the CRBs for USF-SpecEst are scaled versions of the Gaussian CRBs for conventional samples. Numerical experiments validate these bounds, providing a benchmark for USF-SpecEst and facilitating its practical deployment.
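The modulo non-linearity at the heart of USF can be illustrated with a short Python sketch. It assumes the centered modulo operator commonly used in the USF literature, which maps any real input into $[-\lambda, \lambda)$ by subtracting an integer multiple of $2\lambda$; the threshold `lam`, the helper names, and the test signal are illustrative choices, not values from the paper.

```python
import math


def modulo_fold(x: float, lam: float) -> float:
    """Centered modulo: fold x into [-lam, lam) by subtracting a multiple of 2*lam."""
    # Python's % with a positive divisor returns a value in [0, 2*lam),
    # so shifting by lam before and after centers the range on zero.
    return (x + lam) % (2 * lam) - lam


def fold_signal(samples, lam):
    """Apply the modulo non-linearity sample by sample (illustrative only)."""
    return [modulo_fold(s, lam) for s in samples]


if __name__ == "__main__":
    lam = 1.0  # hypothetical folding threshold
    # A 3 Hz cosine with amplitude 10, i.e. ten times the threshold.
    t = [n * 0.01 for n in range(200)]
    x = [10.0 * math.cos(2 * math.pi * 3.0 * tn) for tn in t]
    y = fold_signal(x, lam)
    # Folded samples stay within the threshold.
    print(max(abs(v) for v in y) <= lam)
    # Each residual x - y is (numerically) an integer multiple of 2*lam.
    print(all(abs((a - b) / (2 * lam) - round((a - b) / (2 * lam))) < 1e-9
              for a, b in zip(x, y)))
```

Both checks print `True`. The folded sequence `y` is what a modulo ADC would record; estimating the spectrum of `x` from such folded, noisy samples is the problem USF-SpecEst must solve.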

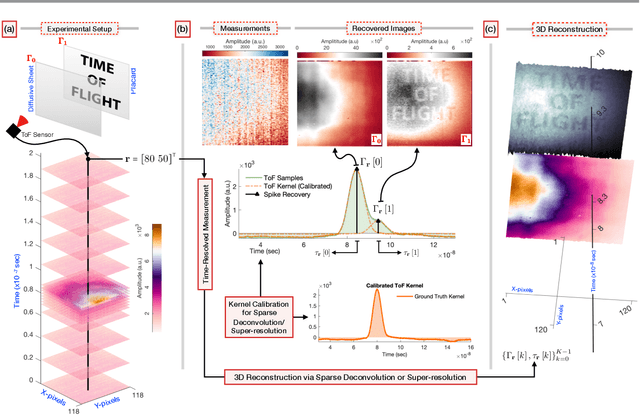

Blind Time-of-Flight Imaging: Sparse Deconvolution on the Continuum with Unknown Kernels

Oct 31, 2024

Abstract: In recent years, computational Time-of-Flight (ToF) imaging has emerged as an exciting and novel imaging modality that offers new and powerful interpretations of natural scenes, with applications extending to 3D, light-in-flight, and non-line-of-sight imaging. Mathematically, ToF imaging relies on algorithmic super-resolution, as the back-scattered sparse light echoes occur at a finer time resolution than digital devices can capture. Traditional methods require knowledge of the emitted light pulses, or kernels, and use sparse deconvolution to recover scenes. Unlike previous approaches, this paper introduces a novel blind ToF imaging technique that does not require kernel calibration and recovers sparse spikes on a continuum rather than a discrete grid. By studying the shared characteristics of various ToF modalities, we capitalize on the fact that most physical pulses approximately satisfy the Strang-Fix conditions from approximation theory. This leads to a new mathematical formulation for sparse super-resolution. Our recovery approach uses an optimization method based on an alternating minimization strategy. We benchmark our blind ToF method against traditional kernel calibration methods, which serve as the baseline. Extensive hardware experiments across different ToF modalities demonstrate the algorithmic advantages, flexibility, and empirical robustness of our approach. We show that our work facilitates super-resolution in scenarios where distinguishing between closely spaced objects is challenging, while maintaining performance comparable to the known-kernel setting. Examples of light-in-flight imaging and light-sweep videos highlight the practical benefits of our blind super-resolution method in enhancing the understanding of natural scenes.

* 27 pages
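For a compactly supported kernel, the Strang-Fix conditions characterize when integer shifts of the kernel can reproduce polynomials up to a given degree. A minimal Python sketch using the linear B-spline (a "hat" pulse chosen purely for illustration; it is not one of the paper's measured ToF pulses) shows its shifts reproducing degree-0 and degree-1 polynomials exactly. The helper names `hat` and `reproduce` are hypothetical.

```python
def hat(t: float) -> float:
    """Linear B-spline: a triangle pulse supported on [-1, 1]."""
    return max(0.0, 1.0 - abs(t))


def reproduce(t: float, coeff, shifts) -> float:
    """Evaluate sum_k coeff(k) * hat(t - k) over the given integer shifts."""
    return sum(coeff(k) * hat(t - k) for k in shifts)


if __name__ == "__main__":
    shifts = range(-2, 8)  # enough shifts to cover the test points below
    for t in [0.0, 0.3, 1.7, 4.25]:
        const = reproduce(t, lambda k: 1.0, shifts)           # reproduces 1
        line = reproduce(t, lambda k: 2.0 + 0.5 * k, shifts)  # reproduces 2 + 0.5 t
        print(f"t={t}: {const:.6f} {line:.6f} "
              f"(expected 1.000000, {2.0 + 0.5 * t:.6f})")
```

Higher-order B-splines reproduce higher-degree polynomials. Because physical pulses only approximately satisfy such conditions, the reproduction in a real ToF system is approximate rather than exact.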

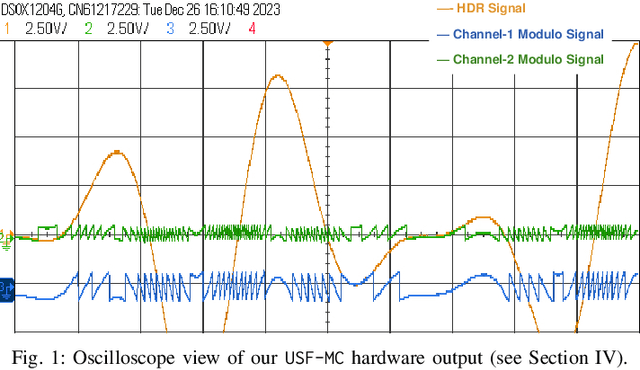

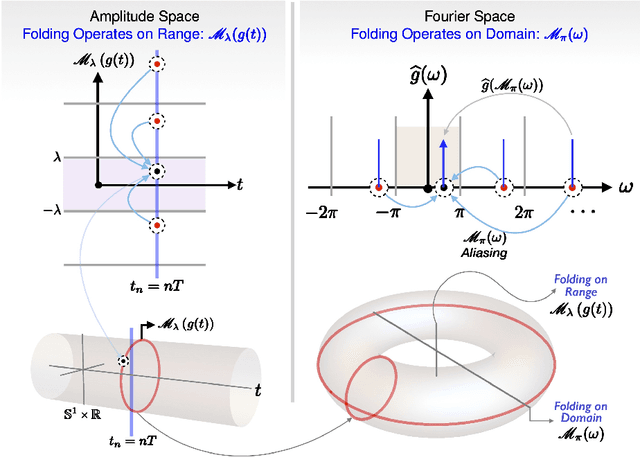

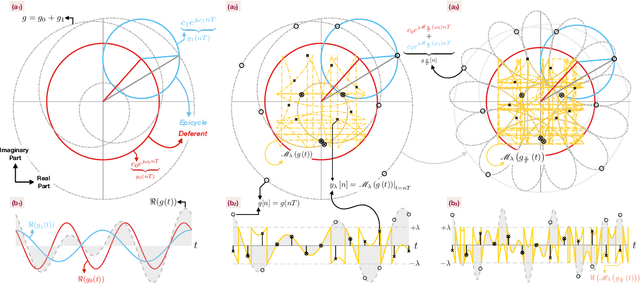

Sub-Nyquist USF Spectral Estimation: $K$ Frequencies with $6K + 4$ Modulo Samples

Sep 24, 2024

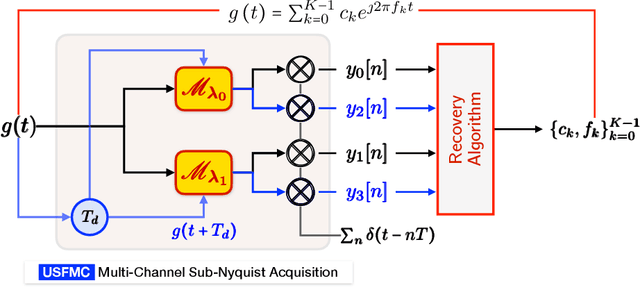

Abstract: Digital acquisition of high-bandwidth signals is particularly challenging when Nyquist rate sampling is impractical. This has led to extensive research in sub-Nyquist sampling methods, primarily for spectral and sinusoidal frequency estimation. However, these methods struggle with high-dynamic-range (HDR) signals that can saturate analog-to-digital converters (ADCs). To address this, we introduce a novel sub-Nyquist spectral estimation method, driven by the Unlimited Sensing Framework (USF), that uses a multi-channel system. The sub-Nyquist USF method aliases samples in both the amplitude and frequency domains, rendering the inverse problem particularly challenging. To this end, our exact recovery theorem establishes that $K$ sinusoids of arbitrary amplitudes and frequencies can be recovered from $6K + 4$ modulo samples, remarkably, independent of the sampling rate or folding threshold. In the true spirit of sub-Nyquist sampling, via modulo ADC hardware experiments, we demonstrate successful spectrum estimation of HDR signals in the kHz range using Hz-range sampling rates (0.078% of the Nyquist rate). Our experiments also reveal up to a 33-fold improvement in frequency estimation accuracy while using one fewer bit than conventional ADCs. These findings open new avenues in spectral estimation applications, such as radar, direction-of-arrival (DoA) estimation, and cognitive radio, showcasing the potential of USF.
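Frequency-domain aliasing, one of the two ambiguities described above, can be sketched in a few lines of Python: a real sinusoid sampled at rate $f_s$ below its Nyquist rate yields exactly the same samples as one at a folded frequency in $[0, f_s/2]$. The values below (a 1 kHz tone sampled at 7 Hz) are hypothetical, chosen only to mirror the kHz-signal, Hz-sampling regime, and the helper `aliased_frequency` is illustrative; this is not the paper's multi-channel recovery algorithm.

```python
import math


def aliased_frequency(f: float, fs: float) -> float:
    """Apparent frequency in [0, fs/2] of a real sinusoid at f sampled at rate fs."""
    r = f % fs             # fold into [0, fs)
    return min(r, fs - r)  # cosine symmetry maps [fs/2, fs) back onto [0, fs/2]


if __name__ == "__main__":
    f, fs = 1000.0, 7.0  # hypothetical tone and sampling rate
    fa = aliased_frequency(f, fs)
    print(fa)  # 1.0
    # The samples of the 1 kHz tone and of the aliased 1 Hz tone coincide.
    same = all(
        abs(math.cos(2 * math.pi * f * n / fs) - math.cos(2 * math.pi * fa * n / fs)) < 1e-9
        for n in range(50)
    )
    print(same)  # True
```

With a modulo ADC, each of these samples would additionally be folded in amplitude, so both ambiguities must be resolved jointly; the theorem above states that $6K + 4$ modulo samples suffice for $K$ sinusoids regardless of the sampling rate or threshold.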

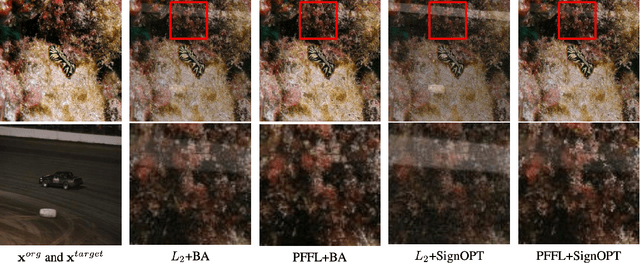

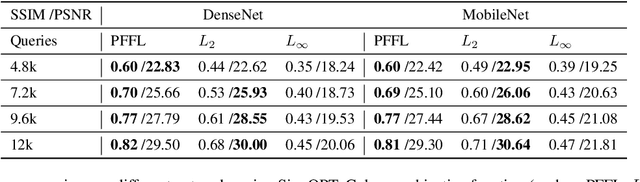

Towards Imperceptible Query-limited Adversarial Attacks with Perceptual Feature Fidelity Loss

Jan 31, 2021

Abstract: Recently, there has been a large body of work on fooling deep-learning-based classifiers, particularly for images, via adversarial inputs that are visually similar to the benign examples. However, researchers usually use $L_p$-norm minimization as a proxy for imperceptibility, which oversimplifies the diversity and richness of real-world images and human visual perception. In this work, we propose a novel perceptual metric, which we call Perceptual Feature Fidelity Loss, that exploits the well-established connection between low-level image feature fidelity and human visual sensitivity. We show that our metric robustly reflects the imperceptibility of the generated adversarial images, as validated under various conditions. Moreover, we demonstrate that this metric is highly flexible and can be conveniently integrated into different existing optimization frameworks to guide the noise distribution toward better imperceptibility. The metric is particularly useful in the challenging black-box attack setting with limited queries, where imperceptibility is hard to achieve due to the non-trivial perturbation power.
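To make the critique of $L_p$-norm proxies concrete, the following Python sketch (a generic illustration, not the Perceptual Feature Fidelity Loss) computes common $L_p$ norms of two hypothetical perturbations. Both share the same $L_\infty$ norm yet differ in $L_1$ and $L_2$, and every $L_p$ norm is unchanged by permuting pixels, so none of them can tell whether a change lands in a flat region or in texture. The helper `lp_norm` is illustrative.

```python
import math


def lp_norm(v, p: float) -> float:
    """L_p norm of a flattened perturbation; p = math.inf gives the max-abs norm."""
    if p == math.inf:
        return max(abs(x) for x in v)
    return sum(abs(x) ** p for x in v) ** (1.0 / p)


if __name__ == "__main__":
    # Two hypothetical 4-pixel perturbations with the same L_inf budget.
    sparse = [0.1, 0.0, 0.0, 0.0]
    dense = [0.1, -0.1, 0.1, -0.1]
    for name, d in [("sparse", sparse), ("dense", dense)]:
        print(name, [round(lp_norm(d, p), 4) for p in (1, 2, math.inf)])
    # Permuting pixels leaves every L_p norm unchanged, so these norms
    # ignore where in the image a perturbation falls.
    print(lp_norm(sparse, 2) == lp_norm(list(reversed(sparse)), 2))
```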

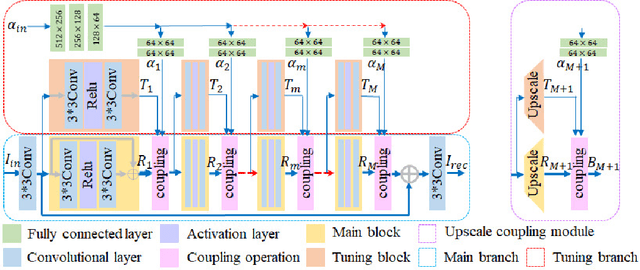

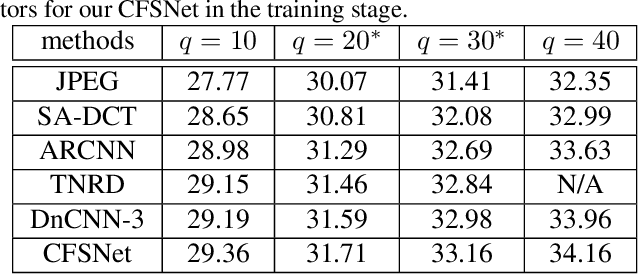

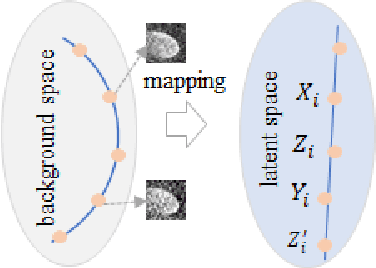

CFSNet: Toward a Controllable Feature Space for Image Restoration

Apr 01, 2019

Abstract: Deep learning methods have made great progress in image restoration as measured by specific metrics (e.g., PSNR, SSIM). However, the perceptual quality of a restored image is relatively subjective, and users need to control the reconstruction result according to personal preferences or image characteristics, which existing deterministic networks cannot do. This motivates us to carefully design a unified interactive framework for general image restoration tasks. Under this framework, users can control a continuous transition between different objectives, e.g., the perception-distortion trade-off in image super-resolution or the trade-off between noise reduction and detail preservation. We achieve this goal by controlling latent features of the designed network. Specifically, our proposed framework, named Controllable Feature Space Network (CFSNet), couples two branches based on different objectives. Our model can adaptively learn the coupling coefficients of different layers and channels, which provides finer control of the restored image quality. Experiments on several typical image restoration tasks validate the effectiveness of the proposed method.
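The idea of steering restoration by mixing latent features from two objective-specific branches can be sketched as a per-channel convex combination. The Python below is a hypothetical simplification: `couple_features`, the list-of-channels representation, the branch labels, and the linear mixing rule are assumptions for illustration, not CFSNet's exact coupling module, whose coefficients are learned per layer and channel.

```python
from typing import List


def couple_features(main: List[float], tune: List[float], alpha: List[float]) -> List[float]:
    """Hypothetical per-channel coupling: alpha=0 keeps the main branch, alpha=1 the tuning branch."""
    if not (len(main) == len(tune) == len(alpha)):
        raise ValueError("main, tune and alpha must have one entry per channel")
    return [a * t + (1.0 - a) * m for m, t, a in zip(main, tune, alpha)]


if __name__ == "__main__":
    # Hypothetical 3-channel features from a distortion-oriented (main)
    # and a perception-oriented (tuning) branch.
    main = [0.2, 0.5, 0.9]
    tune = [0.6, 0.1, 0.3]
    for control in (0.0, 0.5, 1.0):
        # A single user control value broadcast to all channels; in CFSNet the
        # per-channel coefficients are learned rather than set this way.
        alpha = [control] * len(main)
        print(control, couple_features(main, tune, alpha))
```

Sweeping `control` from 0 to 1 moves the output continuously from one branch's features to the other's, which is the kind of interactive transition the abstract describes.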
