Rudolf Herdt

University of Bremen, aisencia

Concept Based Explanations and Class Contrasting

Feb 05, 2025Abstract:Explaining deep neural networks is challenging, due to their large size and non-linearity. In this paper, we introduce a concept-based explanation method, in order to explain the prediction for an individual class, as well as contrasting any two classes, i.e. explain why the model predicts one class over the other. We test it on several openly available classification models trained on ImageNet1K, as well as on a segmentation model trained to detect tumor in stained tissue samples. We perform both qualitative and quantitative tests. For example, for a ResNet50 model from pytorch model zoo, we can use the explanation for why the model predicts a class 'A' to automatically select six dataset crops where the model does not predict class 'A'. The model then predicts class 'A' again for the newly combined image in 71\% of the cases (works for 710 out of the 1000 classes). The code including an .ipynb example is available on git: https://github.com/rherdt185/concept-based-explanations-and-class-contrasting.

Visualize and Paint GAN Activations

May 24, 2024

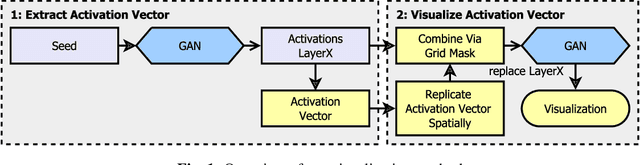

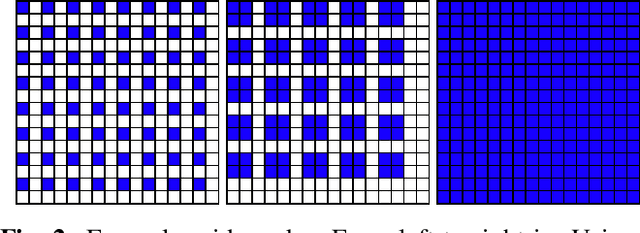

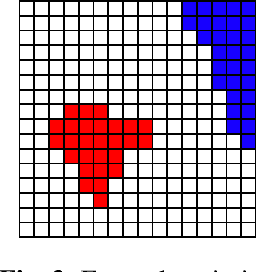

Abstract:We investigate how generated structures of GANs correlate with their activations in hidden layers, with the purpose of better understanding the inner workings of those models and being able to paint structures with unconditionally trained GANs. This gives us more control over the generated images, allowing to generate them from a semantic segmentation map while not requiring such a segmentation in the training data. To this end we introduce the concept of tileable features, allowing us to identify activations that work well for painting.

Enhancing the analysis of murine neonatal ultrasonic vocalizations: Development, evaluation, and application of different mathematical models

May 17, 2024Abstract:Rodents employ a broad spectrum of ultrasonic vocalizations (USVs) for social communication. As these vocalizations offer valuable insights into affective states, social interactions, and developmental stages of animals, various deep learning approaches have aimed to automate both the quantitative (detection) and qualitative (classification) analysis of USVs. Here, we present the first systematic evaluation of different types of neural networks for USV classification. We assessed various feedforward networks, including a custom-built, fully-connected network and convolutional neural network, different residual neural networks (ResNets), an EfficientNet, and a Vision Transformer (ViT). Paired with a refined, entropy-based detection algorithm (achieving recall of 94.9% and precision of 99.3%), the best architecture (achieving 86.79% accuracy) was integrated into a fully automated pipeline capable of analyzing extensive USV datasets with high reliability. Additionally, users can specify an individual minimum accuracy threshold based on their research needs. In this semi-automated setup, the pipeline selectively classifies calls with high pseudo-probability, leaving the rest for manual inspection. Our study focuses exclusively on neonatal USVs. As part of an ongoing phenotyping study, our pipeline has proven to be a valuable tool for identifying key differences in USVs produced by mice with autism-like behaviors.

Smooth Deep Saliency

Apr 04, 2024Abstract:In this work, we investigate methods to reduce the noise in deep saliency maps coming from convolutional downsampling, with the purpose of explaining how a deep learning model detects tumors in scanned histological tissue samples. Those methods make the investigated models more interpretable for gradient-based saliency maps, computed in hidden layers. We test our approach on different models trained for image classification on ImageNet1K, and models trained for tumor detection on Camelyon16 and in-house real-world digital pathology scans of stained tissue samples. Our results show that the checkerboard noise in the gradient gets reduced, resulting in smoother and therefore easier to interpret saliency maps.

Model Stitching and Visualization How GAN Generators can Invert Networks in Real-Time

Feb 04, 2023

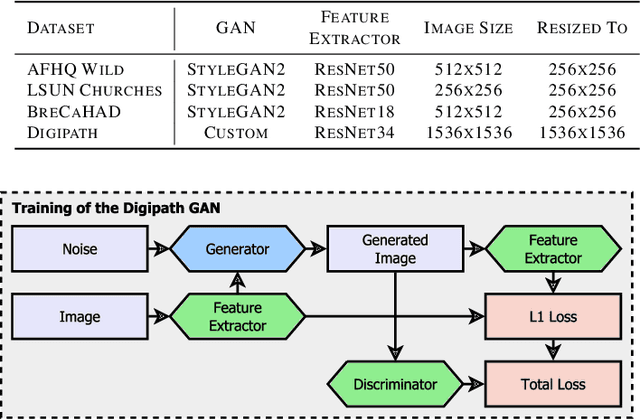

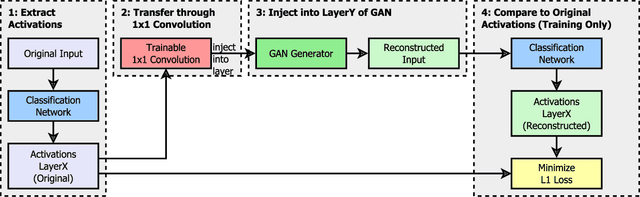

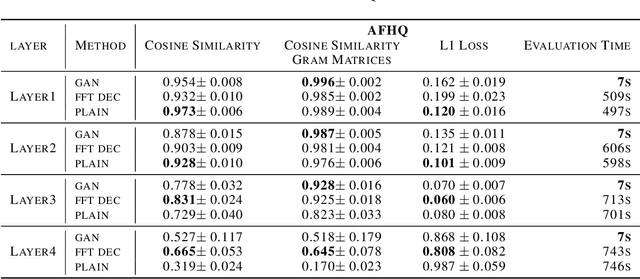

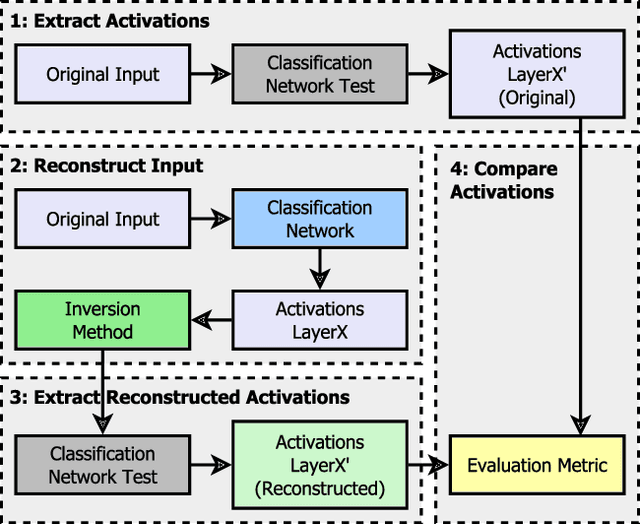

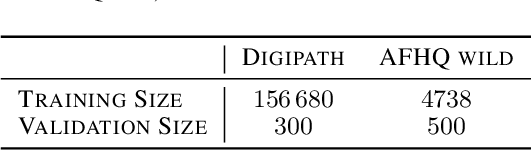

Abstract:Critical applications, such as in the medical field, require the rapid provision of additional information to interpret decisions made by deep learning methods. In this work, we propose a fast and accurate method to visualize activations of classification and semantic segmentation networks by stitching them with a GAN generator utilizing convolutions. We test our approach on images of animals from the AFHQ wild dataset and real-world digital pathology scans of stained tissue samples. Our method provides comparable results to established gradient descent methods on these datasets while running about two orders of magnitude faster.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge