Ruben Contreras

Reinforcement Learning for UAV control with Policy and Reward Shaping

Dec 06, 2022

Abstract: In recent years, work on unmanned aerial vehicle (UAV) technology has expanded knowledge in the area, bringing to light new problems and challenges that require solutions. Furthermore, because the technology allows processes usually carried out by people to be automated, it is in great demand in industrial sectors. The automation of these vehicles has been addressed in the literature by applying different machine learning strategies. Reinforcement learning (RL), a machine learning paradigm wherein an agent interacts with an environment to solve a given task, is frequently used to train autonomous agents. However, learning autonomously can be time-consuming and computationally expensive, and may not be practical in highly complex scenarios. Interactive reinforcement learning allows an external trainer to provide advice to an agent while it is learning a task. In this study, we set out to teach an RL agent to control a drone using reward-shaping and policy-shaping techniques simultaneously. Two simulated scenarios were proposed for the training: one without obstacles and one with obstacles. We also studied the influence of each technique. The results show that an agent trained simultaneously with both techniques obtains a lower reward than an agent trained using only a policy-based approach. Nevertheless, the agent trained with both techniques achieves lower execution times and less dispersion during training.

* 9 pages, 9 figures, Preprint accepted at the 41st International Conference of the Chilean Computer Science Society, SCCC 2022, Santiago, Chile, 2022
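As a rough illustration of how the two interactive techniques named in the abstract can be combined, the sketch below runs tabular Q-learning on a toy one-dimensional corridor. It is not the authors' drone setup or implementation: the environment, the `trainer_action` and `trainer_reward` helpers, the `advice_prob` parameter, and all numeric values are assumptions made up for this example. With probability `advice_prob`, a simulated trainer overrides the agent's action (policy shaping), adds feedback to the environment reward (reward shaping), or both.

```python
import random

N_STATES = 6              # corridor cells 0..5 (toy stand-in for a flight task)
GOAL = N_STATES - 1       # the episode ends when the last cell is reached
ACTIONS = (-1, +1)        # move left / move right


def step(state, action):
    """Toy environment (assumption): returns (next_state, reward, done)."""
    nxt = min(max(state + action, 0), GOAL)
    done = nxt == GOAL
    return nxt, (1.0 if done else -0.01), done


def trainer_action(state):
    """Hypothetical trainer advice used for policy shaping."""
    return +1


def trainer_reward(state, action):
    """Hypothetical trainer feedback used for reward shaping."""
    return 0.1 if action == +1 else -0.1


def train(episodes=200, advice_prob=0.3, use_policy=True, use_reward=True,
          alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done, steps = 0, False, 0
        while not done and steps < 100:
            # Epsilon-greedy choice from the agent's own Q-values.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            advised = rng.random() < advice_prob
            if advised and use_policy:
                action = trainer_action(state)             # policy shaping
            nxt, reward, done = step(state, action)
            if advised and use_reward:
                reward += trainer_reward(state, action)    # reward shaping
            best_next = 0.0 if done else max(q[(nxt, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * best_next
                                           - q[(state, action)])
            state, steps = nxt, steps + 1
    return q


def greedy_policy(q):
    """Greedy action for every non-terminal state."""
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)]
```

Calling `train(use_reward=False)` or `train(use_policy=False)` isolates one technique, which mirrors the kind of per-technique comparison the abstract describes, though on a far simpler task.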

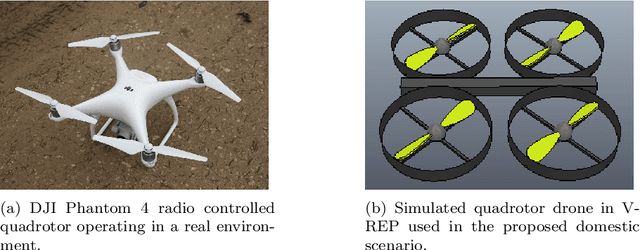

Unmanned Aerial Vehicle Control Through Domain-based Automatic Speech Recognition

Sep 09, 2020

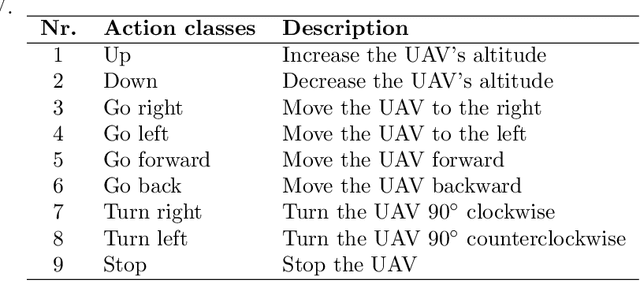

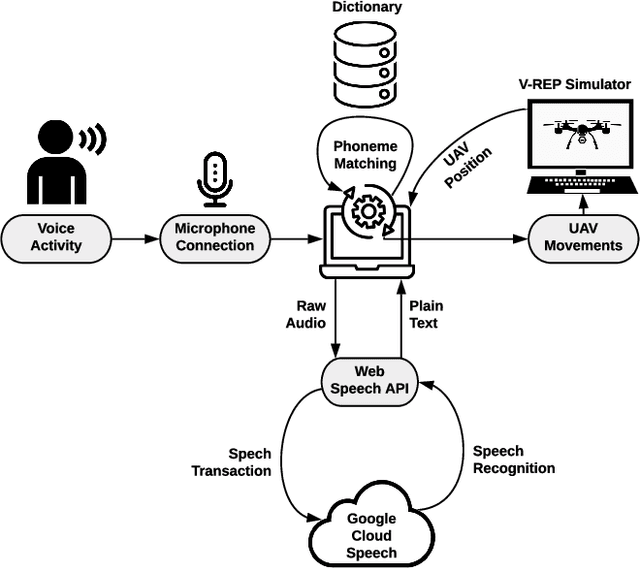

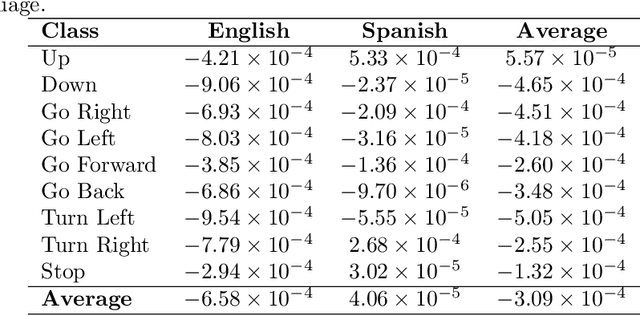

Abstract: Currently, unmanned aerial vehicles, such as drones, are becoming a part of our lives and reaching many areas of society, including the industrialized world. A common way to control the movements and actions of a drone is through wireless tactile interfaces, for which different remote control devices are available. However, control through such devices is not a natural, human-like communication interface, and some users find it difficult to master. In this work, we present a domain-based speech recognition architecture to effectively control an unmanned aerial vehicle such as a drone. The drone is controlled through a more natural, human-like way of communicating instructions. Moreover, we implement an algorithm that interprets commands in both Spanish and English and use it to control the movements of the drone in a simulated domestic environment. In the conducted experiments, participants give voice commands to the drone in both languages in order to compare the effectiveness of each, taking into account the mother tongue of the participants. Additionally, different levels of distortion are applied to the voice commands in order to test the proposed approach on noisy input signals. The results show that the unmanned aerial vehicle is capable of interpreting user voice instructions, and that phoneme matching improves speech-to-action recognition for both languages compared with using only the cloud-based algorithm without domain-based instructions. Using raw audio inputs, the cloud-based approach achieves 74.81% and 97.04% accuracy for English and Spanish instructions respectively, whereas our phoneme matching approach improves these results to 93.33% and 100.00% accuracy for English and Spanish respectively.
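The phoneme matching idea in the abstract can be sketched as follows: a recognized text hypothesis is converted to phonemes and mapped to the closest instruction in a small domain vocabulary. This is a minimal illustration, not the paper's architecture. The ARPAbet-style `LEXICON`, the `COMMANDS` list, the letter-based fallback for unknown words, and the use of Levenshtein distance as the similarity measure are all assumptions chosen for the example.

```python
# Hand-written, ARPAbet-style pronunciations for illustration only
# (an assumption, not the paper's phoneme inventory or command set).
LEXICON = {
    "go": ["G", "OW"],
    "up": ["AH", "P"],
    "down": ["D", "AW", "N"],
    "left": ["L", "EH", "F", "T"],
    "right": ["R", "AY", "T"],
    "write": ["R", "AY", "T"],   # homophone of "right"
    "town": ["T", "AW", "N"],    # near-homophone of "down"
}

# Hypothetical domain instructions the drone understands.
COMMANDS = ["go up", "go down", "go left", "go right"]


def to_phonemes(text):
    """Convert text to a flat phoneme list using the toy lexicon."""
    phones = []
    for word in text.lower().split():
        # Fallback for unknown words: treat each letter as a symbol.
        phones.extend(LEXICON.get(word, list(word.upper())))
    return phones


def edit_distance(a, b):
    """Levenshtein distance between two symbol sequences."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, 1):
        cur = [i]
        for j, y in enumerate(b, 1):
            cur.append(min(prev[j] + 1,              # deletion
                           cur[j - 1] + 1,           # insertion
                           prev[j - 1] + (x != y)))  # substitution or match
        prev = cur
    return prev[-1]


def match_command(hypothesis, commands=COMMANDS):
    """Return the domain command whose phonemes are closest to the hypothesis."""
    hyp = to_phonemes(hypothesis)
    return min(commands, key=lambda c: edit_distance(hyp, to_phonemes(c)))
```

For instance, a misrecognized hypothesis such as "go write" has the same phoneme sequence as "go right" in this toy lexicon, so `match_command("go write")` selects "go right" even though the words differ.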
