Ruairidh M. Battleday

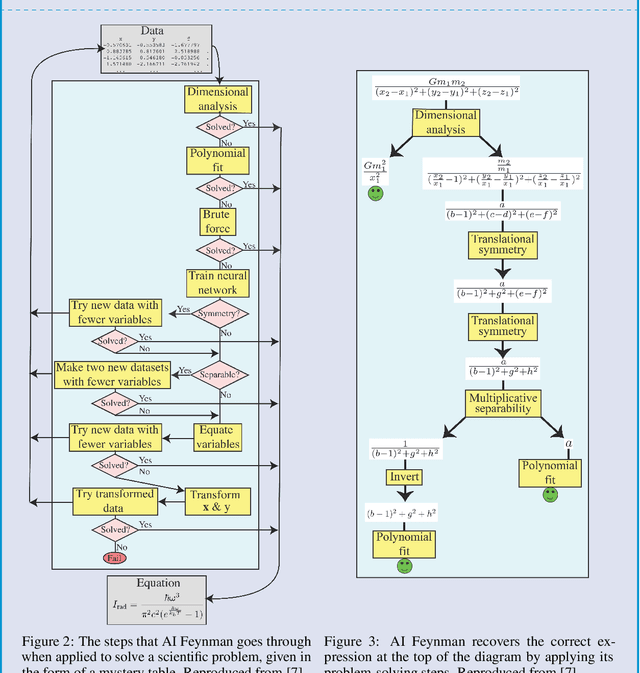

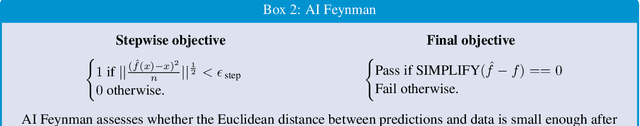

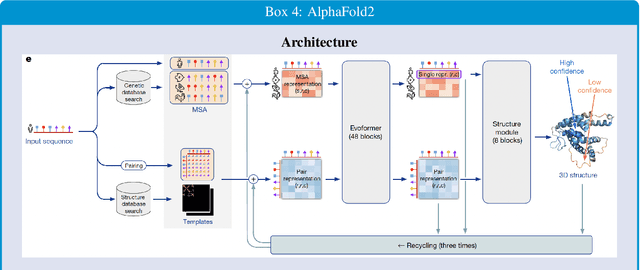

Artificial intelligence for science: The easy and hard problems

Aug 24, 2024

Abstract: A suite of impressive scientific discoveries has been driven by recent advances in artificial intelligence. Almost all of these result from training flexible algorithms to solve difficult optimization problems specified in advance by teams of domain scientists and engineers with access to large amounts of data. Although extremely useful, this kind of problem solving corresponds to only one part of science: the "easy problem." The other part of scientific research is coming up with the problem itself: the "hard problem." Solving the hard problem is beyond the capacities of current algorithms for scientific discovery because it requires continual conceptual revision based on poorly defined constraints. We can make progress on understanding how humans solve the hard problem by studying the cognitive science of scientists, and then use the results to design new computational agents that automatically infer and update their scientific paradigms.

End-to-end Deep Prototype and Exemplar Models for Predicting Human Behavior

Jul 17, 2020

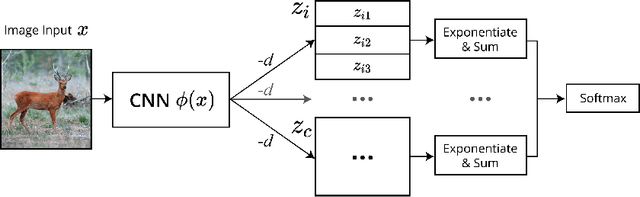

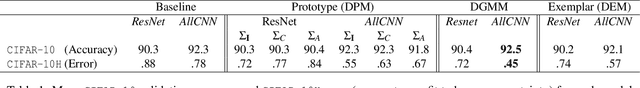

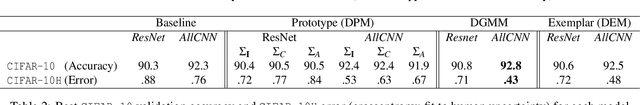

Abstract: Traditional models of category learning in psychology focus on representation at the category level rather than the stimulus level, even though the two are likely to interact. The stimulus representations employed in such models are either hand-designed by the experimenter, inferred circuitously from human judgments, or borrowed from pretrained deep neural networks (DNNs) that are themselves competing models of category learning. In this work, we extend classic prototype and exemplar models to learn both stimulus and category representations jointly from raw input. This new class of models can be parameterized by DNNs and trained end-to-end. Following their namesakes, we refer to them as Deep Prototype Models, Deep Exemplar Models, and Deep Gaussian Mixture Models. Compared to typical DNNs, we find that their cognitively inspired counterparts both provide a better intrinsic fit to human behavior and improve ground-truth classification.
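
As a minimal sketch of the two classic category models that the deep variants extend, the code below scores a stimulus embedding against toy category members. It assumes a Gaussian similarity exp(-gamma * d^2) on squared Euclidean distance and a Luce choice rule; both are common choices in this literature, not necessarily the ones used in the paper. In the deep versions the embeddings would be learned end-to-end from raw input, which is omitted here, and all function names are hypothetical.

```python
import math
from typing import Dict, List, Sequence

Vector = Sequence[float]

def sq_dist(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two embeddings."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def centroid(vectors: List[Vector]) -> List[float]:
    """Component-wise mean of a category's members: its prototype."""
    n = len(vectors)
    return [sum(v[d] for v in vectors) / n for d in range(len(vectors[0]))]

def log_sum_exp(values: List[float]) -> float:
    """Numerically stable log(sum(exp(v)))."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))

def luce_choice(log_scores: Dict[str, float]) -> Dict[str, float]:
    """Luce choice rule over exp(log_score), i.e. a softmax across categories."""
    z = log_sum_exp(list(log_scores.values()))
    return {c: math.exp(s - z) for c, s in log_scores.items()}

def prototype_probs(x: Vector, categories: Dict[str, List[Vector]],
                    gamma: float = 1.0) -> Dict[str, float]:
    """Prototype model: similarity exp(-gamma * d^2) to each category's centroid."""
    return luce_choice({c: -gamma * sq_dist(x, centroid(members))
                        for c, members in categories.items()})

def exemplar_probs(x: Vector, categories: Dict[str, List[Vector]],
                   gamma: float = 1.0) -> Dict[str, float]:
    """Exemplar model: summed similarity exp(-gamma * d^2) to every stored member."""
    return luce_choice({c: log_sum_exp([-gamma * sq_dist(x, m) for m in members])
                        for c, members in categories.items()})
```

For example, with `cats = {"a": [[0.0, 0.0], [0.0, 1.0]], "b": [[3.0, 3.0], [4.0, 4.0]]}`, both `prototype_probs([0.2, 0.4], cats)` and `exemplar_probs([0.2, 0.4], cats)` assign almost all probability to `"a"`. The only difference between the two models is whether a category is summarized by one point or by all of its stored members; working in log space keeps the sums stable when distances are large.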

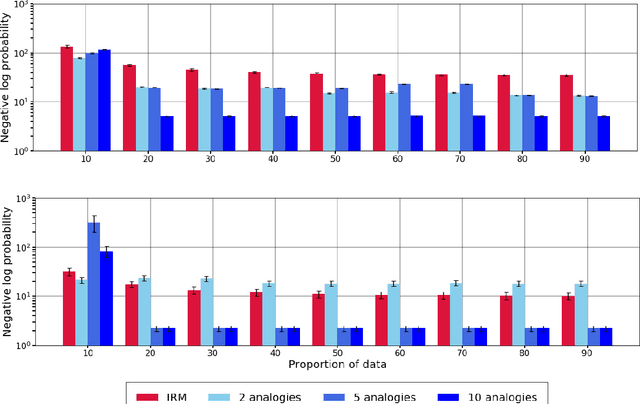

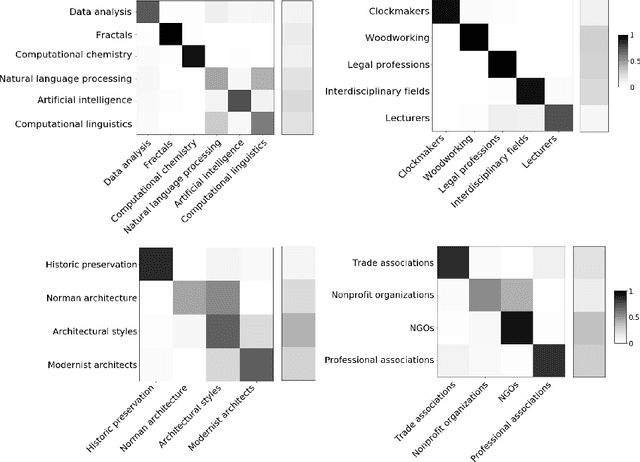

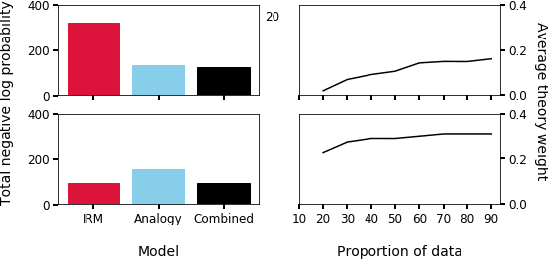

Analogy as Nonparametric Bayesian Inference over Relational Systems

Jun 07, 2020

Abstract: Much of human learning and inference can be framed within the computational problem of relational generalization. In this project, we propose a Bayesian model that generalizes relational knowledge to novel environments by analogically weighting predictions from previously encountered relational structures. First, we show that this learner outperforms a naive, theory-based learner on relational data derived from random and Wikipedia-based systems when experience with the environment is limited. Next, we show how our formalization of analogical similarity translates to the selection and weighting of analogies. Finally, we combine the analogy- and theory-based learners in a single nonparametric Bayesian model, and show that optimal relational generalization transitions from relying on analogies to building a theory of the novel system as experience with it increases. Beyond predicting unobserved interactions better than either baseline, this formalization gives a computational-level perspective on the formation and abstraction of analogies themselves.
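
The abstract does not spell out the model, so the following is only a toy illustration of the general idea of weighting predictions from previously encountered relational systems against a theory learned from the novel system alone. It is not the paper's model. It assumes binary relations, source and novel systems whose entities are already aligned one-to-one (which sidesteps the analogical mapping problem entirely), a per-cell noise rate `eps`, a per-entity Beta-Bernoulli "theory", and a prior loosely modeled on a Chinese restaurant process that gives each stored source weight 1 and a new theory weight `alpha`. All names are hypothetical.

```python
from typing import Dict, List, Tuple

Relations = List[List[int]]            # square 0/1 matrix: 1 if entity i relates to j
Observed = Dict[Tuple[int, int], int]  # cells of the novel system seen so far

def analogy_likelihood(source: Relations, observed: Observed, eps: float) -> float:
    """P(observed | novel system mirrors `source`), with noise rate eps per cell."""
    like = 1.0
    for (i, j), value in observed.items():
        like *= (1 - eps) if source[i][j] == value else eps
    return like

def theory_likelihood(observed: Observed, a: float = 1.0, b: float = 1.0) -> float:
    """Marginal likelihood under a toy theory: each entity relates to others at its
    own Beta(a, b)-distributed rate, learned only from the novel system."""
    like, ones, seen = 1.0, {}, {}
    for (i, _), value in sorted(observed.items()):
        k, n = ones.get(i, 0), seen.get(i, 0)
        p_one = (a + k) / (a + b + n)  # Beta-Bernoulli predictive probability
        like *= p_one if value == 1 else 1 - p_one
        ones[i], seen[i] = k + value, n + 1
    return like

def theory_predict(observed: Observed, i: int, a: float = 1.0, b: float = 1.0) -> float:
    """Posterior predictive P(entity i relates to a new entity) under the theory."""
    row = [v for (r, _), v in observed.items() if r == i]
    return (a + sum(row)) / (a + b + len(row))

def predict(sources: List[Relations], observed: Observed, cell: Tuple[int, int],
            eps: float = 0.1, alpha: float = 1.0) -> Tuple[float, List[float]]:
    """Posterior-weighted P(cell == 1) plus the normalized weight of each hypothesis
    (one entry per source analogy, then a final entry for the new theory)."""
    i, j = cell
    weights = [analogy_likelihood(s, observed, eps) for s in sources]  # prior 1 each
    preds = [(1 - eps) if s[i][j] == 1 else eps for s in sources]
    weights.append(alpha * theory_likelihood(observed))               # prior alpha
    preds.append(theory_predict(observed, i))
    total = sum(weights)
    posterior = [w / total for w in weights]
    return sum(w * p for w, p in zip(posterior, preds)), posterior
```

For instance, with a cyclic source `[[0, 1, 0], [0, 0, 1], [1, 0, 0]]`, a star-shaped source `[[0, 1, 1], [0, 0, 0], [0, 0, 0]]`, and observations `{(0, 1): 1, (1, 2): 1}`, the cyclic analogy receives the largest posterior weight and the predicted probability that entity 2 relates to entity 0 is about 0.75. Because the theory's likelihood does not depend on any source, observations that contradict every stored system shift weight toward the theory as they accumulate, which loosely echoes the transition the abstract describes.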

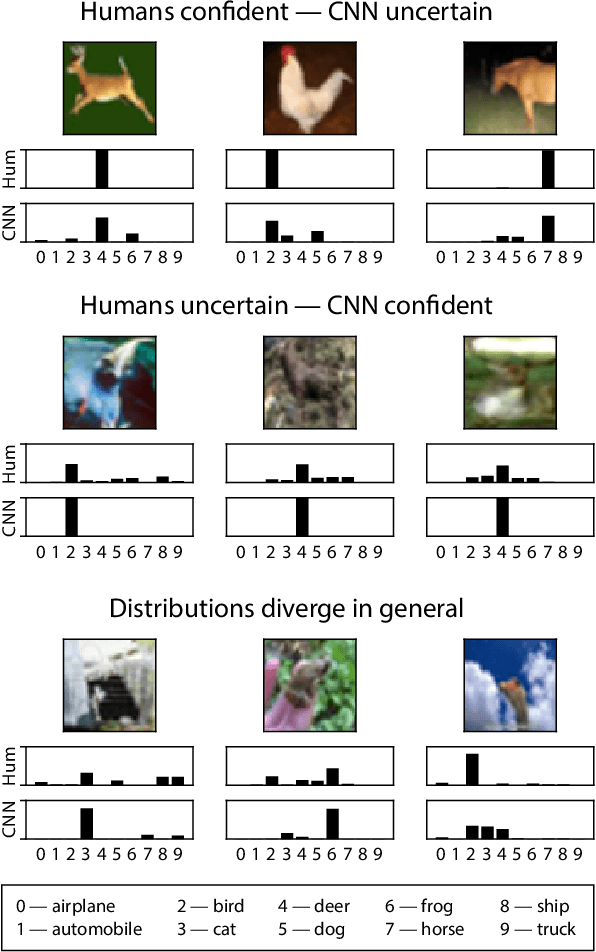

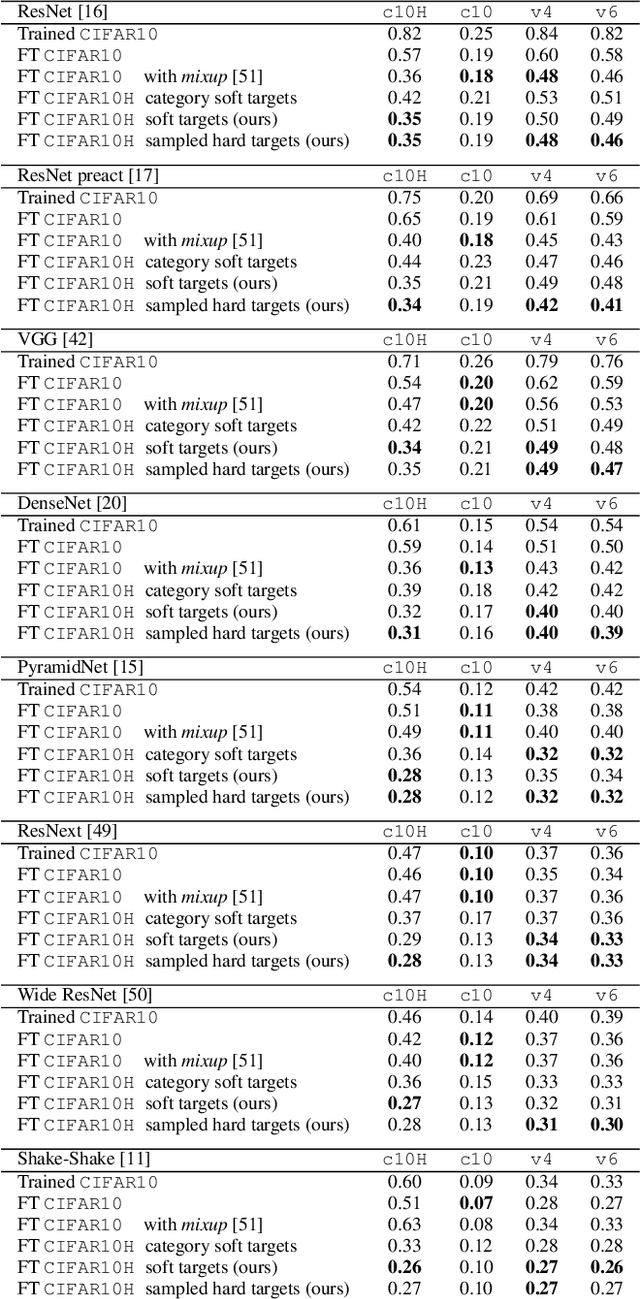

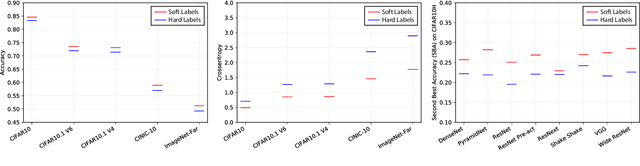

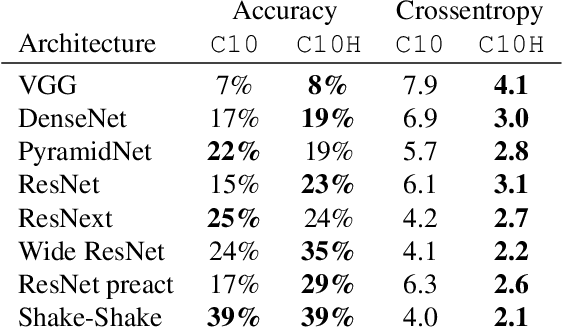

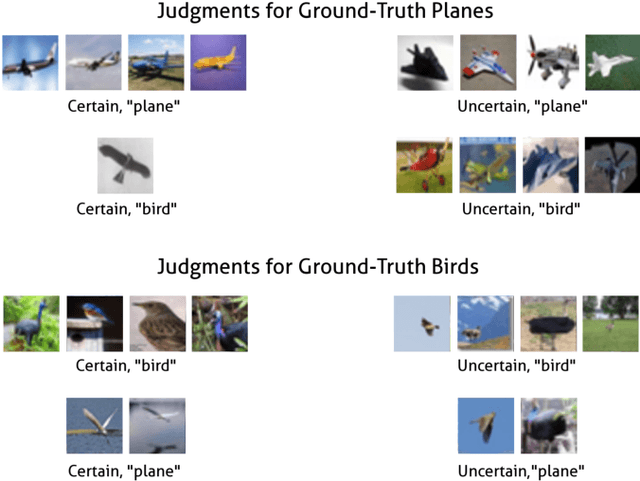

Human uncertainty makes classification more robust

Aug 19, 2019

Abstract: The classification performance of deep neural networks has begun to asymptote at near-perfect levels. However, their ability to generalize outside the training set and their robustness to adversarial attacks have not reached comparable levels. In this paper, we make progress on this problem by training with full label distributions that reflect human perceptual uncertainty. We first present a new benchmark dataset, which we call CIFAR10H, containing a full distribution of human labels for each image of the CIFAR10 test set. We then show that, while contemporary classifiers fail to exhibit human-like uncertainty on their own, explicit training on our dataset closes this gap, supports improved generalization to increasingly out-of-training-distribution test datasets, and confers robustness to adversarial attacks.
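
Training with a full label distribution usually means replacing the one-hot target in cross-entropy with a probability vector. The sketch below shows that objective for a single image, assuming the human distribution is obtained by normalizing per-class vote counts; the exact loss and training setup used in the paper may differ, and the function names are hypothetical.

```python
import math
from typing import List, Sequence

def log_softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable log-probabilities from raw network outputs."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - log_z for z in logits]

def counts_to_distribution(counts: Sequence[int]) -> List[float]:
    """Normalize per-class human vote counts into a label distribution."""
    total = sum(counts)
    return [c / total for c in counts]

def soft_cross_entropy(logits: Sequence[float], target: Sequence[float]) -> float:
    """Cross-entropy H(target, softmax(logits)); a one-hot target gives the usual loss."""
    return -sum(p * lq for p, lq in zip(target, log_softmax(logits)))

def soft_cross_entropy_grad(logits: Sequence[float], target: Sequence[float]) -> List[float]:
    """Gradient with respect to the logits: softmax(logits) - target
    (valid when target sums to 1)."""
    return [math.exp(lq) - p for lq, p in zip(log_softmax(logits), target)]
```

With human votes `[45, 5, 0]`, the target becomes `[0.9, 0.1, 0.0]`, so the gradient pushes the network toward assigning about 10% probability to the second class instead of driving it to zero as a one-hot label would. This is how the training signal can carry human-like uncertainty into the classifier.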

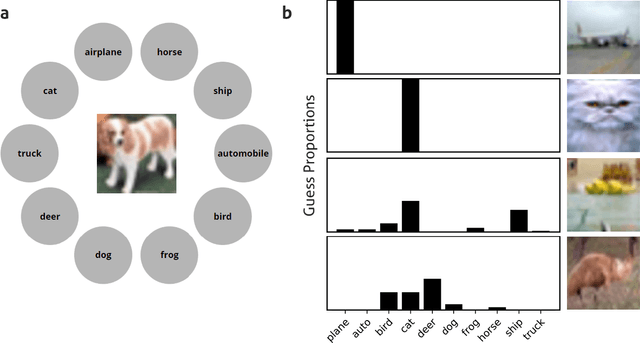

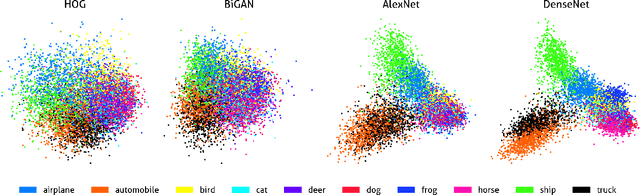

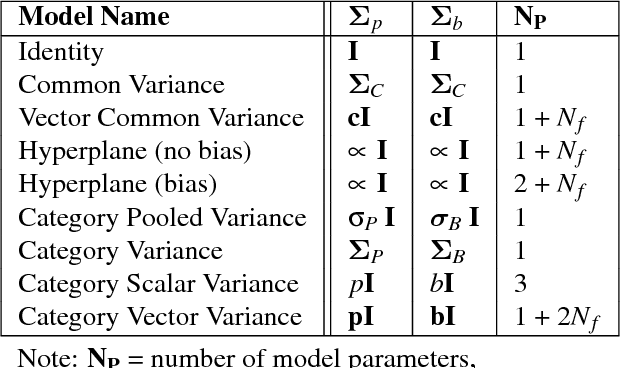

Capturing human categorization of natural images at scale by combining deep networks and cognitive models

Apr 26, 2019

Abstract: Human categorization is one of the most important and successful targets of cognitive modeling in psychology, yet decades of development and assessment of competing models have been contingent on small sets of simple, artificial experimental stimuli. Here we extend this modeling paradigm to the domain of natural images, revealing the crucial role that stimulus representation plays in categorization and its implications for conclusions about how people form categories. Applying psychological models of categorization to natural images required two significant advances. First, we conducted the first large-scale experimental study of human categorization, involving over 500,000 human categorization judgments of 10,000 natural images from ten non-overlapping object categories. Second, we addressed the traditional bottleneck of representing high-dimensional images in cognitive models by exploring the best of current supervised and unsupervised deep and shallow machine learning methods. We find that selecting sufficiently expressive, data-driven representations is crucial to capturing human categorization, and using these representations allows simple models that represent categories with abstract prototypes to outperform the more complex memory-based exemplar accounts of categorization that have dominated in studies using less naturalistic stimuli.

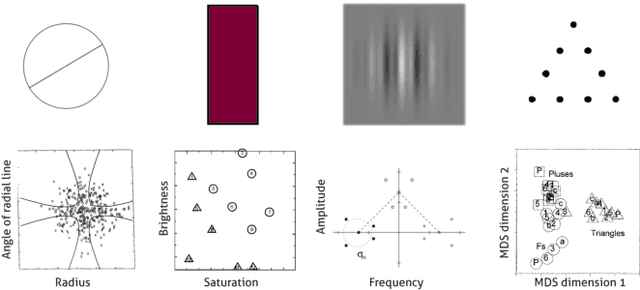

Modeling Human Categorization of Natural Images Using Deep Feature Representations

Nov 13, 2017

Abstract: Over the last few decades, psychologists have developed sophisticated formal models of human categorization using simple artificial stimuli. In this paper, we use modern machine learning methods to extend this work into the realm of naturalistic stimuli, enabling human categorization to be studied over the complex visual domain in which it evolved and developed. We show that representations derived from a convolutional neural network can be used to model behavior over a database of more than 300,000 human natural image classifications, and find that a group of models based on these representations performs well, near the reliability of human judgments. Interestingly, this group includes both exemplar and prototype models, contrasting with the dominance of exemplar models in previous work. We are able to improve the performance of the remaining models by preprocessing neural network representations to more closely capture human similarity judgments.
