Rishabh Saumil Thakkar

Hierarchical Control for Multi-Agent Autonomous Racing

Feb 28, 2022

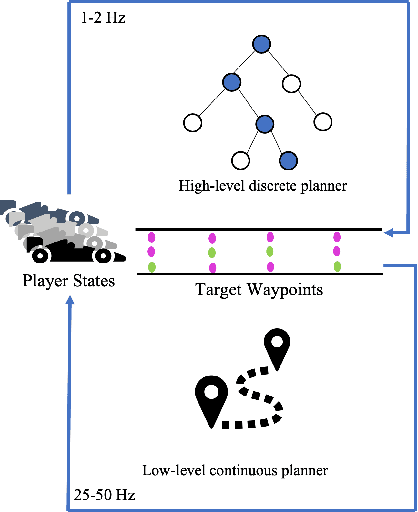

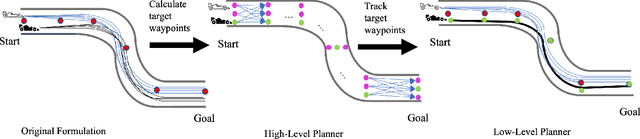

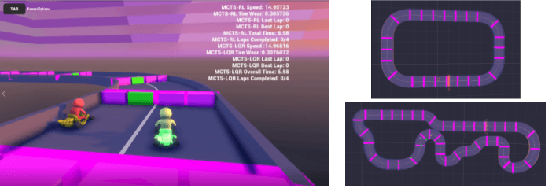

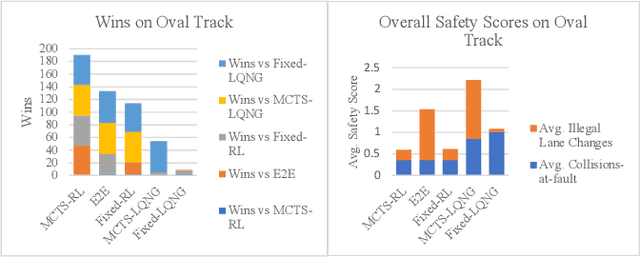

Abstract:We develop a hierarchical controller for multi-agent autonomous racing. A high-level planner approximates the race as a discrete game with simplified dynamics that encodes the complex safety and fairness rules seen in real-life racing and calculates a series of target waypoints. The low-level controller takes the resulting waypoints as a reference trajectory and computes high-resolution control inputs by solving a simplified formulation of a multi-agent racing game. We consider two approaches for the low-level planner to construct two hierarchical controllers. One approach uses multi-agent reinforcement learning (MARL), and the other solves a linear-quadratic Nash game (LQNG) to produce control inputs. We test the controllers against three baselines: an end-to-end MARL controller, a MARL controller tracking a fixed racing line, and an LQNG controller tracking a fixed racing line. Quantitative results show that the proposed hierarchical methods outperform their respective baseline methods in terms of head-to-head race wins and abiding by the rules. The hierarchical controller using MARL for low-level control consistently outperformed all other methods by winning over 88% of head-to-head races and more consistently adhered to the complex racing rules. Qualitatively, we observe the proposed controllers mimicking actions performed by expert human drivers such as shielding/blocking, overtaking, and long-term planning for delayed advantages. We show that hierarchical planning for game-theoretic reasoning produces competitive behavior even when challenged with complex rules and constraints.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge