Riccardo Bertoglio

A Map-Free LiDAR-Based System for Autonomous Navigation in Vineyards

Jul 06, 2023

Abstract: Agricultural robots have the potential to increase production yields and reduce costs by performing repetitive and time-consuming tasks. However, for robots to be effective, they must be able to navigate autonomously in fields or orchards without human intervention. In this paper, we introduce a navigation system that uses LiDAR and wheel encoder sensors for in-row, turn, and end-row navigation in row-structured agricultural environments, such as vineyards. Our approach exploits the simple and precise geometrical structure of plants organized in parallel rows. We tested our system in both simulated and real environments, and the results demonstrate the effectiveness of our approach in achieving accurate and robust navigation. Our navigation system achieves mean displacement errors from the center line of 0.049 m and 0.372 m for in-row navigation in the simulated and real environments, respectively. In addition, we developed an end-row point detection method that enables end-row navigation in vineyards, a task that most prior work ignores.
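
The displacement-from-center-line metric reported above can be illustrated with a minimal sketch. This is not the authors' implementation: it assumes, purely for illustration, that LiDAR points have already been split into a left and a right row in the robot frame (x forward, y to the left), fits a least-squares line to each row, and approximates the center line by averaging the two fits, which is reasonable only when the rows are nearly parallel. All function names are hypothetical.

```python
from statistics import mean


def fit_line(points):
    """Least-squares fit of y = m*x + b to a list of (x, y) points."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in points)
    m = sxy / sxx  # assumes the points are not all at the same x
    return m, y_bar - m * x_bar


def center_line(left_points, right_points):
    """Average the two row fits (an approximation for near-parallel rows)."""
    m_left, b_left = fit_line(left_points)
    m_right, b_right = fit_line(right_points)
    return (m_left + m_right) / 2, (b_left + b_right) / 2


def lateral_offset(left_points, right_points):
    """Perpendicular distance from the robot origin (0, 0) to the center line."""
    m, b = center_line(left_points, right_points)
    return abs(b) / (1 + m * m) ** 0.5


def mean_displacement_error(offsets):
    """Mean absolute displacement over a sequence of per-scan offsets."""
    return mean(abs(o) for o in offsets)


# Hypothetical scan: left row 1.2 m to the left, right row 0.8 m to the right.
left = [(x, 1.2) for x in (0.0, 1.0, 2.0, 3.0)]
right = [(x, -0.8) for x in (0.0, 1.0, 2.0, 3.0)]
offset = lateral_offset(left, right)  # approximately 0.2 m
```

Averaging `lateral_offset` over every scan of a run with `mean_displacement_error` yields a single figure comparable in kind to the mean errors quoted in the abstract.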

Surgical fine-tuning for Grape Bunch Segmentation under Visual Domain Shifts

Jul 03, 2023

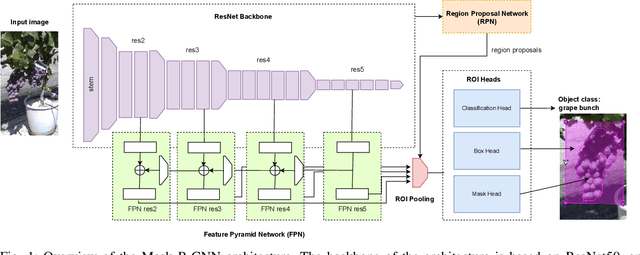

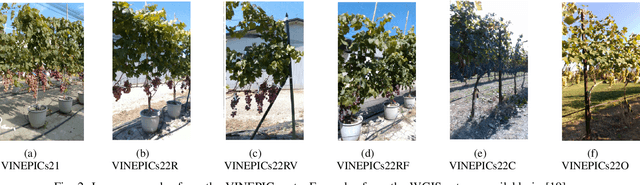

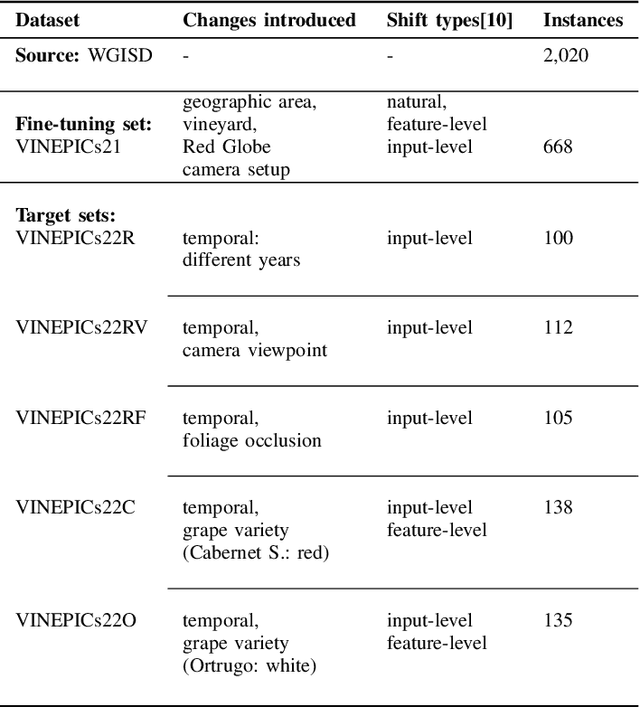

Abstract: Mobile robots will play a crucial role in the transition towards sustainable agriculture. To autonomously and effectively monitor the state of plants, robots ought to be equipped with visual perception capabilities that are robust to the rapid changes that characterise agricultural settings. In this paper, we focus on the challenging task of segmenting grape bunches from images collected by mobile robots in vineyards. In this context, we present the first study that applies surgical fine-tuning to instance segmentation tasks. We show how selectively tuning only specific model layers can support the adaptation of pre-trained Deep Learning models to newly collected grape images that introduce visual domain shifts, while also substantially reducing the number of tuned parameters.
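
The idea of tuning only specific layers can be sketched without any deep learning framework. The snippet below is an illustration under stated assumptions, not the paper's code: the parameter names and element counts are hypothetical, and layers are selected by a simple name prefix. In PyTorch, the same selection would typically be applied by setting `requires_grad` on the entries returned by `model.named_parameters()`.

```python
def select_trainable(param_sizes, tuned_prefixes):
    """Return the parameter names that start with any of the given prefixes."""
    prefixes = tuple(tuned_prefixes)
    return [name for name in param_sizes if name.startswith(prefixes)]


def tuned_fraction(param_sizes, trainable):
    """Fraction of all parameter elements that would be updated."""
    total = sum(param_sizes.values())
    tuned = sum(param_sizes[name] for name in trainable)
    return tuned / total


# Hypothetical model: parameter name -> number of elements.
param_sizes = {
    "backbone.layer1.weight": 4000,
    "backbone.layer2.weight": 8000,
    "backbone.layer3.weight": 16000,
    "head.mask.weight": 2000,
}

# Tune only the first backbone block; everything else stays frozen.
trainable = select_trainable(param_sizes, ["backbone.layer1"])
fraction = tuned_fraction(param_sizes, trainable)
```

Which block to tune is the experimental question; the sketch only shows how restricting updates to one block reduces the count of tuned parameters relative to full fine-tuning.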
