Raphael Norman-Tenazas

Large Language Models are Highly Aligned with Human Ratings of Emotional Stimuli

Aug 19, 2025

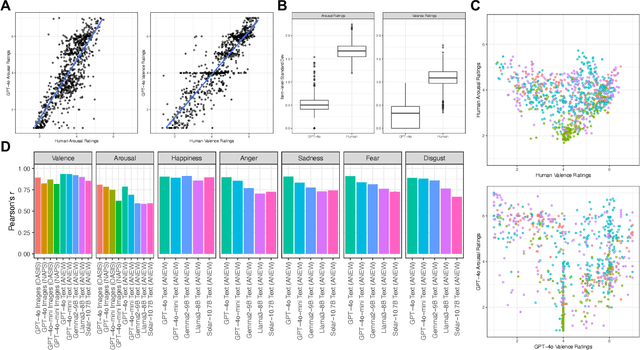

Abstract: Emotions exert an immense influence over human behavior and cognition in both commonplace and high-stress tasks. Discussions of whether or how to integrate large language models (LLMs) into everyday life (e.g., acting as proxies for, or interacting with, human agents) should be informed by an understanding of how these tools evaluate emotionally loaded stimuli or situations. A model's alignment with human behavior in these cases can inform the effectiveness of LLMs for certain roles or interactions. To help build this understanding, we elicited ratings from multiple popular LLMs for datasets of words and images that had previously been rated for their emotional content by humans. We found that, when performing the same rating tasks, GPT-4o responded very similarly to human participants across modalities, stimuli, and most rating scales (r = 0.9 or higher in many cases). However, arousal ratings were less well aligned between human and LLM raters, while happiness ratings were the most highly aligned. Overall, LLMs aligned better within a five-category (happiness, anger, sadness, fear, disgust) emotion framework than within a two-dimensional (arousal and valence) organization. Finally, LLM ratings were substantially more homogeneous than human ratings. Together, these results begin to describe how LLM agents interpret emotional stimuli and highlight similarities and differences between biological and artificial intelligence in key behavioral domains.
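The two quantities the abstract reports, human-LLM alignment (r) and rating homogeneity, can be illustrated with a short sketch. It assumes that r is a Pearson correlation computed over per-stimulus mean ratings and that homogeneity is measured as the average within-stimulus standard deviation across raters (or repeated model queries); the abstract states neither detail. The function names and ratings below are hypothetical toy values, not data from the study.

```python
import statistics

def pearson_r(xs, ys):
    """Pearson correlation between two equal-length rating sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5

def per_stimulus_means(ratings_by_stimulus):
    """Collapse each stimulus's list of individual ratings to its mean."""
    return [statistics.fmean(r) for r in ratings_by_stimulus]

def mean_within_stimulus_sd(ratings_by_stimulus):
    """Average spread (sample SD) of ratings for the same stimulus (assumed homogeneity measure)."""
    return statistics.fmean(statistics.stdev(r) for r in ratings_by_stimulus)

# Hypothetical toy ratings on a 1-9 scale: one inner list per stimulus.
human = [[6, 7, 5], [2, 3, 1], [4, 6, 5]]  # three human participants
llm = [[6, 6, 6], [2, 2, 2], [5, 5, 4]]    # three repeated model queries

alignment = pearson_r(per_stimulus_means(human), per_stimulus_means(llm))
print(f"alignment r = {alignment:.3f}")
print("human SD:", mean_within_stimulus_sd(human), "LLM SD:", mean_within_stimulus_sd(llm))
```

A lower mean within-stimulus SD for the model than for humans is what "more homogeneous" would look like under this assumed measure.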

Using evolutionary computation to optimize task performance of unclocked, recurrent Boolean circuits in FPGAs

Mar 19, 2024

Abstract: It has been shown that unclocked, recurrent networks of Boolean gates in FPGAs can be used for low-SWaP (size, weight, and power) reservoir computing. In such systems, the topology and node functionality of the network are randomly initialized. To create a network that solves a task, weights are applied to the output nodes, and learning is achieved by adjusting those weights with conventional machine learning methods. However, performance is often limited compared with networks in which all parameters are learned. Herein, we explore an alternative learning approach for unclocked, recurrent networks in FPGAs: we use evolutionary computation to evolve the Boolean functions of the network nodes. In one type of implementation, the output nodes are used directly to perform a task, and all learning is via evolution of the network's node functions. In a second type of implementation, a back-end classifier is used as in traditional reservoir computing; in that case, both evolution of node functions and adjustment of output node weights contribute to learning. We demonstrate the practicality of node function evolution, obtaining an accuracy improvement of ~30% on an image classification task while processing over three million samples per second. We additionally demonstrate the evolvability of network memory and dynamic output signals.
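The idea of evolving node functions rather than output weights can be illustrated with a toy software model. This is a minimal sketch under several assumptions that are not from the abstract: each node is a 2^k-entry truth table with fixed random wiring; the unclocked FPGA dynamics are replaced by a synchronous software update (real unclocked circuits settle asynchronously and are not reproduced here); only the first implementation type (output node read directly, no back-end classifier) is modeled; and the (1+1)-style elitist mutate-and-keep loop and the XOR target are illustrative choices, not the authors' algorithm. All function names are hypothetical.

```python
import random

def random_network(n_nodes, k, n_inputs, rng):
    """Fixed random topology plus random truth tables (hypothetical model).
    Source indices 0..n_inputs-1 are external inputs; the rest are node states."""
    wiring = [[rng.randrange(n_inputs + n_nodes) for _ in range(k)] for _ in range(n_nodes)]
    tables = [[rng.randint(0, 1) for _ in range(2 ** k)] for _ in range(n_nodes)]
    return wiring, tables

def run(wiring, tables, inputs, steps):
    """Synchronous update: a software stand-in for unclocked FPGA dynamics."""
    state = [0] * len(tables)
    for _ in range(steps):
        sources = list(inputs) + state
        new_state = []
        for srcs, table in zip(wiring, tables):
            index = 0
            for s in srcs:  # pack the k input bits into a truth-table index
                index = (index << 1) | sources[s]
            new_state.append(table[index])
        state = new_state
    return state

def fitness(wiring, tables, dataset, out_node, steps):
    """Fraction of samples for which the output node's bit equals the label."""
    hits = sum(run(wiring, tables, x, steps)[out_node] == y for x, y in dataset)
    return hits / len(dataset)

def mutate(tables, rate, rng):
    """Flip each truth-table bit independently with probability `rate`."""
    return [[bit ^ int(rng.random() < rate) for bit in table] for table in tables]

def evolve(wiring, tables, dataset, out_node=0, steps=5, iterations=1600, rate=0.05, seed=0):
    """(1+1)-style elitist loop: keep a mutant whenever it is at least as fit."""
    rng = random.Random(seed)
    best, best_fit = tables, fitness(wiring, tables, dataset, out_node, steps)
    for _ in range(iterations):
        child = mutate(best, rate, rng)
        child_fit = fitness(wiring, child, dataset, out_node, steps)
        if child_fit >= best_fit:
            best, best_fit = child, child_fit
    return best, best_fit

# Toy task: the output node should report XOR of two external input bits.
rng = random.Random(1)
wiring, tables = random_network(n_nodes=8, k=2, n_inputs=2, rng=rng)
xor_data = [((a, b), a ^ b) for a in (0, 1) for b in (0, 1)]
start = fitness(wiring, tables, xor_data, out_node=0, steps=5)
evolved, final = evolve(wiring, tables, xor_data)
print(f"accuracy before: {start:.2f}, after: {final:.2f}")
```

Only the truth tables change; the wiring stays fixed, mirroring the distinction between node functionality and topology. Because a worse mutant is never accepted, the final accuracy can never fall below the starting accuracy.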

Exploiting Large Neuroimaging Datasets to Create Connectome-Constrained Approaches for more Robust, Efficient, and Adaptable Artificial Intelligence

May 26, 2023

Abstract: Despite the progress in deep learning networks, efficient learning at the edge (enabling adaptable, low-complexity machine learning solutions) remains a critical need for defense and commercial applications. We envision a pipeline that uses large neuroimaging datasets, including maps of the brain that capture neuron and synapse connectivity, to improve machine learning approaches. We have pursued several approaches within this pipeline structure. First, as a demonstration of data-driven discovery, the team developed a technique for discovering repeated subcircuits, or motifs; these were incorporated into a neural architecture search approach to evolve network architectures. Second, we analyzed the heading direction circuit in the fruit fly, which fuses visual and angular velocity features, to explore augmenting existing computational models with new insight. Our team discovered a novel pattern of connectivity, implemented a new model, and demonstrated sensor fusion on a robotic platform. Third, the team analyzed circuitry for memory formation in the fruit fly connectome, enabling the design of a novel generative replay approach. Finally, the team has begun analyzing connectivity in mammalian cortex to explore potential improvements to transformer networks. These cortical connectivity constraints increased network robustness on the most challenging examples in the CIFAR-10-C computer vision robustness benchmark, while reducing learnable attention parameters by over an order of magnitude. Taken together, these results demonstrate multiple potential approaches to using insight from neural systems to develop robust and efficient machine learning techniques.
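Motif discovery, as in the first approach, is commonly framed as finding small subgraphs that occur more often than in randomized networks with the same degree sequence. The sketch below shows that generic approach, not the team's technique, which the abstract does not describe. It counts one 3-node motif, the feed-forward loop (a->b, b->c, a->c), and compares the count with degree-preserving random rewirings. The function names and the toy edge list are hypothetical, and the input is assumed to have no self-loops or duplicate edges.

```python
import random
import statistics
from collections import defaultdict

def successors(edges):
    """Directed adjacency sets, ignoring self-loops."""
    succ = defaultdict(set)
    for a, b in edges:
        if a != b:
            succ[a].add(b)
    return succ

def count_feed_forward_loops(edges):
    """Count node triples wired a->b, b->c and a->c (the feed-forward loop motif)."""
    succ = successors(edges)
    count = 0
    for a in list(succ):
        for b in succ[a]:
            for c in succ.get(b, ()):
                if c != a and c in succ[a]:
                    count += 1
    return count

def degree_preserving_shuffle(edges, n_swaps, rng):
    """Randomize wiring by swapping edge targets, preserving every in- and out-degree.
    Assumes the input has no self-loops or duplicate edges."""
    edges = list(edges)
    edge_set = set(edges)
    for _ in range(n_swaps):
        i, j = rng.randrange(len(edges)), rng.randrange(len(edges))
        (a, b), (c, d) = edges[i], edges[j]
        # Reject swaps that would create self-loops or duplicate edges.
        if len({a, b, c, d}) < 4 or (a, d) in edge_set or (c, b) in edge_set:
            continue
        edge_set -= {(a, b), (c, d)}
        edge_set |= {(a, d), (c, b)}
        edges[i], edges[j] = (a, d), (c, b)
    return edges

def motif_z_score(edges, n_random=200, seed=0):
    """Observed motif count versus degree-matched random networks."""
    rng = random.Random(seed)
    observed = count_feed_forward_loops(edges)
    null = [count_feed_forward_loops(degree_preserving_shuffle(edges, 10 * len(edges), rng))
            for _ in range(n_random)]
    mu, sd = statistics.fmean(null), statistics.pstdev(null)
    z = (observed - mu) / sd if sd > 0 else None  # None: null model has no spread
    return observed, mu, z

# Toy directed graph (hypothetical, not connectome data).
toy_edges = [(0, 1), (1, 2), (0, 2), (2, 3), (3, 4), (2, 4), (4, 5), (5, 0), (1, 3)]
observed, expected, z = motif_z_score(toy_edges)
print(observed, round(expected, 2), z)
```

A large positive z-score would flag the motif as over-represented relative to wiring that has the same per-node connection counts; on a real connectome the same counting would be run over all motif types of interest.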
