Raffaele Gaetano

EVERGREEN

Geographical Context Matters: Bridging Fine and Coarse Spatial Information to Enhance Continental Land Cover Mapping

Apr 16, 2025

Abstract: Land use and land cover mapping from Earth Observation (EO) data is a critical tool for sustainable land and resource management. While advanced machine learning and deep learning algorithms excel at analyzing EO imagery data, they often overlook crucial geospatial metadata information that could enhance scalability and accuracy across regional, continental, and global scales. To address this limitation, we propose BRIDGE-LC (Bi-level Representation Integration for Disentangled GEospatial Land Cover), a novel deep learning framework that integrates multi-scale geospatial information into the land cover classification process. By simultaneously leveraging fine-grained (latitude/longitude) and coarse-grained (biogeographical region) spatial information, our lightweight multi-layer perceptron architecture learns from both during training but only requires fine-grained information for inference, allowing it to disentangle region-specific from region-agnostic land cover features while maintaining computational efficiency. To assess the quality of our framework, we use an open-access in-situ dataset and adopt several competing classification approaches commonly considered for large-scale land cover mapping. We evaluate all approaches through two scenarios: an extrapolation scenario in which training data encompasses samples from all biogeographical regions, and a leave-one-region-out scenario where one region is excluded from training. We also explore the spatial representation learned by our model, highlighting a connection between its internal manifold and the geographical information used during training. Our results demonstrate that integrating geospatial information improves land cover mapping performance, with the most substantial gains achieved by jointly leveraging both fine- and coarse-grained spatial information.

Semi Supervised Heterogeneous Domain Adaptation via Disentanglement and Pseudo-Labelling

Jun 20, 2024

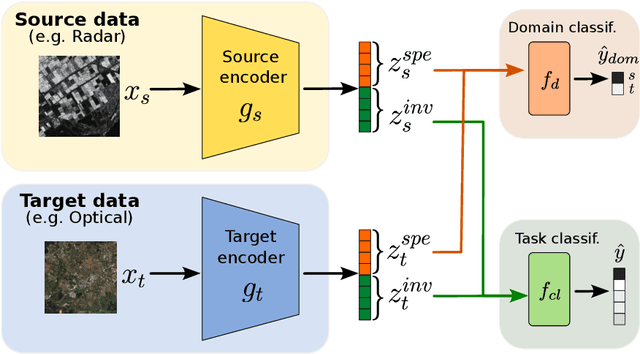

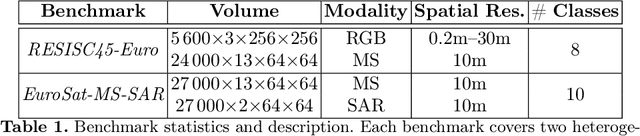

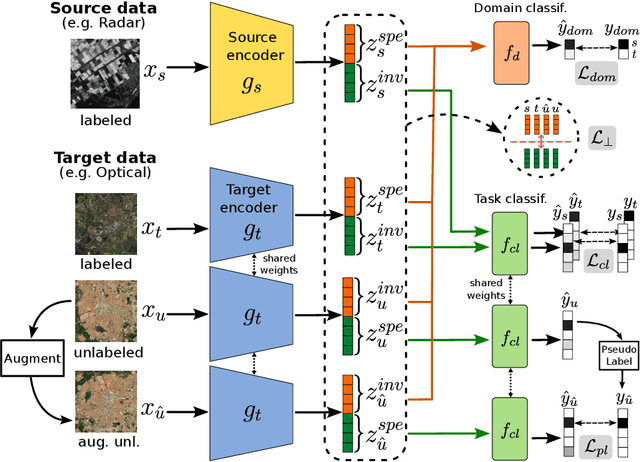

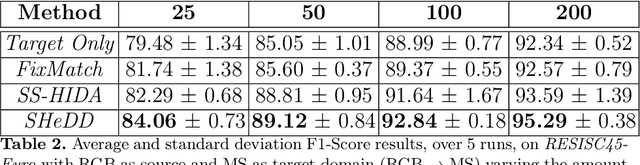

Abstract: Semi-supervised domain adaptation methods leverage information from a labelled source domain with the goal of generalizing over a scarcely labelled target domain. While this setting already poses challenges due to potential distribution shifts between domains, an even more complex scenario arises when source and target data differ in modality representation (e.g. they are acquired by sensors with different characteristics). For instance, in remote sensing, images may be collected via various acquisition modes (e.g. optical or radar), with different spectral characteristics (e.g. RGB or multi-spectral) and spatial resolutions. Such a setting is denoted as Semi-Supervised Heterogeneous Domain Adaptation (SSHDA), and it exhibits an even more severe distribution shift due to modality heterogeneity across domains. To cope with the challenging SSHDA setting, we introduce SHeDD (Semi-supervised Heterogeneous Domain Adaptation via Disentanglement), an end-to-end neural framework tailored to learning a target domain classifier by leveraging both labelled and unlabelled data from heterogeneous data sources. SHeDD is designed to effectively disentangle domain-invariant representations, relevant for the downstream task, from domain-specific information that can hinder the cross-modality transfer. Additionally, SHeDD adopts an augmentation-based consistency regularization mechanism that takes advantage of reliable pseudo-labels on the unlabelled target samples to further boost its generalization ability on the target domain. Empirical evaluations on two remote sensing benchmarks, encompassing heterogeneous data in terms of acquisition modes and spectral/spatial resolutions, demonstrate the quality of SHeDD compared to both baseline and state-of-the-art competing approaches. Our code is publicly available here: https://github.com/tanodino/SSHDA/
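The idea of keeping only "reliable" pseudo-labels on unlabelled target samples can be illustrated with a minimal sketch. It assumes a simple confidence threshold on softmax probabilities, which is a common choice in consistency-regularization methods; the abstract does not state how SHeDD defines reliability, so the function names and the `threshold` value below are hypothetical and are not taken from the authors' repository.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over one vector of class scores."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def select_pseudo_labels(
    batch_logits: Sequence[Sequence[float]],
    threshold: float = 0.95,  # assumed value, not from the paper
) -> List[Tuple[int, int]]:
    """Return (sample_index, predicted_class) pairs for confident predictions.

    A prediction counts as reliable here when its highest class
    probability is at least `threshold`; all other samples are skipped.
    """
    selected = []
    for i, logits in enumerate(batch_logits):
        probs = softmax(logits)
        best = max(range(len(probs)), key=probs.__getitem__)
        if probs[best] >= threshold:
            selected.append((i, best))
    return selected


if __name__ == "__main__":
    # Hypothetical classifier outputs for three unlabelled target samples.
    logits = [[10.0, 0.0, 0.0], [0.1, 0.0, 0.2], [0.0, 8.0, 0.0]]
    print(select_pseudo_labels(logits))
```

In a consistency-regularization setup, the selected class indices would typically serve as training targets for an augmented view of the same samples, while the low-confidence samples contribute no loss.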

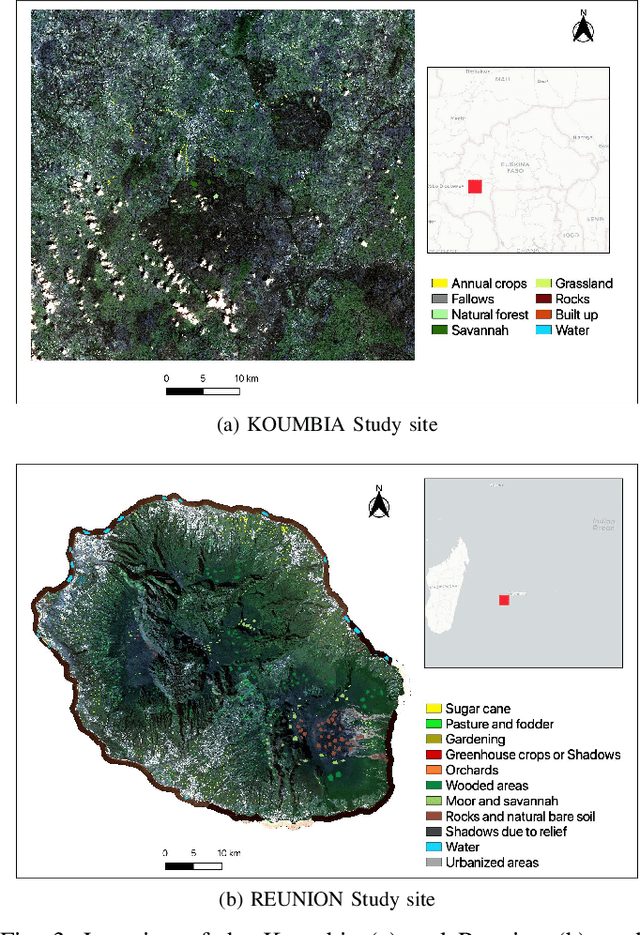

Reuse out-of-year data to enhance land cover mapping via feature disentanglement and contrastive learning

Apr 17, 2024

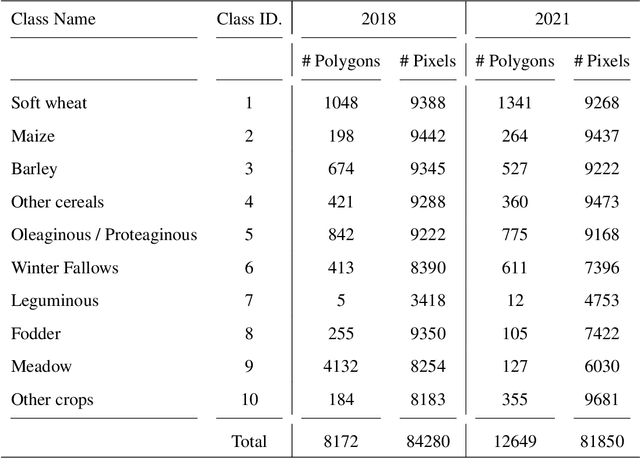

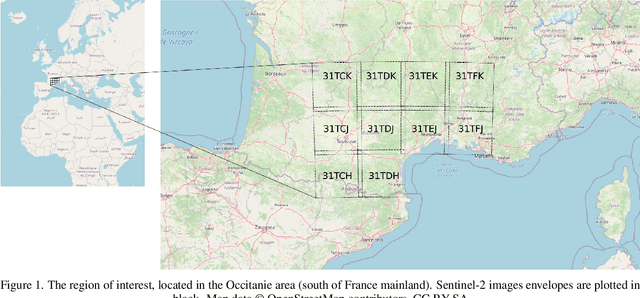

Abstract: Timely, up-to-date land use/land cover (LULC) maps play a pivotal role in supporting agricultural territory management, environmental monitoring and well-informed, sustainable decision-making. Typically, when creating a land cover (LC) map, precise ground truth data is collected through time-consuming and expensive field campaigns. This data is then used in conjunction with satellite image time series (SITS) through advanced machine learning algorithms to produce the final map. Unfortunately, each time this process is repeated (e.g., annually over a region to estimate agricultural production or potential biodiversity loss), new ground truth data must be collected, leading to the complete disregard of previously gathered reference data despite the substantial financial and time investment they required. How to derive value from historical data, from the same or similar study sites, to enhance the current LULC mapping process constitutes a significant challenge; addressing it would allow the financial and human-resource efforts invested in previous data campaigns to be valued again. To tackle this challenge, we propose a deep learning framework based on recent advances in domain adaptation and generalization that combines remote sensing and reference data coming from two different domains (e.g. historical data and newly collected data) to improve the current LC mapping process. Our approach, namely REFeD (data Reuse with Effective Feature Disentanglement for land cover mapping), leverages a disentanglement strategy, based on contrastive learning, where invariant and specific per-domain features are derived to recover the intrinsic information related to the downstream LC mapping task and alleviate possible distribution shifts between domains. Additionally, REFeD is equipped with an effective supervision scheme where feature disentanglement is further enforced via multiple levels of supervision at different granularities.
The experimental assessment over two study areas covering extremely diverse and contrasted landscapes, namely Koumbia (located in West Africa, in Burkina Faso) and Centre Val de Loire (located in central Europe, in France), underlines the quality of our framework. The findings demonstrate that out-of-year information coming from the same (or a similar) study site, at different periods of time, can constitute a valuable additional source of information to enhance the LC mapping process.

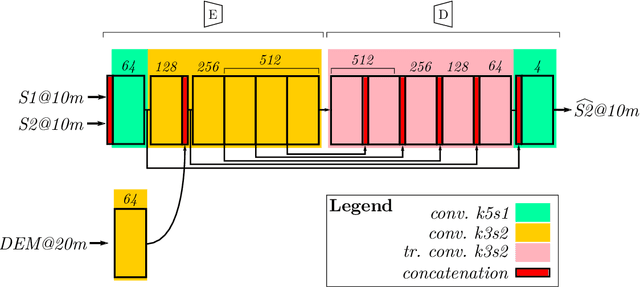

Comparison of convolutional neural networks for cloudy optical images reconstruction from single or multitemporal joint SAR and optical images

Apr 01, 2022

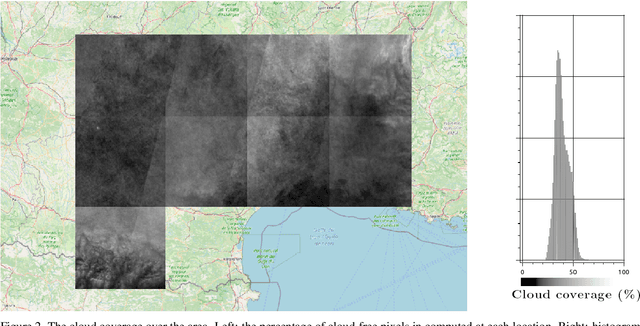

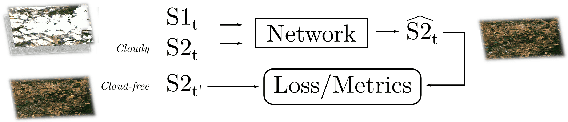

Abstract: With the increasing availability of optical and synthetic aperture radar (SAR) images thanks to the Sentinel constellation, and the explosion of deep learning, new methods have emerged in recent years to tackle the reconstruction of optical images that are impacted by clouds. In this paper, we focus on the evaluation of convolutional neural networks that jointly use SAR and optical images to retrieve the missing contents in a single polluted optical image. We propose a simple framework that eases the creation of datasets for the training of deep nets targeting optical image reconstruction, and for the validation of machine learning based or deterministic approaches. These methods are quite different in terms of input image constraints, and comparing them is a problematic task not addressed in the literature. We show how space partitioning data structures help to query samples in terms of cloud coverage, relative acquisition date, pixel validity and relative proximity between SAR and optical images. We generate several datasets to compare the reconstructed images from networks that use a single pair of SAR and optical images, versus networks that use multiple pairs, and a traditional deterministic approach performing interpolation in the temporal domain.
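The deterministic baseline mentioned at the end of the abstract, interpolation in the temporal domain, can be illustrated for a single pixel with a minimal sketch. It assumes linear interpolation between the nearest cloud-free acquisitions and nearest-value filling at the ends of the series; the abstract does not specify the interpolation scheme used in the paper, and the function name and data layout are hypothetical.

```python
from typing import List, Optional, Sequence


def interpolate_gaps(
    dates: Sequence[float],
    values: Sequence[Optional[float]],
) -> List[Optional[float]]:
    """Fill cloud-masked observations (None) of one pixel over time.

    `dates` must be sorted in ascending order (e.g. day of year).
    Gaps between two valid observations are filled linearly; gaps
    before the first or after the last valid observation take the
    nearest valid value. If no valid observation exists, the series
    is returned unchanged.
    """
    valid = [(d, v) for d, v in zip(dates, values) if v is not None]
    if not valid:
        return list(values)

    filled: List[Optional[float]] = []
    for d, v in zip(dates, values):
        if v is not None:
            filled.append(v)
            continue
        before = [(dv, vv) for dv, vv in valid if dv < d]
        after = [(dv, vv) for dv, vv in valid if dv > d]
        if before and after:
            (d0, v0), (d1, v1) = before[-1], after[0]
            weight = (d - d0) / (d1 - d0)
            filled.append(v0 + weight * (v1 - v0))
        elif before:
            filled.append(before[-1][1])
        else:
            filled.append(after[0][1])
    return filled


if __name__ == "__main__":
    # Hypothetical reflectance series with two cloudy acquisitions.
    days = [0, 5, 10, 20]
    reflectance = [0.2, None, 0.4, None]
    print(interpolate_gaps(days, reflectance))
```

Such a baseline uses only the optical time series itself, which is why it offers a useful contrast with networks that also draw on SAR acquisitions.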

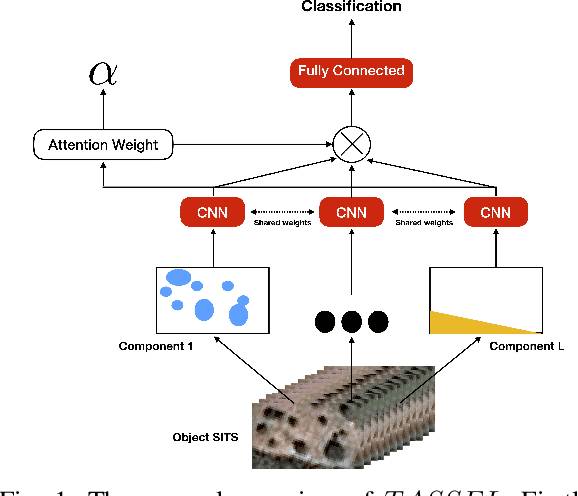

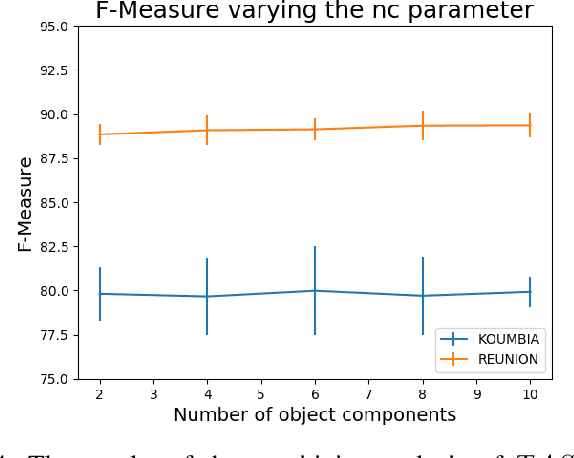

Attentive Weakly Supervised land cover mapping for object-based satellite image time series data with spatial interpretation

Apr 30, 2020

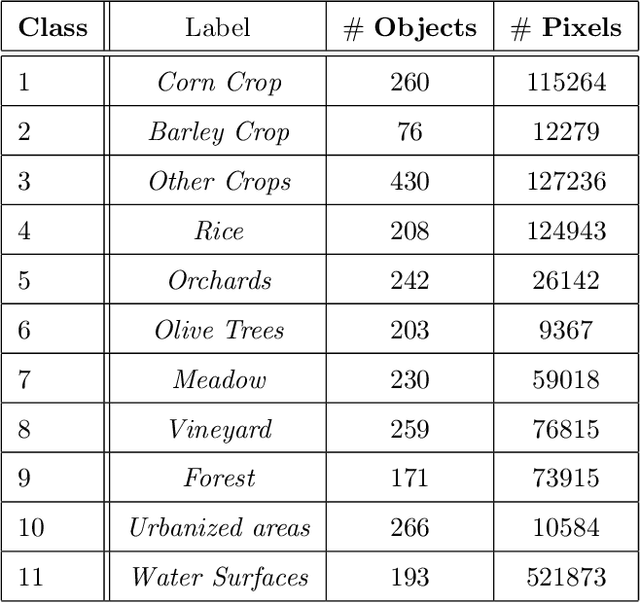

Abstract: Nowadays, modern Earth Observation systems continuously collect massive amounts of satellite information. The unprecedented possibility to acquire high resolution Satellite Image Time Series (SITS) data (series of images with a high revisit frequency over the same geographical area) is opening new opportunities to monitor the different aspects of the Earth Surface but, at the same time, it is raising new challenges in terms of suitable methods to analyze and exploit such a huge amount of rich and complex image data. One of the main tasks associated with SITS data analysis is land cover mapping, where satellite data are exploited via learning methods to recover the Earth Surface status, i.e. the corresponding land cover classes. Due to operational constraints, the collected label information, on which machine learning strategies are trained, is often limited in volume and obtained at coarse granularity, carrying inexact and weak knowledge that can affect the whole process. To cope with such issues, in the context of object-based SITS land cover mapping, we propose a new deep learning framework, named TASSEL (aTtentive weAkly Supervised Satellite image time sEries cLassifier), that is able to intelligently exploit the weak supervision provided by the coarse granularity labels. Furthermore, our framework also produces additional side-information that supports model interpretability, with the aim of making the black box gray. Such side-information allows a spatial interpretation to be associated with the model decision via visual inspection.

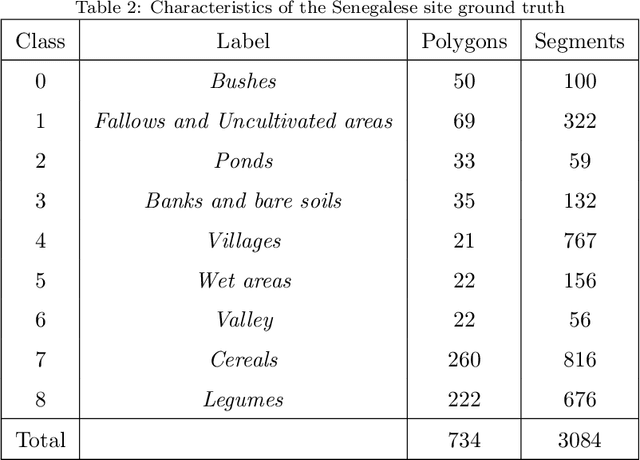

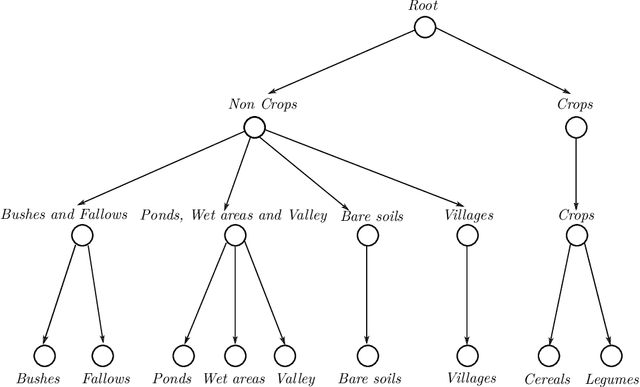

Object-based multi-temporal and multi-source land cover mapping leveraging hierarchical class relationships

Nov 20, 2019

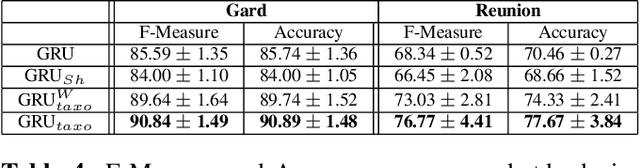

Abstract: European satellite missions Sentinel-1 (S1) and Sentinel-2 (S2) provide radar and optical images, respectively, at high spatial resolution and high revisit time, supporting a wide range of Earth surface monitoring tasks such as Land Use/Land Cover mapping. A long-standing challenge in the remote sensing community is how to efficiently exploit multiple sources of information and leverage their complementarity. In this particular case, the goal is to get the most out of radar and optical satellite image time series (SITS). Here, we propose to deal with land cover mapping through a deep learning framework especially tailored to leverage the multi-source complementarity provided by radar and optical SITS. The proposed architecture is based on an extension of Recurrent Neural Network (RNN) enriched via a customized attention mechanism capable of fitting the specificity of SITS data. In addition, we propose a new pretraining strategy that exploits domain expert knowledge to guide the model parameter initialization. Thorough experimental evaluations involving several machine learning competitors, on two contrasted study sites, have demonstrated the suitability of our new attention mechanism combined with the extended RNN model, as well as the benefits and limits of injecting domain expert knowledge in the neural network training process.

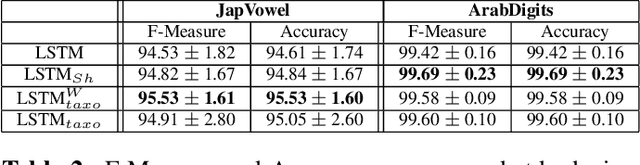

Supervised level-wise pretraining for recurrent neural network initialization in multi-class classification

Nov 04, 2019

Abstract: Recurrent Neural Networks (RNNs) can be seriously impacted by the initial parameter assignment, which may result in poor generalization performance on new unseen data. With the objective to tackle this crucial issue, in the context of RNN based classification, we propose a new supervised layer-wise pretraining strategy to initialize network parameters. The proposed approach leverages a data-aware strategy that sets up a taxonomy of classification problems automatically derived from the model behavior. To the best of our knowledge, despite the great interest in RNN-based classification, this is the first data-aware strategy dealing with the initialization of such models. The proposed strategy has been tested on four benchmarks coming from two different domains, i.e., Speech Recognition and Remote Sensing. Results underline the significance of our approach and point out that data-aware strategies positively support the initialization of Recurrent Neural Network based classification models.

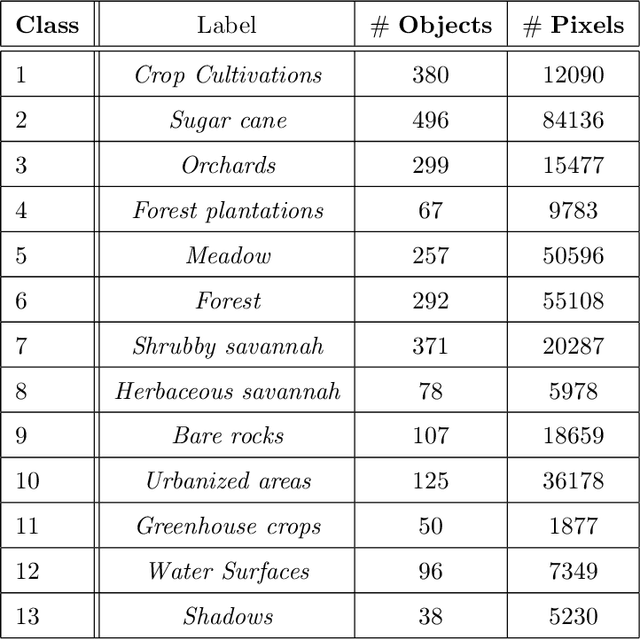

Combining Sentinel-1 and Sentinel-2 Time Series via RNN for object-based land cover classification

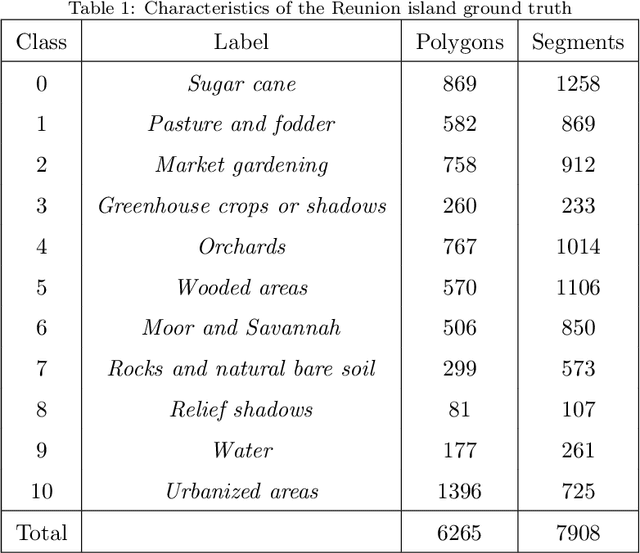

Dec 13, 2018

Abstract: Radar and Optical Satellite Image Time Series (SITS) are sources of information that are commonly employed to monitor earth surfaces for tasks related to ecology, agriculture, mobility, land management planning and land cover monitoring. Many studies have been conducted using one of the two sources, but how to smartly combine the complementary information provided by radar and optical SITS is still an open challenge. In this context, we propose a new neural architecture for the combination of Sentinel-1 (S1) and Sentinel-2 (S2) imagery at object level, applied to a real-world land cover classification task. Experiments carried out on the Reunion Island, an overseas department of France in the Indian Ocean, demonstrate the significance of our proposal.

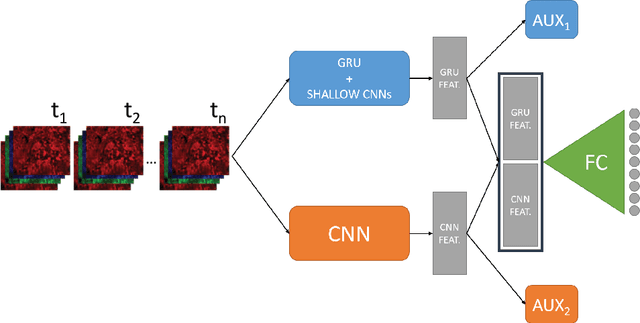

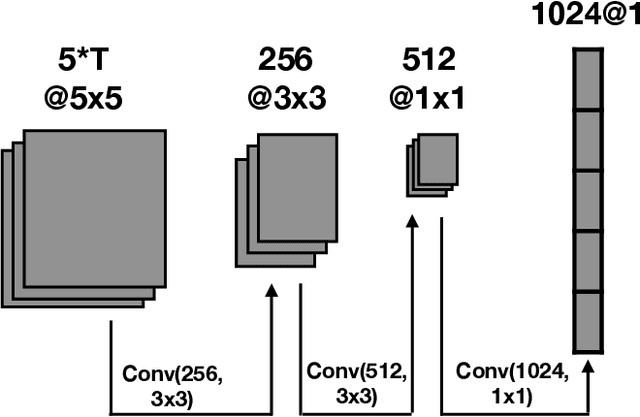

DuPLO: A DUal view Point deep Learning architecture for time series classificatiOn

Sep 20, 2018

Abstract: Nowadays, modern Earth Observation systems continuously generate huge amounts of data. A notable example is represented by the Sentinel-2 mission, which provides images at high spatial resolution (up to 10m) with a high temporal revisit frequency (every 5 days), which can be organized in Satellite Image Time Series (SITS). While the use of SITS has been proved to be beneficial in the context of Land Use/Land Cover (LULC) map generation, unfortunately, machine learning approaches commonly leveraged in the remote sensing field fail to take advantage of spatio-temporal dependencies present in such data. Recently, new generation deep learning methods have significantly advanced research in this field. These approaches have generally focused on a single type of neural network, i.e., Convolutional Neural Networks (CNNs) or Recurrent Neural Networks (RNNs), which model different but complementary information: spatial autocorrelation (CNNs) and temporal dependencies (RNNs). In this work, we propose the first deep learning architecture for the analysis of SITS data, namely DuPLO (DUal view Point deep Learning architecture for time series classificatiOn), that combines Convolutional and Recurrent neural networks to exploit their complementarity. Our hypothesis is that, since CNNs and RNNs capture different aspects of the data, a combination of both models would produce a more diverse and complete representation of the information for the underlying land cover classification task. Experiments carried out on two study sites characterized by different land cover characteristics (i.e., the Gard site in France and the Reunion Island in the Indian Ocean), demonstrate the significance of our proposal.

MRFusion: A Deep Learning architecture to fuse PAN and MS imagery for land cover mapping

Jun 29, 2018

Abstract: Nowadays, Earth Observation systems provide a multitude of heterogeneous remote sensing data. How to manage such richness while leveraging its complementarity is a crucial challenge in modern remote sensing analysis. Data Fusion techniques deal with this point by proposing methods to combine and exploit complementarity among the different data sensors. Considering optical Very High Spatial Resolution (VHSR) images, satellites obtain both Multi Spectral (MS) and panchromatic (PAN) images at different spatial resolutions. VHSR images are extensively exploited to produce land cover maps to deal with agricultural, ecological, and socioeconomic issues as well as assessing ecosystem status, monitoring biodiversity and providing inputs to conceive food risk monitoring systems. Common techniques to produce land cover maps from such VHSR images typically opt for a prior pansharpening of the multi-resolution source for a full resolution processing. Here, we propose a new deep learning architecture to jointly use PAN and MS imagery for a direct classification without any prior image fusion or resampling process. By managing the spectral information at its native spatial resolution, our method, named MRFusion, aims at avoiding the possible information loss induced by pansharpening or any other hand-crafted preprocessing. Moreover, the proposed architecture is suitably designed to learn non-linear transformations of the sources with the explicit aim of taking as much advantage as possible of the complementarity of PAN and MS imagery. Experiments are carried out on two real-world scenarios depicting large areas with different land cover characteristics. The characteristics of the proposed scenarios underline the applicability and the generality of our method in operational settings.
