Rafal Wisniewski

Provably Safe Reinforcement Learning for Stochastic Reach-Avoid Problems with Entropy Regularization

Jan 15, 2026Abstract:We consider the problem of learning the optimal policy for Markov decision processes with safety constraints. We formulate the problem in a reach-avoid setup. Our goal is to design online reinforcement learning algorithms that ensure safety constraints with arbitrarily high probability during the learning phase. To this end, we first propose an algorithm based on the optimism in the face of uncertainty (OFU) principle. Based on the first algorithm, we propose our main algorithm, which utilizes entropy regularization. We investigate the finite-sample analysis of both algorithms and derive their regret bounds. We demonstrate that the inclusion of entropy regularization improves the regret and drastically controls the episode-to-episode variability that is inherent in OFU-based safe RL algorithms.

Provably Safe Reinforcement Learning using Entropy Regularizer

Jan 13, 2026Abstract:We consider the problem of learning the optimal policy for Markov decision processes with safety constraints. We formulate the problem in a reach-avoid setup. Our goal is to design online reinforcement learning algorithms that ensure safety constraints with arbitrarily high probability during the learning phase. To this end, we first propose an algorithm based on the optimism in the face of uncertainty (OFU) principle. Based on the first algorithm, we propose our main algorithm, which utilizes entropy regularization. We investigate the finite-sample analysis of both algorithms and derive their regret bounds. We demonstrate that the inclusion of entropy regularization improves the regret and drastically controls the episode-to-episode variability that is inherent in OFU-based safe RL algorithms.

Safe Reinforcement Learning for Constrained Markov Decision Processes with Stochastic Stopping Time

Mar 23, 2024Abstract:In this paper, we present an online reinforcement learning algorithm for constrained Markov decision processes with a safety constraint. Despite the necessary attention of the scientific community, considering stochastic stopping time, the problem of learning optimal policy without violating safety constraints during the learning phase is yet to be addressed. To this end, we propose an algorithm based on linear programming that does not require a process model. We show that the learned policy is safe with high confidence. We also propose a method to compute a safe baseline policy, which is central in developing algorithms that do not violate the safety constraints. Finally, we provide simulation results to show the efficacy of the proposed algorithm. Further, we demonstrate that efficient exploration can be achieved by defining a subset of the state-space called proxy set.

PAC-Bayes Generalisation Bounds for Dynamical Systems Including Stable RNNs

Dec 15, 2023

Abstract:In this paper, we derive a PAC-Bayes bound on the generalisation gap, in a supervised time-series setting for a special class of discrete-time non-linear dynamical systems. This class includes stable recurrent neural networks (RNN), and the motivation for this work was its application to RNNs. In order to achieve the results, we impose some stability constraints, on the allowed models. Here, stability is understood in the sense of dynamical systems. For RNNs, these stability conditions can be expressed in terms of conditions on the weights. We assume the processes involved are essentially bounded and the loss functions are Lipschitz. The proposed bound on the generalisation gap depends on the mixing coefficient of the data distribution, and the essential supremum of the data. Furthermore, the bound converges to zero as the dataset size increases. In this paper, we 1) formalize the learning problem, 2) derive a PAC-Bayesian error bound for such systems, 3) discuss various consequences of this error bound, and 4) show an illustrative example, with discussions on computing the proposed bound. Unlike other available bounds the derived bound holds for non i.i.d. data (time-series) and it does not grow with the number of steps of the RNN.

PAC-Bayesian-Like Error Bound for a Class of Linear Time-Invariant Stochastic State-Space Models

Dec 30, 2022Abstract:In this paper we derive a PAC-Bayesian-Like error bound for a class of stochastic dynamical systems with inputs, namely, for linear time-invariant stochastic state-space models (stochastic LTI systems for short). This class of systems is widely used in control engineering and econometrics, in particular, they represent a special case of recurrent neural networks. In this paper we 1) formalize the learning problem for stochastic LTI systems with inputs, 2) derive a PAC-Bayesian-Like error bound for such systems, 3) discuss various consequences of this error bound.

Privacy-Preserving Distributed Expectation Maximization for Gaussian Mixture Model using Subspace Perturbation

Sep 16, 2022

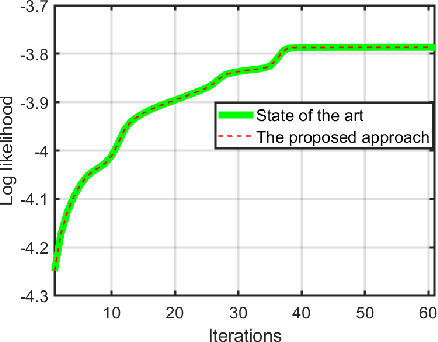

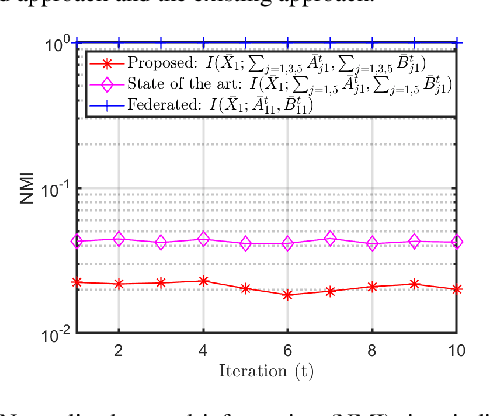

Abstract:Privacy has become a major concern in machine learning. In fact, the federated learning is motivated by the privacy concern as it does not allow to transmit the private data but only intermediate updates. However, federated learning does not always guarantee privacy-preservation as the intermediate updates may also reveal sensitive information. In this paper, we give an explicit information-theoretical analysis of a federated expectation maximization algorithm for Gaussian mixture model and prove that the intermediate updates can cause severe privacy leakage. To address the privacy issue, we propose a fully decentralized privacy-preserving solution, which is able to securely compute the updates in each maximization step. Additionally, we consider two different types of security attacks: the honest-but-curious and eavesdropping adversary models. Numerical validation shows that the proposed approach has superior performance compared to the existing approach in terms of both the accuracy and privacy level.

Optimal Prediction of Unmeasured Output from Measurable Outputs In LTI Systems

Sep 06, 2021

Abstract:In this short article, we showcase the derivation of an optimal predictor, when one part of system's output is not measured but is able to be predicted from the rest of the system's output which is measured. According to author's knowledge, similar derivations have been done before but not in state-space representation.

PAC-Bayesian theory for stochastic LTI systems

Mar 25, 2021

Abstract:In this paper we derive a PAC-Bayesian error bound for autonomous stochastic LTI state-space models. The motivation for deriving such error bounds is that they will allow deriving similar error bounds for more general dynamical systems, including recurrent neural networks. In turn, PACBayesian error bounds are known to be useful for analyzing machine learning algorithms and for deriving new ones.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge