Quynh Ngoc Thi Do

Cross-lingual transfer learning for spoken language understanding

Apr 03, 2019

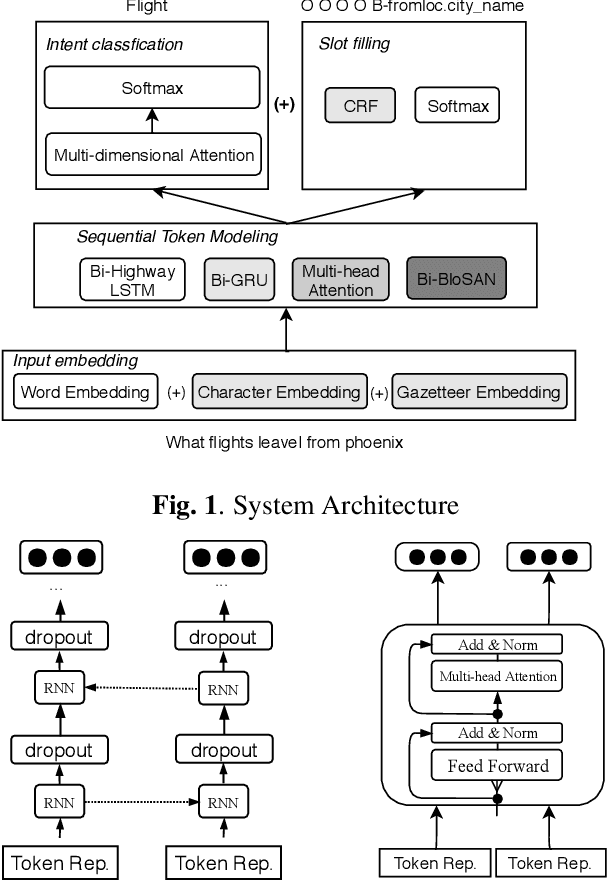

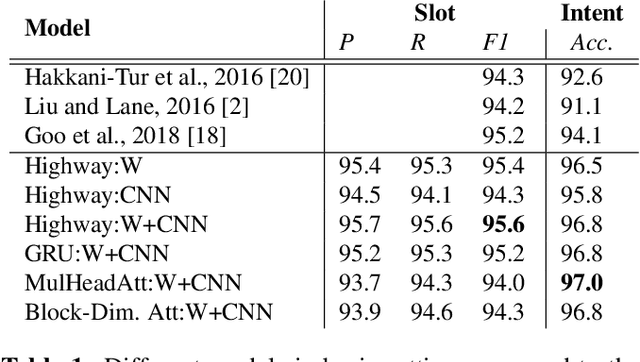

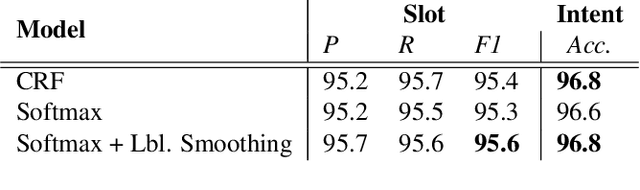

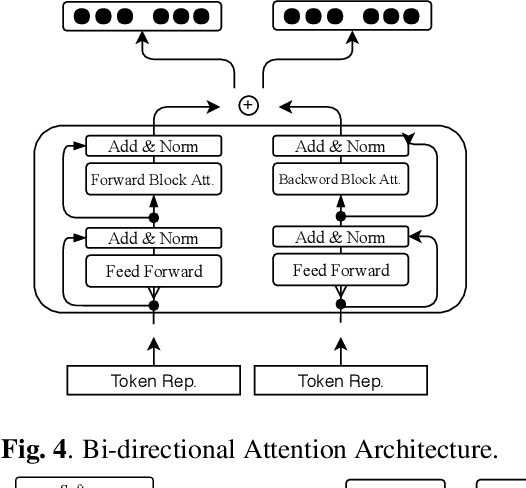

Abstract: Typically, spoken language understanding (SLU) models are trained on annotated data, which are costly to gather. Aiming to reduce the data needed to bootstrap an SLU system for a new language, we present a simple but effective weight transfer approach using data from another language. The approach is evaluated with our promising multi-task SLU framework, developed for different languages. We evaluate our approach on ATIS and a real-world SLU dataset, showing that i) our monolingual models outperform the state of the art, ii) we can greatly reduce the amount of data needed to bootstrap an SLU system for a new language, and iii) while multi-task training improves over separate training, different weight transfer settings may work best for different SLU modules.
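As a rough illustration of the weight transfer idea described in this abstract, the sketch below initializes selected layers of a target-language model from a source-language model. The abstract does not say which layers are transferred or how the models are stored, so the layer names (`encoder`, `intent_head`, `embeddings`), the plain-dictionary parameter format, and the helper `transfer_weights` are all hypothetical.

```python
import copy

def transfer_weights(source_params, target_params, transfer_names):
    """Return a copy of target_params with the named layers initialized
    from source_params. Layers are skipped when they are missing from
    either model or when their sizes differ."""
    result = copy.deepcopy(target_params)
    for name in transfer_names:
        if name not in source_params or name not in result:
            continue
        src, tgt = source_params[name], result[name]
        if len(src) == len(tgt):  # copy only when the sizes match
            result[name] = list(src)
    return result

# Hypothetical parameters: flat lists stand in for weight tensors.
source = {
    "encoder": [0.5, -0.2, 0.1],
    "intent_head": [0.3, 0.7],
    "embeddings": [0.1] * 4,   # source-language vocabulary of size 4
}
target = {
    "encoder": [0.0, 0.0, 0.0],
    "intent_head": [0.0, 0.0],
    "embeddings": [0.0] * 6,   # target-language vocabulary of size 6
}

initialized = transfer_weights(source, target, ["encoder", "embeddings"])
```

Here only `encoder` is copied: `embeddings` is skipped because the two vocabularies differ in size, and `intent_head` was not requested. Choosing a different `transfer_names` list per module is one simple way to try the different transfer settings the abstract mentions.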

Improving Implicit Semantic Role Labeling by Predicting Semantic Frame Arguments

Oct 05, 2017

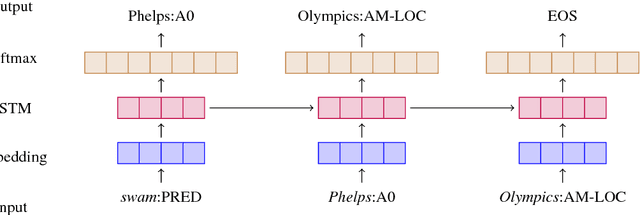

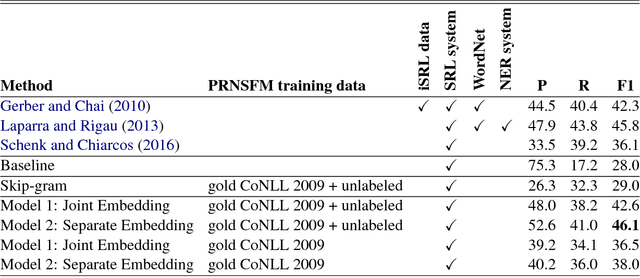

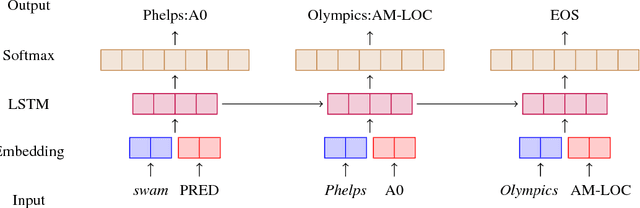

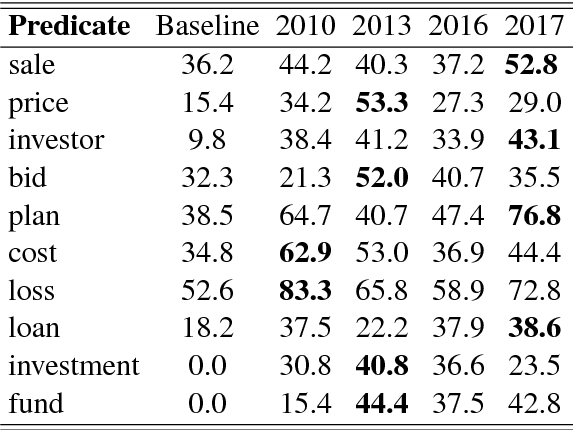

Abstract: Implicit semantic role labeling (iSRL) is the task of predicting the semantic roles of a predicate that do not appear as explicit arguments, but rather regard common sense knowledge or are mentioned earlier in the discourse. We introduce an approach to iSRL based on a predictive recurrent neural semantic frame model (PRNSFM) that uses a large unannotated corpus to learn the probability of a sequence of semantic arguments given a predicate. We leverage the sequence probabilities predicted by the PRNSFM to estimate selectional preferences for predicates and their arguments. On the NomBank iSRL test set, our approach improves state-of-the-art performance on implicit semantic role labeling with less reliance than prior work on manually constructed language resources.
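The idea of scoring candidate fillers by the probability of an argument sequence given a predicate can be sketched as follows. This is not the PRNSFM itself: a small hand-written lookup table with a first-order dependency on the previous argument stands in for the recurrent model, and every probability, label, and function name here is a made-up assumption for illustration.

```python
import math

# Hypothetical toy table standing in for a learned model:
# P(next_arg | predicate, previous_arg). All values are invented.
COND_PROBS = {
    ("sell", "<start>", "A0:company"): 0.6,
    ("sell", "<start>", "A0:price"): 0.1,
    ("sell", "A0:company", "A1:shares"): 0.5,
    ("sell", "A0:price", "A1:shares"): 0.2,
}

def sequence_log_prob(predicate, args, floor=1e-6):
    """Log-probability of an argument sequence given a predicate,
    factorized by the chain rule; unseen events get a small floor."""
    prev = "<start>"
    total = 0.0
    for arg in args:
        total += math.log(COND_PROBS.get((predicate, prev, arg), floor))
        prev = arg
    return total

def best_filler(predicate, role, candidates, rest):
    """Pick the candidate whose insertion into the role yields the
    most probable argument sequence (a simple selectional preference)."""
    return max(
        candidates,
        key=lambda c: sequence_log_prob(predicate, [f"{role}:{c}"] + rest),
    )

choice = best_filler("sell", "A0", ["company", "price"], ["A1:shares"])
```

With this toy table, `choice` is `"company"`, since the sequence with `A0:company` has probability 0.6 × 0.5 = 0.3 versus 0.1 × 0.2 = 0.02 for `A0:price`.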
