Qishen Chen

Context-based Transfer and Efficient Iterative Learning for Unbiased Scene Graph Generation

Dec 29, 2023

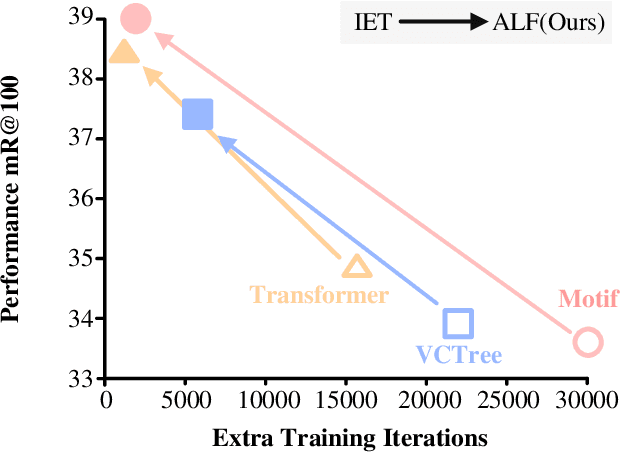

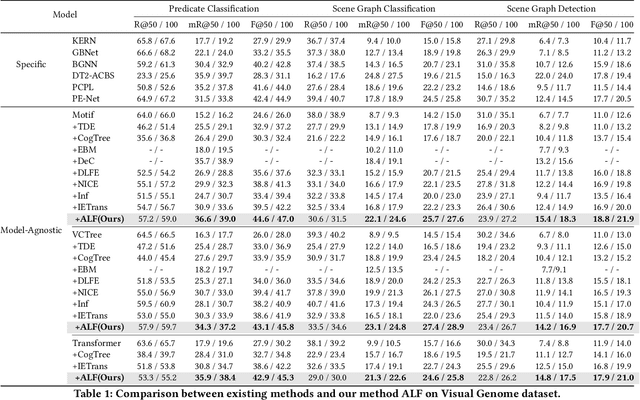

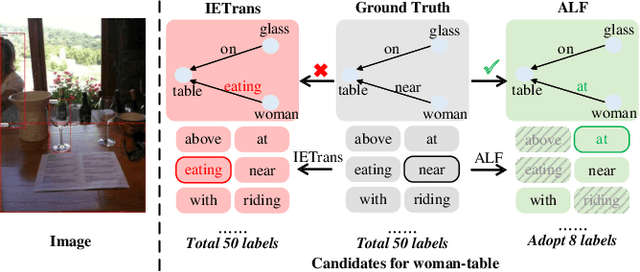

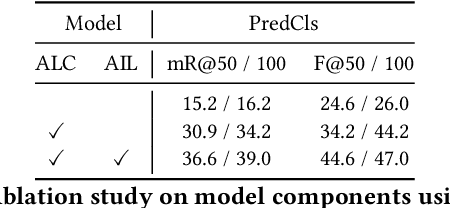

Abstract: Unbiased Scene Graph Generation (USGG) aims to address biased predictions in SGG. To that end, data transfer methods are designed to convert coarse-grained predicates into fine-grained ones, mitigating the imbalanced predicate distribution. However, they overlook the contextual relevance between transferred labels and subject-object pairs, such as the unsuitability of 'eating' for 'woman-table'. Furthermore, they typically involve a two-stage process with significant computational cost: a model is first pre-trained for data transfer, and a second model is then trained from scratch on the transferred labels. We therefore introduce a plug-and-play method named CITrans, which iteratively trains SGG models with progressively enhanced data. First, we introduce Context-Restricted Transfer (CRT), which imposes subject-object constraints within the predicates' semantic space to achieve fine-grained data transfer. Subsequently, Efficient Iterative Learning (EIL) iteratively trains models and progressively generates enhanced labels that are consistent with the model's learning state, thereby accelerating the training process. Finally, extensive experiments show that CITrans achieves state-of-the-art results with high efficiency.
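
To make the idea of a context restriction concrete, the following is a minimal Python sketch, not the paper's implementation. It assumes that the "context" of a subject-object pair can be approximated by the set of predicates observed with that pair in the training data, and that a model supplies a confidence score per predicate. The function names `build_context` and `restricted_transfer`, the `threshold` parameter, and the toy labels are all hypothetical.

```python
from collections import defaultdict


def build_context(triplets):
    """Map each (subject, object) pair to the predicates observed with it.

    Assumption: co-occurrence in the training set stands in for the
    subject-object constraint described in the abstract.
    """
    context = defaultdict(set)
    for subj, pred, obj in triplets:
        context[(subj, obj)].add(pred)
    return context


def restricted_transfer(triplet, scores, context, fine_grained, threshold=0.2):
    """Relabel a triplet with a fine-grained predicate if its context allows one.

    scores: hypothetical dict of predicate -> model confidence for this triplet.
    Returns the triplet unchanged when no allowed candidate passes the threshold.
    """
    subj, pred, obj = triplet
    # Only fine-grained predicates already seen with this pair are eligible.
    allowed = context.get((subj, obj), set()) & fine_grained
    candidates = {p: scores[p] for p in allowed if scores.get(p, 0.0) >= threshold}
    if not candidates:
        return triplet
    best = max(candidates, key=candidates.get)
    return (subj, best, obj)


train = [
    ("woman", "eating", "pizza"),
    ("woman", "sitting at", "table"),
    ("woman", "near", "table"),
]
context = build_context(train)
fine_grained = {"eating", "sitting at"}

# The model scores 'eating' highest, but 'eating' was never seen with woman-table.
scores = {"eating": 0.6, "sitting at": 0.3, "near": 0.1}
result = restricted_transfer(("woman", "near", "table"), scores, context, fine_grained)
```

In this toy case an unrestricted transfer would pick 'eating', the highest-scoring fine-grained predicate, while the restricted version relabels the triplet as 'sitting at'. Under the same assumptions, an iterative scheme in the spirit of EIL would re-run such a transfer with the current model's scores between training rounds rather than relying on a separately pre-trained model.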
