Prasen Kumar Sharma

A Generalized Zero-Shot Quantization of Deep Convolutional Neural Networks via Learned Weights Statistics

Dec 11, 2021

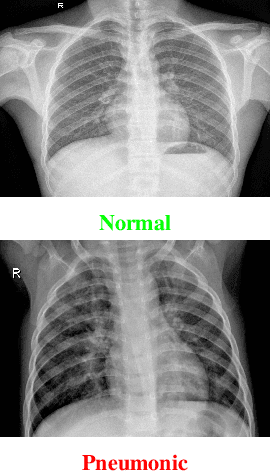

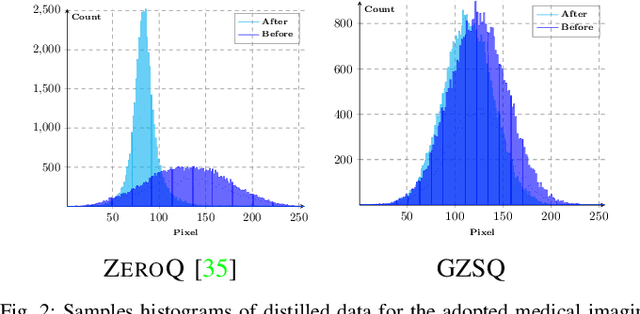

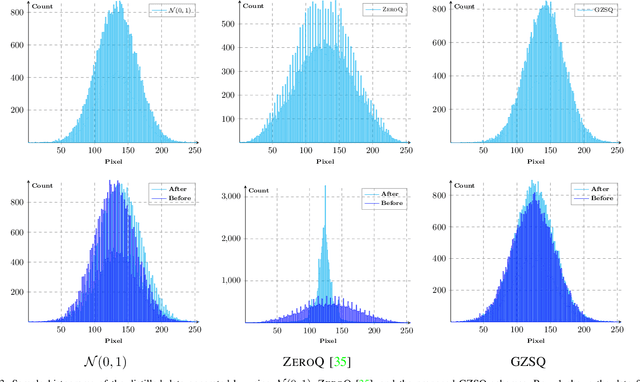

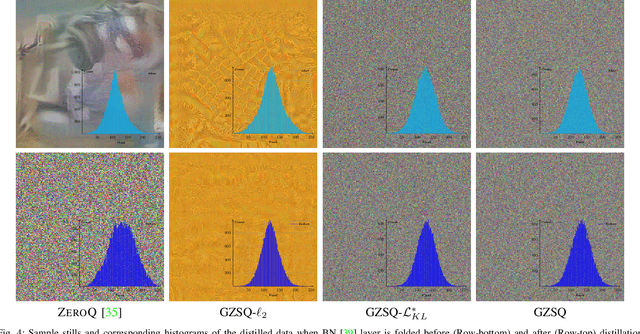

Abstract:Quantizing the floating-point weights and activations of deep convolutional neural networks to fixed-point representation yields reduced memory footprints and inference time. Recently, efforts have been afoot towards zero-shot quantization that does not require original unlabelled training samples of a given task. These best-published works heavily rely on the learned batch normalization (BN) parameters to infer the range of the activations for quantization. In particular, these methods are built upon either empirical estimation framework or the data distillation approach, for computing the range of the activations. However, the performance of such schemes severely degrades when presented with a network that does not accommodate BN layers. In this line of thought, we propose a generalized zero-shot quantization (GZSQ) framework that neither requires original data nor relies on BN layer statistics. We have utilized the data distillation approach and leveraged only the pre-trained weights of the model to estimate enriched data for range calibration of the activations. To the best of our knowledge, this is the first work that utilizes the distribution of the pretrained weights to assist the process of zero-shot quantization. The proposed scheme has significantly outperformed the existing zero-shot works, e.g., an improvement of ~ 33% in classification accuracy for MobileNetV2 and several other models that are w & w/o BN layers, for a variety of tasks. We have also demonstrated the efficacy of the proposed work across multiple open-source quantization frameworks. Importantly, our work is the first attempt towards the post-training zero-shot quantization of futuristic unnormalized deep neural networks.

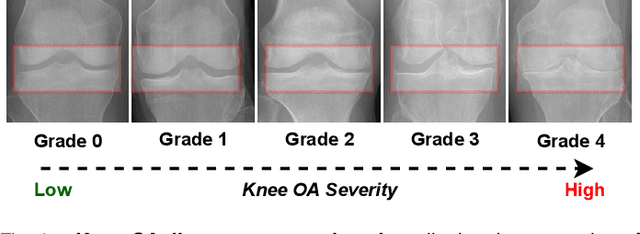

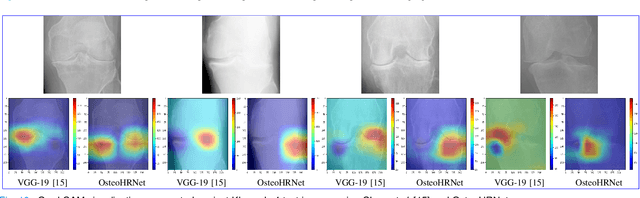

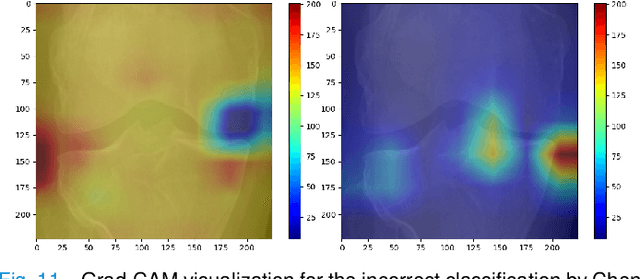

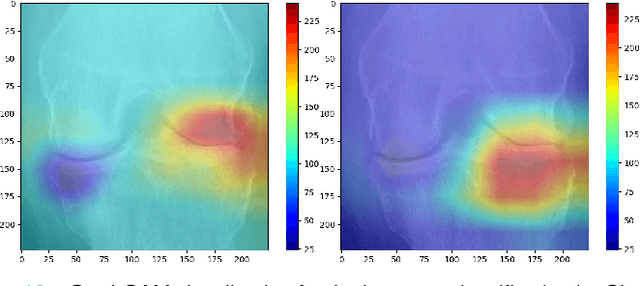

Knee Osteoarthritis Severity Prediction using an Attentive Multi-Scale Deep Convolutional Neural Network

Jun 27, 2021

Abstract:Knee Osteoarthritis (OA) is a destructive joint disease identified by joint stiffness, pain, and functional disability concerning millions of lives across the globe. It is generally assessed by evaluating physical symptoms, medical history, and other joint screening tests like radiographs, Magnetic Resonance Imaging (MRI), and Computed Tomography (CT) scans. Unfortunately, the conventional methods are very subjective, which forms a barrier in detecting the disease progression at an early stage. This paper presents a deep learning-based framework, namely OsteoHRNet, that automatically assesses the Knee OA severity in terms of Kellgren and Lawrence (KL) grade classification from X-rays. As a primary novelty, the proposed approach is built upon one of the most recent deep models, called the High-Resolution Network (HRNet), to capture the multi-scale features of knee X-rays. In addition, we have also incorporated an attention mechanism to filter out the counterproductive features and boost the performance further. Our proposed model has achieved the best multiclass accuracy of 71.74% and MAE of 0.311 on the baseline cohort of the OAI dataset, which is a remarkable gain over the existing best-published works. We have also employed the Gradient-based Class Activation Maps (Grad-CAMs) visualization to justify the proposed network learning.

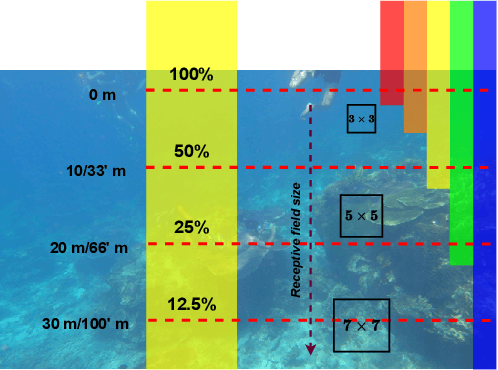

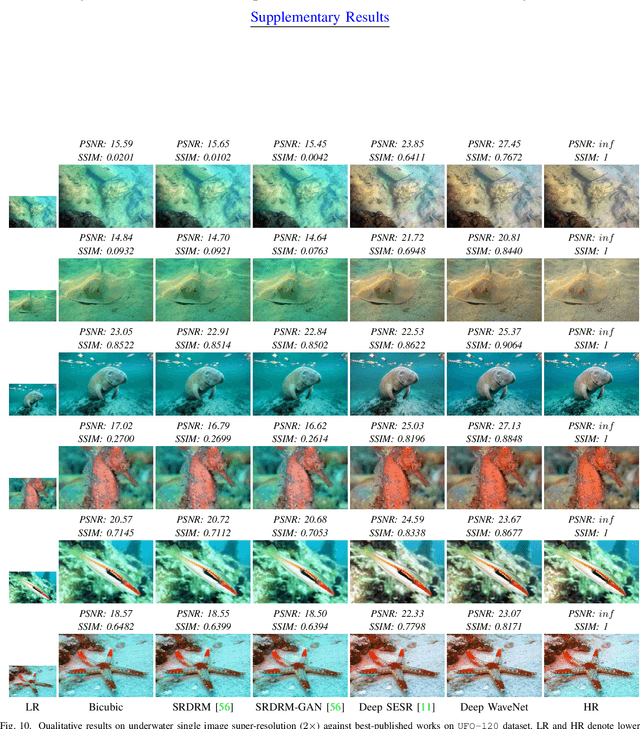

Wavelength-based Attributed Deep Neural Network for Underwater Image Restoration

Jun 15, 2021

Abstract:Underwater images, in general, suffer from low contrast and high color distortions due to the non-uniform attenuation of the light as it propagates through the water. In addition, the degree of attenuation varies with the wavelength resulting in the asymmetric traversing of colors. Despite the prolific works for underwater image restoration (UIR) using deep learning, the above asymmetricity has not been addressed in the respective network engineering. As the first novelty, this paper shows that attributing the right receptive field size (context) based on the traversing range of the color channel may lead to a substantial performance gain for the task of UIR. Further, it is important to suppress the irrelevant multi-contextual features and increase the representational power of the model. Therefore, as a second novelty, we have incorporated an attentive skip mechanism to adaptively refine the learned multi-contextual features. The proposed framework, called Deep WaveNet, is optimized using the traditional pixel-wise and feature-based cost functions. An extensive set of experiments have been carried out to show the efficacy of the proposed scheme over existing best-published literature on benchmark datasets. More importantly, we have demonstrated a comprehensive validation of enhanced images across various high-level vision tasks, e.g., underwater image semantic segmentation, and diver's 2D pose estimation. A sample video to exhibit our real-world performance is available at \url{https://www.youtube.com/watch?v=8qtuegBdfac}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge