Pingwen Zhang

Nonlinear Assimilation with Score-based Sequential Langevin Sampling

Nov 20, 2024

Abstract:This paper presents a novel approach for nonlinear assimilation called score-based sequential Langevin sampling (SSLS) within a recursive Bayesian framework. SSLS decomposes the assimilation process into a sequence of prediction and update steps, utilizing dynamic models for prediction and observation data for updating via score-based Langevin Monte Carlo. An annealing strategy is incorporated to enhance convergence and facilitate multi-modal sampling. The convergence of SSLS in TV-distance is analyzed under certain conditions, providing insights into error behavior related to hyper-parameters. Numerical examples demonstrate its outstanding performance in high-dimensional and nonlinear scenarios, as well as in situations with sparse or partial measurements. Furthermore, SSLS effectively quantifies the uncertainty associated with the estimated states, highlighting its potential for error calibration.

State-observation augmented diffusion model for nonlinear assimilation

Jul 31, 2024

Abstract:Data assimilation has become a crucial technique aiming to combine physical models with observational data to estimate state variables. Traditional assimilation algorithms often face challenges of high nonlinearity brought by both the physical and observational models. In this work, we propose a novel data-driven assimilation algorithm based on generative models to address such concerns. Our State-Observation Augmented Diffusion (SOAD) model is designed to handle nonlinear physical and observational models more effectively. The marginal posterior associated with SOAD has been derived and then proved to match the real posterior under mild assumptions, which shows theoretical superiority over previous score-based assimilation works. Experimental results also indicate that our SOAD model may offer improved accuracy over existing data-driven methods.

DRM Revisited: A Complete Error Analysis

Jul 12, 2024

Abstract:In this work, we address a foundational question in the theoretical analysis of the Deep Ritz Method (DRM) under the over-parameteriztion regime: Given a target precision level, how can one determine the appropriate number of training samples, the key architectural parameters of the neural networks, the step size for the projected gradient descent optimization procedure, and the requisite number of iterations, such that the output of the gradient descent process closely approximates the true solution of the underlying partial differential equation to the specified precision?

Characteristic Learning for Provable One Step Generation

May 09, 2024

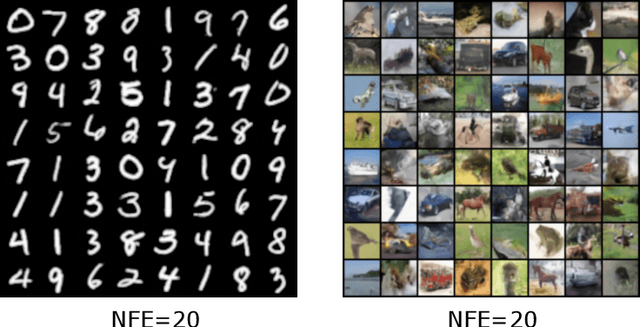

Abstract:We propose the characteristic generator, a novel one-step generative model that combines the efficiency of sampling in Generative Adversarial Networks (GANs) with the stable performance of flow-based models. Our model is driven by characteristics, along which the probability density transport can be described by ordinary differential equations (ODEs). Specifically, We estimate the velocity field through nonparametric regression and utilize Euler method to solve the probability flow ODE, generating a series of discrete approximations to the characteristics. We then use a deep neural network to fit these characteristics, ensuring a one-step mapping that effectively pushes the prior distribution towards the target distribution. In the theoretical aspect, we analyze the errors in velocity matching, Euler discretization, and characteristic fitting to establish a non-asymptotic convergence rate for the characteristic generator in 2-Wasserstein distance. To the best of our knowledge, this is the first thorough analysis for simulation-free one step generative models. Additionally, our analysis refines the error analysis of flow-based generative models in prior works. We apply our method on both synthetic and real datasets, and the results demonstrate that the characteristic generator achieves high generation quality with just a single evaluation of neural network.

Latent assimilation with implicit neural representations for unknown dynamics

Sep 18, 2023Abstract:Data assimilation is crucial in a wide range of applications, but it often faces challenges such as high computational costs due to data dimensionality and incomplete understanding of underlying mechanisms. To address these challenges, this study presents a novel assimilation framework, termed Latent Assimilation with Implicit Neural Representations (LAINR). By introducing Spherical Implicit Neural Representations (SINR) along with a data-driven uncertainty estimator of the trained neural networks, LAINR enhances efficiency in assimilation process. Experimental results indicate that LAINR holds certain advantage over existing methods based on AutoEncoders, both in terms of accuracy and efficiency.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge