Philippe Chatigny

Learning the Efficient Frontier

Oct 13, 2023

Abstract: The efficient frontier (EF) is a fundamental resource allocation problem where one has to find an optimal portfolio maximizing a reward at a given level of risk. This optimal solution is traditionally found by solving a convex optimization problem. In this paper, we introduce NeuralEF: a fast neural approximation framework that robustly forecasts the result of the EF convex optimization problem with respect to heterogeneous linear constraints and a variable number of optimization inputs. By reformulating an optimization problem as a sequence-to-sequence problem, we show that NeuralEF is a viable solution to accelerate large-scale simulation while handling discontinuous behavior.

Neural forecasting at scale

Sep 22, 2021

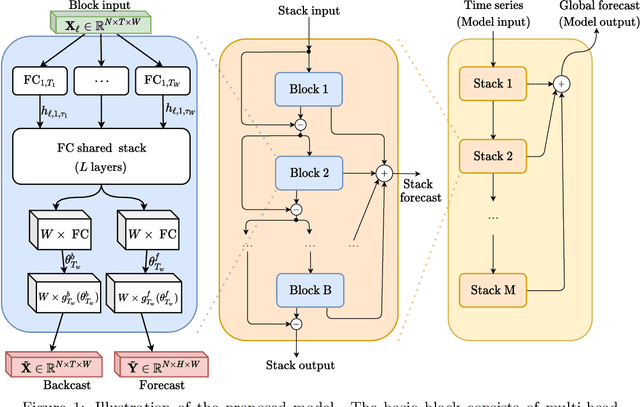

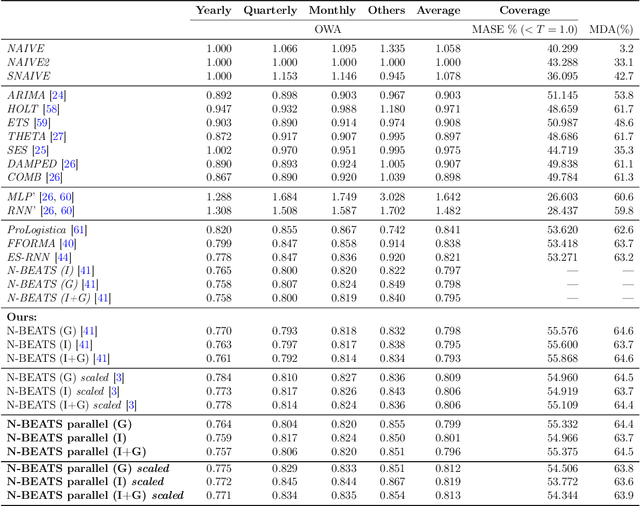

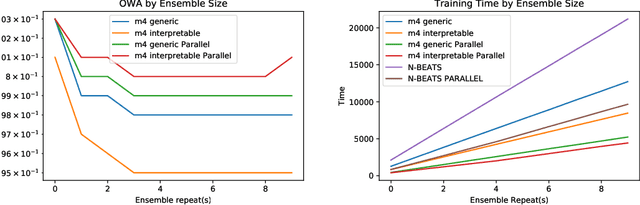

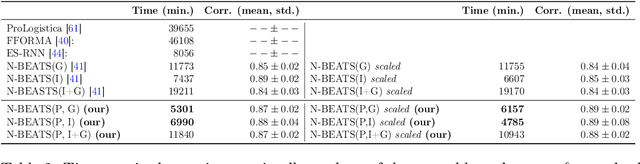

Abstract: We study the problem of efficiently scaling ensemble-based deep neural networks for time series (TS) forecasting on a large set of time series. Current state-of-the-art deep ensemble models have high memory and computational requirements, hampering their use to forecast millions of TS in practical scenarios. We propose N-BEATS(P), a global multivariate variant of the N-BEATS model designed to allow simultaneous training of multiple univariate TS forecasting models. Our model addresses the practical limitations of related models, reducing the training time by half and memory requirement by a factor of 5, while keeping the same level of accuracy. We have performed multiple experiments detailing the various ways to train our model and have obtained results that demonstrate its capacity to support zero-shot TS forecasting, i.e., to train a neural network on a source TS dataset and deploy it on a different target TS dataset without retraining, which provides an efficient and reliable solution to forecast at scale even in difficult forecasting conditions.

Financial Time Series Representation Learning

Mar 27, 2020

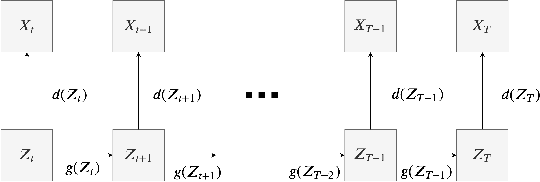

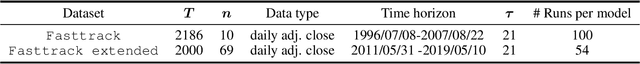

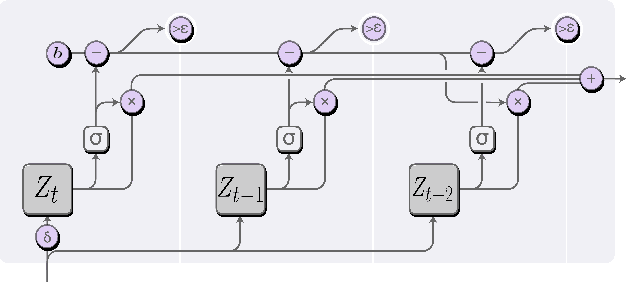

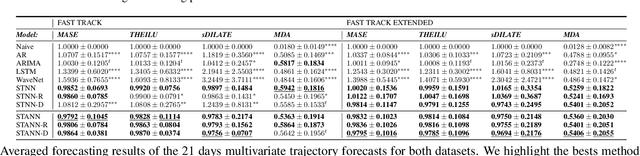

Abstract: This paper addresses the difficulty of forecasting multiple financial time series (TS) conjointly using deep neural networks (DNN). We investigate whether DNN-based models could forecast these TS more efficiently by learning their representation directly. To this end, we make use of the dynamic factor graph (DFG), which we enhance by proposing a novel variable-length attention-based mechanism to render it memory-augmented. Using this mechanism, we propose an unsupervised DNN architecture for multivariate TS forecasting that learns and takes advantage of the relationships between these TS. We test our model on two datasets covering 19 years of investment fund activities. Our experimental results show that our proposed approach significantly outperforms typical DNN-based and statistical models at forecasting their 21-day price trajectory.
